
| This disambiguation page lists articles associated with the title Smoothing. If an internal link led you here, you may wish to change the link to point directly to the intended article. |

Smoothing is the reduction or elimination of roughness or unevenness in a surface or other thing.

Smoothing may refer to:

In statistics and image processing, to smooth a data set is to create an approximating function that attempts to capture important patterns in the data while leaving out noise or other fine-scale structures or rapid phenomena. In smoothing, the data points of a signal are modified so that individual points higher than the adjacent points are reduced, and points lower than the adjacent points are increased, leading to a smoother signal. Smoothing can aid data analysis in two important ways: (1) by extracting more information from the data, as long as the assumption of smoothing is reasonable, and (2) by providing analyses that are both flexible and robust. Many different algorithms are used in smoothing.
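A centered moving average is one of the simplest smoothing algorithms. The sketch below (the function name `moving_average` and the odd-window, truncated-edge convention are illustrative choices, not taken from any particular library) replaces each point with the mean of its neighborhood, so a point above its neighbors is pulled down and a point below them is pulled up:

```python
def moving_average(signal, window=3):
    """Centered moving average; the window is truncated at the edges."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        lo = max(0, i - half)
        hi = min(len(signal), i + half + 1)
        chunk = signal[lo:hi]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


noisy = [1.0, 5.0, 1.0, 5.0, 1.0]
print(moving_average(noisy))  # peaks shrink toward the local mean, troughs rise
```

For `noisy` above, the 5.0 at index 1 becomes about 2.33 and the 1.0 at index 2 becomes about 3.67. Wider windows smooth more aggressively but also blur genuine fine-scale structure.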

In numerical mathematics, relaxation methods are iterative methods for solving systems of equations, including nonlinear systems.
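Jacobi iteration is a classic relaxation method for a linear system A x = b. A minimal sketch, assuming a square, strictly diagonally dominant matrix (a sufficient condition for convergence); the function name, tolerance, and example system are illustrative:

```python
def jacobi(A, b, x0=None, tol=1e-10, max_iter=500):
    """Jacobi relaxation for A x = b; every update uses only the previous iterate."""
    n = len(b)
    x = list(x0) if x0 is not None else [0.0] * n
    for _ in range(max_iter):
        x_new = []
        for i in range(n):
            # Solve equation i for x_i, holding the other unknowns at their old values
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x_new.append((b[i] - s) / A[i][i])
        if max(abs(x_new[i] - x[i]) for i in range(n)) < tol:
            return x_new
        x = x_new
    return x  # may not have converged within max_iter


A = [[4.0, 1.0], [2.0, 5.0]]  # strictly diagonally dominant
b = [1.0, 2.0]
print(jacobi(A, b))  # approaches the exact solution [1/6, 1/3]
```

Gauss-Seidel, which uses each updated value immediately within the same sweep, is a common variant; both handle only the linear case shown here.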

The smoothing problem refers to recursive Bayesian estimation, also known as a Bayes filter: the problem of estimating an unknown probability density function recursively over time using incremental incoming measurements. It is one of the main problems defined by Norbert Wiener.
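To make the filtering/smoothing distinction concrete, here is a sketch for a hypothetical two-state hidden Markov model. The forward pass is a recursive Bayes filter (predict, then update with each new measurement); the backward pass then folds in later measurements, which is what distinguishes smoothing from filtering. The model numbers and the function name are assumptions for illustration:

```python
def forward_backward(prior, trans, emit, observations):
    """Return (filtered, smoothed) state distributions for a discrete HMM.

    trans[i][j] = P(x_{t+1} = j | x_t = i), emit[i][y] = P(y | x = i).
    """
    n = len(prior)
    # Forward pass (recursive Bayes filter): filtered[t][i] = P(x_t = i | y_0..y_t)
    filtered = []
    belief = list(prior)
    for t, y in enumerate(observations):
        if t > 0:
            # Predict: push the belief through the transition model
            belief = [sum(belief[i] * trans[i][j] for i in range(n)) for j in range(n)]
        # Update: weight by the likelihood of the new measurement, then normalize
        belief = [belief[j] * emit[j][y] for j in range(n)]
        total = sum(belief)
        belief = [p / total for p in belief]
        filtered.append(belief)
    # Backward pass: beta[t][i] is proportional to P(later measurements | x_t = i)
    steps = len(observations)
    beta = [[1.0] * n for _ in range(steps)]
    for t in range(steps - 2, -1, -1):
        y_next = observations[t + 1]
        b = [sum(trans[i][j] * emit[j][y_next] * beta[t + 1][j] for j in range(n))
             for i in range(n)]
        total = sum(b)
        beta[t] = [v / total for v in b]  # rescale to avoid underflow
    # Smoothed posterior: P(x_t | all measurements) is proportional to filtered * beta
    smoothed = []
    for f, bt in zip(filtered, beta):
        s = [fi * bi for fi, bi in zip(f, bt)]
        total = sum(s)
        smoothed.append([v / total for v in s])
    return filtered, smoothed


# Hypothetical "sticky" two-state model with noisy binary observations
prior = [0.5, 0.5]
trans = [[0.9, 0.1], [0.1, 0.9]]
emit = [[0.8, 0.2], [0.2, 0.8]]
obs = [0, 0, 1, 0, 0]
filtered, smoothed = forward_backward(prior, trans, emit, obs)
print(filtered[2], smoothed[2])
```

At t = 2 the lone `1` observation pulls the filtered estimate toward state 1, while the smoothed estimate, which also sees the two later `0` observations, leans back toward state 0. At the final step the two coincide, because there are no later measurements.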


In probability theory and related fields, a stochastic or random process is a mathematical object usually defined as a collection of random variables. Historically, the random variables were associated with or indexed by a set of numbers, usually viewed as points in time, giving the interpretation of a stochastic process representing numerical values of some system randomly changing over time, such as the growth of a bacterial population, an electrical current fluctuating due to thermal noise, or the movement of a gas molecule. Stochastic processes are widely used as mathematical models of systems and phenomena that appear to vary in a random manner. They have applications in many disciplines including sciences such as biology, chemistry, ecology, neuroscience, and physics as well as technology and engineering fields such as image processing, signal processing, information theory, computer science, cryptography and telecommunications. Furthermore, seemingly random changes in financial markets have motivated the extensive use of stochastic processes in finance.
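The simple symmetric random walk is one of the most elementary stochastic processes: a collection of random variables X_0, X_1, ... indexed by discrete time, where each step adds +1 or -1 with equal probability. A minimal sketch using Python's standard `random` module (the function name and seed are illustrative):

```python
import random


def random_walk(n_steps, seed=None):
    """Return one sample path X_0, ..., X_n of a simple symmetric random walk, X_0 = 0."""
    rng = random.Random(seed)  # seeded generator makes a path reproducible
    path = [0]
    for _ in range(n_steps):
        path.append(path[-1] + rng.choice((-1, 1)))
    return path


print(random_walk(10, seed=42))
```

Each call with a different seed yields a different realization (sample path) of the same process.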

In mathematics, computer science and operations research, mathematical optimization or mathematical programming is the selection of a best element from some set of available alternatives.


Stochastic calculus is a branch of mathematics that operates on stochastic processes. It allows a consistent theory of integration to be defined for integrals of stochastic processes with respect to stochastic processes. It is used to model systems that behave randomly.
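A standard worked example in stochastic calculus is the Itô integral of Brownian motion W against itself over [0, T], which equals (W_T^2 - T)/2 rather than the W_T^2/2 that ordinary calculus would suggest. The sketch below approximates it with left-endpoint sums over one simulated path; the function name, step count, and seed are arbitrary choices:

```python
import math
import random


def ito_integral_w_dw(T=1.0, n=100_000, seed=0):
    """Approximate the Ito integral of W dW on [0, T] along one simulated path."""
    rng = random.Random(seed)
    dt = T / n
    w = 0.0      # Brownian motion starts at W_0 = 0
    total = 0.0
    for _ in range(n):
        dw = rng.gauss(0.0, math.sqrt(dt))  # independent increment ~ N(0, dt)
        total += w * dw  # Ito convention: integrand taken at the LEFT endpoint
        w += dw
    return total, w


approx, w_T = ito_integral_w_dw()
print(approx, (w_T ** 2 - 1.0) / 2)  # the two values should be close
```

Averaging the integrand over the two endpoints of each step instead gives the Stratonovich integral, which equals W_T^2/2; the difference is the T/2 term contributed by the quadratic variation of Brownian motion.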

The word stochastic is an adjective in English that describes something that was randomly determined. The word first appeared in English to describe a mathematical object called a stochastic process, but now in mathematics the terms stochastic process and random process are considered interchangeable. The word, with its current definition meaning random, came from German, but it originally came from Greek στόχος (stókhos), meaning 'aim, guess'.

In computer science, local search is a heuristic method for solving computationally hard optimization problems. Local search can be used on problems that can be formulated as finding a solution maximizing a criterion among a number of candidate solutions. Local search algorithms move from solution to solution in the space of candidate solutions by applying local changes, until a solution deemed optimal is found or a time bound is elapsed.

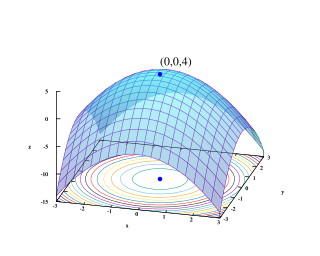

In numerical analysis, hill climbing is a mathematical optimization technique which belongs to the family of local search. It is an iterative algorithm that starts with an arbitrary solution to a problem, then attempts to find a better solution by making an incremental change to the solution. If the change produces a better solution, another incremental change is made to the new solution, and so on until no further improvements can be found.
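A minimal sketch of steepest-ascent hill climbing, one common variant (simple hill climbing instead accepts the first improving neighbor). The objective and neighborhood below are hypothetical toy choices:

```python
def hill_climb(f, start, neighbors, max_steps=10_000):
    """Steepest-ascent hill climbing: move to the best neighbor while it improves f."""
    current = start
    for _ in range(max_steps):
        candidates = neighbors(current)
        if not candidates:
            return current
        best = max(candidates, key=f)
        if f(best) <= f(current):
            return current  # no neighbor is better: a local optimum
        current = best
    return current


def objective(x):
    return -(x - 7) ** 2  # hypothetical objective with a single peak at x = 7


def step_neighbors(x):
    return [x - 1, x + 1]  # "incremental change" of one unit


print(hill_climb(objective, 0, step_neighbors))  # → 7
```

On a function with several peaks, the result depends on the starting point, since the loop stops at the first local optimum it reaches; this is the usual motivation for random restarts or for metaheuristics.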

In computer science and mathematical optimization, a metaheuristic is a higher-level procedure or heuristic designed to find, generate, or select a heuristic that may provide a sufficiently good solution to an optimization problem, especially with incomplete or imperfect information or limited computation capacity. Metaheuristics sample a subset of a solution set that is too large to be completely enumerated. Metaheuristics may make relatively few assumptions about the optimization problem being solved, so they may be usable for a variety of problems.
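Simulated annealing is one widely used metaheuristic. The sketch below minimizes a toy integer objective; the cooling schedule, parameter values, and names are illustrative assumptions, not tuned settings:

```python
import math
import random


def simulated_annealing(f, start, neighbor, t0=10.0, cooling=0.995,
                        steps=5000, seed=0):
    """Minimize f, returning the best solution seen during the run."""
    rng = random.Random(seed)
    current = best = start
    temp = t0
    for _ in range(steps):
        candidate = neighbor(current, rng)
        delta = f(candidate) - f(current)
        # Always accept improvements; accept a worse move with prob. exp(-delta / temp)
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current = candidate
            if f(current) < f(best):
                best = current
        temp = max(temp * cooling, 1e-12)  # geometric cooling, kept strictly positive
    return best


def cost(x):
    return (x - 3) ** 2  # hypothetical toy objective


def random_step(x, rng):
    return x + rng.choice((-1, 1))  # hypothetical neighborhood: one unit left or right


print(simulated_annealing(cost, 50, random_step))
```

Accepting occasional uphill moves while the temperature is high is what lets the search leave local optima; as the temperature falls, the procedure behaves more and more like plain hill climbing.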

A Markov decision process (MDP) is a discrete time stochastic control process. It provides a mathematical framework for modeling decision making in situations where outcomes are partly random and partly under the control of a decision maker. MDPs are useful for studying optimization problems solved via dynamic programming and reinforcement learning. MDPs were known at least as early as the 1950s; a core body of research on Markov decision processes resulted from Howard's 1960 book, Dynamic Programming and Markov Processes. They are used in many disciplines, including robotics, automatic control, economics and manufacturing. The name of MDPs comes from the Russian mathematician Andrey Markov.
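Value iteration is one dynamic-programming method for solving an MDP. A minimal sketch on a tiny hypothetical MDP; its transitions happen to be deterministic, but the `(probability, next_state, reward)` outcome format allows random outcomes as well:

```python
def q_value(P, V, s, a, gamma):
    """Expected discounted return of taking action a in state s, then following V."""
    return sum(p * (r + gamma * V[s2]) for p, s2, r in P[s][a])


def value_iteration(P, gamma=0.9, tol=1e-10):
    """P[s][a] is a list of (probability, next_state, reward) outcomes."""
    V = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            best = max(q_value(P, V, s, a, gamma) for a in P[s])
            delta = max(delta, abs(best - V[s]))
            V[s] = best  # in-place (Gauss-Seidel style) update
        if delta < tol:
            break
    # Greedy policy with respect to the converged value function
    policy = {s: max(P[s], key=lambda a, s=s: q_value(P, V, s, a, gamma)) for s in P}
    return V, policy


# Hypothetical two-state MDP: staying in state 1 earns reward 1 per step.
P = {
    0: {"stay": [(1.0, 0, 0.0)], "move": [(1.0, 1, 0.0)]},
    1: {"stay": [(1.0, 1, 1.0)], "move": [(1.0, 0, 0.0)]},
}
V, policy = value_iteration(P)
print(V, policy)  # V is about {0: 9.0, 1: 10.0}; policy {0: 'move', 1: 'stay'}
```

With discount 0.9, staying in state 1 forever is worth 1 / (1 - 0.9) = 10, and state 0 is worth 0.9 * 10 = 9 because it takes one unrewarded step to get there.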

Stochastic gradient descent, also known as incremental gradient descent, is an iterative method for optimizing a differentiable objective function, a stochastic approximation of gradient descent optimization. A 2018 article implicitly credits Herbert Robbins and Sutton Monro for developing SGD in their 1951 article titled "A Stochastic Approximation Method"; see Stochastic approximation for more information. It is called stochastic because samples are selected randomly instead of as a single group or in the order they appear in the training set.
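A minimal sketch of SGD fitting a line y = w * x + b by least squares, updating the parameters after each single sample, visited in a freshly shuffled order every epoch. The noise-free toy data, learning rate, and epoch count are illustrative assumptions:

```python
import random


def sgd_linear_regression(xs, ys, lr=0.05, epochs=500, seed=0):
    """Fit y = w * x + b with per-sample stochastic gradient steps."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # random visiting order, not the order in the data set
        for i in indices:
            err = (w * xs[i] + b) - ys[i]
            # Gradient of 0.5 * err**2 for this single sample
            w -= lr * err * xs[i]
            b -= lr * err
    return w, b


xs = [i / 10 for i in range(11)]  # 0.0, 0.1, ..., 1.0 (toy data)
ys = [2.0 * x + 1.0 for x in xs]
print(sgd_linear_regression(xs, ys))  # close to (2.0, 1.0)
```

Because the toy data are noise-free, a constant learning rate suffices here; with noisy data, a decreasing step size is typically needed for the iterates to settle rather than fluctuate around the optimum.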

Stochastic optimization (SO) methods are optimization methods that generate and use random variables. For stochastic problems, the random variables appear in the formulation of the optimization problem itself, which involves random objective functions or random constraints. Stochastic optimization methods also include methods with random iterates. Some stochastic optimization methods use random iterates to solve stochastic problems, combining both meanings of stochastic optimization. Stochastic optimization methods generalize deterministic methods for deterministic problems.

Stochastic approximation algorithms are recursive update rules that can be used, among other things, to solve optimization problems and fixed point equations when the collected data is subject to noise. In engineering, optimization problems are often of this type when you do not have a mathematical model of the system but still would like to optimize its behavior by adjusting certain parameters.
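The classic Robbins-Monro scheme finds a root of a function that can only be observed with noise, using step sizes a_n whose sum diverges while the sum of their squares converges (here a_n = 1/n). A sketch with a hypothetical noisy system whose true response is theta - 3, so the root is theta = 3:

```python
import random


def robbins_monro(noisy_g, theta0, n_iter=10_000, seed=0):
    """Seek theta with E[noisy_g(theta)] = 0 from noisy evaluations only."""
    rng = random.Random(seed)
    theta = theta0
    for n in range(1, n_iter + 1):
        step = 1.0 / n  # sum of steps diverges, sum of squared steps converges
        theta -= step * noisy_g(theta, rng)
    return theta


def noisy_g(theta, rng):
    # Hypothetical system: true response theta - 3, observed with Gaussian noise
    return (theta - 3.0) + rng.gauss(0.0, 1.0)


print(robbins_monro(noisy_g, theta0=0.0))  # close to 3.0
```

No model of the system is used: the update only needs noisy measurements of its response at the current parameter value.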

In the theory of stochastic processes, the filtering problem is a mathematical model for a number of state estimation problems in signal processing and related fields. The general idea is to establish a "best estimate" for the true value of some system from an incomplete, potentially noisy set of observations on that system. The problem of optimal non-linear filtering was solved by Ruslan L. Stratonovich; see also the work of Harold J. Kushner and of Moshe Zakai, who introduced simplified dynamics for the unnormalized conditional law of the filter, known as the Zakai equation. The solution, however, is infinite-dimensional in the general case. Certain approximations and special cases are well understood: for example, linear filters are optimal for Gaussian random variables and are known as the Wiener filter and the Kalman-Bucy filter. More generally, because the solution is infinite-dimensional, it requires finite-dimensional approximations to be implemented on a computer with finite memory. A finite-dimensional approximate nonlinear filter may be based more on heuristics, such as the Extended Kalman Filter or the Assumed Density Filters, or be more methodologically oriented, such as the Projection Filters, some sub-families of which are shown to coincide with the Assumed Density Filters.
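For a linear model with Gaussian noise, the discrete-time Kalman filter (the discrete-time counterpart of the Kalman-Bucy filter) is optimal. A minimal scalar sketch, assuming a random-walk state with process-noise variance `q` and direct measurements with noise variance `r`; the function name and parameters are illustrative:

```python
def kalman_1d(measurements, q, r, x0=0.0, p0=1.0):
    """Scalar Kalman filter for x_k = x_{k-1} + w_k, z_k = x_k + v_k."""
    x, p = x0, p0  # state estimate and its variance
    estimates = []
    for z in measurements:
        # Predict: random-walk model, so only the uncertainty grows (by q)
        p = p + q
        # Update: blend prediction and measurement using the Kalman gain
        k = p / (p + r)
        x = x + k * (z - x)
        p = (1 - k) * p
        estimates.append(x)
    return estimates


print(kalman_1d([1.0, 2.0, 3.0], q=0.0, r=1.0, p0=1e9))
```

With `q = 0` and a very vague prior (large `p0`), the filter reduces to a running average of the measurements, so the last estimate above is close to 2.0; a positive `q` makes it discount older measurements.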

Stochastic control or stochastic optimal control is a subfield of control theory that deals with the existence of uncertainty either in observations or in the noise that drives the evolution of the system. The system designer assumes, in a Bayesian probability-driven fashion, that random noise with a known probability distribution affects the evolution and observation of the state variables. Stochastic control aims to design the time path of the controlled variables that performs the desired control task with minimum cost, somehow defined, despite the presence of this noise. The context may be either discrete time or continuous time.

In time series analysis — as conducted in statistics, signal processing, and many other fields — the innovation is the difference between the observed value of a variable at time t and the optimal forecast of that value based on information available prior to time t. If the forecasting method is working correctly, successive innovations are uncorrelated with each other, i.e., constitute a white noise time series. Thus it can be said that the innovation time series is obtained from the measurement time series by a process of 'whitening', or removing the predictable component. The use of the term innovation in the sense described here is due to Hendrik Bode and Claude Shannon (1950) in their discussion of the Wiener filter problem, although the notion was already implicit in the work of Kolmogorov.
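For an AR(1) process x_t = phi * x_{t-1} + e_t with white-noise shocks e_t, the optimal one-step forecast is phi * x_{t-1}, so the innovations recover the shocks exactly. A sketch assuming `phi` is known (in practice it would be estimated from data); the function names are illustrative:

```python
import random


def ar1_series(phi, n, seed=0):
    """Simulate x_t = phi * x_{t-1} + e_t with standard normal shocks e_t."""
    rng = random.Random(seed)
    x, xs, shocks = 0.0, [], []
    for _ in range(n):
        e = rng.gauss(0.0, 1.0)
        x = phi * x + e
        xs.append(x)
        shocks.append(e)
    return xs, shocks


def innovations(xs, phi):
    """Observed value minus the optimal one-step forecast phi * x_{t-1}, for t >= 1."""
    return [xs[t] - phi * xs[t - 1] for t in range(1, len(xs))]


def lag1_autocorr(v):
    """Sample lag-1 autocorrelation."""
    m = sum(v) / len(v)
    num = sum((v[t] - m) * (v[t - 1] - m) for t in range(1, len(v)))
    den = sum((a - m) ** 2 for a in v)
    return num / den


xs, shocks = ar1_series(0.8, 5000)
print(lag1_autocorr(xs), lag1_autocorr(innovations(xs, 0.8)))
```

The measured series is strongly autocorrelated (near 0.8), while the innovation series is close to uncorrelated: the predictable component has been removed, which is the "whitening" described above.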

Stochastic computing is a collection of techniques that represent continuous values by streams of random bits. Complex computations can then be computed by simple bit-wise operations on the streams. Stochastic computing is distinct from the study of randomized algorithms.
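In the common unipolar encoding, a value p in [0, 1] is represented by a bit stream in which each bit is 1 with probability p. A bitwise AND of two independent streams is 1 with probability a * b, so a single AND gate performs multiplication. A sketch (stream length, seed, and names are illustrative):

```python
import random


def to_stream(p, length, rng):
    """Unipolar encoding: each bit is 1 with probability p."""
    return [1 if rng.random() < p else 0 for _ in range(length)]


def from_stream(bits):
    """Decode by counting the fraction of ones."""
    return sum(bits) / len(bits)


def stochastic_multiply(a, b, length=100_000, seed=0):
    rng = random.Random(seed)
    sa = to_stream(a, length, rng)
    sb = to_stream(b, length, rng)  # must be independent of sa for AND to multiply
    return from_stream([x & y for x, y in zip(sa, sb)])


print(stochastic_multiply(0.5, 0.4))  # close to 0.2
```

Precision grows only slowly with stream length, and correlated input streams break the multiplication, which are the main trade-offs against the very cheap hardware.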

Augmented Lagrangian methods are a certain class of algorithms for solving constrained optimization problems. They have similarities to penalty methods in that they replace a constrained optimization problem by a series of unconstrained problems and add a penalty term to the objective; the difference is that the augmented Lagrangian method adds yet another term, designed to mimic a Lagrange multiplier. The augmented Lagrangian is not the same as the method of Lagrange multipliers.
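A minimal sketch on a hypothetical toy problem: minimize x^2 + y^2 subject to h(x, y) = x + y - 1 = 0, whose solution is x = y = 0.5 with multiplier -1 (under the convention L_A = f + lam * h + (mu / 2) * h^2 used here). The inner unconstrained step is solved in closed form, which works only because this toy problem is symmetric and strictly convex:

```python
def augmented_lagrangian_demo(mu=10.0, iters=20):
    """Minimize x^2 + y^2 subject to h(x, y) = x + y - 1 = 0."""
    lam = 0.0
    x = y = 0.0
    for _ in range(iters):
        # Inner step: minimize L_A(x, y) = x^2 + y^2 + lam*h + (mu/2)*h^2.
        # By symmetry x = y = t with 2t + lam + mu*(2t - 1) = 0,
        # i.e. t = (mu - lam) / (2 + 2*mu).
        t = (mu - lam) / (2.0 + 2.0 * mu)
        x = y = t
        h = x + y - 1.0
        # Multiplier update: the extra term that mimics a Lagrange multiplier
        lam += mu * h
    return x, y, lam


print(augmented_lagrangian_demo())  # close to (0.5, 0.5, -1.0)
```

With `lam` frozen at 0 (a pure quadratic penalty method), the inner solution would stay at t = 10 / 22, about 0.4545, for mu = 10; the multiplier update is what removes this bias without driving mu to infinity.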

Coordinate descent is an optimization algorithm that successively minimizes along coordinate directions to find the minimum of a function. At each iteration, the algorithm determines a coordinate or coordinate block via a coordinate selection rule, then exactly or inexactly minimizes over the corresponding coordinate hyperplane while fixing all other coordinates or coordinate blocks. A line search along the coordinate direction can be performed at the current iterate to determine the appropriate step size. Coordinate descent is applicable in both differentiable and derivative-free contexts.
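A minimal sketch of cyclic coordinate descent with exact minimization along each coordinate, applied to a hypothetical quadratic f(x) = 0.5 * x^T A x - b^T x with symmetric positive definite A. For this class of functions, exact cyclic coordinate descent coincides with Gauss-Seidel iteration on A x = b:

```python
def coordinate_descent_quadratic(A, b, x0, sweeps=100):
    """Minimize 0.5 * x^T A x - b^T x (A symmetric positive definite)."""
    x = list(x0)
    n = len(x)
    for _ in range(sweeps):
        for i in range(n):  # cyclic coordinate selection rule
            # Exact minimization along coordinate i with the others fixed:
            # df/dx_i = sum_j A[i][j] * x[j] - b[i] = 0
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (b[i] - s) / A[i][i]
    return x


A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
print(coordinate_descent_quadratic(A, b, [0.0, 0.0]))  # close to [1/11, 7/11]
```

For non-quadratic objectives, the closed-form coordinate update would be replaced by a one-dimensional line search along the chosen coordinate.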