Related Research Articles

Cyc is a long-term artificial intelligence project that aims to assemble a comprehensive ontology and knowledge base that spans the basic concepts and rules about how the world works. Hoping to capture common sense knowledge, Cyc focuses on implicit knowledge. The project began in July 1984 at MCC and was developed later by the Cycorp company.

The Defense Advanced Research Projects Agency (DARPA) is a research and development agency of the United States Department of Defense responsible for the development of emerging technologies for use by the military.

Knowledge representation and reasoning is the field of artificial intelligence (AI) dedicated to representing information about the world in a form that a computer system can use to solve complex tasks such as diagnosing a medical condition or having a dialog in a natural language. Knowledge representation incorporates findings from psychology about how humans solve problems and represent knowledge, in order to design formalisms that will make complex systems easier to design and build. Knowledge representation and reasoning also incorporates findings from logic to automate various kinds of reasoning.

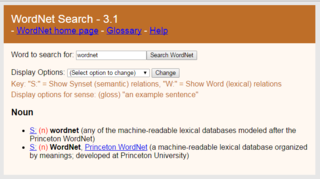

WordNet is a lexical database that links words through semantic relations including synonymy, hyponymy, and meronymy. The synonyms are grouped into synsets with short definitions and usage examples. It can thus be seen as a combination and extension of a dictionary and a thesaurus. While it is accessible to human users via a web browser, its primary use is in automatic text analysis and artificial intelligence applications. It was first created for English; the English WordNet database and software tools have been released under a BSD-style license and are freely available for download from the WordNet website. There are now WordNets in more than 200 languages.
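As a rough illustration of the structure described above, the following sketch models synsets with a hypernym link and a list of parts. It is a toy model, not WordNet's actual data format or API: the `Synset` class, its field names, the helper functions, and the glosses are all invented for this example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Synset:
    """Toy synset: a group of synonyms with a short definition (hypothetical model)."""
    words: List[str]                       # synonyms grouped together
    definition: str                        # short gloss (invented for this example)
    hypernym: Optional["Synset"] = None    # the more general synset, if any
    parts: List["Synset"] = field(default_factory=list)  # meronyms (parts of this thing)


vehicle = Synset(["vehicle"], "something that transports people or goods")
car = Synset(["car", "auto", "automobile"], "a wheeled motor vehicle", hypernym=vehicle)
wheel = Synset(["wheel"], "a circular part that rotates on an axle")
car.parts.append(wheel)  # "wheel" is a meronym of "car"


def synonyms(word, synsets):
    """All other words that share a synset with `word`, sorted alphabetically."""
    return sorted({w for s in synsets if word in s.words for w in s.words if w != word})


def hypernym_chain(synset):
    """First word of each more general synset, nearest first."""
    chain = []
    current = synset.hypernym
    while current is not None:
        chain.append(current.words[0])
        current = current.hypernym
    return chain
```

Here "car" is a hyponym of "vehicle" because its synset points to the vehicle synset as its hypernym, and `synonyms("car", [vehicle, car, wheel])` collects the other members of its synset.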

In information science, an ontology encompasses a representation, formal naming, and definitions of the categories, properties, and relations between the concepts, data, or entities that pertain to one, many, or all domains of discourse. More simply, an ontology is a way of showing the properties of a subject area and how they are related, by defining a set of terms and relational expressions that represent the entities in that subject area. The field which studies ontologies so conceived is sometimes referred to as applied ontology.

The DARPA Agent Markup Language (DAML) was a funding program at the US Defense Advanced Research Projects Agency (DARPA), started in 1999 by then-Program Manager James Hendler and later run by Murray Burke, Mark Greaves and Michael Pagels. The program focused on the creation of machine-readable representations for the Web.

Ramanathan V. Guha is the creator of widely used web standards such as RSS, RDF and Schema.org. He is also responsible for products such as Google Custom Search. He was a co-founder of Epinions and Alpiri. He worked at Google for nearly two decades, most recently as a Google Fellow, before announcing his departure from the company in August 2024.

Open Mind Common Sense (OMCS) is an artificial intelligence project based at the Massachusetts Institute of Technology (MIT) Media Lab whose goal is to build and utilize a large commonsense knowledge base from the contributions of many thousands of people across the Web. It was active from 1999 to 2016.

The Fifth Generation Computer Systems was a 10-year initiative launched in 1982 by Japan's Ministry of International Trade and Industry (MITI) to develop computers based on massively parallel computing and logic programming. The project aimed to create an "epoch-making computer" with supercomputer-like performance and to establish a platform for future advancements in artificial intelligence. Although FGCS was ahead of its time, its ambitious goals ultimately led to commercial failure. However, on a theoretical level, the project significantly contributed to the development of concurrent logic programming.

In artificial intelligence research, commonsense knowledge consists of facts about the everyday world, such as "Lemons are sour" or "Cows say moo", that all humans are expected to know. It is currently an unsolved problem in artificial general intelligence. The first AI program to address commonsense knowledge was Advice Taker, proposed in 1959 by John McCarthy.

James Alexander Hendler is an artificial intelligence researcher at Rensselaer Polytechnic Institute, United States, and one of the originators of the Semantic Web. He is a Fellow of the National Academy of Public Administration.

A semantic wiki is a wiki that has an underlying model of the knowledge described in its pages. Regular, or syntactic, wikis have structured text and untyped hyperlinks. Semantic wikis, on the other hand, provide the ability to capture or identify information about the data within pages, and the relationships between pages, in ways that can be queried or exported like a database through semantic queries.
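To make the contrast with untyped hyperlinks concrete, here is a minimal sketch in which each link carries a property name and the resulting facts can be filtered like database rows. The triple layout, the `query` helper, and all page names and values are invented for illustration; real semantic wiki engines use their own annotation syntax and query languages.

```python
# Each fact is a (page, property, value) triple; the property is the link's "type".
# Page names and values are made up for this example.
FACTS = [
    ("Avalon", "capital_of", "Lyonesse"),
    ("Avalon", "population", 120_000),
    ("Camelot", "capital_of", "Logres"),
    ("Camelot", "population", 45_000),
]


def query(facts, prop, predicate=lambda value: True):
    """Return (page, value) pairs for one property, optionally filtered by value."""
    return [(page, value) for page, p, value in facts if p == prop and predicate(value)]


capitals = query(FACTS, "capital_of")
large_cities = query(FACTS, "population", lambda n: n > 100_000)
```

A syntactic wiki would only record that the page "Avalon" links to the page "Lyonesse"; the typed version also records how they are related, which is what makes the second query possible.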

In information science, an upper ontology is an ontology that consists of very general terms that are common across all domains. An important function of an upper ontology is to support broad semantic interoperability among a large number of domain-specific ontologies by providing a common starting point for the formulation of definitions. Terms in the domain ontology are ranked under the terms in the upper ontology; that is, the upper ontology classes are superclasses or supersets of all the classes in the domain ontologies.
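The superclass relationship can be sketched with a small child-to-parent table. Everything in this example is hypothetical: the class names, the two domain ontologies, and the helper functions are illustrative and are not drawn from any published upper ontology.

```python
# Hypothetical hierarchy stored as child -> parent.
SUBCLASS_OF = {
    # upper ontology
    "PhysicalObject": "Entity",
    "Event": "Entity",
    # medical domain ontology
    "Patient": "PhysicalObject",
    "Surgery": "Event",
    # automotive domain ontology
    "Car": "PhysicalObject",
    "CrashTest": "Event",
}


def ancestors(term):
    """Superclasses of `term`, nearest first."""
    chain = []
    while term in SUBCLASS_OF:
        term = SUBCLASS_OF[term]
        chain.append(term)
    return chain


def is_a(term, candidate):
    """True if `candidate` is `term` itself or one of its superclasses."""
    return term == candidate or candidate in ancestors(term)


def common_superclass(a, b):
    """Most specific class that both terms fall under, or None."""
    b_line = [b] + ancestors(b)
    for cls in [a] + ancestors(a):
        if cls in b_line:
            return cls
    return None
```

Because both domain ontologies hang their classes under the same upper-level terms, `common_superclass("Patient", "Car")` can relate a medical term to an automotive one even though neither domain ontology mentions the other.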

In the history of artificial intelligence, an AI winter is a period of reduced funding and interest in artificial intelligence research. The field has experienced several hype cycles, followed by disappointment and criticism, followed by funding cuts, followed by renewed interest years or even decades later.

Frames are an artificial intelligence data structure used to divide knowledge into substructures by representing "stereotyped situations". They were proposed by Marvin Minsky in his 1974 article "A Framework for Representing Knowledge". Frames are the primary data structure used in artificial intelligence frame languages; they are stored as ontologies of sets.
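A frame can be pictured as a named bundle of slots. The sketch below also assumes that a frame inherits default slot values from a more general parent frame, a feature common in frame languages but not stated in the text above; the `Frame` class and the room example are invented for illustration.

```python
class Frame:
    """Toy frame: named slots plus an optional more general parent (hypothetical design)."""

    def __init__(self, name, parent=None, **slots):
        self.name = name
        self.parent = parent
        self.slots = dict(slots)

    def get(self, slot):
        """Look up a slot locally, then fall back to ancestors for a default value."""
        frame = self
        while frame is not None:
            if slot in frame.slots:
                return frame.slots[slot]
            frame = frame.parent
        raise KeyError(slot)


# A stereotyped "room" situation and two more specific frames.
room = Frame("Room", walls=4, has_ceiling=True)
kitchen = Frame("Kitchen", parent=room, contains="stove")
my_kitchen = Frame("MyKitchen", parent=kitchen, walls=5)  # overrides the default
```

Here `kitchen.get("walls")` falls back to the value on `room`, while `my_kitchen.get("walls")` returns its own overriding value.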

The Large-Scale Concept Ontology for Multimedia project was a series of workshops held from April 2004 to September 2006 for the purpose of defining a standard formal vocabulary for the annotation and retrieval of video.

In computer science, information science and systems engineering, ontology engineering is a field which studies the methods and methodologies for building ontologies, which encompass a representation, formal naming and definition of the categories, properties and relations between the concepts, data and entities of a given domain of interest. In a broader sense, this field also includes constructing knowledge of the domain using formal ontology representations such as OWL/RDF. A large-scale representation of abstract concepts such as actions, time, physical objects and beliefs would be an example of ontological engineering. Ontology engineering is one of the areas of applied ontology, and can be seen as an application of philosophical ontology. Core ideas and objectives of ontology engineering are also central in conceptual modeling.

YAGO is an open source knowledge base developed at the Max Planck Institute for Informatics in Saarbrücken. It is automatically extracted from Wikidata and Schema.org.

The Never-Ending Language Learning system (NELL) is a semantic machine learning system that, as of 2010, was being developed by a research team at Carnegie Mellon University and supported by grants from DARPA, Google, NSF, and CNPq, with portions of the system running on a supercomputing cluster provided by Yahoo!.
