Normalization or normalisation refers to a process that makes something more normal or regular.

In mathematics, Fourier analysis is the study of the way general functions may be represented or approximated by sums of simpler trigonometric functions. Fourier analysis grew from the study of Fourier series, and is named after Joseph Fourier, who showed that representing a function as a sum of trigonometric functions greatly simplifies the study of heat transfer.

Harmonic analysis is a branch of mathematics concerned with investigating the connections between a function and its representation in frequency. The frequency representation is found by using the Fourier transform for functions on unbounded domains such as the full real line or by Fourier series for functions on bounded domains, especially periodic functions on finite intervals. Generalizing these transforms to other domains is generally called Fourier analysis, although the term is sometimes used interchangeably with harmonic analysis. Harmonic analysis has become a vast subject with applications in areas as diverse as number theory, representation theory, signal processing, quantum mechanics, tidal analysis, spectral analysis, and neuroscience.

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of possible outcomes for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events.
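As a minimal sketch of how a discrete probability distribution can be obtained by normalization, the following rescales non-negative weights so that they sum to 1. The function name `normalize` and the fair-die example are illustrative assumptions, not part of the definition above.

```python
from fractions import Fraction


def normalize(weights):
    """Rescale non-negative integer weights so that they sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must have a positive sum")
    # Exact rational arithmetic avoids floating-point rounding in the sum.
    return {outcome: Fraction(w, total) for outcome, w in weights.items()}


# Hypothetical experiment: one roll of a fair six-sided die.
die = normalize({face: 1 for face in range(1, 7)})

# The probability of an event is the sum over the outcomes it contains.
p_even = sum(p for face, p in die.items() if face % 2 == 0)
```

Here the sample space is the set of faces, and an event such as "the roll is even" is a subset of it.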

In mathematics, the Fourier transform (FT) is an integral transform that takes a function as input and outputs another function that describes the extent to which various frequencies are present in the original function. The output of the transform is a complex-valued function of frequency. The term Fourier transform refers to both this complex-valued function and the mathematical operation. When a distinction needs to be made, the output of the operation is sometimes called the frequency domain representation of the original function. The Fourier transform is analogous to decomposing the sound of a musical chord into the intensities of its constituent pitches.
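The idea can be illustrated with the discrete Fourier transform, a sampled, finite analogue of the integral transform described above. This is a direct O(n²) sketch for illustration only; the function name `dft` and the test signal are assumptions.

```python
import cmath
import math


def dft(samples):
    """Discrete Fourier transform computed directly from its definition."""
    n = len(samples)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
            for t, x in enumerate(samples))
        for k in range(n)
    ]


# A cosine that completes 2 cycles over 8 samples.
n = 8
signal = [math.cos(2 * math.pi * 2 * t / n) for t in range(n)]

# Each output is complex; its modulus measures how strongly
# the corresponding frequency is present in the signal.
magnitudes = [abs(c) for c in dft(signal)]
```

For this real-valued input the magnitudes are concentrated at index 2 and at its mirror index 6, with the remaining entries close to zero.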

Normal form may refer to:

In mathematics, a von Neumann algebra or W*-algebra is a *-algebra of bounded operators on a Hilbert space that is closed in the weak operator topology and contains the identity operator. It is a special type of C*-algebra.

The adjective canonical is applied in many contexts to mean 'according to the canon' – the standard, rule or primary source that is accepted as authoritative for the body of knowledge or literature in that context. In mathematics, canonical example is often used to mean 'archetype'.

Probability is a measure of the likeliness that an event will occur. Probability is used to quantify an attitude of mind towards some proposition whose truth is not certain. The proposition of interest is usually of the form "A specific event will occur." The attitude of mind is of the form "How certain is it that the event will occur?" The certainty that is adopted can be described in terms of a numerical measure, and this number, between 0 and 1, is called the probability. Probability theory is used extensively in statistics, mathematics, science and philosophy to draw conclusions about the likelihood of potential events and the underlying mechanics of complex systems.

In quantum mechanics, a probability amplitude is a complex number used for describing the behaviour of systems. The square of the modulus of this quantity represents a probability density.
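For a system with finitely many basis states, the squared moduli are probabilities rather than densities, and normalization means scaling the amplitudes so that those probabilities sum to 1. The sketch below assumes such a finite system; `normalize_state` and the two-state example are hypothetical.

```python
import math


def normalize_state(amplitudes):
    """Scale complex amplitudes so their squared moduli sum to 1."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in amplitudes))
    if norm == 0:
        raise ValueError("the zero vector cannot be normalized")
    return [a / norm for a in amplitudes]


# Two unnormalized amplitudes of equal modulus but different phase.
state = normalize_state([1 + 1j, 1 - 1j])

# Squared modulus of each amplitude gives the outcome probability.
probabilities = [abs(a) ** 2 for a in state]
```

The two amplitudes differ in phase, yet both outcomes end up with probability one half, because only the modulus enters the probability.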

In mathematics and computer science, a canonical, normal, or standard form of a mathematical object is a standard way of presenting that object as a mathematical expression. Often, it is one which provides the simplest representation of an object and allows it to be identified in a unique way. The distinction between "canonical" and "normal" forms varies from subfield to subfield. In most fields, a canonical form specifies a unique representation for every object, while a normal form simply specifies its form, without the requirement of uniqueness.
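A familiar example of a canonical form is a fraction in lowest terms with a positive denominator: every rational number has exactly one such representation. The helper `canonical_fraction` below is a hypothetical sketch of that convention.

```python
import math


def canonical_fraction(num, den):
    """Return (num, den) in lowest terms with a positive denominator."""
    if den == 0:
        raise ZeroDivisionError("denominator must be non-zero")
    g = math.gcd(num, den)
    num, den = num // g, den // g
    if den < 0:
        # Carry the sign on the numerator so the representation is unique.
        num, den = -num, -den
    return num, den


# Different presentations of the same rational number
# collapse to one canonical pair.
a = canonical_fraction(6, -8)
b = canonical_fraction(-3, 4)
c = canonical_fraction(-30, 40)
```

Because the representation is unique, two fractions denote the same number exactly when their canonical pairs are equal.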

In mathematics, operator theory is the study of linear operators on function spaces, beginning with differential operators and integral operators. The operators may be presented abstractly by their characteristics, such as bounded linear operators or closed operators, and consideration may be given to nonlinear operators. The study, which depends heavily on the topology of function spaces, is a branch of functional analysis.

In mathematics, a Boolean function is a function whose arguments and result assume values from a two-element set. Alternative names are switching function, used especially in older computer science literature, and truth function, used in logic. Boolean functions are the subject of Boolean algebra and switching theory.
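Since the arguments range over a two-element set, a Boolean function of n arguments is fully described by a table with 2ⁿ rows. The following sketch enumerates such a table; `truth_table` and the `xor` example are illustrative names.

```python
from itertools import product


def truth_table(f, arity):
    """Map every tuple of Boolean inputs to the value of f on it."""
    return {bits: f(*bits) for bits in product((False, True), repeat=arity)}


def xor(a, b):
    """Exclusive or: true when exactly one argument is true."""
    return a != b


table = truth_table(xor, 2)
```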

In abstract rewriting, an object is in normal form if it cannot be rewritten any further, i.e. it is irreducible. Depending on the rewriting system, an object may rewrite to several normal forms or none at all. Many properties of rewriting systems relate to normal forms.
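A small string rewriting system makes this concrete. In the sketch below, the rule set, the function name `normal_form`, and the step limit are all assumptions chosen for illustration; the function follows a single reduction strategy, so it finds at most one normal form even when several exist.

```python
def normal_form(term, rules, max_steps=1000):
    """Apply the first matching rule repeatedly until none applies."""
    for _ in range(max_steps):
        for lhs, rhs in rules:
            if lhs in term:
                # Rewrite the leftmost occurrence of this rule's left side.
                term = term.replace(lhs, rhs, 1)
                break
        else:
            # No rule matched: the term is irreducible.
            return term
    raise RuntimeError("no normal form reached within max_steps")


# The single rule "ba" -> "ab" moves every 'a' in front of every 'b'.
sorting_rules = [("ba", "ab")]
result = normal_form("babab", sorting_rules)
```

With the rule `"a" -> "aa"` instead, rewriting never terminates, so the term has no normal form and the function raises once the step limit is reached.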

Unicode equivalence is the specification by the Unicode character encoding standard that some sequences of code points represent essentially the same character. This feature was introduced in the standard to allow compatibility with pre-existing standard character sets, which often included similar or identical characters.
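In practice, equivalent sequences are compared by first converting them to a common normalization form. The sketch below uses Python's standard `unicodedata.normalize`; the sample strings are illustrative.

```python
import unicodedata

# "é" as one precomposed code point, and as "e" plus a combining acute accent.
composed = "\u00e9"
decomposed = "e\u0301"

# The raw code point sequences differ even though they render alike.
raw_equal = composed == decomposed

# NFC composes and NFD decomposes; both map the two spellings to one form.
nfc_equal = unicodedata.normalize("NFC", decomposed) == composed
nfd_equal = unicodedata.normalize("NFD", composed) == decomposed

# Compatibility forms (NFKC, NFKD) additionally fold characters such as
# the "fi" ligature U+FB01 into their plain counterparts.
ligature_folded = unicodedata.normalize("NFKC", "\ufb01")
```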

In programming language semantics, normalisation by evaluation (NBE) is a method of obtaining the normal form of terms in the λ-calculus by appealing to their denotational semantics. A term is first interpreted into a denotational model of the λ-term structure, and then a canonical (β-normal and η-long) representative is extracted by reifying the denotation. Such an essentially semantic, reduction-free approach differs from the more traditional syntactic, reduction-based description of normalisation as reductions in a term rewrite system where β-reductions are allowed deep inside λ-terms.

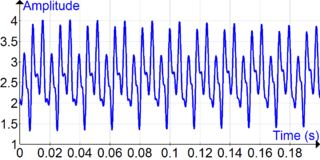

In statistical signal processing, the goal of spectral density estimation (SDE) or simply spectral estimation is to estimate the spectral density of a signal from a sequence of time samples of the signal. Intuitively speaking, the spectral density characterizes the frequency content of the signal. One purpose of estimating the spectral density is to detect any periodicities in the data, by observing peaks at the frequencies corresponding to these periodicities.
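One of the simplest estimators is the periodogram, the squared modulus of the discrete Fourier transform divided by the number of samples. The sketch below is a direct O(n²) computation under one common scaling convention; the function name and the two-tone test signal are assumptions.

```python
import cmath
import math


def periodogram(samples):
    """Estimate power at each non-negative frequency bin of a real signal."""
    n = len(samples)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                for t, x in enumerate(samples))) ** 2 / n
        for k in range(n // 2 + 1)
    ]


# Two sinusoids: a strong one at bin 5 and a weaker one at bin 12.
n = 64
signal = [
    math.sin(2 * math.pi * 5 * t / n) + 0.5 * math.sin(2 * math.pi * 12 * t / n)
    for t in range(n)
]

power = periodogram(signal)

# The largest peak identifies the dominant periodicity.
peak = max(range(len(power)), key=power.__getitem__)
```

The estimate shows peaks at bins 5 and 12, matching the two periodic components, with the stronger component producing the larger peak.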

This page lists articles related to probability theory. In particular, it lists many articles corresponding to specific probability distributions. Such articles are marked here by a code of the form (X:Y), which refers to the number of random variables involved and the type of the distribution. For example, (2:DC) indicates a distribution with two random variables, discrete or continuous. Other codes are just abbreviations for topics. The list of codes can be found in the table of contents.