Continuum mechanics is a branch of mechanics that deals with the deformation of and transmission of forces through materials modeled as a continuous medium rather than as discrete particles.

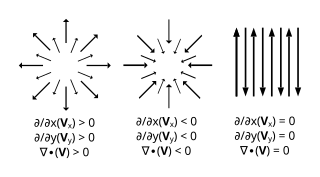

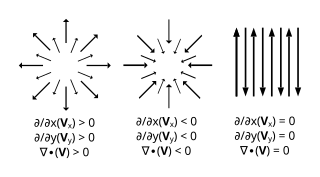

In vector calculus, divergence is a vector operator that operates on a vector field, producing a scalar field giving the quantity of the vector field's source at each point. More technically, the divergence represents the volume density of the outward flux of a vector field from an infinitesimal volume around a given point.
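As a rough numerical illustration of the definition above, the divergence of a planar field can be estimated with central differences. The function names `divergence_2d`, `radial` and `rotation` are hypothetical and chosen only for this sketch; the step size `h` is an assumption, not a recommended value.

```python
def divergence_2d(field, x, y, h=1e-5):
    """Central-difference estimate of dFx/dx + dFy/dy at the point (x, y)."""
    fx_plus, _ = field(x + h, y)
    fx_minus, _ = field(x - h, y)
    _, fy_plus = field(x, y + h)
    _, fy_minus = field(x, y - h)
    return (fx_plus - fx_minus) / (2 * h) + (fy_plus - fy_minus) / (2 * h)


def radial(x, y):
    """Field F(x, y) = (x, y): a uniform source, analytic divergence 2."""
    return (x, y)


def rotation(x, y):
    """Field F(x, y) = (-y, x): pure rotation, analytic divergence 0."""
    return (-y, x)


if __name__ == "__main__":
    print(divergence_2d(radial, 0.3, -1.2))
    print(divergence_2d(rotation, 0.3, -1.2))
```

The radial field behaves as a source everywhere, while the rotating field has no net outward flux, which is what the two estimates should reflect up to rounding error.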

In computability theory, a primitive recursive function is, roughly speaking, a function that can be computed by a computer program whose loops are all "for" loops. Primitive recursive functions form a strict subset of those general recursive functions that are also total functions.
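The "only for loops" characterization can be sketched as follows: every loop below has an iteration count fixed before the loop starts, so each function is guaranteed to terminate. The names `add`, `mul` and `factorial` are illustrative choices, and inputs are assumed to be non-negative integers.

```python
def add(m, n):
    """Addition built from n applications of the successor operation."""
    result = m
    for _ in range(n):  # bounded loop: exactly n iterations
        result += 1
    return result


def mul(m, n):
    """Multiplication built from n bounded additions."""
    result = 0
    for _ in range(n):
        result = add(result, m)
    return result


def factorial(n):
    """Factorial built from n bounded multiplications."""
    result = 1
    for i in range(n):
        result = mul(result, i + 1)
    return result


if __name__ == "__main__":
    print(add(3, 4), mul(3, 4), factorial(5))
```

No `while` loop or unbounded recursion appears, which is the informal property the paragraph above describes.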

A simple or regular continued fraction is a continued fraction with numerators all equal to one and denominators built from a sequence of integers. The sequence can be finite or infinite, giving a finite or an infinite continued fraction, respectively.
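For a rational number, the sequence of denominators is finite and can be obtained by repeatedly taking the integer part and inverting the remainder. The sketch below uses exact arithmetic from the standard `fractions` module; `continued_fraction` and `evaluate` are hypothetical helper names.

```python
from fractions import Fraction


def continued_fraction(q):
    """Return the terms [a0, a1, ..., an] of the simple continued fraction of a rational q."""
    q = Fraction(q)
    terms = []
    while True:
        a = q.numerator // q.denominator  # integer part (floor)
        terms.append(a)
        remainder = q - a
        if remainder == 0:
            return terms
        q = 1 / remainder  # invert the fractional part and continue


def evaluate(terms):
    """Rebuild the rational number from its continued fraction terms."""
    value = Fraction(terms[-1])
    for a in reversed(terms[:-1]):
        value = a + 1 / value
    return value


if __name__ == "__main__":
    terms = continued_fraction(Fraction(415, 93))
    print(terms, evaluate(terms))
```

Here 415/93 expands to the terms 4, 2, 6, 7, and evaluating those terms from the innermost level outward recovers the original fraction.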

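In mathematics, a partition of unity of a topological space X is a set of continuous functions from X to the unit interval [0,1] such that every point x of X has a neighbourhood on which all but finitely many of the functions vanish, and the values of all the functions at x sum to 1.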

In numerical analysis, polynomial interpolation is the interpolation of a given bivariate data set by the polynomial of lowest possible degree that passes through the points of the dataset.

In the mathematical field of numerical analysis, a Newton polynomial, named after its inventor Isaac Newton, is an interpolation polynomial for a given set of data points. The Newton polynomial is sometimes called Newton's divided differences interpolation polynomial because the coefficients of the polynomial are calculated using Newton's divided differences method.
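A minimal sketch of the divided-differences computation, assuming distinct nodes and using exact rational arithmetic. The helper names `divided_differences` and `newton_eval` are made up for this example.

```python
from fractions import Fraction


def divided_differences(xs, ys):
    """Return the Newton coefficients f[x0], f[x0,x1], ..., f[x0,...,xn]."""
    coeffs = [Fraction(y) for y in ys]
    n = len(xs)
    for order in range(1, n):
        # Work from the end so the lower-order values needed next are not overwritten.
        for i in range(n - 1, order - 1, -1):
            coeffs[i] = (coeffs[i] - coeffs[i - 1]) / (xs[i] - xs[i - order])
    return coeffs


def newton_eval(xs, coeffs, x):
    """Evaluate the Newton form with a nested (Horner-like) scheme."""
    result = coeffs[-1]
    for k in range(len(coeffs) - 2, -1, -1):
        result = result * (x - xs[k]) + coeffs[k]
    return result


if __name__ == "__main__":
    xs = [0, 1, 2, 3]
    ys = [1, 2, 5, 10]  # samples of x**2 + 1
    coeffs = divided_differences(xs, ys)
    print(coeffs, newton_eval(xs, coeffs, 4))
```

Because the sample data come from a quadratic, the third-order coefficient vanishes, and the interpolant reproduces the quadratic at points outside the data set as well.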

In statistics, the Pearson correlation coefficient (PCC) is a correlation coefficient that measures linear correlation between two sets of data. It is the ratio between the covariance of two variables and the product of their standard deviations; thus, it is essentially a normalized measurement of the covariance, such that the result always has a value between −1 and 1. As with covariance itself, the measure can only reflect a linear correlation of variables, and ignores many other types of relationships or correlations. As a simple example, one would expect the age and height of a sample of children from a primary school to have a Pearson correlation coefficient significantly greater than 0, but less than 1.
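The ratio described above can be computed directly from its definition. This is a sketch for two equal-length samples with non-zero variance; `pearson` is an illustrative name, not a library function.

```python
import math


def pearson(xs, ys):
    """Covariance of xs and ys divided by the product of their standard deviations."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # The common 1/n factors cancel between numerator and denominator.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


if __name__ == "__main__":
    print(pearson([1, 2, 3, 4], [2, 4, 6, 8]))        # exact linear relation
    print(pearson([-2, -1, 0, 1, 2], [4, 1, 0, 1, 4]))  # y = x**2 on symmetric data
```

The second call illustrates the limitation noted above: the data follow an exact quadratic relationship, yet the linear correlation is zero.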

In the calculus of variations, a field of mathematical analysis, the functional derivative relates a change in a functional to a change in a function on which the functional depends.

In mathematics, the spectral radius of a square matrix is the maximum of the absolute values of its eigenvalues. More generally, the spectral radius of a bounded linear operator is the supremum of the absolute values of the elements of its spectrum. The spectral radius is often denoted by ρ(·).
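For a 2×2 matrix the eigenvalues follow from the trace and determinant, so the spectral radius can be sketched in a few lines. The function name `spectral_radius_2x2` and the argument layout (entries a, b in the first row and c, d in the second) are assumptions made for this example.

```python
import cmath


def spectral_radius_2x2(a, b, c, d):
    """Spectral radius of [[a, b], [c, d]] via the roots of its characteristic polynomial."""
    trace = a + d
    det = a * d - b * c
    root = cmath.sqrt(trace * trace - 4 * det)  # complex square root handles complex eigenvalues
    eig1 = (trace + root) / 2
    eig2 = (trace - root) / 2
    return max(abs(eig1), abs(eig2))


if __name__ == "__main__":
    print(spectral_radius_2x2(2, 1, 0, 3))   # triangular matrix
    print(spectral_radius_2x2(0, -1, 1, 0))  # rotation by 90 degrees
    print(spectral_radius_2x2(0, 1, 0, 0))   # nilpotent matrix
```

The rotation matrix has purely imaginary eigenvalues of modulus 1, and the nilpotent matrix has spectral radius 0 even though it is not the zero matrix.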

In thermodynamics, the Onsager reciprocal relations express the equality of certain ratios between flows and forces in thermodynamic systems out of equilibrium, but where a notion of local equilibrium exists.

In statistics, propagation of uncertainty is the effect of variables' uncertainties on the uncertainty of a function based on them. When the variables are the values of experimental measurements, they have uncertainties due to measurement limitations, and these uncertainties propagate through the combination of variables in the function.
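One common way to quantify this effect is the first-order (linearized) formula for independent variables, in which the variance of the result is the sum of squared partial derivatives times the input variances. The sketch below assumes independence and small uncertainties, and estimates the partial derivatives numerically; `propagate` and `rel_step` are hypothetical names.

```python
import math


def propagate(f, values, sigmas, rel_step=1e-6):
    """First-order uncertainty of f(*values), assuming independent inputs."""
    variance = 0.0
    for i, (v, s) in enumerate(zip(values, sigmas)):
        h = rel_step * max(abs(v), 1.0)
        up = list(values)
        down = list(values)
        up[i] = v + h
        down[i] = v - h
        partial = (f(*up) - f(*down)) / (2 * h)  # central-difference partial derivative
        variance += (partial * s) ** 2
    return math.sqrt(variance)


if __name__ == "__main__":
    print(propagate(lambda x, y: x + y, [10.0, 20.0], [3.0, 4.0]))
    print(propagate(lambda x, y: x * y, [2.0, 3.0], [0.1, 0.2]))
```

For a sum, the uncertainties add in quadrature; for a product, each input uncertainty is weighted by the value of the other factor. Correlated inputs would need covariance terms that this sketch omits.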

In mathematics, the plastic ratio is a geometrical proportion close to 53/40. Its true value is the real solution of the equation x^3 = x + 1.
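The real solution of this cubic can be approximated with Newton's method, which makes the comparison with 53/40 concrete. The starting guess, tolerance and the name `plastic_ratio` are choices made for this sketch.

```python
def plastic_ratio(tol=1e-15, max_iter=50):
    """Approximate the real root of x**3 - x - 1 = 0 with Newton's method."""
    x = 1.5  # starting guess near the root
    for _ in range(max_iter):
        step = (x ** 3 - x - 1) / (3 * x ** 2 - 1)
        x -= step
        if abs(step) < tol:
            break
    return x


if __name__ == "__main__":
    rho = plastic_ratio()
    print(rho, 53 / 40, abs(rho - 53 / 40))
```

The computed root is about 1.3247, so the fraction 53/40 = 1.325 agrees with it to roughly three decimal places.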

In mathematics (specifically multivariable calculus), a multiple integral is a definite integral of a function of several real variables, for instance, f(x, y) or f(x, y, z).
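As a simple illustration, a double integral of f(x, y) over a rectangle can be approximated with the midpoint rule applied in both directions. The function name `double_integral` and the grid size `n` are assumptions for this sketch, which makes no claim to efficiency.

```python
def double_integral(f, x_range, y_range, n=200):
    """Midpoint-rule approximation of the integral of f over a rectangle."""
    (ax, bx), (ay, by) = x_range, y_range
    hx = (bx - ax) / n
    hy = (by - ay) / n
    total = 0.0
    for i in range(n):
        x = ax + (i + 0.5) * hx  # midpoint of the i-th cell in x
        for j in range(n):
            y = ay + (j + 0.5) * hy  # midpoint of the j-th cell in y
            total += f(x, y)
    return total * hx * hy


if __name__ == "__main__":
    print(double_integral(lambda x, y: x * y, (0, 1), (0, 2)))
    print(double_integral(lambda x, y: x * x + y * y, (0, 1), (0, 1)))
```

The exact values are 1 for the first integrand and 2/3 for the second, so the printed results give a feel for the accuracy of the rule on this grid.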

In mathematics, the reciprocal difference of a finite sequence of numbers (x_0, x_1, ..., x_n) on a function f(x) is defined inductively.

In complex analysis, a Padé table is an array, possibly of infinite extent, of the rational Padé approximants to a given complex formal power series.

In mathematics, a determinantal point process is a stochastic point process whose probability distribution is characterized as a determinant of some function. Such processes are suited to modelling global negative correlations and admit efficient algorithms for sampling, marginalization, conditioning, and other inference tasks. They arise as important tools in random matrix theory, combinatorics, physics, machine learning, and wireless network modeling.

In mathematics, infinite compositions of analytic functions (ICAF) offer alternative formulations of analytic continued fractions, series, products and other infinite expansions, and the theory evolving from such compositions may shed light on the convergence/divergence of these expansions. Some functions can actually be expanded directly as infinite compositions. In addition, it is possible to use ICAF to evaluate solutions of fixed point equations involving infinite expansions. Complex dynamics offers another venue for iteration of systems of functions rather than a single function. For infinite compositions of a single function see Iterated function. For compositions of a finite number of functions, useful in fractal theory, see Iterated function system.

In probability and statistics, a factorial moment measure is a mathematical quantity, function or, more precisely, measure that is defined in relation to mathematical objects known as point processes, which are types of stochastic processes often used as mathematical models of physical phenomena representable as randomly positioned points in time, space or both. Moment measures generalize the idea of factorial moments, which are useful for studying non-negative integer-valued random variables.

In mathematics and theoretical computer science, analysis of Boolean functions is the study of real-valued functions on {0,1}^n or {−1,1}^n from a spectral perspective. The functions studied are often, but not always, Boolean-valued, making them Boolean functions. The area has found many applications in combinatorics, social choice theory, random graphs, and theoretical computer science, especially in hardness of approximation, property testing, and PAC learning.
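The spectral perspective usually refers to expanding a function in the basis of parity functions (products of coordinates). A brute-force sketch, assuming inputs in {−1, 1}^n and using the hypothetical helper names `fourier_coefficients` and `majority3`:

```python
from fractions import Fraction
from itertools import combinations, product


def fourier_coefficients(f, n):
    """Map each subset S of range(n) to the average of f(x) times the product of x[i] for i in S."""
    points = list(product((-1, 1), repeat=n))
    coeffs = {}
    for size in range(n + 1):
        for subset in combinations(range(n), size):
            total = 0
            for x in points:
                parity = 1
                for i in subset:
                    parity *= x[i]
                total += f(x) * parity
            coeffs[subset] = Fraction(total, len(points))
    return coeffs


def majority3(x):
    """Majority vote of three +1/-1 inputs."""
    return 1 if sum(x) > 0 else -1


if __name__ == "__main__":
    for subset, value in fourier_coefficients(majority3, 3).items():
        print(subset, value)
```

For the three-input majority function, the only non-zero coefficients sit on the three single coordinates and on the full set, and their squares sum to 1, as expected for a function taking values ±1. The enumeration is exponential in n and is meant only for small examples.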