In mathematics, the associative property is a property of some binary operations, which means that rearranging the parentheses in an expression will not change the result. In propositional logic, associativity is a valid rule of replacement for expressions in logical proofs.

In mathematics, an associative algebraA is an algebraic structure with compatible operations of addition, multiplication, and a scalar multiplication by elements in some field K. The addition and multiplication operations together give A the structure of a ring; the addition and scalar multiplication operations together give A the structure of a vector space over K. In this article we will also use the term K-algebra to mean an associative algebra over the field K. A standard first example of a K-algebra is a ring of square matrices over a field K, with the usual matrix multiplication.

In mathematics, a binary relation associates elements of one set, called the domain, with elements of another set, called the codomain. A binary relation over sets X and Y is a new set of ordered pairs (x, y) consisting of elements x in X and y in Y. It is a generalization of the more widely understood idea of a unary function. It encodes the common concept of relation: an element x is related to an element y, if and only if the pair (x, y) belongs to the set of ordered pairs that defines the binary relation. A binary relation is the most studied special case n = 2 of an n-ary relation over sets X1, ..., Xn, which is a subset of the Cartesian product

In mathematics, convolution is a mathematical operation on two functions that produces a third function that expresses how the shape of one is modified by the other. The term convolution refers to both the result function and to the process of computing it. It is defined as the integral of the product of the two functions after one is reflected about the y-axis and shifted. The choice of which function is reflected and shifted before the integral does not change the integral result. The integral is evaluated for all values of shift, producing the convolution function.

In mathematics, the determinant is a scalar value that is a function of the entries of a square matrix. It characterizes some properties of the matrix and the linear map represented by the matrix. In particular, the determinant is nonzero if and only if the matrix is invertible and the linear map represented by the matrix is an isomorphism. The determinant of a product of matrices is the product of their determinants (the preceding property is a corollary of this one). The determinant of a matrix A is denoted det(A), det A, or |A|.

In abstract algebra, a branch of mathematics, a monoid is a set equipped with an associative binary operation and an identity element. For example, the nonnegative integers with addition form a monoid, the identity element being 0.

Multiplication is one of the four elementary mathematical operations of arithmetic, with the other ones being addition, subtraction, and division. The result of a multiplication operation is called a product.

In mathematics, a semigroup is an algebraic structure consisting of a set together with an associative internal binary operation on it.

In mathematical analysis, the Haar measure assigns an "invariant volume" to subsets of locally compact topological groups, consequently defining an integral for functions on those groups.

In mathematics, the concept of an inverse element generalises the concepts of opposite and reciprocal of numbers.

In mathematics, rings are algebraic structures that generalize fields: multiplication need not be commutative and multiplicative inverses need not exist. In other words, a ring is a set equipped with two binary operations satisfying properties analogous to those of addition and multiplication of integers. Ring elements may be numbers such as integers or complex numbers, but they may also be non-numerical objects such as polynomials, square matrices, functions, and power series.

In linear algebra, the identity matrix of size is the square matrix with ones on the main diagonal and zeros elsewhere. It has unique properties, for example when the identity matrix represents a geometric transformation, the object remains unchanged by the transformation. In other contexts, it is analogous to multiplying by the number 1.

In mathematics, particularly in linear algebra, matrix multiplication is a binary operation that produces a matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices A and B is denoted as AB.

In mathematics, an empty product, or nullary product or vacuous product, is the result of multiplying no factors. It is by convention equal to the multiplicative identity, just as the empty sum—the result of adding no numbers—is by convention zero, or the additive identity. When numbers are implied, the empty product becomes one.

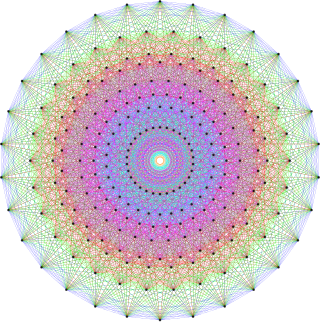

In mathematics, an algebra over a field is a vector space equipped with a bilinear product. Thus, an algebra is an algebraic structure consisting of a set together with operations of multiplication and addition and scalar multiplication by elements of a field and satisfying the axioms implied by "vector space" and "bilinear".

In linear algebra, an n-by-n square matrix A is called invertible, if there exists an n-by-n square matrix B such that

In abstract algebra, a semiring is an algebraic structure. It is a generalization of a ring, dropping the requirement that each element must have an additive inverse. At the same time, it is a generalization of bounded distributive lattices.

In linear algebra, the Strassen algorithm, named after Volker Strassen, is an algorithm for matrix multiplication. It is faster than the standard matrix multiplication algorithm for large matrices, with a better asymptotic complexity, although the naive algorithm is often better for smaller matrices. The Strassen algorithm is slower than the fastest known algorithms for extremely large matrices, but such galactic algorithms are not useful in practice, as they are much slower for matrices of practical size. For small matrices even faster algorithms exist.

In theoretical computer science, the computational complexity of matrix multiplication dictates how quickly the operation of matrix multiplication can be performed. Matrix multiplication algorithms are a central subroutine in theoretical and numerical algorithms for numerical linear algebra and optimization, so finding the right amount of time it should take is of major practical relevance.

In the theory of Lie groups, the exponential map is a map from the Lie algebra of a Lie group to the group, which allows one to recapture the local group structure from the Lie algebra. The existence of the exponential map is one of the primary reasons that Lie algebras are a useful tool for studying Lie groups.