Types of Biclusters

Bicluster with constant values (a)

When a Biclustering algorithm tries to find a constant-value Bicluster, it reorders the rows and columns of the matrix to group together similar rows and columns, eventually producing Biclusters with similar values. This method is sufficient when the data is normalized. A perfect constant Bicluster is a matrix (I, J) in which all values a(i,j) are equal to a given constant μ. In real data, these entries a(i,j) may be represented in the form n(i,j) + μ, where n(i,j) denotes the noise. Hartigan's algorithm splits the original data matrix into a set of Biclusters and uses variance to evaluate how close each one is to constant. Hence, a perfect Bicluster may be equivalently defined as a matrix with a variance of zero. To prevent the data matrix from being partitioned into Biclusters with only one row and one column, Hartigan assumes that there are, for example, K Biclusters within the data matrix. When the data matrix has been partitioned into K Biclusters, the algorithm ends.
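The variance criterion can be illustrated with a minimal sketch. The function name `bicluster_variance` and the toy matrix are hypothetical and only show how a zero variance identifies a perfect constant Bicluster; this is not Hartigan's partitioning procedure itself.

```python
from statistics import pvariance


def bicluster_variance(matrix, rows, cols):
    """Population variance of the entries a(i,j) with i in rows and j in cols."""
    values = [matrix[i][j] for i in rows for j in cols]
    return pvariance(values)


# Hypothetical toy data: a constant 2x2 block embedded in a 3x3 matrix.
data = [
    [2.0, 2.0, 7.0],
    [2.0, 2.0, 1.0],
    [5.0, 3.0, 9.0],
]

# The top-left block is a perfect constant Bicluster, so its variance is zero.
perfect = bicluster_variance(data, rows=[0, 1], cols=[0, 1])
# The full matrix mixes different values, so its variance is positive.
whole = bicluster_variance(data, rows=[0, 1, 2], cols=[0, 1, 2])
```

With noisy data of the form n(i,j) + μ, the variance of a good Bicluster would be small rather than exactly zero, so a threshold would be needed in practice.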

Bicluster with constant values on rows (b) or columns (c)

Unlike constant-value Biclusters, these types of Biclusters cannot be evaluated solely based on the variance of their values. To complete the identification, the rows and columns should be normalized first. There are, however, other algorithms that find Biclusters with constant rows or constant columns using different approaches, without the normalization step.
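The role of normalization can be sketched as follows. Here "normalization" is assumed to mean subtracting each row (or column) mean, which is one common choice and not necessarily the one a given algorithm uses; the helper names are hypothetical.

```python
from statistics import mean, pvariance


def center_rows(block):
    """Subtract each row's mean from the entries of that row."""
    centered = []
    for row in block:
        row_mean = mean(row)
        centered.append([value - row_mean for value in row])
    return centered


def center_columns(block):
    """Subtract each column's mean by centering the rows of the transpose."""
    transposed = [list(col) for col in zip(*block)]
    return [list(row) for row in zip(*center_rows(transposed))]


def variance(block):
    """Population variance over all entries of the block."""
    return pvariance([value for row in block for value in row])


# Constant values on rows: the raw variance is non-zero, but it vanishes
# once every row has been centered.
rows_constant = [[1.0] * 5, [2.0] * 5, [3.0] * 5]
# Constant values on columns: centering the columns has the same effect.
cols_constant = [[1.0, 2.0, 3.0, 4.0, 5.0] for _ in range(3)]

raw = variance(rows_constant)
after_rows = variance(center_rows(rows_constant))
after_cols = variance(center_columns(cols_constant))
```

After centering, the constant-row and constant-column cases reduce to the constant-value case, so the variance test becomes applicable again.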

Bicluster with coherent values (d, e)

For Biclusters with coherent values on rows and columns, an overall improvement over the algorithms for Biclusters with constant values on rows or on columns should be considered. Such an algorithm may involve analysis of variance between groups, using covariance between both rows and columns. In Cheng and Church's formulation, a Bicluster is defined as a subset of rows and columns with almost the same score. This similarity score measures the coherence of the rows and columns.
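The text above does not spell out the score. Cheng and Church's score is usually given as the mean squared residue, so the sketch below assumes that definition: each entry is compared with its row mean, its column mean, and the overall mean of the block. The function name and the perturbed example are hypothetical.

```python
def mean_squared_residue(block):
    """Mean of (a_ij - row_mean_i - col_mean_j + overall_mean)^2 over the block."""
    n_rows, n_cols = len(block), len(block[0])
    row_means = [sum(row) / n_cols for row in block]
    col_means = [sum(col) / n_rows for col in zip(*block)]
    overall = sum(row_means) / n_rows
    total = 0.0
    for i, row in enumerate(block):
        for j, value in enumerate(row):
            residue = value - row_means[i] - col_means[j] + overall
            total += residue ** 2
    return total / (n_rows * n_cols)


# The additive coherent Bicluster from example (d).
additive = [
    [1.0, 4.0, 5.0, 0.0, 1.5],
    [4.0, 7.0, 8.0, 3.0, 4.5],
    [3.0, 6.0, 7.0, 2.0, 3.5],
    [5.0, 8.0, 9.0, 4.0, 5.5],
    [2.0, 5.0, 6.0, 1.0, 2.5],
]

# Hypothetical perturbation: one entry is shifted, which breaks the coherence.
perturbed = [row[:] for row in additive]
perturbed[0][0] += 3.0

score_additive = mean_squared_residue(additive)    # zero up to rounding error
score_perturbed = mean_squared_residue(perturbed)  # clearly positive
```

A perfectly additive block scores zero (up to floating-point rounding), and the score grows as entries deviate from the additive pattern.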

a) Bicluster with constant values

| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |
| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |
| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |
| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |
| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |

b) Bicluster with constant values on rows

| 1.0 | 1.0 | 1.0 | 1.0 | 1.0 |
| 2.0 | 2.0 | 2.0 | 2.0 | 2.0 |
| 3.0 | 3.0 | 3.0 | 3.0 | 3.0 |
| 4.0 | 4.0 | 4.0 | 4.0 | 4.0 |
| 5.0 | 5.0 | 5.0 | 5.0 | 5.0 |

c) Bicluster with constant values on columns

| 1.0 | 2.0 | 3.0 | 4.0 | 5.0 |
| 1.0 | 2.0 | 3.0 | 4.0 | 5.0 |
| 1.0 | 2.0 | 3.0 | 4.0 | 5.0 |
| 1.0 | 2.0 | 3.0 | 4.0 | 5.0 |
| 1.0 | 2.0 | 3.0 | 4.0 | 5.0 |

d) Bicluster with coherent values (additive)

| 1.0 | 4.0 | 5.0 | 0.0 | 1.5 |
| 4.0 | 7.0 | 8.0 | 3.0 | 4.5 |
| 3.0 | 6.0 | 7.0 | 2.0 | 3.5 |
| 5.0 | 8.0 | 9.0 | 4.0 | 5.5 |
| 2.0 | 5.0 | 6.0 | 1.0 | 2.5 |

e) Bicluster with coherent values (multiplicative)

| 1.0 | 0.5 | 2.0 | 0.2 | 0.8 |
| 2.0 | 1.0 | 4.0 | 0.4 | 1.6 |
| 3.0 | 1.5 | 6.0 | 0.6 | 2.4 |
| 4.0 | 2.0 | 8.0 | 0.8 | 3.2 |
| 5.0 | 2.5 | 10.0 | 1.0 | 4.0 |
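The difference between the additive example (d) and the multiplicative example (e) can be checked directly. The sketch below assumes the additive model a(i,j) = r(i) + c(j) and the multiplicative model a(i,j) = r(i) · c(j); the helper names are hypothetical, and the multiplicative test takes logarithms, so it only applies to strictly positive entries.

```python
from math import log


def is_additive(block, tol=1e-9):
    """True if a_ij = a_i0 + a_0j - a_00 holds for every entry."""
    base = block[0][0]
    return all(
        abs(block[i][j] - block[i][0] - block[0][j] + base) <= tol
        for i in range(len(block))
        for j in range(len(block[0]))
    )


def is_multiplicative(block, tol=1e-9):
    """True if the element-wise logarithm of the block is additive."""
    logged = [[log(value) for value in row] for row in block]
    return is_additive(logged, tol)


table_d = [
    [1.0, 4.0, 5.0, 0.0, 1.5],
    [4.0, 7.0, 8.0, 3.0, 4.5],
    [3.0, 6.0, 7.0, 2.0, 3.5],
    [5.0, 8.0, 9.0, 4.0, 5.5],
    [2.0, 5.0, 6.0, 1.0, 2.5],
]
table_e = [
    [1.0, 0.5, 2.0, 0.2, 0.8],
    [2.0, 1.0, 4.0, 0.4, 1.6],
    [3.0, 1.5, 6.0, 0.6, 2.4],
    [4.0, 2.0, 8.0, 0.8, 3.2],
    [5.0, 2.5, 10.0, 1.0, 4.0],
]

d_is_additive = is_additive(table_d)
e_is_multiplicative = is_multiplicative(table_e)
e_is_additive = is_additive(table_e)
```

Example (e) fails the additive test but passes it after the logarithmic transformation, which is why multiplicative patterns are often handled by transforming the data first.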

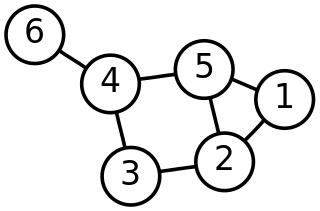

The relationship between these cluster models and other types of clustering, such as correlation clustering, is discussed in [15].

Algorithms

There are many Biclustering algorithms developed for bioinformatics, including: block clustering, CTWC (Coupled Two-Way Clustering), ITWC (Interrelated Two-Way Clustering), δ-bicluster, δ-pCluster, δ-pattern, FLOC, OPC, Plaid Model, OPSMs (Order-preserving submatrices), Gibbs, SAMBA (Statistical-Algorithmic Method for Bicluster Analysis), [16] Robust Biclustering Algorithm (RoBA), Crossing Minimization, [17] cMonkey, [18] PRMs, DCC, LEB (Localize and Extract Biclusters), QUBIC (QUalitative BIClustering), BCCA (Bi-Correlation Clustering Algorithm), BIMAX, ISA, FABIA (Factor Analysis for Bicluster Acquisition), [19] runibic, [20] and the recently proposed hybrid method EBIC (evolutionary-based Biclustering), [21] which was shown to detect multiple patterns with very high accuracy. More recently, IMMD-CC [22] was proposed, based on the concept of iterative complexity reduction. IMMD-CC is able to identify co-cluster centroids from a highly sparse transformation obtained by iterative multi-mode discretization.

Biclustering algorithms have also been proposed and used in other application fields under the names co-clustering, bi-dimensional clustering, and subspace clustering. [14]

Given the known importance of discovering local patterns in time-series data, recent proposals have addressed the Biclustering problem in the specific case of time-series gene expression data. In this case, the interesting Biclusters can be restricted to those with contiguous columns. This restriction leads to a tractable problem and enables the development of efficient exhaustive enumeration algorithms such as CCC-Biclustering [23] and e-CCC-Biclustering. [24] The approximate patterns in CCC-Biclustering algorithms allow a given number of errors, per gene, relative to an expression profile representing the expression pattern in the Bicluster. The e-CCC-Biclustering algorithm uses approximate expressions to find and report all maximal CCC-Biclusters using a discretized matrix A and efficient string processing techniques.
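The per-gene error tolerance can be illustrated with a small sketch. It only shows the matching criterion (at most a given number of mismatches against a profile over contiguous columns), not the e-CCC-Biclustering algorithm itself. The alphabet U/D/N (up, down, no change) and all names are assumptions made for illustration.

```python
def mismatches(pattern, profile):
    """Number of positions at which two equal-length symbol strings differ."""
    return sum(a != b for a, b in zip(pattern, profile))


def rows_matching_profile(discretized, start, end, profile, max_errors):
    """Rows whose symbols in columns start..end-1 differ from the profile
    in at most max_errors positions."""
    return [
        i
        for i, row in enumerate(discretized)
        if mismatches(row[start:end], profile) <= max_errors
    ]


# Hypothetical discretized expression matrix: one string per gene,
# one symbol per time point.
genes = ["UDUN", "UDDN", "NDUN", "DUND"]

exact = rows_matching_profile(genes, 0, 3, "UDU", max_errors=0)
approximate = rows_matching_profile(genes, 0, 3, "UDU", max_errors=1)
```

With no errors allowed only the first gene matches the profile; allowing one error per gene also admits the second and third genes.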

These algorithms find and report all maximal Biclusters with coherent and contiguous columns, with perfect or approximate expression patterns, in time that is linear or polynomial, respectively, in the size of the time-series gene expression matrix. This is achieved by manipulating a discretized version of the original expression matrix using efficient string processing techniques based on suffix trees. The algorithms are also accompanied by a sketch of the analysis of their computational complexity.
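The contiguous-column restriction can be made concrete with a deliberately naive enumeration. This is a brute-force illustration and not the suffix-tree method described above: it reports every group of rows that share an identical pattern over a contiguous column range, without filtering for maximality. The function name, parameters, and toy alphabet are hypothetical.

```python
from collections import defaultdict


def contiguous_column_biclusters(discretized, min_rows=2, min_cols=2):
    """Enumerate (rows, (start, end), pattern) for every contiguous column
    range in which at least min_rows rows share the same symbol pattern."""
    n_cols = len(discretized[0])
    found = []
    for start in range(n_cols):
        for end in range(start + min_cols, n_cols + 1):
            groups = defaultdict(list)
            for i, row in enumerate(discretized):
                groups[row[start:end]].append(i)
            for pattern, rows in groups.items():
                if len(rows) >= min_rows:
                    found.append((rows, (start, end), pattern))
    return found


# Hypothetical discretized matrix: one string per gene, one symbol per time point.
genes = ["UDUN", "UDUD", "NDUN"]
biclusters = contiguous_column_biclusters(genes)
```

The brute-force version examines every column range for every row, so its cost grows quadratically with the number of time points; the suffix-tree approach is what makes the linear bound mentioned above possible.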

Some recent algorithms, including cMonkey, have attempted to add support for Biclustering rectangular matrices in the form of other data types.

There is an ongoing debate about how to judge the results of these methods, as Biclustering allows overlap between clusters and some algorithms allow the exclusion of hard-to-reconcile columns/conditions. Not all of the available algorithms are deterministic, and the analyst must pay attention to the degree to which results represent stable minima. Because this is an unsupervised classification problem, the lack of a gold standard makes it difficult to spot errors in the results. One approach is to utilize multiple Biclustering algorithms, with majority or super-majority voting amongst them to decide the best result. Another way is to analyze the quality of shifting and scaling patterns in Biclusters. [25]

Biclustering has been used in the domain of text mining (or classification), where it is popularly known as co-clustering. [26] Text corpora are represented in vectorial form as a matrix D whose rows denote the documents and whose columns denote the words in the dictionary. Matrix elements Dij denote the occurrence of word j in document i. Co-clustering algorithms are then applied to discover blocks in D that correspond to a group of documents (rows) characterized by a group of words (columns).
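The document-by-word matrix D can be built as in the following minimal sketch. Whitespace tokenization, lowercasing, and raw occurrence counts are simplifying assumptions, and the corpus is a hypothetical toy example.

```python
def document_term_matrix(documents):
    """Return (vocabulary, D) where D[i][j] counts word j in document i."""
    vocabulary = sorted({word for doc in documents for word in doc.lower().split()})
    index = {word: j for j, word in enumerate(vocabulary)}
    matrix = [[0] * len(vocabulary) for _ in documents]
    for i, doc in enumerate(documents):
        for word in doc.lower().split():
            matrix[i][index[word]] += 1
    return vocabulary, matrix


corpus = ["gene expression data", "gene cluster", "stock market data"]
vocabulary, D = document_term_matrix(corpus)
# vocabulary is sorted alphabetically, so column 0 is "cluster", column 1 is "data", ...
```

A co-clustering algorithm would then look for blocks of D in which a subset of rows (documents) and a subset of columns (words) have high counts together.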

Text clustering must cope with high-dimensional sparse data, which can be addressed by clustering texts and words at the same time. When clustering texts, we need to consider not only the information carried by individual words but also the information carried by the word clusters that those words form. The feature words are then themselves clustered according to their similarity across the texts. This is called co-clustering. Co-clustering has two advantages. First, clustering the texts based on word clusters greatly reduces the dimension of the clustering problem and provides a suitable way to measure the distance between texts. Second, it mines more useful information: the correspondence between text clusters and word clusters can be used to describe the types of texts and words, and the result of word clustering can also be used for text mining and information retrieval.

Several approaches have been proposed: information-theoretic approaches based on the information content of the resulting blocks, matrix-based approaches such as SVD and BVD, and graph-based approaches. Information-theoretic algorithms iteratively assign each row to a cluster of documents and each column to a cluster of words such that the mutual information is maximized. Matrix-based methods focus on the decomposition of matrices into blocks such that the error between the original matrix and the matrices regenerated from the decomposition is minimized. Graph-based methods tend to minimize the cuts between the clusters. Given two groups of documents d1 and d2, the number of cuts can be measured as the number of words that occur in documents of both groups.
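The cut measure described for graph-based methods can be sketched as a count of shared words. The function name and the toy matrix are hypothetical, and a real graph-based method would typically use a weighted and normalized cut rather than this raw count.

```python
def shared_words(matrix, group_a, group_b):
    """Number of words (columns) that occur in at least one document of
    group_a and in at least one document of group_b."""
    n_words = len(matrix[0])

    def words_in(group):
        return {j for i in group for j in range(n_words) if matrix[i][j] > 0}

    return len(words_in(group_a) & words_in(group_b))


# Hypothetical document-by-word matrix: documents 0 and 1 share a vocabulary,
# as do documents 2 and 3, with no word in common between the two pairs.
D = [
    [1, 1, 0, 0, 0, 0],
    [1, 1, 1, 0, 0, 0],
    [0, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 1, 1],
]

good_split = shared_words(D, [0, 1], [2, 3])
poor_split = shared_words(D, [0, 2], [1, 3])
```

The split that follows the block structure has no shared words, while the split that mixes the blocks has three, so a cut-minimizing method would prefer the former.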

More recently, Bisson and Hussain [26] proposed a new approach that uses the similarity between words and the similarity between documents to co-cluster the matrix. Their method (known as χ-Sim, for cross similarity) is based on finding document-document similarity and word-word similarity, and then using classical clustering methods such as hierarchical clustering. Instead of explicitly clustering rows and columns alternately, they consider higher-order occurrences of words, inherently taking into account the documents in which they occur. Thus, the similarity between two words is calculated based on the documents in which they occur and also the documents in which "similar" words occur. The idea here is that two documents about the same topic do not necessarily use the same set of words to describe it, but a subset of the words and other similar words that are characteristic of that topic. This approach of taking higher-order similarities takes the latent semantic structure of the whole corpus into consideration, with the result of generating a better clustering of the documents and words.
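The idea of higher-order similarity can be illustrated with a simplified sketch. This is not the published χ-Sim update: normalization is omitted and the alternating scheme (document similarity computed from word similarity and vice versa, both starting from identity matrices) is an assumption made for illustration. All function names are hypothetical.

```python
def matmul(a, b):
    """Plain matrix product of two lists of lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def identity(n):
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def higher_order_similarity(d, iterations):
    """Alternately update document similarity (sr) and word similarity (sc)."""
    dt = transpose(d)
    sr, sc = identity(len(d)), identity(len(dt))
    for _ in range(iterations):
        # Documents are similar if they contain similar words, and
        # words are similar if they occur in similar documents.
        sr, sc = matmul(matmul(d, sc), dt), matmul(matmul(dt, sr), d)
    return sr, sc


# Hypothetical corpus: documents 0 and 2 share no word, but both share
# a word with document 1.
D = [
    [1, 1, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 1, 1],
]

first_order, _ = higher_order_similarity(D, iterations=1)
second_order, _ = higher_order_similarity(D, iterations=2)
```

After one iteration, documents 0 and 2 have zero similarity because they share no word. After two iterations their similarity becomes positive, because their words co-occur in document 1 and are therefore treated as similar.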

In text databases, for a document collection defined by a document-by-term matrix D (of size m by n, where m is the number of documents and n is the number of terms), the cover-coefficient-based clustering methodology [27] yields the same number of clusters for both documents and terms (words) using a double-stage probability experiment. According to the cover coefficient concept, the number of clusters can also be roughly estimated by the formula $(m \times n)/t$, where t is the number of non-zero entries in D. Note that in D each row and each column must contain at least one non-zero element.
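As a worked example, the estimate can be computed for a small matrix. The sketch assumes the estimate is (m × n) / t, as it is commonly stated for the cover coefficient concept; the function name and the toy matrix are hypothetical.

```python
def estimated_cluster_count(matrix):
    """Rough estimate (m * n) / t of the number of clusters, where t is the
    number of non-zero entries of the m-by-n matrix."""
    m, n = len(matrix), len(matrix[0])
    # Every row and every column must contain at least one non-zero entry.
    if any(not any(row) for row in matrix) or any(not any(col) for col in zip(*matrix)):
        raise ValueError("each row and column needs at least one non-zero entry")
    t = sum(1 for row in matrix for value in row if value != 0)
    return (m * n) / t


# Hypothetical 4-by-6 document-by-term matrix with 9 non-zero entries.
D = [
    [1, 1, 0, 0, 0, 0],
    [1, 1, 1, 0, 0, 0],
    [0, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 1, 1],
]

estimate = estimated_cluster_count(D)  # (4 * 6) / 9
```

For this matrix the estimate is 24 / 9, or roughly 2.7, which is consistent with the two visible blocks. A denser matrix has a larger t and therefore a smaller estimated number of clusters.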

In contrast to other approaches, FABIA is a multiplicative model that assumes realistic non-Gaussian signal distributions with heavy tails. FABIA uses well-understood model selection techniques, such as variational approaches, and applies the Bayesian framework. The generative framework allows FABIA to determine the information content of each Bicluster in order to separate spurious Biclusters from true Biclusters.
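What a multiplicative generative model with heavy-tailed signals might produce can be sketched as follows. The text does not give the model equations, so the sketch assumes the form in which FABIA is commonly described: a Bicluster is the outer product of a sparse vector over rows and a sparse vector over columns, plus additive noise. Laplace-distributed non-zero entries stand in for the heavy-tailed prior. All names and parameters are hypothetical, and this simulates data only; it does not perform FABIA's inference.

```python
import random


def laplace(rng):
    """Laplace(0, 1) sample: the difference of two unit exponentials."""
    return rng.expovariate(1.0) - rng.expovariate(1.0)


def sparse_vector(length, support, rng):
    """Vector that is zero outside the given support, heavy-tailed inside it."""
    return [laplace(rng) if k in support else 0.0 for k in range(length)]


def simulate_bicluster(n_rows, n_cols, row_support, col_support, noise_sd=0.0, seed=0):
    """Outer product of a sparse row vector and a sparse column vector,
    plus optional Gaussian noise with standard deviation noise_sd."""
    rng = random.Random(seed)
    loadings = sparse_vector(n_rows, row_support, rng)  # assumed: one value per row
    factors = sparse_vector(n_cols, col_support, rng)   # assumed: one value per column
    return [
        [loadings[i] * factors[j] + rng.gauss(0.0, noise_sd) for j in range(n_cols)]
        for i in range(n_rows)
    ]


# Noise-free toy example: the Bicluster covers rows 1-2 and columns 1-3.
data = simulate_bicluster(4, 5, row_support={1, 2}, col_support={1, 2, 3})
```

In the noise-free case every entry outside the chosen rows and columns is exactly zero, and the entries inside follow the multiplicative pattern of example (e).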