Related Research Articles

A cognitive bias is a systematic pattern of deviation from norm or rationality in judgment. Individuals create their own "subjective reality" from their perception of the input. An individual's construction of reality, not the objective input, may dictate their behavior in the world. Thus, cognitive biases may sometimes lead to perceptual distortion, inaccurate judgment, illogical interpretation, and irrationality.

The availability heuristic, also known as availability bias, is a mental shortcut that relies on immediate examples that come to a given person's mind when evaluating a specific topic, concept, method, or decision. This heuristic, operating on the notion that, if something can be recalled, it must be important, or at least more important than alternative solutions not as readily recalled, is inherently biased toward recently acquired information.

Decision theory is a branch of applied probability theory and analytic philosophy concerned with the theory of making decisions based on assigning probabilities to various factors and assigning numerical consequences to the outcome.
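A minimal sketch of the idea, assuming the simplest decision rule: choose the action whose probability-weighted numerical consequences (its expected value) are largest. Decision theory also covers expected utility and other criteria. The names `expected_value` and `best_action`, and the actions, probabilities and payoffs, are hypothetical.

```python
from typing import Dict, List, Tuple

# Each outcome is a (probability, numerical consequence) pair.
Outcomes = List[Tuple[float, float]]


def expected_value(outcomes: Outcomes) -> float:
    """Probability-weighted sum of the numerical consequences."""
    total_p = sum(p for p, _ in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError(f"probabilities sum to {total_p}, not 1")
    return sum(p * value for p, value in outcomes)


def best_action(actions: Dict[str, Outcomes]) -> Tuple[str, float]:
    """Return the action with the highest expected value, and that value."""
    scores = {name: expected_value(o) for name, o in actions.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


# Hypothetical choice between launching a product now or waiting.
actions = {
    "launch": [(0.6, 100.0), (0.4, -50.0)],  # 0.6*100 + 0.4*(-50) = 40
    "wait": [(1.0, 10.0)],                   # 1.0*10 = 10
}
print(best_action(actions))  # ('launch', 40.0)
```

Replacing the payoffs with utilities turns the same computation into an expected-utility comparison.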

The representativeness heuristic is used when making judgments about the probability of an event under uncertainty. It is one of a group of heuristics proposed by psychologists Amos Tversky and Daniel Kahneman in the early 1970s, who defined representativeness as "the degree to which [an event] (i) is similar in essential characteristics to its parent population, and (ii) reflects the salient features of the process by which it is generated". Heuristics are described as "judgmental shortcuts that generally get us where we need to go – and quickly – but at the cost of occasionally sending us off course." Heuristics are useful because they reduce effort and simplify decision-making.

There are two main uses of the term calibration in statistics that denote special types of statistical inference problems. "Calibration" can mean

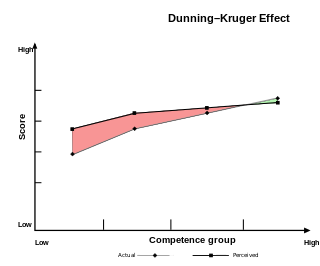

The Dunning–Kruger effect is a cognitive bias whereby people with low ability, expertise, or experience regarding a certain type of task or area of knowledge tend to overestimate their ability or knowledge. Some researchers also include the opposite effect for high performers: their tendency to underestimate their skills. In popular culture, the Dunning–Kruger effect is often misunderstood as a claim about general overconfidence of people with low intelligence instead of specific overconfidence of people unskilled at a particular task.

Risk perception is the subjective judgement that people make about the characteristics and severity of a risk. Risk perceptions often differ from statistical assessments of risk because they are affected by a wide range of affective, cognitive, contextual, and individual factors. Several theories have been proposed to explain why different people make different estimates of the dangerousness of risks. Three major families of theory have been developed: psychology approaches, anthropology/sociology approaches and interdisciplinary approaches.

The overconfidence effect is a well-established bias in which a person's subjective confidence in their judgments is reliably greater than the objective accuracy of those judgments, especially when confidence is relatively high. Overconfidence is one example of a miscalibration of subjective probabilities. Throughout the research literature, overconfidence has been defined in three distinct ways: (1) overestimation of one's actual performance; (2) overplacement of one's performance relative to others; and (3) overprecision in expressing unwarranted certainty in the accuracy of one's beliefs.
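Overconfidence as miscalibration can be checked by grouping judgments by stated confidence and comparing each group's mean confidence with its proportion correct. The sketch below assumes each judgment is recorded as a confidence in [0, 1] plus whether it was correct; the function name, the equal-width binning and the data are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def calibration_table(confidence: List[float], correct: List[bool],
                      n_bins: int = 5) -> Dict[int, Tuple[float, float, int]]:
    """Compare stated confidence with the observed proportion correct.

    Judgments are grouped into equal-width confidence bins on [0, 1]
    (confidence 1.0 goes into the top bin). Returns
    {bin_index: (mean_confidence, proportion_correct, count)}.
    A bin with mean_confidence > proportion_correct shows overconfidence.
    """
    if len(confidence) != len(correct):
        raise ValueError("confidence and correct must have equal length")
    bins = defaultdict(list)
    for c, ok in zip(confidence, correct):
        bins[min(int(c * n_bins), n_bins - 1)].append((c, ok))
    table = {}
    for idx in sorted(bins):
        items = bins[idx]
        mean_conf = sum(c for c, _ in items) / len(items)
        hit_rate = sum(ok for _, ok in items) / len(items)
        table[idx] = (mean_conf, hit_rate, len(items))
    return table


# Hypothetical judge: 10 answers at 90% confidence (7 right)
# and 10 answers at 65% confidence (6 right).
confidence = [0.9] * 10 + [0.65] * 10
correct = [True] * 7 + [False] * 3 + [True] * 6 + [False] * 4
for idx, (conf, hit, n) in calibration_table(confidence, correct).items():
    print(f"bin {idx}: mean confidence {conf:.2f}, proportion correct {hit:.2f}, n={n}")
```

A perfectly calibrated judge would show mean confidence close to proportion correct in every bin.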

Uncertainty quantification (UQ) is the science of quantitative characterization and estimation of uncertainties in both computational and real-world applications. It tries to determine how likely certain outcomes are if some aspects of the system are not exactly known. An example would be to predict the acceleration of a human body in a head-on crash with another car: even if the speed were exactly known, small differences in the manufacturing of individual cars, how tightly every bolt has been tightened, etc., will lead to different results that can only be predicted in a statistical sense.
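One standard way to make the crash example concrete is Monte Carlo propagation: sample the uncertain inputs, run the model for each sample, and summarize the spread of the outputs. The sketch assumes a toy physics model (constant deceleration over a crush distance d, so a = v²/(2d)) and hypothetical numbers; it is not a real crash model.

```python
import random
import statistics


def sample_deceleration(speed: float, crush_mean: float, crush_sd: float,
                        n: int, seed: int = 0) -> list:
    """Monte Carlo samples of crash deceleration (m/s^2).

    Toy model (assumption): the car stops with constant deceleration over a
    crush distance d, so a = v**2 / (2 * d). The speed v (m/s) is exactly
    known; d (m) varies from car to car and is drawn from a normal
    distribution, discarding non-positive draws.
    """
    rng = random.Random(seed)
    samples = []
    while len(samples) < n:
        d = rng.gauss(crush_mean, crush_sd)
        if d > 0:
            samples.append(speed ** 2 / (2 * d))
    return samples


acc = sample_deceleration(speed=15.0, crush_mean=0.6, crush_sd=0.05, n=10_000)
cuts = statistics.quantiles(acc, n=20)  # 5%, 10%, ..., 95% cut points
print(f"mean {statistics.mean(acc):.0f} m/s^2, "
      f"90% interval [{cuts[0]:.0f}, {cuts[-1]:.0f}] m/s^2")
```

Even with the speed fixed, the result is a distribution rather than a single number, which is the point the paragraph makes.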

A standard-setting study is an official research study conducted by an organization that sponsors tests to determine a cutscore for the test. To be legally defensible in the US, in particular for high-stakes assessments, and to meet the Standards for Educational and Psychological Testing, a cutscore cannot be arbitrarily determined; it must be empirically justified. For example, the organization cannot merely decide that the cutscore will be 70% correct. Instead, a study is conducted to determine what score best differentiates the classifications of examinees, such as competent vs. incompetent. Such studies require considerable resources and involve a number of professionals, in particular ones with a psychometric background. For that reason, standard-setting studies are impractical for regular classroom situations, yet standard setting is performed at every level of education, and multiple methods exist.
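Real standard-setting studies rely on expert panels and established psychometric procedures. The sketch below only illustrates the underlying idea of finding "what score best differentiates" two groups, loosely in the spirit of a contrasting-groups approach: given hypothetical scores of examinees already classified as competent or not, it picks the cutscore with the fewest misclassifications. The function name, the data and the equal weighting of both error types are assumptions.

```python
from typing import List, Tuple


def best_cutscore(scores: List[int], competent: List[bool]) -> Tuple[int, int]:
    """Return (cutscore, misclassifications) for the best-separating cut.

    An examinee passes when score >= cutscore. A misclassification is a
    competent examinee who fails or a non-competent examinee who passes;
    both error types are weighted equally (an assumption). Ties go to the
    lowest cutscore.
    """
    if not scores or len(scores) != len(competent):
        raise ValueError("need equal-length, non-empty inputs")
    candidates = sorted(set(scores)) + [max(scores) + 1]  # last: nobody passes
    best = None
    for cut in candidates:
        errors = sum((s >= cut) != ok for s, ok in zip(scores, competent))
        if best is None or errors < best[1]:
            best = (cut, errors)
    return best


# Hypothetical percent-correct scores with an external competence judgment.
scores = [52, 58, 61, 64, 66, 70, 73, 75, 81, 88]
competent = [False, False, False, True, False, True, True, True, True, True]
print(best_cutscore(scores, competent))  # (64, 1)
```

If failing a competent examinee and passing an incompetent one carry different costs, the error count would be replaced by a weighted sum.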

Absolute probability judgement is a technique used in the field of human reliability assessment (HRA) for evaluating the probability of a human error occurring during the completion of a specific task. From such analyses, measures can then be taken to reduce the likelihood of errors occurring within a system and thereby improve the overall level of safety. There are three primary reasons for conducting an HRA: error identification, error quantification and error reduction. The many techniques used for such purposes can be split into two classes: first-generation techniques and second-generation techniques. First-generation techniques work on the basis of a simple 'fits/doesn't fit' dichotomy, matching the error situation in context with related error identification and quantification, whereas second-generation techniques are more theory-based in their assessment and quantification of errors. HRA techniques have been used in a range of industries, including healthcare, engineering, nuclear, transportation and business; each technique has varying uses within different disciplines.

In simple terms, risk is the possibility of something bad happening. Risk involves uncertainty about the effects/implications of an activity with respect to something that humans value, often focusing on negative, undesirable consequences. Many different definitions have been proposed. The international standard definition of risk for common understanding in different applications is “effect of uncertainty on objectives”.

Heuristics is the process by which humans use mental shortcuts to arrive at decisions. Heuristics are simple strategies that humans, animals, organizations, and even machines use to quickly form judgments, make decisions, and find solutions to complex problems. Often this involves focusing on the most relevant aspects of a problem or situation to formulate a solution. While heuristic processes are used to find the answers and solutions that are most likely to work or be correct, they are not always right or the most accurate. Judgments and decisions based on heuristics are simply good enough to satisfy a pressing need in situations of uncertainty, where information is incomplete. In that sense they can differ from answers given by logic and probability.

Illusion of validity is a cognitive bias in which a person overestimates their ability to interpret and predict accurately the outcome when analyzing a set of data, in particular when the data analyzed show a very consistent pattern—that is, when the data "tell" a coherent story.

In cognitive psychology and decision science, conservatism or conservatism bias is a bias which refers to the tendency to revise one's belief insufficiently when presented with new evidence. This bias describes human belief revision in which people over-weigh the prior distribution and under-weigh new sample evidence when compared to Bayesian belief-revision.
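The contrast with Bayesian belief revision can be sketched in log-odds form, where Bayes' rule adds the log-likelihood ratio of the evidence to the prior log-odds. As a hypothetical model of conservatism (an assumption, not a standard definition), the evidence term is multiplied by a weight below 1, so the prior is over-weighted relative to the sample. The two-bag example and the weight 0.4 are illustrative.

```python
import math


def posterior(prior: float, likelihood_ratio: float, weight: float = 1.0) -> float:
    """P(H | evidence) from P(H) and LR = P(evidence | H) / P(evidence | not H).

    weight = 1.0 is Bayes' rule in log-odds form. weight < 1.0 is a simple
    hypothetical model of conservatism: the log-likelihood ratio of the new
    evidence is shrunk toward 0, so beliefs move less than Bayes prescribes.
    """
    log_odds = math.log(prior / (1 - prior)) + weight * math.log(likelihood_ratio)
    return 1 / (1 + math.exp(-log_odds))


# Two bags, equally likely a priori: bag A holds 70% red chips, bag B 30%.
# Twelve draws with replacement give 8 red and 4 blue chips.
red, blue = 8, 4
lr = (0.7 / 0.3) ** red * (0.3 / 0.7) ** blue  # equals (7/3) ** 4, about 29.6
print(f"Bayesian:     {posterior(0.5, lr):.3f}")              # 0.967
print(f"conservative: {posterior(0.5, lr, weight=0.4):.3f}")  # 0.795
```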

The curse of knowledge is a cognitive bias that occurs when an individual who is communicating with others assumes that they share the background and depth of knowledge needed to understand. Some authors also call this bias the curse of expertise.

The hard–easy effect is a cognitive bias that manifests itself as a tendency to overestimate the probability of one's success at a task perceived as hard, and to underestimate the likelihood of one's success at a task perceived as easy. The hard–easy effect takes place, for example, when individuals exhibit a degree of underconfidence in answering relatively easy questions and a degree of overconfidence in answering relatively difficult questions. "Hard tasks tend to produce overconfidence but worse-than-average perceptions," reported Katherine A. Burson, Richard P. Larrick, and Jack B. Soll in a 2005 study, "whereas easy tasks tend to produce underconfidence and better-than-average effects."
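A common way to express this effect numerically is a bias score: mean stated confidence minus the proportion of correct answers, computed separately for hard and easy question sets. The sketch assumes that scoring; the function name and the results are hypothetical.

```python
from statistics import mean
from typing import Sequence


def confidence_bias(confidence: Sequence[float], correct: Sequence[bool]) -> float:
    """Mean stated confidence minus proportion of correct answers.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    if not confidence or len(confidence) != len(correct):
        raise ValueError("need equal-length, non-empty sequences")
    return mean(confidence) - mean(float(ok) for ok in correct)


# Hypothetical results for one person on a hard and an easy question set.
hard = confidence_bias([0.7] * 10, [True] * 5 + [False] * 5)  # 0.7 - 0.5
easy = confidence_bias([0.8] * 10, [True] * 9 + [False] * 1)  # 0.8 - 0.9
print(f"hard set: {hard:+.2f}, easy set: {easy:+.2f}")  # hard set: +0.20, easy set: -0.10
```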

Radiocarbon dating measurements produce ages in "radiocarbon years", which must be converted to calendar ages by a process called calibration. Calibration is needed because the atmospheric ¹⁴C:¹²C ratio, which is a key element in calculating radiocarbon ages, has not been constant historically.
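A minimal sketch of the two steps, under stated assumptions. A conventional radiocarbon age is computed from the measured fraction modern (F¹⁴C) with the Libby mean-life of 8033 years, t = −8033 · ln(F¹⁴C) years BP (before 1950). Calibration then reads calendar ages off a curve; the simplest "intercept" version below finds every calendar age where a piecewise-linear curve equals the radiocarbon age. The toy curve values are made up, and the function names are hypothetical. Real calibration uses a published curve and propagates both the measurement error and the curve's own uncertainty into a probability distribution over calendar ages.

```python
import math
from typing import List, Tuple

# Libby mean-life in years (5568 / ln 2), used by convention for
# conventional radiocarbon ages.
LIBBY_MEAN_LIFE = 8033.0


def radiocarbon_age(f14c: float) -> float:
    """Conventional radiocarbon age in years BP from the fraction modern F14C."""
    return -LIBBY_MEAN_LIFE * math.log(f14c)


def calendar_intercepts(rc_age: float,
                        curve: List[Tuple[float, float]]) -> List[float]:
    """Calendar ages (BP) at which a piecewise-linear curve equals rc_age.

    curve holds (calendar_age, radiocarbon_age) points sorted by calendar age.
    Because the curve wiggles, one radiocarbon age can map to several
    calendar ages.
    """
    hits: List[float] = []
    for (c0, r0), (c1, r1) in zip(curve, curve[1:]):
        if r0 != r1 and (r0 - rc_age) * (r1 - rc_age) <= 0:
            cal = c0 + (rc_age - r0) * (c1 - c0) / (r1 - r0)
            if not hits or abs(cal - hits[-1]) > 1e-9:  # skip shared vertices
                hits.append(cal)
    return hits


# Toy curve with made-up values, NOT a real calibration curve.
toy_curve = [(2000, 2050), (2100, 2150), (2200, 2100), (2300, 2250)]
age = radiocarbon_age(0.768)
print(round(age), [round(c) for c in calendar_intercepts(age, toy_curve)])
```

With this toy curve a single radiocarbon age maps to three calendar ages, which is why calibrated dates are often reported as ranges.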

Debiasing is the reduction of bias, particularly with respect to judgment and decision making. Biased judgment and decision making is that which systematically deviates from the prescriptions of objective standards such as facts, logic, and rational behavior or prescriptive norms. Biased judgment and decision making exists in consequential domains such as medicine, law, policy, and business, as well as in everyday life. Investors, for example, tend to hold onto falling stocks too long and sell rising stocks too quickly. Employers exhibit considerable discrimination in hiring and employment practices, and some parents continue to believe that vaccinations cause autism despite knowing that this link is based on falsified evidence. At an individual level, people who exhibit less decision bias have more intact social environments, reduced risk of alcohol and drug use, lower childhood delinquency rates, and superior planning and problem solving abilities.

Non-homogeneous Gaussian regression (NGR) is a type of statistical regression analysis used in the atmospheric sciences as a way to convert ensemble forecasts into probabilistic forecasts. Relative to simple linear regression, NGR uses the ensemble spread as an additional predictor, which improves the prediction of uncertainty and allows the predicted uncertainty to vary from case to case. The prediction of uncertainty in NGR is derived from both past forecast-error statistics and the ensemble spread. NGR was originally developed for site-specific medium-range temperature forecasting, but has since been applied to site-specific medium-range wind forecasting and to seasonal forecasts, and has been adapted for precipitation forecasting. The introduction of NGR was the first demonstration that probabilistic forecasts that take account of the varying ensemble spread could achieve better skill scores than forecasts based on standard model output statistics (MOS) approaches applied to the ensemble mean.
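A sketch of one common NGR formulation, treated here as an assumption: the predictive distribution is Gaussian, with mean a + b·(ensemble mean) and variance c² + d²·(ensemble variance), and the coefficients are fitted on past forecasts by minimizing the mean continuous ranked probability score (CRPS), which has a closed form for a Gaussian. The training data are synthetic and the function names are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.stats import norm


def crps_normal(mu, sigma, y):
    """Closed-form CRPS of the forecast N(mu, sigma^2) for observation y."""
    z = (y - mu) / sigma
    return sigma * (z * (2 * norm.cdf(z) - 1) + 2 * norm.pdf(z) - 1 / np.sqrt(np.pi))


def predict(params, ens_mean, ens_var):
    """Predictive mean and standard deviation for given ensemble statistics."""
    a, b, c, d = params
    return a + b * ens_mean, np.sqrt(c**2 + d**2 * ens_var)


def fit_ngr(ens_mean, ens_var, obs):
    """Fit mu = a + b*mean and sigma^2 = c^2 + d^2*var by minimum mean CRPS.

    Squaring c and d keeps the predictive variance positive.
    """
    def loss(params):
        mu, sigma = predict(params, ens_mean, ens_var)
        return np.mean(crps_normal(mu, sigma, obs))

    result = minimize(loss, x0=[0.0, 1.0, 1.0, 1.0], method="BFGS")
    return result.x


# Synthetic training data (hypothetical): the observations are biased relative
# to the ensemble mean, and their error variance grows with the ensemble variance.
rng = np.random.default_rng(42)
n = 2000
ens_mean = rng.normal(15.0, 5.0, n)
ens_var = rng.uniform(0.5, 4.0, n)
obs = 1.0 + 0.9 * ens_mean + rng.normal(0.0, np.sqrt(0.5 + 1.0 * ens_var))

params = fit_ngr(ens_mean, ens_var, obs)
for var in (0.5, 4.0):
    mu, sd = predict(params, 20.0, var)
    print(f"ensemble mean 20, variance {var}: N({mu:.2f}, {sd:.2f}^2)")
```

The fitted spread term d is what lets the predicted uncertainty vary from case to case; setting d = 0 would reduce the sketch to a regression on the ensemble mean with constant error variance.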
