Parapsychology is the study of alleged psychic phenomena and other paranormal claims, such as those related to near-death experiences, synchronicity, and apparitional experiences. It is criticized as a pseudoscience, and the majority of mainstream scientists reject it. Parapsychology has also been criticized because many of its practitioners claim that their studies are plausible despite the lack of convincing evidence, after more than a century of research, for the existence of any psychic phenomena.

Pathological science is an area of research where "people are tricked into false results ... by subjective effects, wishful thinking or threshold interactions." The term was first used by Irving Langmuir, Nobel Prize-winning chemist, during a 1953 colloquium at the Knolls Research Laboratory. Langmuir said a pathological science is an area of research that simply will not "go away"—long after it was given up on as "false" by the majority of scientists in the field. He called pathological science "the science of things that aren't so."

The scientific method is an empirical method for acquiring knowledge that has characterized the development of science since at least the 17th century. It involves careful observation and rigorous skepticism about what is observed, given that cognitive assumptions can distort how one interprets the observation. It also involves formulating hypotheses, via induction, based on such observations; testing deductions drawn from the hypotheses through experiments and measurement-based statistical analysis; and refining the hypotheses based on the experimental findings. These are principles of the scientific method, as distinguished from a definitive series of steps applicable to all scientific enterprises.

Reproducibility, closely related to replicability and repeatability, is a major principle underpinning the scientific method. For the findings of a study to be reproducible means that results obtained by an experiment or an observational study or in a statistical analysis of a data set should be achieved again with a high degree of reliability when the study is replicated. There are different kinds of replication but typically replication studies involve different researchers using the same methodology. Only after one or several such successful replications should a result be recognized as scientific knowledge.

Confirmation bias is the tendency to search for, interpret, favor, and recall information in a way that confirms or supports one's prior beliefs or values. People display this bias when they select information that supports their views, ignoring contrary information, or when they interpret ambiguous evidence as supporting their existing attitudes. The effect is strongest for desired outcomes, for emotionally charged issues, and for deeply entrenched beliefs. Confirmation bias is insuperable for most people, but they can manage it, for example, by education and training in critical thinking skills.

An experiment is a procedure carried out to support or refute a hypothesis, or determine the efficacy or likelihood of something previously untried. Experiments provide insight into cause-and-effect by demonstrating what outcome occurs when a particular factor is manipulated. Experiments vary greatly in goal and scale but always rely on repeatable procedure and logical analysis of the results. There also exist natural experimental studies.

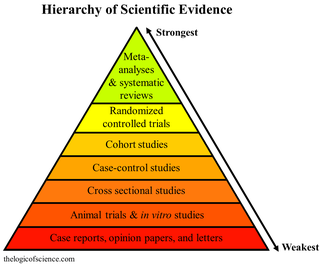

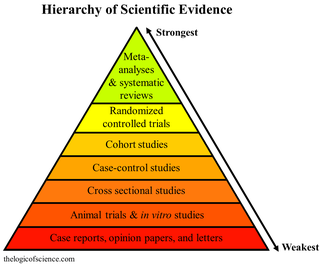

A meta-analysis is a statistical analysis that combines the results of multiple scientific studies. Meta-analyses can be performed when there are multiple scientific studies addressing the same question, with each individual study reporting measurements that are expected to have some degree of error. The aim then is to use approaches from statistics to derive a pooled estimate closest to the unknown common truth based on how this error is perceived. It is thus a basic methodology of metascience. Meta-analytic results are considered the most trustworthy source of evidence by the evidence-based medicine literature.
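One common way to derive such a pooled estimate is inverse-variance weighting under a fixed-effect model. The sketch below assumes that model (the text above does not name a specific method), and the function name `pooled_estimate` and the example numbers are hypothetical, chosen only for illustration.

```python
import math


def pooled_estimate(effects, std_errors):
    """Fixed-effect (inverse-variance) pooled estimate and its standard error."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("need one standard error per effect, and at least one study")
    # Each study is weighted by the inverse of its variance, so more
    # precise studies pull the pooled estimate harder.
    weights = [1.0 / (se ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical effect sizes and standard errors, for illustration only.
effects = [0.20, 0.40, 0.30]
std_errors = [0.10, 0.10, 0.20]
estimate, se = pooled_estimate(effects, std_errors)
print(f"pooled estimate = {estimate:.3f}, standard error = {se:.3f}")
```

The pooled standard error is smaller than that of any single study, which is the sense in which combining studies sharpens the estimate. A random-effects model would be the usual alternative when the studies are not assumed to share one common true effect.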

In a blind or blinded experiment, information which may influence the participants of the experiment is withheld until after the experiment is complete. Good blinding can reduce or eliminate experimental biases that arise from a participant's expectations, an observer's effect on the participants, observer bias, confirmation bias, and other sources. A blind can be imposed on any participant of an experiment, including subjects, researchers, technicians, data analysts, and evaluators. In some cases, while blinding would be useful, it is impossible or unethical. For example, it is not possible to blind a patient to their treatment in a physical therapy intervention. A good clinical protocol ensures that blinding is as effective as possible within ethical and practical constraints.
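As a rough illustration of how information can be withheld in practice, the sketch below assigns participants to opaque codes and keeps the code-to-arm key separate. The two-arm design, the labels "A" and "B", and the function name `blinded_allocation` are all assumptions made for this example, not a description of any particular protocol.

```python
import random


def blinded_allocation(participant_ids, seed=None):
    """Assign participants to opaque codes; return (allocation, unblinding key)."""
    rng = random.Random(seed)
    arms = ["treatment", "placebo"]
    # Randomly decide which opaque code stands for which arm.
    rng.shuffle(arms)
    key = {"A": arms[0], "B": arms[1]}
    # Balanced allocation: half of the participants per code, in random order.
    n = len(participant_ids)
    codes = ["A"] * (n // 2) + ["B"] * (n // 2)
    if n % 2:
        codes.append(rng.choice(["A", "B"]))
    rng.shuffle(codes)
    allocation = dict(zip(participant_ids, codes))
    return allocation, key


# Hypothetical participant identifiers.
allocation, key = blinded_allocation(["p1", "p2", "p3", "p4"], seed=1)
# Researchers and analysts work only with `allocation`;
# `key` is held by a third party until the experiment is complete.
```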

In published academic research, publication bias occurs when the outcome of an experiment or research study biases the decision to publish or otherwise distribute it. Publishing only results that show a significant finding disturbs the balance of findings in favor of positive results. The study of publication bias is an important topic in metascience.
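A small simulation can make the distortion concrete. The sketch below assumes a true effect of zero, unit-variance measurements, and a hypothetical publication rule that accepts only results with a one-sided z-score above 1.96; none of these choices come from the text above.

```python
import math
import random
import statistics


def simulate_publication_bias(n_studies=2000, sample_size=20, true_effect=0.0, seed=0):
    """Return (mean of all study results, mean of published results, number published)."""
    rng = random.Random(seed)
    std_error = 1.0 / math.sqrt(sample_size)  # known unit variance is assumed
    all_means, published_means = [], []
    for _ in range(n_studies):
        sample = [rng.gauss(true_effect, 1.0) for _ in range(sample_size)]
        mean = statistics.fmean(sample)
        all_means.append(mean)
        # Hypothetical publication rule: only "significant" positive
        # results (one-sided z > 1.96) get published.
        if mean / std_error > 1.96:
            published_means.append(mean)
    published_mean = statistics.fmean(published_means) if published_means else float("nan")
    return statistics.fmean(all_means), published_mean, len(published_means)


all_mean, published_mean, n_published = simulate_publication_bias()
print(f"all studies: {all_mean:.3f}, published only: {published_mean:.3f} ({n_published} studies)")
```

Under these assumptions the average over all studies stays close to zero, while the average over published studies is clearly positive, even though no real effect exists.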

In science, experimenter's regress refers to a loop of dependence between theory and evidence. In order to judge whether evidence is erroneous we must rely on theory-based expectations, and to judge the value of competing theories we rely on evidence. Cognitive bias affects experiments, and experiments determine which theory is valid. This issue is particularly important in new fields of science where there is no community consensus regarding the relative values of various competing theories, and where sources of experimental error are not well known.

The "ceiling effect" is one type of scale attenuation effect; the other is the "floor effect". The term describes either the point at which an independent variable no longer has an effect on a dependent variable, or the level above which variance in an independent variable is no longer measurable. It is applied slightly differently in two areas: pharmacology and statistics. An example in the first area, a ceiling effect in treatment, is pain relief by some kinds of analgesic drugs, which have no further effect on pain above a particular dosage level. An example in the second area, a ceiling effect in data-gathering, is a survey that groups all respondents into income categories without distinguishing the incomes of respondents above the highest level measured in the survey instrument. The maximum income level that can be reported creates a "ceiling" that results in measurement inaccuracy, because the range of the dependent variable does not include the true values above that point. The ceiling effect can occur any time a measure involves a set range in which a normal distribution predicts multiple scores at or above the maximum value for the dependent variable.
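The data-gathering case can be sketched in a few lines. The incomes, the ceiling of 100,000, and the helper name `apply_ceiling` below are hypothetical values picked for illustration.

```python
import statistics


def apply_ceiling(values, ceiling):
    """Record each value as the instrument would: anything above the ceiling is capped."""
    return [min(v, ceiling) for v in values]


# Hypothetical true incomes and a survey whose top category starts at 100,000.
true_incomes = [30_000, 55_000, 80_000, 120_000, 250_000]
ceiling = 100_000
recorded = apply_ceiling(true_incomes, ceiling)

at_ceiling = sum(1 for v in recorded if v == ceiling)
print("recorded values:", recorded)
print("respondents at the ceiling:", at_ceiling)
print("true mean:", statistics.fmean(true_incomes))
print("recorded mean:", statistics.fmean(recorded))
```

In this example the two highest earners become indistinguishable, and the recorded mean understates the true mean because everything above the ceiling is lost.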

John P. A. Ioannidis is a Greek-American physician-scientist, writer and Stanford University professor who has made contributions to evidence-based medicine, epidemiology, and clinical research. Ioannidis studies scientific research itself, that is, meta-research, primarily in clinical medicine and the social sciences.

"Why Most Published Research Findings Are False" is a 2005 essay written by John Ioannidis, a professor at the Stanford School of Medicine, and published in PLOS Medicine. It is considered foundational to the field of metascience.

Criticism of science addresses problems within science in order to improve science as a whole and its role in society. Criticisms come from philosophy, from social movements like feminism, and from within science itself.

Funding bias, also known as sponsorship bias, funding outcome bias, funding publication bias, and funding effect, refers to the tendency of a scientific study to support the interests of the study's financial sponsor. This phenomenon is recognized sufficiently that researchers undertake studies to examine bias in past published studies. Funding bias has been associated, in particular, with research into chemical toxicity, tobacco, and pharmaceutical drugs. It is an instance of experimenter's bias.

White hat bias (WHB) is a purported "bias leading to the distortion of information in the service of what may be perceived to be righteous ends", which consists of both cherry picking the evidence and publication bias. Public health researchers David Allison and Mark Cope first discussed this bias in a 2010 paper and explained the motivation behind it in terms of "righteous zeal, indignation toward certain aspects of industry", and other factors.

Invalid science consists of scientific claims based on experiments that cannot be reproduced or that are contradicted by experiments that can be reproduced. Recent analyses indicate that the proportion of retracted claims in the scientific literature is steadily increasing. The number of retractions has grown tenfold over the past decade, but retractions still make up only approximately 0.2% of the 1.4 million papers published annually in scholarly journals.
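To put the percentage in absolute terms, the quick calculation below converts the two figures quoted above into an approximate yearly count. The function name is hypothetical, and the result is only as precise as the rounded inputs.

```python
def retractions_per_year(papers_per_year, retractions_per_thousand):
    """Approximate yearly retractions, using integer arithmetic to avoid rounding noise."""
    return papers_per_year * retractions_per_thousand // 1000


# Figures from the paragraph above: about 0.2% (2 per 1,000) of 1.4 million papers.
estimate = retractions_per_year(1_400_000, 2)
print(f"roughly {estimate:,} retractions per year")
```

On these figures, roughly 2,800 papers are retracted each year.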

The replication crisis is an ongoing methodological crisis in which the results of many scientific studies are difficult or impossible to reproduce. Because the reproducibility of empirical results is an essential part of the scientific method, such failures undermine the credibility of theories building on them and potentially call into question substantial parts of scientific knowledge.

The Reproducibility Project is a series of crowdsourced collaborations aiming to reproduce published scientific studies, finding high rates of results which could not be replicated. It has resulted in two major initiatives focusing on the fields of psychology and cancer biology. The project has brought attention to the replication crisis, and has contributed to shifts in scientific culture and publishing practices to address it.

Brian Arthur Nosek is an American social-cognitive psychologist, professor of psychology at the University of Virginia, and the co-founder and director of the Center for Open Science. He also co-founded the Society for the Improvement of Psychological Science and Project Implicit. He has been on the faculty of the University of Virginia since 2002.