Related Research Articles

Computational linguistics is an interdisciplinary field concerned with the computational modelling of natural language, as well as the study of appropriate computational approaches to linguistic questions. In general, computational linguistics draws upon linguistics, computer science, artificial intelligence, mathematics, logic, philosophy, cognitive science, cognitive psychology, psycholinguistics, anthropology and neuroscience, among others.

Natural language processing (NLP) is an interdisciplinary subfield of computer science and linguistics. It is primarily concerned with giving computers the ability to support and manipulate human language. It involves processing natural language datasets, such as text corpora or speech corpora, using either rule-based or probabilistic machine learning approaches. The goal is a computer capable of "understanding" the contents of documents, including the contextual nuances of the language within them. The technology can then accurately extract information and insights contained in the documents as well as categorize and organize the documents themselves.

Word-sense disambiguation (WSD) is the process of identifying which sense of a word is meant in a sentence or other segment of context. In human language processing and cognition, it is usually subconscious and automatic, but it can come to conscious attention when ambiguity impairs clarity of communication, given the pervasive polysemy in natural language. In computational linguistics, it is an open problem that affects other language-processing tasks, such as discourse analysis, improving the relevance of search engines, anaphora resolution, coherence, and inference.
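As a rough illustration of computational word-sense disambiguation, the sketch below uses a simplified form of the Lesk heuristic, a classic knowledge-based approach that the summary above does not itself describe: it picks the sense whose dictionary gloss shares the most content words with the surrounding sentence. The sense inventory `SENSES`, the glosses, the stopword list, and the function names are all invented for this example; a real system would draw on a lexical resource and handle inflection.

```python
# Hypothetical mini sense inventory; the glosses were written for this example.
SENSES = {
    "bank": {
        "bank_financial": "an institution that accepts deposits and lends money",
        "bank_river": "the sloping land beside a river or stream of water",
    }
}

# Small, ad hoc stopword list (an assumption, not a standard resource).
STOPWORDS = {
    "a", "an", "the", "of", "or", "and", "that",
    "to", "on", "in", "at", "by", "she", "he", "we",
}


def content_words(text):
    """Lower-case the text, split on whitespace, and drop stopwords."""
    return {w for w in text.lower().split() if w not in STOPWORDS}


def simplified_lesk(word, sentence):
    """Return the sense of `word` whose gloss overlaps most with the sentence."""
    context = content_words(sentence) - {word}
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(simplified_lesk("bank", "she opened an account to deposit money at the bank"))
print(simplified_lesk("bank", "we sat by the river on the grassy bank"))
```

Exact word overlap is brittle ("deposit" does not match "deposits" here), which is one reason the task remains open in practice.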

Natural-language understanding (NLU) or natural-language interpretation (NLI) is a subset of natural-language processing in artificial intelligence that deals with machine reading comprehension. Natural-language understanding is considered an AI-hard problem.

Computational semiotics is an interdisciplinary field that applies, conducts, and draws on research in logic, mathematics, the theory and practice of computation, formal and natural language studies, the cognitive sciences generally, and semiotics proper. The term encompasses both the application of semiotics to computer hardware and software design and, conversely, the use of computation for performing semiotic analysis. The former focuses on what semiotics can bring to computation; the latter on what computation can bring to semiotics.

The Association for Computational Linguistics (ACL) is a scientific and professional organization for people working on natural language processing. Its namesake conference is one of the primary high-impact conferences for natural language processing research, along with EMNLP. The conference is held each summer in locations where significant computational linguistics research is carried out.

In semantics, mathematical logic and related disciplines, the principle of compositionality is the principle that the meaning of a complex expression is determined by the meanings of its constituent expressions and the rules used to combine them. The principle is also called Frege's principle, because Gottlob Frege is widely credited with its first modern formulation. However, Frege never stated the principle explicitly, and arguably it was already assumed by George Boole decades before Frege's work.
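The principle can be made concrete with a toy interpreter, given here as a minimal sketch rather than a standard formalism. Each word has a meaning in a small lexicon, and the meaning of a two-part phrase is computed only from the meanings of its parts plus one combination rule, function application. The entities, the sets `SLEEPERS` and `RUNNERS`, the lexicon, and the `meaning` function are all assumptions made for illustration.

```python
# Toy model: a domain of entities and two properties (invented for this example).
ENTITIES = {"alice", "bob", "carol"}
SLEEPERS = {"alice", "bob"}
RUNNERS = {"alice"}

# Lexicon: names denote entities, verbs denote predicates over entities,
# and quantifiers denote functions from predicates to truth values.
LEXICON = {
    "alice": "alice",
    "bob": "bob",
    "carol": "carol",
    "sleeps": lambda x: x in SLEEPERS,
    "runs": lambda x: x in RUNNERS,
    "everyone": lambda pred: all(pred(e) for e in ENTITIES),
    "someone": lambda pred: any(pred(e) for e in ENTITIES),
}


def meaning(tree):
    """Meaning of a word (a str) or of a binary phrase (a pair of subtrees)."""
    if isinstance(tree, str):
        return LEXICON[tree]
    left, right = (meaning(part) for part in tree)
    # Combination rule: apply whichever part is a function to the other part.
    if callable(left):
        return left(right)
    return right(left)


print(meaning(("alice", "sleeps")))     # a name combined with a predicate
print(meaning(("everyone", "sleeps")))  # a quantifier combined with a predicate
```

Because `meaning` never inspects anything except the meanings of the immediate parts, changing a word's lexicon entry changes the meaning of every phrase containing it in a predictable way.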

Computational semantics is the study of how to automate the process of constructing and reasoning with meaning representations of natural language expressions. It consequently plays an important role in natural-language processing and computational linguistics.

Distributional semantics is a research area that develops and studies theories and methods for quantifying and categorizing semantic similarities between linguistic items based on their distributional properties in large samples of language data. The basic idea of distributional semantics can be summed up in the so-called distributional hypothesis: linguistic items with similar distributions have similar meanings.
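The distributional hypothesis can be sketched with simple co-occurrence counts. The example below, a minimal illustration under stated assumptions, represents each word by the words that appear within a fixed window around it and compares words by cosine similarity. The corpus, the window size, and the function names are invented here; practical systems use much larger corpora and usually reweight or reduce the raw counts.

```python
from collections import Counter
import math

# Toy corpus (invented for illustration).
CORPUS = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the mouse",
    "the dog chases the cat",
    "the car needs fuel",
]


def context_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions."""
    vectors = {}
    for sentence in sentences:
        tokens = sentence.split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            context = tokens[lo:i] + tokens[i + 1:hi]
            vectors.setdefault(word, Counter()).update(context)
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


VECTORS = context_vectors(CORPUS)
print(cosine(VECTORS["cat"], VECTORS["dog"]))
print(cosine(VECTORS["cat"], VECTORS["car"]))
```

On this corpus "cat" comes out closer to "dog" than to "car", because the two animal words share contexts such as "drinks" and "chases"; with so little data the frequent word "the" still dominates the vectors.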

Yorick Alexander Wilks FBCS was a British computer scientist. He was an emeritus professor of artificial intelligence at the University of Sheffield, visiting professor of artificial intelligence at Gresham College, senior research fellow at the Oxford Internet Institute, senior scientist at the Florida Institute for Human and Machine Cognition, and a member of the Epiphany Philosophers.

In semantics, donkey sentences are sentences that contain a pronoun with clear meaning but whose syntactic role in the sentence poses challenges to linguists. Such sentences defy straightforward attempts to generate their formal language equivalents. The difficulty is with understanding how English speakers parse such sentences.

SemEval is an ongoing series of evaluations of computational semantic analysis systems; it evolved from the Senseval word sense evaluation series. The evaluations are intended to explore the nature of meaning in language. While meaning is intuitive to humans, transferring those intuitions to computational analysis has proved elusive.

In natural language processing, textual entailment (TE), also known as natural language inference (NLI), is a directional relation between text fragments. The relation holds whenever the truth of one text fragment follows from another text.

Deep linguistic processing is a natural language processing framework which draws on theoretical and descriptive linguistics. It models language predominantly by way of theoretical syntactic/semantic theory. Deep linguistic processing approaches differ from "shallower" methods in that they yield more expressive and structural representations which directly capture long-distance dependencies and underlying predicate-argument structures.

The knowledge-intensive approach of deep linguistic processing requires considerable computational power, and has in the past sometimes been judged intractable. However, research in the early 2000s made considerable advances in the efficiency of deep processing. Today, efficiency is no longer a major problem for applications using deep linguistic processing.

The following outline is provided as an overview of and topical guide to natural-language processing:

Temporal annotation is the study of how to automatically add semantic information regarding time to natural language documents. It plays a role in natural language processing and computational linguistics.

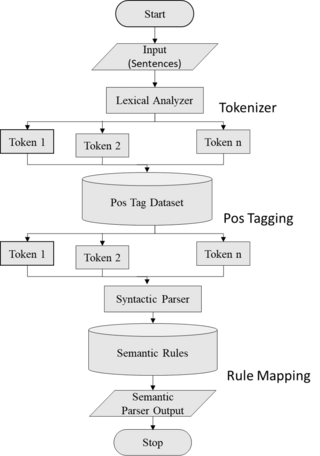

Semantic parsing is the task of converting a natural language utterance to a logical form: a machine-understandable representation of its meaning. Semantic parsing can thus be understood as extracting the precise meaning of an utterance. Applications of semantic parsing include machine translation, question answering, ontology induction, automated reasoning, and code generation. The phrase was first used in the 1970s by Yorick Wilks as the basis for machine translation programs working with only semantic representations. Semantic parsing is one of the important tasks in computational linguistics and natural language processing.
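The mapping from utterance to logical form can be illustrated with a deliberately small, pattern-based sketch. It is not how modern semantic parsers work (those are typically grammar-based or learned from data); it only shows the input and output of the task. The patterns, the predicate names `capital_of` and `rivers_in`, and the `parse` function are hypothetical.

```python
import re

# Hypothetical utterance templates paired with logical-form templates.
PATTERNS = [
    (re.compile(r"^what is the capital of (\w+)\??$"), "answer(capital_of({0}))"),
    (re.compile(r"^which rivers flow through (\w+)\??$"), "answer(rivers_in({0}))"),
]


def parse(utterance):
    """Return a logical-form string for the utterance, or None if no pattern fits."""
    text = utterance.strip().lower()
    for pattern, template in PATTERNS:
        match = pattern.match(text)
        if match:
            return template.format(*match.groups())
    return None


print(parse("What is the capital of France?"))
print(parse("Tell me a joke"))
```

A downstream component could then evaluate the logical form against a knowledge base, which is what makes the representation machine-understandable.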

Preslav Nakov is a computer scientist who works on natural language processing. He is particularly known for his research on fake news detection, automatic detection of offensive language, and biomedical text mining. Nakov obtained a PhD in computer science from the University of California, Berkeley, under the supervision of Marti Hearst. He was the first person to receive the John Atanasov Presidential Award, conferred by the President of Bulgaria for achievements in the development of the information society.

Mona Talat Diab is a computer science professor and director of Carnegie Mellon University's Language Technologies Institute. Previously, she was a professor at George Washington University and a research scientist with Facebook AI. Her research focuses on natural language processing, computational linguistics, cross lingual/multilingual processing, computational socio-pragmatics, Arabic language processing, and applied machine learning.

Ellen Riloff is an American computer scientist currently serving as a professor at the School of Computing at the University of Utah. Her research focuses on natural language processing and computational linguistics, specifically information extraction, sentiment analysis, semantic class induction, and bootstrapping methods that learn from unannotated texts.
