Privacy is the ability of an individual or group to seclude themselves or information about themselves, and thereby express themselves selectively.

Information privacy is the relationship between the collection and dissemination of data, technology, the public expectation of privacy, contextual information norms, and the legal and political issues surrounding them. It is also known as data privacy or data protection.

The right to privacy is an element of various legal traditions that intends to restrain governmental and private actions that threaten the privacy of individuals. Over 150 national constitutions mention the right to privacy. On 10 December 1948, the United Nations General Assembly adopted the Universal Declaration of Human Rights (UDHR), originally written to guarantee the individual rights of everyone everywhere. While the phrase "right to privacy" does not appear in the document, many read such a right into Article 12, which states: "No one shall be subjected to arbitrary interference with his privacy, family, home or correspondence, nor to attacks upon his honour and reputation. Everyone has the right to the protection of the law against such interference or attacks."

Media ethics is the subdivision of applied ethics dealing with the specific ethical principles and standards of media, including broadcast media, film, theatre, the arts, print media and the internet. The field covers many varied and highly controversial topics, ranging from war journalism to Benetton ad campaigns.

Data portability is a concept to protect users from having their data stored in "silos" or "walled gardens" that are incompatible with one another, i.e. closed platforms, thus subjecting them to vendor lock-in and making the creation of data backups or moving accounts between services difficult.

Cyber ethics is the philosophic study of ethics pertaining to computers, encompassing user behavior, what computers are programmed to do, and how both affect individuals and society. For years, various governments have enacted regulations, and organizations have defined policies, concerning cyber ethics.

Communication privacy management (CPM), originally known as communication boundary management, is a systematic research theory developed by Sandra Petronio in 1991. CPM theory aims to develop an evidence-based understanding of the way people make decisions about revealing and concealing private information. It suggests that individuals maintain and coordinate privacy boundaries with various communication partners depending on the perceived benefits and costs of information disclosure. Petronio believes disclosing private information will strengthen one's connections with others, and that we can better understand the rules for disclosure in relationships through negotiating privacy boundaries.

The term digital privacy is often used in contexts that advocate for individual and consumer privacy rights in e-services, and typically in opposition to the practices of e-marketers, businesses, and other companies that collect and use personal information and data. Digital privacy can be divided into three related sub-categories: information privacy, communication privacy, and individual privacy.

Privacy for research participants is a concept in research ethics which states that a person in human subject research has a right to privacy when participating in research. Typical scenarios include, for example, a surveyor doing social research who conducts an interview with a participant, or a medical researcher in a clinical trial who asks a participant for a blood sample to see whether there is a relationship between something measurable in blood and a person's health. In both cases, the ideal outcome is that any participant can join the study and that neither the researcher, nor the study design, nor the publication of the study results would ever identify any participant in the study. In this way, the privacy rights of these individuals can be preserved.

De-identification is the process used to prevent someone's personal identity from being revealed. For example, data produced during human subject research might be de-identified to preserve the privacy of research participants. Biological data may be de-identified in order to comply with HIPAA regulations that define and stipulate patient privacy laws.
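
As a rough illustration of the idea, the following Python sketch removes direct identifiers from a single research record and replaces the participant ID with a keyed pseudonym. The field names (`name`, `email`, `phone`, `participant_id`), the demo key, and the helper `deidentify` are all hypothetical, and the approach shown (field removal plus an HMAC-based pseudonym) is only one simple technique; it is not presented as sufficient to satisfy HIPAA or any other regulation.

```python
import hashlib
import hmac

# Hypothetical set of direct identifiers; a real dataset defines its own.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def deidentify(record: dict, secret_key: bytes, id_field: str = "participant_id") -> dict:
    """Return a copy of `record` without direct identifiers and with a keyed pseudonym."""
    # Drop every field listed as a direct identifier.
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    # Replace the stable ID with a keyed hash (HMAC-SHA256) so the same participant
    # always maps to the same pseudonym without exposing the original ID.
    raw_id = str(cleaned.pop(id_field)).encode("utf-8")
    cleaned["pseudonym"] = hmac.new(secret_key, raw_id, hashlib.sha256).hexdigest()[:16]
    return cleaned


# Illustrative record with made-up values.
record = {
    "participant_id": 1042,
    "name": "Jane Doe",
    "email": "jane@example.org",
    "phone": "555-0100",
    "blood_glucose_mg_dl": 92,
}
deidentified = deidentify(record, secret_key=b"demo-key-not-for-production")
print(deidentified)
```

The measurement survives while the name, e-mail address, phone number, and raw ID do not. Fields left in place can still act as quasi-identifiers, so removing direct identifiers alone does not guarantee anonymity.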

The right to be forgotten (RTBF) is the right to have private information about a person removed from Internet searches and other directories under some circumstances. The concept has been discussed and put into practice in several jurisdictions, including Argentina, the European Union (EU), and the Philippines. The issue has arisen from the desire of individuals to "determine the development of their life in an autonomous way, without being perpetually or periodically stigmatized as a consequence of a specific action performed in the past".

Google Spain SL, Google Inc. v Agencia Española de Protección de Datos, Mario Costeja González (2014) is a decision by the Court of Justice of the European Union (CJEU). It held that an Internet search engine operator is responsible for the processing that it carries out of personal information which appears on web pages published by third parties.

Data re-identification or de-anonymization is the practice of matching anonymous data with publicly available information, or auxiliary data, in order to discover the person to whom the data belongs. This is a concern because companies with privacy policies, health care providers, and financial institutions may release the data they collect once it has gone through a de-identification process.
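
The matching step described above can be sketched as a simple linkage on shared attributes. The Python example below is a minimal illustration under stated assumptions: the records, the names, the choice of `zip`, `birth_year`, and `sex` as quasi-identifiers, and the helper `link_records` are all invented for the example, and real re-identification attacks are typically more elaborate.

```python
def link_records(deidentified, auxiliary, quasi_identifiers):
    """Pair each de-identified row with the single auxiliary person who shares
    all quasi-identifier values; rows with zero or several candidates are skipped."""
    # Index the auxiliary (public) data by its quasi-identifier values.
    index = {}
    for person in auxiliary:
        key = tuple(person[q] for q in quasi_identifiers)
        index.setdefault(key, []).append(person["name"])

    matches = []
    for row in deidentified:
        key = tuple(row[q] for q in quasi_identifiers)
        candidates = index.get(key, [])
        if len(candidates) == 1:  # a unique match re-identifies the row
            matches.append((candidates[0], row))
    return matches


# Hypothetical released dataset: names removed, quasi-identifiers kept.
released = [
    {"zip": "02138", "birth_year": 1965, "sex": "F", "diagnosis": "asthma"},
    {"zip": "02139", "birth_year": 1980, "sex": "M", "diagnosis": "diabetes"},
]
# Hypothetical public auxiliary data, such as a voter roll.
public = [
    {"name": "Alice Example", "zip": "02138", "birth_year": 1965, "sex": "F"},
    {"name": "Bob Example", "zip": "02139", "birth_year": 1980, "sex": "M"},
    {"name": "Carl Example", "zip": "02139", "birth_year": 1980, "sex": "M"},
]

reidentified = link_records(released, public, ["zip", "birth_year", "sex"])
for name, row in reidentified:
    print(name, "->", row["diagnosis"])
```

In this toy data only the first released row is re-identified, because two people in the auxiliary data share the second row's attributes. A combination of attributes is only identifying when it is unique in the population.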

Critical data studies is the exploration of and engagement with social, cultural, and ethical challenges that arise when working with big data. The field is practiced by bringing a variety of perspectives and a critical approach to bear on data. As its name implies, critical data studies draws heavily on critical theory, which has a strong focus on addressing the organization of power structures, and applies that idea to the study of data.

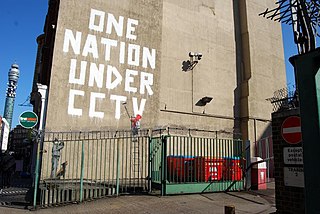

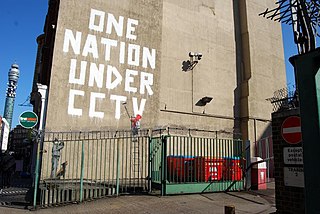

Data politics encompasses the political aspects of data, including topics ranging from data activism to open data and open government. The ways in which data is collected and accessed, and what is done with that data, have changed in contemporary society due to a number of political factors. One issue that often arises is how disconnected people are from their own data: they rarely gain access to the data they produce. Large platforms like Google take a "better to ask forgiveness than permission" stance on data collection, of which the greater population is largely unaware, and this has led to movements within data activism.

DNA encryption is the process of hiding or obscuring genetic information by a computational method in order to improve genetic privacy in DNA sequencing processes. The human genome is long and complex, but important and identifying information can be interpreted from small variations without reading the entire genome. A whole human genome is a string of 3.2 billion base-paired nucleotides, the building blocks of life, yet genetic variation between individuals amounts to only 0.5%, an important 0.5% that accounts for all of human diversity, the pathology of different diseases, and ancestral history. Emerging strategies combine different methods, such as randomization algorithms and cryptographic approaches, to de-identify the genetic sequence from the individual and, fundamentally, to isolate only the necessary information while protecting the rest of the genome from unnecessary inquiry. The priority now is to ascertain which methods are robust, and how policy should ensure the ongoing protection of genetic privacy.
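
To put the figures above in perspective, the short Python calculation below works out how many base pairs 0.5% of a 3.2-billion-base-pair genome represents. It takes both numbers at face value from the text; the variable names are illustrative.

```python
# Figures quoted in the text above.
GENOME_LENGTH_BP = 3_200_000_000  # about 3.2 billion base pairs
VARIATION_PER_MILLE = 5           # 0.5% written as 5 per 1000 to stay in integers

# Number of positions that may differ between two individuals.
variable_positions = GENOME_LENGTH_BP * VARIATION_PER_MILLE // 1000
print(f"{variable_positions:,} base pairs")  # 16,000,000 base pairs
```

On these figures, the identifying portion is on the order of 16 million base pairs, which is why protecting a small fraction of the sequence matters so much for genetic privacy.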

Data shadows refer to the information that a person leaves behind unintentionally while taking part in daily activities such as checking their e-mails, scrolling through social media, or using a debit or credit card.

Digital self-determination is a multidisciplinary concept derived from the legal concept of self-determination and applied to the digital sphere, to address the unique challenges to individual and collective agency and autonomy arising with increasing digitalization of many aspects of society and daily life.

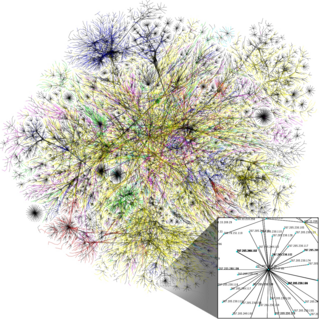

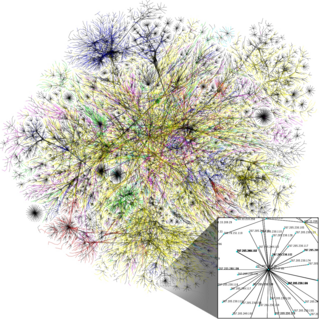

A data ecosystem is the complex environment of co-dependent networks and actors that contribute to data collection, transfer and use. Data ecosystems can span sectors, such as healthcare or finance, which then inform one another's practices. A data ecosystem often consists of numerous data assemblages. Research into data ecosystems has developed in response to the rapid proliferation and availability of information through the web, which has contributed to the commodification of data.

Data care refers to treating people and their private information fairly and with dignity. Data has become increasingly used in societies around the world, whether that means securely storing a medical patient's data, an employee's data, or a citizen's private data. The concept of data care emerged from this increase in data usage; it describes the act of treating people and their data with care and respect. The concept holds that caring for people's data is the responsibility of those who govern data, such as businesses and policy makers, and it addresses how to care for data ethically while keeping in mind the people to whom the data belongs. It also draws on the concept of 'slow computing' as a way to help create and maintain proper data care.