Neural networks are a class of machine learning models inspired by the neuronal organization of the biological neural networks in animal brains.

Jürgen Schmidhuber is a German computer scientist noted for his work in the field of artificial intelligence, specifically artificial neural networks. He is a scientific director of the Dalle Molle Institute for Artificial Intelligence Research in Switzerland. He is also director of the Artificial Intelligence Initiative and professor of the Computer Science program in the Computer, Electrical, and Mathematical Sciences and Engineering (CEMSE) division at the King Abdullah University of Science and Technology (KAUST) in Saudi Arabia.

Neuromorphic computing is an approach to computing that is inspired by the structure and function of the human brain. A neuromorphic computer or chip is any device that uses physical artificial neurons to do computations. In recent times, the term neuromorphic has been used to describe analog, digital, mixed-mode analog/digital VLSI, and software systems that implement models of neural systems. At the hardware level, neuromorphic computing can be realized with oxide-based memristors, spintronic memories, threshold switches, and transistors, among others. Software-based neuromorphic systems of spiking neural networks can be trained using error backpropagation, e.g., with Python-based frameworks such as snnTorch, or using canonical learning rules from the biological learning literature, e.g., with BindsNet.

Developmental robotics (DevRob), sometimes called epigenetic robotics, is a scientific field which aims at studying the developmental mechanisms, architectures and constraints that allow lifelong and open-ended learning of new skills and new knowledge in embodied machines. As in human children, learning is expected to be cumulative and of progressively increasing complexity, and to result from self-exploration of the world in combination with social interaction. The typical methodological approach starts from theories of human and animal development elaborated in fields such as developmental psychology, neuroscience, developmental and evolutionary biology, and linguistics, and then formalizes and implements them in robots, sometimes exploring extensions or variants of them. Experimenting with those models in robots allows researchers to confront them with reality, and as a consequence, developmental robotics also provides feedback and novel hypotheses on theories of human and animal development.

The expression computational intelligence (CI) usually refers to the ability of a computer to learn a specific task from data or experimental observation. Even though it is commonly considered a synonym of soft computing, there is still no commonly accepted definition of computational intelligence.

Holographic associative memory (HAM) is an information storage and retrieval system based on the principles of holography. Holograms are made by using two beams of light, called a "reference beam" and an "object beam". They produce a pattern on the film that contains them both. Afterwards, by reproducing the reference beam, the hologram recreates a visual image of the original object. In theory, one could use the object beam to do the same thing: reproduce the original reference beam. In HAM, the pieces of information act like the two beams. Each can be used to retrieve the other from the pattern. HAM can be thought of as an artificial neural network which mimics the way the brain uses information. The information is presented in abstract form by a complex vector which may be expressed directly by a waveform possessing frequency and magnitude. This waveform is analogous to the electrochemical impulses believed to transmit information between biological neuron cells.
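The two-way retrieval described above can be illustrated with a toy model. The sketch below is not the HAM formulation itself; it assumes that each piece of information is a list of unit-magnitude complex numbers (phasors) and that the stored pattern is the element-wise interference term `conj(reference) * object`. All function names are hypothetical.

```python
import cmath
import random


def random_phasors(n, rng):
    """Return n unit-magnitude complex numbers with random phases."""
    return [cmath.exp(1j * rng.uniform(-cmath.pi, cmath.pi)) for _ in range(n)]


def record(reference, obj):
    """Store the element-wise interference term conj(reference) * object."""
    return [r.conjugate() * o for r, o in zip(reference, obj)]


def recall_object(pattern, reference):
    """Illuminate the pattern with the reference to recover the object."""
    return [r * h for r, h in zip(reference, pattern)]


def recall_reference(pattern, obj):
    """Illuminate the pattern with the object to recover the reference."""
    return [o * h.conjugate() for o, h in zip(obj, pattern)]


rng = random.Random(0)
reference = random_phasors(8, rng)
obj = random_phasors(8, rng)
pattern = record(reference, obj)

# Largest element-wise error for each direction of recall.
err_obj = max(abs(a - b) for a, b in zip(recall_object(pattern, reference), obj))
err_ref = max(abs(a - b) for a, b in zip(recall_reference(pattern, obj), reference))
```

Because every element has magnitude 1, both recalls are exact up to floating-point rounding; with non-unit magnitudes the recovered vector would be scaled by the squared magnitude of the vector used for recall.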

The neocognitron is a hierarchical, multilayered artificial neural network proposed by Kunihiko Fukushima in 1979. It has been used for Japanese handwritten character recognition and other pattern recognition tasks, and served as the inspiration for convolutional neural networks.

Computational neurogenetic modeling (CNGM) is concerned with the study and development of dynamic neuronal models for modeling brain functions with respect to genes and dynamic interactions between genes. These include neural network models and their integration with gene network models. This area brings together knowledge from various scientific disciplines, such as computer and information science, neuroscience and cognitive science, genetics and molecular biology, as well as engineering.

Spiking neural networks (SNNs) are artificial neural networks (ANNs) that more closely mimic natural neural networks. In addition to neuronal and synaptic state, SNNs incorporate the concept of time into their operating model. The idea is that neurons in the SNN do not transmit information at each propagation cycle, but rather transmit information only when a membrane potential (an intrinsic quality of the neuron related to its membrane electrical charge) reaches a specific value, called the threshold. When the membrane potential reaches the threshold, the neuron fires and generates a signal that travels to other neurons, which in turn increase or decrease their potentials in response to this signal. A neuron model that fires at the moment of threshold crossing is also called a spiking neuron model.
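The threshold behavior described above can be sketched with a single discrete-time neuron. This is a minimal illustration, not a reference implementation: the leak factor, the reset-to-zero rule, and the function name `simulate_lif` are assumptions introduced here (a leaky integrate-and-fire model), not details taken from the text.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate one leaky integrate-and-fire neuron over discrete time steps.

    Returns a list with 1 where the neuron fired and 0 elsewhere.
    The leak factor and reset value are illustrative assumptions.
    """
    potential = reset
    spikes = []
    for current in input_current:
        # Decay the previous potential, then integrate the new input.
        potential = leak * potential + current
        if potential >= threshold:
            # Threshold crossed: emit a spike and reset the potential.
            spikes.append(1)
            potential = reset
        else:
            spikes.append(0)
    return spikes


# A constant input of 0.3 per step makes the neuron fire every fourth step.
spikes = simulate_lif([0.3] * 10)
print(spikes)  # [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
```

The neuron stays silent while its potential accumulates (0.3, 0.57, 0.813) and fires only on the step where the potential reaches the threshold, which is the sparse, time-dependent signalling that distinguishes SNNs from networks that transmit a value at every propagation cycle.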

Neurorobotics is the combined study of neuroscience, robotics, and artificial intelligence. It is the science and technology of embodied autonomous neural systems. Neural systems include brain-inspired algorithms, computational models of biological neural networks, and actual biological systems. Such neural systems can be embodied in machines with mechanical or any other form of physical actuation. This includes robots and prosthetic or wearable systems, but also micro-machines at smaller scales and furniture and infrastructure at larger scales.

An area of computer vision is active vision, sometimes also called active computer vision. An active vision system is one that can manipulate the viewpoint of the camera(s) in order to investigate the environment and get better information from it.

Kunihiko Fukushima is a Japanese computer scientist, most noted for his work on artificial neural networks and deep learning. He is currently working part-time as a senior research scientist at the Fuzzy Logic Systems Institute in Fukuoka, Japan.

Morphogenetic robotics generally refers to the methodologies that address challenges in robotics inspired by biological morphogenesis.

Fusion adaptive resonance theory (fusion ART) is a generalization of the self-organizing neural networks known as the original Adaptive Resonance Theory models, and learns recognition categories across multiple pattern channels. A separate stream of work on fusion ARTMAP extends fuzzy ARTMAP, which consists of two fuzzy ART modules connected by an inter-ART map field, to an architecture consisting of multiple ART modules.

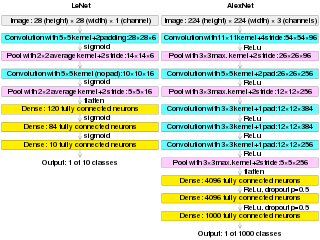

AlexNet is the name of a convolutional neural network (CNN) architecture, designed by Alex Krizhevsky in collaboration with Ilya Sutskever and Geoffrey Hinton, who was Krizhevsky's Ph.D. advisor at the University of Toronto.

Artificial neural networks (ANNs) are models created using machine learning to perform a number of tasks. Their creation was inspired by neural circuitry. While some of the computational implementations of ANNs relate to earlier discoveries in mathematics, the first implementation of ANNs was by psychologist Frank Rosenblatt, who developed the perceptron. Little research was conducted on ANNs in the 1970s and 1980s, with the AAAI calling that period an "AI winter".

Silvia Ferrari is an Italian-American aerospace engineer. She is the John Brancaccio Professor at the Sibley School of Mechanical and Aerospace Engineering at Cornell University and also the director of the Laboratory for Intelligent Systems and Control (LISC) at the same university.

Aude G. Billard is a Swiss physicist in the fields of machine learning and human-robot interactions. As a full professor at the School of Engineering at the Swiss Federal Institute of Technology in Lausanne (EPFL), Billard’s research focuses on applying machine learning to support robot learning through human guidance. Billard’s work on human-robot interactions has been recognized numerous times by the Institute of Electrical and Electronics Engineers (IEEE), and she currently holds a leadership position on the executive committee of the IEEE Robotics and Automation Society (RAS) as the vice president of publication activities.

Javier Andreu-Perez is a British computer scientist and a Senior Lecturer and Chair in Smart Health Technologies at the University of Essex. He is also associate editor-in-chief of Neurocomputing for the area of Deep Learning and Machine Learning. Andreu-Perez's research is mainly focused on Human-Centered Artificial Intelligence (HCAI). He also chairs an interdisciplinary lab in this area, HCAI-Essex.

Small object detection is a particular case of object detection where various techniques are employed to detect small objects in digital images and videos. "Small objects" are objects having a small pixel footprint in the input image. In areas such as aerial imagery, state-of-the-art object detection techniques have underperformed because of small objects.
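The notion of a small pixel footprint can be made concrete with a short sketch. The text gives no numeric cutoff, so the 32 × 32 pixel area threshold used below is an assumption borrowed from the COCO benchmark's convention for "small" objects; the helper names and the `(x_min, y_min, x_max, y_max)` box format are likewise hypothetical.

```python
def pixel_area(box):
    """Area in pixels of an axis-aligned box (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return max(0, x_max - x_min) * max(0, y_max - y_min)


def split_by_size(boxes, max_small_area=32 * 32):
    """Split boxes into (small, other) by pixel area.

    The default cutoff is an assumed, COCO-style threshold: a box counts
    as small when its area is strictly below 32 * 32 pixels.
    """
    small = [b for b in boxes if pixel_area(b) < max_small_area]
    other = [b for b in boxes if pixel_area(b) >= max_small_area]
    return small, other


boxes = [
    (10, 10, 25, 30),    # 15 x 20 = 300 px
    (0, 0, 200, 150),    # 200 x 150 = 30000 px
    (50, 60, 82, 92),    # 32 x 32 = 1024 px, exactly at the cutoff
]
small, other = split_by_size(boxes)
print(small)  # [(10, 10, 25, 30)]
```

Separating detections this way makes it possible to report accuracy on small objects on their own, which is where detectors tend to underperform.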