Related Research Articles

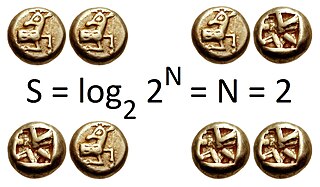

The bit is the most basic unit of information in computing and analog and digital communications. The name is a portmanteau of binary digit. The bit represents a logical state with one of two possible values. These values are most commonly represented as either "1" or "0", but other representations such as true/false, yes/no, on/off, or +/− are also widely used.

In computer science and information theory, a Huffman code is a particular type of optimal prefix code that is commonly used for lossless data compression. The process of finding or using such a code is Huffman coding, an algorithm developed by David A. Huffman while he was a Sc.D. student at MIT, and published in the 1952 paper "A Method for the Construction of Minimum-Redundancy Codes".
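As a rough illustration of how such a code can be built, the sketch below repeatedly merges the two least frequent subtrees using a heap. The function name `huffman_code` and the choice to take symbol frequencies from a sample string are assumptions made for this example, not details from Huffman's paper.

```python
import heapq
from collections import Counter


def huffman_code(text):
    """Return a dict mapping each symbol in `text` to a binary codeword."""
    freq = Counter(text)
    if not freq:
        return {}
    if len(freq) == 1:
        # Degenerate case: a single distinct symbol still needs one bit.
        return {next(iter(freq)): "0"}

    # Heap entries are (weight, tie_breaker, {symbol: partial codeword}).
    # The integer tie_breaker keeps Python from ever comparing the dicts.
    heap = [(w, i, {s: ""}) for i, (s, w) in enumerate(freq.items())]
    heapq.heapify(heap)
    next_id = len(heap)

    while len(heap) > 1:
        # Take the two lightest subtrees and merge them under a new node.
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (w1 + w2, next_id, merged))
        next_id += 1

    return heap[0][2]
```

Frequent symbols end up with short codewords and rare ones with long codewords, and no codeword is a prefix of another. The exact bit patterns depend on how ties are broken, but the total encoded length does not.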

Information theory is the mathematical study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field, in applied mathematics, is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering.

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$, the entropy is $H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x)$.
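A minimal sketch of the entropy of a discrete distribution, assuming the probabilities are supplied as a list that sums to 1. The function name `entropy` and the `base` parameter are illustrative choices; zero-probability outcomes are skipped, following the usual convention that they contribute nothing.

```python
import math


def entropy(probs, base=2):
    """Entropy of a discrete distribution, in units set by `base`.

    base=2 gives shannons (bits), base=math.e gives nats,
    base=10 gives hartleys.
    """
    # p * log(1/p) is the same as -p * log(p); outcomes with p == 0 are skipped.
    return sum(p * math.log(1 / p, base) for p in probs if p > 0)
```

A fair coin, `entropy([0.5, 0.5])`, comes out at one bit, while a biased coin such as `entropy([0.9, 0.1])` comes out at less than half a bit.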

In the field of data compression, Shannon–Fano coding, named after Claude Shannon and Robert Fano, is a name given to two different but related techniques for constructing a prefix code based on a set of symbols and their probabilities.

In information theory, the Shannon–Hartley theorem gives the maximum rate at which information can be transmitted over a communications channel of a specified bandwidth in the presence of noise. It is an application of the noisy-channel coding theorem to the archetypal case of a continuous-time analog communications channel subject to Gaussian noise. The theorem establishes Shannon's channel capacity for such a communication link: a bound on the maximum amount of error-free information per unit time that can be transmitted with a specified bandwidth in the presence of noise, assuming that the signal power is bounded and that the Gaussian noise process is characterized by a known power or power spectral density. The theorem is named after Claude Shannon and Ralph Hartley.
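The bound is usually written as C = B log2(1 + S/N), with C in bits per second, B the bandwidth in hertz, and S/N the linear signal-to-noise power ratio. The sketch below evaluates that formula; the function name `channel_capacity` and the decision to accept the SNR in decibels are assumptions made for this example.

```python
import math


def channel_capacity(bandwidth_hz, snr_db):
    """Shannon-Hartley capacity in bits per second.

    bandwidth_hz: channel bandwidth B in hertz.
    snr_db: signal-to-noise power ratio in decibels.
    """
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a plain power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)
```

For a 3 kHz channel with a 30 dB signal-to-noise ratio, `channel_capacity(3000, 30)` gives roughly 29.9 kbit/s.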

A communication channel refers either to a physical transmission medium such as a wire, or to a logical connection over a multiplexed medium such as a radio channel in telecommunications and computer networking. A channel is used for information transfer of, for example, a digital bit stream, from one or several senders to one or several receivers. A channel has a certain capacity for transmitting information, often measured by its bandwidth in Hz or its data rate in bits per second.

Channel capacity, in electrical engineering, computer science, and information theory, is the tight upper bound on the rate at which information can be reliably transmitted over a communication channel.

In information theory, the information content, self-information, surprisal, or Shannon information is a basic quantity derived from the probability of a particular event occurring from a random variable. It can be thought of as an alternative way of expressing probability, much like odds or log-odds, but which has particular mathematical advantages in the setting of information theory.
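As a small sketch, the information content of an event with probability p can be computed as log2(1/p) when measured in shannons (bits). The function name `information_content` is an illustrative choice.

```python
import math


def information_content(p):
    """Self-information, in shannons (bits), of an event with probability p."""
    if not 0 < p <= 1:
        raise ValueError("p must satisfy 0 < p <= 1")
    # log2(1/p) equals -log2(p); rarer events carry more information.
    return math.log2(1 / p)
```

A certain event (p = 1) carries no information, a fair coin flip (p = 1/2) carries one bit, and an event with probability 1/8 carries three bits.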

In information theory, Shannon's source coding theorem establishes the statistical limits to possible data compression for data whose source is an independent identically-distributed random variable, and the operational meaning of the Shannon entropy.

In information theory, redundancy measures the fractional difference between the entropy H(X) of an ensemble X and its maximum possible value $\log |\mathcal{X}|$, where $\mathcal{X}$ is the alphabet of X. Informally, it is the amount of wasted "space" used to transmit certain data. Data compression is a way to reduce or eliminate unwanted redundancy, while forward error correction is a way of adding desired redundancy for purposes of error detection and correction when communicating over a noisy channel of limited capacity.
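A minimal sketch of this measure, assuming a memoryless source whose symbol probabilities are given as a list, and taking the maximum entropy to be the logarithm of the alphabet size (reached by the uniform distribution). The function name `redundancy` is hypothetical.

```python
import math


def redundancy(probs):
    """Relative redundancy 1 - H(X) / log2(n) for an alphabet of n symbols."""
    n = len(probs)
    if n < 2:
        raise ValueError("need an alphabet of at least two symbols")
    # Entropy in bits; zero-probability symbols contribute nothing.
    h = sum(p * math.log2(1 / p) for p in probs if p > 0)
    return 1 - h / math.log2(n)
```

A uniform distribution has redundancy 0, since nothing is wasted, while a source that always emits the same symbol has redundancy 1.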

The natural unit of information, sometimes also nit or nepit, is a unit of information or information entropy, based on natural logarithms and powers of e, rather than the powers of 2 and base 2 logarithms, which define the shannon. This unit is also known by its unit symbol, the nat. One nat is the information content of an event when the probability of that event occurring is 1/e.

The mathematical expressions for thermodynamic entropy in the statistical thermodynamics formulation established by Ludwig Boltzmann and J. Willard Gibbs in the 1870s are similar to the information entropy by Claude Shannon and Ralph Hartley, developed in the 1940s.

In information theory, the noisy-channel coding theorem establishes that for any given degree of noise contamination of a communication channel, it is possible to communicate discrete data nearly error-free up to a computable maximum rate through the channel. This result was presented by Claude Shannon in 1948 and was based in part on earlier work and ideas of Harry Nyquist and Ralph Hartley.

A timeline of events related to information theory, quantum information theory and statistical physics, data compression, error correcting codes and related subjects.

The decisive event which established the discipline of information theory, and brought it to immediate worldwide attention, was the publication of Claude E. Shannon's classic paper "A Mathematical Theory of Communication" in the Bell System Technical Journal in July and October 1948.

The mathematical theory of information is based on probability theory and statistics, and measures information with several quantities of information. The choice of logarithmic base in the following formulae determines the unit of information entropy that is used. The most common unit of information is the bit, or more correctly the shannon, based on the binary logarithm. Although "bit" is more frequently used in place of "shannon", its name is not distinguished from the bit as used in data processing to refer to a binary value or stream regardless of its entropy. Other units include the nat, based on the natural logarithm, and the hartley, based on the base 10 or common logarithm.
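Because the units differ only in the base of the logarithm, converting between them is a matter of constant factors. The sketch below is one way to express that; the table `SHANNONS_PER_UNIT` and the function `convert` are names invented for this example.

```python
import math

# How many shannons (bits) one of each unit is worth.
SHANNONS_PER_UNIT = {
    "shannon": 1.0,
    "nat": 1 / math.log(2),    # log2(e), about 1.4427
    "hartley": math.log2(10),  # about 3.3219
}


def convert(value, from_unit, to_unit):
    """Convert an amount of information between shannons, nats and hartleys."""
    in_shannons = value * SHANNONS_PER_UNIT[from_unit]
    return in_shannons / SHANNONS_PER_UNIT[to_unit]
```

For instance, `convert(1, "hartley", "shannon")` is about 3.32, matching the fact that one decimal digit distinguishes ten equally likely values.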

ISO 80000 or IEC 80000, Quantities and units, is an international standard describing the International System of Quantities (ISQ). It was developed and promulgated jointly by the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC). It serves as a style guide for using physical quantities and units of measurement, formulas involving them, and their corresponding units, in scientific and educational documents for worldwide use. The ISO/IEC 80000 family of standards was completed with the publication of Part 1 in November 2009.

In computing and telecommunications, a unit of information is the capacity of some standard data storage system or communication channel, used to measure the capacities of other systems and channels. In information theory, units of information are also used to measure information contained in messages and the entropy of random variables.

The hartley, also called a ban, or a dit, is a logarithmic unit that measures information or entropy, based on base 10 logarithms and powers of 10. One hartley is the information content of an event if the probability of that event occurring is 1⁄10. It is therefore equal to the information contained in one decimal digit, assuming a priori equiprobability of each possible value. It is named after Ralph Hartley.

References

- Since the information associated with an event outcome that has a priori probability p, e.g. that a single given data bit takes the value 0, is given by H = −log p, and p can lie anywhere in the range 0 < p ≤ 1, the information content can lie anywhere in the range 0 ≤ H < ∞.

- Olivier Rioul (2018). "This is IT: A primer on Shannon's entropy and Information" (PDF). L'Information, Séminaire Poincaré. XXIII: 43–77. Retrieved 2021-05-23.

  The Système International d'unités recommends the use of the shannon (Sh) as the information unit in place of the bit to distinguish the amount of information from the quantity of data that may be used to represent this information. Thus, according to the SI standard, H(X) should actually be expressed in shannons. The entropy of one bit lies between 0 and 1 Sh.

- "IEC 80000-13:2008". International Organization for Standardization. Retrieved 21 July 2013.