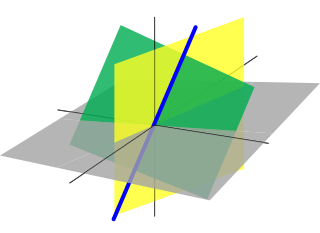

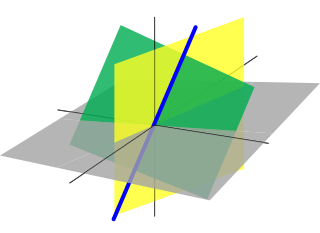

In mathematics, and more specifically in linear algebra, a linear map is a mapping between two vector spaces that preserves the operations of vector addition and scalar multiplication. The same names and the same definition are also used for the more general case of modules over a ring; see Module homomorphism.

Linear algebra is the branch of mathematics concerning linear equations such as:

In linear algebra, the rank of a matrix A is the dimension of the vector space generated by its columns. This corresponds to the maximal number of linearly independent columns of A. This, in turn, is identical to the dimension of the vector space spanned by its rows. Rank is thus a measure of the "nondegenerateness" of the system of linear equations and linear transformation encoded by A. There are multiple equivalent definitions of rank. A matrix's rank is one of its most fundamental characteristics.

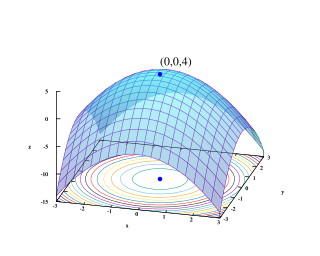

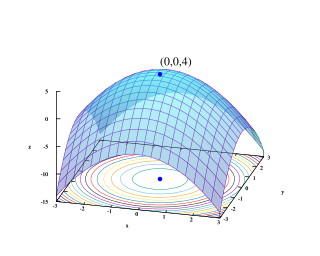

Quadratic programming (QP) is the process of solving certain mathematical optimization problems involving quadratic functions. Specifically, one seeks to optimize a multivariate quadratic function subject to linear constraints on the variables. Quadratic programming is a type of nonlinear programming.

Linear programming (LP), also called linear optimization, is a method to achieve the best outcome in a mathematical model whose requirements are represented by linear relationships. Linear programming is a special case of mathematical programming.

Mathematical optimization or mathematical programming is the selection of a best element, with regard to some criterion, from some set of available alternatives. Optimization problems of sorts arise in all quantitative disciplines from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries.

In mathematics, a system of linear equations is a collection of one or more linear equations involving the same variables.

In mathematical optimization, Dantzig's simplex algorithm is a popular algorithm for linear programming.

An integer programming problem is a mathematical optimization or feasibility program in which some or all of the variables are restricted to be integers. In many settings the term refers to integer linear programming (ILP), in which the objective function and the constraints are linear.

Multi-disciplinary design optimization (MDO) is a field of engineering that uses optimization methods to solve design problems incorporating a number of disciplines. It is also known as multidisciplinary system design optimization (MSDO), and Multidisciplinary Design Analysis and Optimization (MDAO).

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets. Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard.

In mathematical optimization, the Karush–Kuhn–Tucker (KKT) conditions, also known as the Kuhn–Tucker conditions, are first derivative tests for a solution in nonlinear programming to be optimal, provided that some regularity conditions are satisfied.

Farkas' lemma is a solvability theorem for a finite system of linear inequalities in mathematics. It was originally proven by the Hungarian mathematician Gyula Farkas. Farkas' lemma is the key result underpinning the linear programming duality and has played a central role in the development of mathematical optimization. It is used amongst other things in the proof of the Karush–Kuhn–Tucker theorem in nonlinear programming. Remarkably, in the area of the foundations of quantum theory, the lemma also underlies the complete set of Bell inequalities in the form of necessary and sufficient conditions for the existence of a local hidden-variable theory, given data from any specific set of measurements.

In mathematical optimization, constrained optimization is the process of optimizing an objective function with respect to some variables in the presence of constraints on those variables. The objective function is either a cost function or energy function, which is to be minimized, or a reward function or utility function, which is to be maximized. Constraints can be either hard constraints, which set conditions for the variables that are required to be satisfied, or soft constraints, which have some variable values that are penalized in the objective function if, and based on the extent that, the conditions on the variables are not satisfied.

In an optimization problem, a slack variable is a variable that is added to an inequality constraint to transform it into an equality. Introducing a slack variable replaces an inequality constraint with an equality constraint and a non-negativity constraint on the slack variable.

In mathematics, a constraint is a condition of an optimization problem that the solution must satisfy. There are several types of constraints—primarily equality constraints, inequality constraints, and integer constraints. The set of candidate solutions that satisfy all constraints is called the feasible set.

Numerical continuation is a method of computing approximate solutions of a system of parameterized nonlinear equations,

The eight-point algorithm is an algorithm used in computer vision to estimate the essential matrix or the fundamental matrix related to a stereo camera pair from a set of corresponding image points. It was introduced by Christopher Longuet-Higgins in 1981 for the case of the essential matrix. In theory, this algorithm can be used also for the fundamental matrix, but in practice the normalized eight-point algorithm, described by Richard Hartley in 1997, is better suited for this case.

In constrained least squares one solves a linear least squares problem with an additional constraint on the solution. I.e., the unconstrained equation must be fit as closely as possible while ensuring that some other property of is maintained.

In the theory of linear programming, a basic feasible solution (BFS) is a solution with a minimal set of non-zero variables. Geometrically, each BFS corresponds to a corner of the polyhedron of feasible solutions. If there exists an optimal solution, then there exists an optimal BFS. Hence, to find an optimal solution, it is sufficient to consider the BFS-s. This fact is used by the simplex algorithm, which essentially travels from some BFS to another until an optimal one is found.