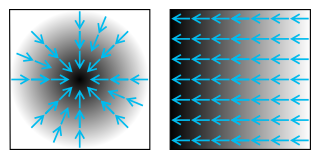

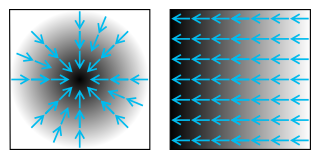

In vector calculus, the gradient of a scalar-valued differentiable function f of several variables is the vector field ∇f whose value at a point p gives the direction and the rate of fastest increase. The gradient transforms like a vector under change of basis of the space of variables of f. If the gradient of a function is non-zero at a point p, the direction of the gradient is the direction in which the function increases most quickly from p, and the magnitude of the gradient is the rate of increase in that direction, the greatest absolute directional derivative. Further, a point where the gradient is the zero vector is known as a stationary point. The gradient thus plays a fundamental role in optimization theory, where it is used to minimize a function by gradient descent. In coordinate-free terms, the gradient of a function may be defined by:

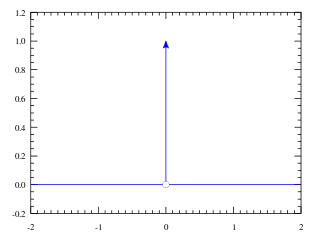

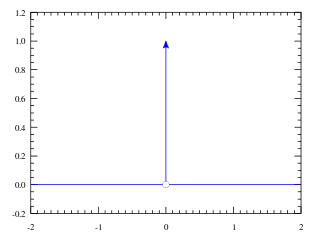
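Because the gradient points in the direction of fastest increase, stepping against it decreases the function, which is the idea behind gradient descent. The following is a minimal sketch, not a production optimizer: the names `numerical_gradient`, `gradient_descent` and `bowl`, the step size, and the central-difference approximation are all illustrative assumptions.

```python
def numerical_gradient(f, p, h=1e-6):
    """Approximate the gradient of f at the point p with central differences."""
    grad = []
    for i in range(len(p)):
        forward = list(p)
        backward = list(p)
        forward[i] += h
        backward[i] -= h
        grad.append((f(forward) - f(backward)) / (2 * h))
    return grad


def gradient_descent(f, start, step=0.1, iterations=200):
    """Repeatedly move against the gradient, the direction of fastest decrease."""
    p = list(start)
    for _ in range(iterations):
        g = numerical_gradient(f, p)
        p = [x - step * gx for x, gx in zip(p, g)]
    return p


def bowl(p):
    # Hypothetical test function whose only stationary point is (1, -2).
    x, y = p
    return (x - 1) ** 2 + 2 * (y + 2) ** 2


minimum = gradient_descent(bowl, [0.0, 0.0])
```

For this quadratic test function the iterates approach the stationary point (1, -2), where the gradient is the zero vector.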

In mathematical analysis, the Dirac delta function, also known as the unit impulse, is a generalized function on the real numbers, whose value is zero everywhere except at zero, and whose integral over the entire real line is equal to one. Since there is no function having this property, to model the delta "function" rigorously involves the use of limits or, as is common in mathematics, measure theory and the theory of distributions.
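The limiting construction can be illustrated numerically. The sketch below assumes one common choice of approximating family, Gaussians of shrinking width `eps`, and integrates each against a smooth test function; as `eps` shrinks the result approaches the value of the test function at zero. The helper names and the midpoint rule are illustrative choices.

```python
import math


def gaussian(x, eps):
    """Normalized Gaussian of width eps; its integral over the real line is one."""
    return math.exp(-x * x / (2 * eps * eps)) / (eps * math.sqrt(2 * math.pi))


def smeared_value(f, eps, n=20000):
    """Midpoint-rule estimate of the integral of gaussian(x, eps) * f(x).

    The integral is truncated to [-10 * eps, 10 * eps], where almost all of the
    Gaussian's mass lies.
    """
    a, b = -10 * eps, 10 * eps
    dx = (b - a) / n
    total = 0.0
    for i in range(n):
        x = a + (i + 0.5) * dx
        total += gaussian(x, eps) * f(x)
    return total * dx


# For f = cos, the values should approach cos(0) = 1 as eps decreases.
approximations = [smeared_value(math.cos, eps) for eps in (1.0, 0.1, 0.01)]
```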

In calculus, Taylor's theorem gives an approximation of a k-times differentiable function around a given point by a polynomial of degree k, called the k-th-order Taylor polynomial. For a smooth function, the Taylor polynomial is the truncation at the order k of the Taylor series of the function. The first-order Taylor polynomial is the linear approximation of the function, and the second-order Taylor polynomial is often referred to as the quadratic approximation. There are several versions of Taylor's theorem, some giving explicit estimates of the approximation error of the function by its Taylor polynomial.
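As a small illustration, the sketch below evaluates Taylor polynomials of the exponential function and records how the approximation error shrinks as the order grows. The function name `taylor_exp` and the choice of the exponential (whose derivatives are all equal to itself) are assumptions made for the example.

```python
import math


def taylor_exp(x, k, a=0.0):
    """k-th-order Taylor polynomial of exp around a, evaluated at x."""
    # Every derivative of exp at a equals exp(a), so the n-th coefficient
    # is exp(a) / n!.
    return sum(math.exp(a) * (x - a) ** n / math.factorial(n) for n in range(k + 1))


# Approximation error at x = 0.5 for the linear, quadratic and 4th-order polynomials.
errors = [abs(math.exp(0.5) - taylor_exp(0.5, k)) for k in (1, 2, 4)]
```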

In physics, engineering and mathematics, the Fourier transform (FT) is an integral transform that converts a function into a form that describes the frequencies present in the original function. The output of the transform is a complex-valued function of frequency. The term Fourier transform refers to both this complex-valued function and the mathematical operation. When a distinction needs to be made, the Fourier transform is sometimes called the frequency domain representation of the original function. The Fourier transform is analogous to decomposing the sound of a musical chord into the intensities of its constituent pitches.
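The chord analogy can be sketched with the discrete Fourier transform, a sampled counterpart of the integral transform described above (a substitution made here for illustration). The signal, the sample count and the naive `dft` helper are all hypothetical; practical code would use a fast Fourier transform library instead.

```python
import cmath
import math


def dft(samples):
    """Naive O(n^2) discrete Fourier transform of a list of samples."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


n = 64
# A "chord" made of two pure tones: 3 and 10 cycles per window.
signal = [
    math.sin(2 * math.pi * 3 * t / n) + 0.5 * math.sin(2 * math.pi * 10 * t / n)
    for t in range(n)
]

# The transform is complex-valued; magnitudes give the intensity per frequency.
magnitudes = [abs(c) for c in dft(signal)]
half = magnitudes[: n // 2]  # the upper half mirrors the lower half for real input
dominant = sorted(range(len(half)), key=lambda k: half[k], reverse=True)[:2]
```

The two largest magnitudes sit at the frequency indices of the two tones, with the louder tone first.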

In mathematics, the Laplace operator or Laplacian is a differential operator given by the divergence of the gradient of a scalar function on Euclidean space. It is usually denoted by the symbols ∇·∇, ∇² (where ∇ is the nabla operator), or Δ. In a Cartesian coordinate system, the Laplacian is given by the sum of second partial derivatives of the function with respect to each independent variable. In other coordinate systems, such as cylindrical and spherical coordinates, the Laplacian also has a useful form. Informally, the Laplacian Δf(p) of a function f at a point p measures by how much the average value of f over small spheres or balls centered at p deviates from f(p).
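The Cartesian formula can be approximated with finite differences. In the sketch below (the function names and the step `h` are illustrative assumptions), each second partial derivative is estimated from the two neighbours of p along one axis, so the result is also a scaled difference between the average of f over those neighbours and f(p), in line with the informal description above.

```python
def laplacian(f, p, h=1e-3):
    """Central-difference estimate of the sum of second partial derivatives of f at p."""
    center = f(p)
    total = 0.0
    for i in range(len(p)):
        forward = list(p)
        backward = list(p)
        forward[i] += h
        backward[i] -= h
        total += (f(forward) - 2.0 * center + f(backward)) / (h * h)
    return total


def paraboloid(p):
    # x^2 + y^2 has Laplacian 2 + 2 = 4 everywhere.
    return p[0] ** 2 + p[1] ** 2


def saddle(p):
    # x^2 - y^2 is harmonic: its Laplacian is 2 - 2 = 0.
    return p[0] ** 2 - p[1] ** 2


lap_paraboloid = laplacian(paraboloid, [0.3, -0.7])
lap_saddle = laplacian(saddle, [0.3, -0.7])
```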

In mathematics, a differential operator is an operator defined as a function of the differentiation operator. It is helpful, as a matter of notation first, to consider differentiation as an abstract operation that accepts a function and returns another function.

In differential geometry, the Ricci curvature tensor, named after Gregorio Ricci-Curbastro, is a geometric object which is determined by a choice of Riemannian or pseudo-Riemannian metric on a manifold. It can be considered, broadly, as a measure of the degree to which the geometry of a given metric tensor differs locally from that of ordinary Euclidean space or pseudo-Euclidean space.

In complex analysis, the Hardy spaces Hp are certain spaces of holomorphic functions on the unit disk or upper half plane. They were introduced by Frigyes Riesz, who named them after G. H. Hardy, because of the paper. In real analysis Hardy spaces are certain spaces of distributions on the real line, which are boundary values of the holomorphic functions of the complex Hardy spaces, and are related to the Lp spaces of functional analysis. For 1 ≤ p < ∞ these real Hardy spaces Hp are certain subsets of Lp, while for p < 1 the Lp spaces have some undesirable properties, and the Hardy spaces are much better behaved.

In mathematics, the Legendre transformation, first introduced by Adrien-Marie Legendre in 1787 when studying the minimal surface problem, is an involutive transformation on real-valued functions that are convex on a real variable. Specifically, if a real-valued multivariable function is convex on one of its independent real variables, then the Legendre transform with respect to this variable is applicable to the function.
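A numerical sketch can make the involutive property concrete. It assumes the standard convex-conjugate form of the transform, f*(p) = sup over x of (p·x − f(x)), which is not spelled out in the text above, and replaces the supremum by a maximum over a finite grid; the grid bounds and helper names are illustrative.

```python
def legendre_transform(f, grid):
    """Return p -> max over the grid of (p * x - f(x)), a discrete stand-in for the supremum."""
    def transformed(p):
        return max(p * x - f(x) for x in grid)
    return transformed


# Grid on [-5, 5] with spacing 0.01 (an arbitrary choice for this example).
grid = [i / 100 for i in range(-500, 501)]


def square(x):
    # Convex test function f(x) = x^2, whose transform is p^2 / 4.
    return x * x


conjugate = legendre_transform(square, grid)
double_conjugate = legendre_transform(conjugate, grid)

value_at_3 = conjugate(3.0)        # analytic value: 3^2 / 4 = 2.25
recovered = double_conjugate(1.0)  # applying the transform twice should give back f(1) = 1
```

Applying the transform twice returns the original function up to grid error, which is what "involutive" means here.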

In mathematics, the Radon transform is the integral transform which takes a function f defined on the plane to a function Rf defined on the (two-dimensional) space of lines in the plane, whose value at a particular line is equal to the line integral of the function over that line. The transform was introduced in 1917 by Johann Radon, who also provided a formula for the inverse transform. Radon further included formulas for the transform in three dimensions, in which the integral is taken over planes. It was later generalized to higher-dimensional Euclidean spaces and more broadly in the context of integral geometry. The complex analogue of the Radon transform is known as the Penrose transform. The Radon transform is widely applicable to tomography, the creation of an image from the projection data associated with cross-sectional scans of an object.
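The definition can be written out as follows. The parametrization of a line by an angle α and a signed distance s from the origin is one common convention and is an assumption here, as is the Gaussian worked example.

```latex
% Value of the Radon transform on a line L in the plane
Rf(L) = \int_L f(\mathbf{x})\,|d\mathbf{x}|

% With L parametrized by angle \alpha and signed distance s from the origin
Rf(\alpha, s) = \int_{-\infty}^{\infty}
    f\bigl(s\cos\alpha - t\sin\alpha,\; s\sin\alpha + t\cos\alpha\bigr)\,dt

% Worked example: for f(x, y) = e^{-(x^2 + y^2)}, every point on the line
% satisfies x^2 + y^2 = s^2 + t^2, so
Rf(\alpha, s) = e^{-s^2}\int_{-\infty}^{\infty} e^{-t^2}\,dt = \sqrt{\pi}\,e^{-s^2}
```

The example depends only on s, as expected for a rotationally symmetric function.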

In physics and mathematics, supermanifolds are generalizations of the manifold concept based on ideas coming from supersymmetry. Several definitions are in use, some of which are described below.

In physics, the Hamilton–Jacobi equation, named after William Rowan Hamilton and Carl Gustav Jacob Jacobi, is an alternative formulation of classical mechanics, equivalent to other formulations such as Newton's laws of motion, Lagrangian mechanics and Hamiltonian mechanics.

In mathematics, the Riemann–Lebesgue lemma, named after Bernhard Riemann and Henri Lebesgue, states that the Fourier transform or Laplace transform of an L1 function vanishes at infinity. It is of importance in harmonic analysis and asymptotic analysis.
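A short worked example shows the decay explicitly. It assumes the convention in which the Fourier transform of f is the integral of f(x)e^{-ixξ} over the real line, and takes f to be the indicator function of the interval [0, 1].

```latex
\hat{f}(\xi) = \int_{-\infty}^{\infty} f(x)\,e^{-ix\xi}\,dx
             = \int_0^1 e^{-ix\xi}\,dx
             = \frac{1 - e^{-i\xi}}{i\xi}, \qquad \xi \neq 0

\bigl|\hat{f}(\xi)\bigr| = \frac{2\,\lvert \sin(\xi/2) \rvert}{\lvert \xi \rvert}
                         \le \frac{2}{\lvert \xi \rvert}
                         \longrightarrow 0 \quad \text{as } \lvert \xi \rvert \to \infty
```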

In mathematics, in particular in algebraic geometry and differential geometry, Dolbeault cohomology (named after Pierre Dolbeault) is an analog of de Rham cohomology for complex manifolds. Let M be a complex manifold. Then the Dolbeault cohomology groups depend on a pair of integers p and q and are realized as a subquotient of the space of complex differential forms of degree (p,q).

In the fields of actuarial science and financial economics there are a number of ways that risk can be defined; to clarify the concept, theoreticians have described a number of properties that a risk measure might or might not have. A coherent risk measure is a function that satisfies properties of monotonicity, sub-additivity, homogeneity, and translational invariance.
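The four properties are commonly stated as below. The notation is an assumption made for this sketch: ρ denotes the risk measure, X and Y are random variables representing portfolio values (gains rather than losses), and a is a deterministic cash amount. Sign conventions differ between authors who model gains and those who model losses.

```latex
\begin{align*}
\text{Monotonicity:} \quad & X \le Y \ \text{almost surely} \implies \rho(X) \ge \rho(Y) \\
\text{Sub-additivity:} \quad & \rho(X + Y) \le \rho(X) + \rho(Y) \\
\text{Positive homogeneity:} \quad & \rho(\lambda X) = \lambda\,\rho(X) \quad \text{for all } \lambda \ge 0 \\
\text{Translation invariance:} \quad & \rho(X + a) = \rho(X) - a
\end{align*}
```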

In probability theory, a superprocess is a stochastic process that is usually constructed as a special limit of near-critical branching diffusions.

In mathematics, the class of Muckenhoupt weights Ap consists of those weights ω for which the Hardy–Littlewood maximal operator is bounded on Lp(dω). Specifically, we consider functions f on Rn and their associated Hardy–Littlewood maximal functions M(f).
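For reference, the Hardy–Littlewood maximal function is commonly written as follows, where B_r(x) denotes the ball in Rn of radius r centered at x. Normalizing by r^n rather than by the volume of the ball is one convention and is an assumption here; the two differ only by a constant factor.

```latex
M(f)(x) = \sup_{r > 0} \frac{1}{r^n} \int_{B_r(x)} \lvert f(y) \rvert \, dy
```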

In mathematics, the spectral theory of ordinary differential equations is the part of spectral theory concerned with the determination of the spectrum and eigenfunction expansion associated with a linear ordinary differential equation. In his dissertation, Hermann Weyl generalized the classical Sturm–Liouville theory on a finite closed interval to second order differential operators with singularities at the endpoints of the interval, possibly semi-infinite or infinite. Unlike the classical case, the spectrum may no longer consist of just a countable set of eigenvalues, but may also contain a continuous part. In this case the eigenfunction expansion involves an integral over the continuous part with respect to a spectral measure, given by the Titchmarsh–Kodaira formula. The theory was put in its final simplified form for singular differential equations of even degree by Kodaira and others, using von Neumann's spectral theorem. It has had important applications in quantum mechanics, operator theory and harmonic analysis on semisimple Lie groups.

In the mathematical field of geometric measure theory, the coarea formula expresses the integral of a function over an open set in Euclidean space in terms of integrals over the level sets of another function. A special case is Fubini's theorem, which says under suitable hypotheses that the integral of a function over the region enclosed by a rectangular box can be written as the iterated integral over the level sets of the coordinate functions. Another special case is integration in spherical coordinates, in which the integral of a function on Rn is related to the integral of the function over spherical shells: level sets of the radial function. The formula plays a decisive role in the modern study of isoperimetric problems.
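In symbols, one standard statement reads as follows. The notation is an assumption for this sketch: Ω is an open subset of Rn, u is a real-valued Lipschitz function on Ω, g is an integrable function, and H^{n-1} is the (n−1)-dimensional Hausdorff measure.

```latex
\int_{\Omega} g(x)\,\lvert \nabla u(x) \rvert \, dx
  = \int_{-\infty}^{\infty} \left( \int_{u^{-1}(t)} g(x)\, dH^{n-1}(x) \right) dt

% Special case u(x) = |x|, for which |\nabla u| = 1 away from the origin:
\int_{\mathbb{R}^n} g(x)\,dx
  = \int_0^{\infty} \left( \int_{\{\lvert x \rvert = r\}} g \, dS \right) dr
```

The second line is the integration in spherical shells mentioned above.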

In mathematics, calculus on Euclidean space is a generalization of calculus of functions in one or several variables to calculus of functions on Euclidean space as well as a finite-dimensional real vector space. This calculus is also known as advanced calculus, especially in the United States. It is similar to multivariable calculus but is somewhat more sophisticated in that it uses linear algebra more extensively and covers some concepts from differential geometry such as differential forms and Stokes' formula in terms of differential forms. This extensive use of linear algebra also allows a natural generalization of multivariable calculus to calculus on Banach spaces or topological vector spaces.