In number theory, the prime number theorem (PNT) describes the asymptotic distribution of the prime numbers among the positive integers. It formalizes the intuitive idea that primes become less common as they become larger by precisely quantifying the rate at which this occurs. The theorem was proved independently by Jacques Hadamard and Charles Jean de la Vallée Poussin in 1896 using ideas introduced by Bernhard Riemann.
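The rate mentioned above is usually stated as π(x) ~ x / ln x, where π(x) counts the primes up to x. The following is a minimal numerical sketch of that statement, not a proof; the function name `prime_count` and the chosen sample points are illustrative assumptions.

```python
import math


def prime_count(limit: int) -> int:
    """Count the primes <= limit with a sieve of Eratosthenes."""
    if limit < 2:
        return 0
    sieve = bytearray([1]) * (limit + 1)
    sieve[0] = sieve[1] = 0
    for p in range(2, math.isqrt(limit) + 1):
        if sieve[p]:
            # Cross out every multiple of p starting at p*p.
            sieve[p * p :: p] = bytearray(len(range(p * p, limit + 1, p)))
    return sum(sieve)


# Ratio of the true count to the PNT approximation x / ln x.
ratios = []
for x in (10**3, 10**4, 10**5, 10**6):
    pi_x = prime_count(x)
    ratios.append(pi_x / (x / math.log(x)))
    print(x, pi_x, round(ratios[-1], 4))
```

On these sample points the ratio stays above 1 and shrinks slowly, which is consistent with the theorem's claim that it tends to 1.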

In probability theory, the central limit theorem (CLT) establishes that, in some situations, when independent random variables are added, their properly normalized sum tends toward a normal distribution even if the original variables themselves are not normally distributed. The theorem is a key concept in probability theory because it implies that probabilistic and statistical methods that work for normal distributions can be applicable to many problems involving other types of distributions.
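As a rough illustration of the normalization described above, the sketch below sums uniform random variables (which are not normal), centers and scales the sum, and compares how often it lands within one standard deviation against the standard normal value. The function names, sample sizes, and seed are assumptions chosen for the example.

```python
import math
import random


def normalized_sum(n: int, rng: random.Random) -> float:
    """Sum n Uniform(0, 1) draws, then center and scale to mean 0, variance 1."""
    total = sum(rng.random() for _ in range(n))
    # Uniform(0, 1) has mean 1/2 and variance 1/12.
    return (total - n * 0.5) / math.sqrt(n / 12.0)


def fraction_within_one_sd(n: int, trials: int, seed: int = 0) -> float:
    """Empirical probability that the normalized sum lies in [-1, 1]."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(trials) if abs(normalized_sum(n, rng)) <= 1.0)
    return hits / trials


# For a standard normal Z, P(|Z| <= 1) = erf(1 / sqrt(2)).
normal_value = math.erf(1 / math.sqrt(2))
print(round(normal_value, 4), fraction_within_one_sd(n=30, trials=20_000))
```

A single summary statistic like this is only a sanity check; comparing full histograms or empirical distribution functions would give a fuller picture.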

In mathematics, the limit inferior and limit superior of a sequence can be thought of as limiting bounds on the sequence. They can be thought of in a similar fashion for a function. For a set, they are the infimum and supremum of the set's limit points, respectively. In general, when there are multiple objects around which a sequence, function, or set accumulates, the inferior and superior limits extract the smallest and largest of them; the type of object and the measure of size are context-dependent, but the notion of extreme limits is invariant. Limit inferior is also called infimum limit, limit infimum, liminf, inferior limit, lower limit, or inner limit; limit superior is also known as supremum limit, limit supremum, limsup, superior limit, upper limit, or outer limit.

In probability theory, there exist several different notions of convergence of random variables. The convergence of sequences of random variables to some limit random variable is an important concept in probability theory and in its applications to statistics and stochastic processes. The same concepts are known in more general mathematics as stochastic convergence, and they formalize the idea that a sequence of essentially random or unpredictable events can sometimes be expected to settle down into a behavior that is essentially unchanging when items far enough into the sequence are studied. The different possible notions of convergence relate to how such a behavior can be characterized: two readily understood behaviors are that the sequence eventually takes a constant value, and that values in the sequence continue to change but can be described by an unchanging probability distribution.

In mathematics, an infinite series of numbers is said to converge absolutely if the sum of the absolute values of the summands is finite. More precisely, a real or complex series $\sum_{n=0}^{\infty} a_n$ is said to converge absolutely if $\sum_{n=0}^{\infty} |a_n| = L$ for some real number $L$. Similarly, an improper integral of a function, $\int_0^{\infty} f(x)\,dx$, is said to converge absolutely if the integral of the absolute value of the integrand is finite, that is, if $\int_0^{\infty} |f(x)|\,dx = L$.

In mathematics, Abel's theorem for power series relates a limit of a power series to the sum of its coefficients. It is named after Norwegian mathematician Niels Henrik Abel.
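In its usual form, the theorem says that if the series of coefficients Σ a_k converges, then the power series Σ a_k x^k tends to that sum as x approaches 1 from below. The sketch below checks this numerically for the coefficients a_k = (-1)^(k+1) / k, whose sum is ln 2; the function name and the truncation length are assumptions made for the example.

```python
import math


def alternating_power_series(x: float, terms: int = 50_000) -> float:
    """Partial sum of sum_{k>=1} (-1)**(k+1) * x**k / k, which equals ln(1 + x) for |x| < 1."""
    total = 0.0
    power = 1.0
    for k in range(1, terms + 1):
        power *= x  # power now holds x**k
        total += (power if k % 2 == 1 else -power) / k
    return total


# As x increases toward 1, the values approach ln 2, the sum of the coefficients.
for x in (0.9, 0.99, 0.999):
    print(x, alternating_power_series(x), math.log(2))
```

The truncation at 50,000 terms is harmless here because x^k is negligible by then for each sampled x; values of x closer to 1 would need more terms.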

In information theory, the typical set is a set of sequences whose probability is close to two raised to the negative power of the entropy of their source distribution. That this set has total probability close to one is a consequence of the asymptotic equipartition property (AEP), which is a kind of law of large numbers. The notion of typicality is only concerned with the probability of a sequence and not the actual sequence itself.
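To make this concrete, the sketch below assumes a Bernoulli(p) source and the weak-typicality convention: a length-n sequence x is typical when -(1/n) log2 P(x) is within ε of the entropy H in bits, so that P(x) is close to 2^(-nH). The source parameter, the tolerance, and the function names are assumptions chosen for illustration.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy in bits of a Bernoulli(p) source, for 0 < p < 1."""
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def typical_set_probability(n: int, p: float, eps: float) -> float:
    """Total probability of the weakly typical length-n sequences."""
    h = binary_entropy(p)
    total = 0.0
    for k in range(n + 1):  # k = number of ones in the sequence
        # Every sequence with k ones has the same probability p**k * (1-p)**(n-k).
        per_symbol_bits = -(k * math.log2(p) + (n - k) * math.log2(1 - p)) / n
        if abs(per_symbol_bits - h) <= eps:
            total += math.comb(n, k) * p**k * (1 - p) ** (n - k)
    return total


for n in (25, 100, 400):
    print(n, round(typical_set_probability(n, p=0.3, eps=0.1), 4))
```

Grouping sequences by their number of ones reflects the last point above: typicality depends only on a sequence's probability, not on which particular sequence it is.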

In mathematics, the limit of a sequence is the value that the terms of a sequence "tend to". If such a limit exists, the sequence is called convergent. A sequence which does not converge is said to be divergent. The limit of a sequence is said to be the fundamental notion on which the whole of analysis ultimately rests.

In probability theory, the law of the iterated logarithm describes the magnitude of the fluctuations of a random walk. The original statement of the law of the iterated logarithm is due to A. Y. Khinchin (1924). Another statement was given by A. N. Kolmogorov in 1929.

In information theory, Shannon's source coding theorem establishes the limits to possible data compression, and the operational meaning of the Shannon entropy.

In mathematics, a moment problem arises as the result of trying to invert the mapping that takes a measure μ to the sequence of moments $m_n = \int_{-\infty}^{\infty} x^n \, d\mu(x)$.

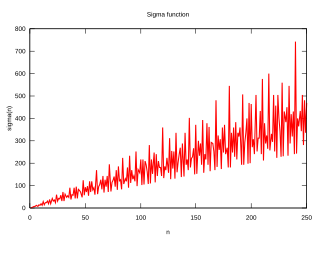

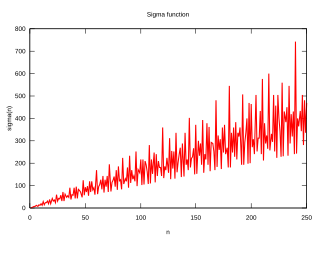

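In mathematics, a colossally abundant number is a natural number that, in a particular, rigorous sense, has many divisors. Formally, a number n is colossally abundant if and only if there is an ε > 0 such that for all k > 1, $\frac{\sigma(n)}{n^{1+\varepsilon}} \geq \frac{\sigma(k)}{k^{1+\varepsilon}}$, where σ denotes the sum-of-divisors function.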

The Cauchy convergence test is a method used to test infinite series for convergence. It relies on bounding sums of terms in the series. This convergence criterion is named after Augustin-Louis Cauchy, who published it in his textbook Cours d'Analyse (1821).
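In its standard form, the criterion says that a series Σ a_k converges if and only if, for every ε > 0, there is an N such that |a_(n+1) + ... + a_(n+p)| < ε for all n > N and all p ≥ 1. The sketch below is a numerical illustration only, under the assumption that comparing the block of terms from n+1 to 2n is informative: for the harmonic series these blocks never drop below 1/2, so the criterion fails, while for Σ 1/k² they shrink below 1/n. The helper name `block_sum` is hypothetical.

```python
def block_sum(term, n: int, p: int) -> float:
    """Return a_(n+1) + ... + a_(n+p) for the series with k-th term term(k)."""
    return sum(term(k) for k in range(n + 1, n + p + 1))


def harmonic(k: int) -> float:
    return 1.0 / k


def inverse_square(k: int) -> float:
    return 1.0 / (k * k)


for n in (10, 100, 1000):
    # Blocks of length n starting just after index n.
    print(n, block_sum(harmonic, n, n), block_sum(inverse_square, n, n))
```

A finite computation like this cannot establish convergence, since the criterion quantifies over all n and p; it only shows the kind of bound the test asks for.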

In mathematics, uniform integrability is an important concept in real analysis, functional analysis and measure theory, and plays a vital role in the theory of martingales. The definition used in measure theory is closely related to, but not identical to, the definition typically used in probability.

In game theory, a stochastic game, introduced by Lloyd Shapley in the early 1950s, is a dynamic game with probabilistic transitions played by one or more players. The game is played in a sequence of stages. At the beginning of each stage the game is in some state. The players select actions and each player receives a payoff that depends on the current state and the chosen actions. The game then moves to a new random state whose distribution depends on the previous state and the actions chosen by the players. The procedure is repeated at the new state and play continues for a finite or infinite number of stages. The total payoff to a player is often taken to be the discounted sum of the stage payoffs or the limit inferior of the averages of the stage payoffs.
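The two payoff criteria named at the end of this paragraph can be sketched for a single player's stream of stage payoffs. This is a minimal sketch under stated assumptions: the discounted sum is taken without a normalizing factor (some texts multiply by one minus the discount factor), and the limit inferior of the averages is only approximated by the minimum over the tail of a finite run. All function names are hypothetical.

```python
from itertools import accumulate


def discounted_payoff(stage_payoffs, discount: float) -> float:
    """Sum of discount**t * r_t over stages t = 0, 1, 2, ..."""
    return sum(r * discount**t for t, r in enumerate(stage_payoffs))


def running_averages(stage_payoffs):
    """Average of the first t + 1 stage payoffs, for each stage t."""
    return [s / (t + 1) for t, s in enumerate(accumulate(stage_payoffs))]


def tail_min_of_averages(stage_payoffs, burn_in: int) -> float:
    """Finite-horizon stand-in for the liminf of the running averages."""
    return min(running_averages(stage_payoffs)[burn_in:])


# Example stream: payoff 1 on even stages, 0 on odd stages.
payoffs = [1, 0] * 500
print(discounted_payoff(payoffs, 0.9), tail_min_of_averages(payoffs, burn_in=500))
```

For this alternating stream the running averages settle at 1/2, so the tail minimum agrees with the true limit inferior; in general a finite prefix gives only an estimate.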

In probability theory, a probability distribution is infinitely divisible if it can be expressed as the probability distribution of the sum of an arbitrary number of independent and identically distributed random variables. The characteristic function of any infinitely divisible distribution is then called an infinitely divisible characteristic function.

In probability theory, Lindeberg's condition is a sufficient condition for the central limit theorem (CLT) to hold for a sequence of independent random variables. Unlike the classical CLT, which requires that the random variables in question have finite variance and be both independent and identically distributed, Lindeberg's CLT only requires that they have finite variance, satisfy Lindeberg's condition, and be independent. It is named after the Finnish mathematician Jarl Waldemar Lindeberg.

The order in probability notation is used in probability theory and statistical theory in direct parallel to the big-O notation that is standard in mathematics. Where the big-O notation deals with the convergence of sequences or sets of ordinary numbers, the order in probability notation deals with convergence of sets of random variables, where convergence is in the sense of convergence in probability.