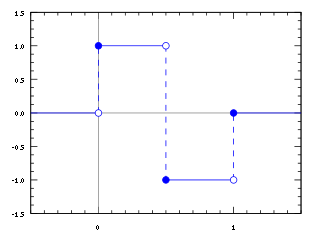

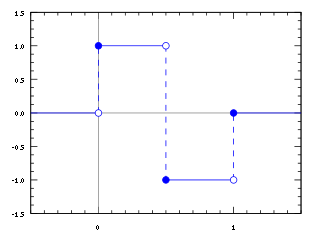

In mathematics, the Haar wavelet is a sequence of rescaled "square-shaped" functions which together form a wavelet family or basis. Wavelet analysis is similar to Fourier analysis in that it allows a target function over an interval to be represented in terms of an orthonormal basis. The Haar sequence is now recognised as the first known wavelet basis and is extensively used as a teaching example.

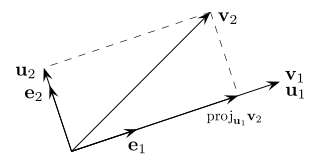

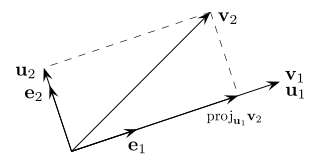

In mathematics, particularly linear algebra and numerical analysis, the Gram–Schmidt process is a method for orthonormalizing a set of vectors in an inner product space, most commonly the Euclidean space Rn equipped with the standard inner product. The Gram–Schmidt process takes a finite, linearly independent set of vectors S = {v1, ..., vk} for k ≤ n and generates an orthogonal set S′ = {u1, ..., uk} that spans the same k-dimensional subspace of Rn as S.

In linear algebra, an orthogonal matrix, or orthonormal matrix, is a real square matrix whose columns and rows are orthonormal vectors.

In linear algebra, a Toeplitz matrix or diagonal-constant matrix, named after Otto Toeplitz, is a matrix in which each descending diagonal from left to right is constant. For instance, the following matrix is a Toeplitz matrix:

In mathematics, the orthogonal group in dimension , denoted , is the group of distance-preserving transformations of a Euclidean space of dimension that preserve a fixed point, where the group operation is given by composing transformations. The orthogonal group is sometimes called the general orthogonal group, by analogy with the general linear group. Equivalently, it is the group of orthogonal matrices, where the group operation is given by matrix multiplication. The orthogonal group is an algebraic group and a Lie group. It is compact.

In mathematics, particularly in matrix theory, a permutation matrix is a square binary matrix that has exactly one entry of 1 in each row and each column and 0s elsewhere. Each such matrix, say P, represents a permutation of m elements and, when used to multiply another matrix, say A, results in permuting the rows or columns of the matrix A.

In linear algebra, a Hankel matrix, named after Hermann Hankel, is a square matrix in which each ascending skew-diagonal from left to right is constant, e.g.:

In mathematics and signal processing, the Hilbert transform is a specific singular integral that takes a function, u(t) of a real variable and produces another function of a real variable H(u)(t). The Hilbert transform is given by the Cauchy principal value of the convolution with the function (see § Definition). The Hilbert transform has a particularly simple representation in the frequency domain: It imparts a phase shift of ±90° (π⁄2 radians) to every frequency component of a function, the sign of the shift depending on the sign of the frequency (see § Relationship with the Fourier transform). The Hilbert transform is important in signal processing, where it is a component of the analytic representation of a real-valued signal u(t). The Hilbert transform was first introduced by David Hilbert in this setting, to solve a special case of the Riemann–Hilbert problem for analytic functions.

In mathematics, the Kronecker product, sometimes denoted by ⊗, is an operation on two matrices of arbitrary size resulting in a block matrix. It is a specialization of the tensor product from vectors to matrices and gives the matrix of the tensor product linear map with respect to a standard choice of basis. The Kronecker product is to be distinguished from the usual matrix multiplication, which is an entirely different operation. The Kronecker product is also sometimes called matrix direct product.

Quantum statistical mechanics is statistical mechanics applied to quantum mechanical systems. In quantum mechanics a statistical ensemble is described by a density operator S, which is a non-negative, self-adjoint, trace-class operator of trace 1 on the Hilbert space H describing the quantum system. This can be shown under various mathematical formalisms for quantum mechanics. One such formalism is provided by quantum logic.

In linear algebra, a circulant matrix is a square matrix in which all row vectors are composed of the same elements and each row vector is rotated one element to the right relative to the preceding row vector. It is a particular kind of Toeplitz matrix.

In numerical linear algebra, the Arnoldi iteration is an eigenvalue algorithm and an important example of an iterative method. Arnoldi finds an approximation to the eigenvalues and eigenvectors of general matrices by constructing an orthonormal basis of the Krylov subspace, which makes it particularly useful when dealing with large sparse matrices.

In mathematics and functional analysis a direct integral or Hilbert integral is a generalization of the concept of direct sum. The theory is most developed for direct integrals of Hilbert spaces and direct integrals of von Neumann algebras. The concept was introduced in 1949 by John von Neumann in one of the papers in the series On Rings of Operators. One of von Neumann's goals in this paper was to reduce the classification of von Neumann algebras on separable Hilbert spaces to the classification of so-called factors. Factors are analogous to full matrix algebras over a field, and von Neumann wanted to prove a continuous analogue of the Artin–Wedderburn theorem classifying semi-simple rings.

In the mathematical discipline of matrix theory, a Jordan matrix, named after Camille Jordan, is a block diagonal matrix over a ring R, where each block along the diagonal, called a Jordan block, has the following form:

In mathematics and physics, in particular quantum information, the term generalized Pauli matrices refers to families of matrices which generalize the properties of the Pauli matrices. Here, a few classes of such matrices are summarized.

Prony analysis was developed by Gaspard Riche de Prony in 1795. However, practical use of the method awaited the digital computer. Similar to the Fourier transform, Prony's method extracts valuable information from a uniformly sampled signal and builds a series of damped complex exponentials or damped sinusoids. This allows for the estimation of frequency, amplitude, phase and damping components of a signal.

In mathematics, especially in linear algebra and matrix theory, the commutation matrix is used for transforming the vectorized form of a matrix into the vectorized form of its transpose. Specifically, the commutation matrix K(m,n) is the nm × mn matrix which, for any m × n matrix A, transforms vec(A) into vec(AT):

In statistics and signal processing, the orthogonality principle is a necessary and sufficient condition for the optimality of a Bayesian estimator. Loosely stated, the orthogonality principle says that the error vector of the optimal estimator is orthogonal to any possible estimator. The orthogonality principle is most commonly stated for linear estimators, but more general formulations are possible. Since the principle is a necessary and sufficient condition for optimality, it can be used to find the minimum mean square error estimator.

In mathematics, a Bunce–Deddens algebra, named after John W. Bunce and James A. Deddens, is a certain type of AT algebra, a direct limit of matrix algebras over the continuous functions on the circle, in which the connecting maps are given by embeddings between families of shift operators with periodic weights.

The cyclotomic fast Fourier transform is a type of fast Fourier transform algorithm over finite fields. This algorithm first decomposes a DFT into several circular convolutions, and then derives the DFT results from the circular convolution results. When applied to a DFT over , this algorithm has a very low multiplicative complexity. In practice, since there usually exist efficient algorithms for circular convolutions with specific lengths, this algorithm is very efficient.