In computer science, binary search, also known as half-interval search, logarithmic search, or binary chop, is a search algorithm that finds the position of a target value within a sorted array. Binary search compares the target value to the middle element of the array. If they are not equal, the half in which the target cannot lie is eliminated and the search continues on the remaining half, again taking the middle element to compare to the target value, and repeating this until the target value is found. If the search ends with the remaining half being empty, the target is not in the array.

In mathematics and computer programming, exponentiating by squaring is a general method for fast computation of large positive integer powers of a number, or more generally of an element of a semigroup, like a polynomial or a square matrix. Some variants are commonly referred to as square-and-multiply algorithms or binary exponentiation. These can be of quite general use, for example in modular arithmetic or powering of matrices. For semigroups for which additive notation is commonly used, like elliptic curves used in cryptography, this method is also referred to as double-and-add.

The knapsack problem is the following problem in combinatorial optimization:

The subset sum problem (SSP) is a decision problem in computer science. In its most general formulation, there is a multiset of integers and a target-sum , and the question is to decide whether any subset of the integers sum to precisely . The problem is known to be NP-complete. Moreover, some restricted variants of it are NP-complete too, for example:

In mathematics, the floor function is the function that takes as input a real number x, and gives as output the greatest integer less than or equal to x, denoted ⌊x⌋ or floor(x). Similarly, the ceiling function maps x to the least integer greater than or equal to x, denoted ⌈x⌉ or ceil(x).

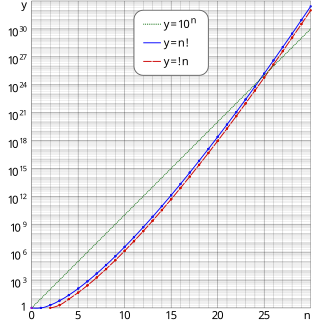

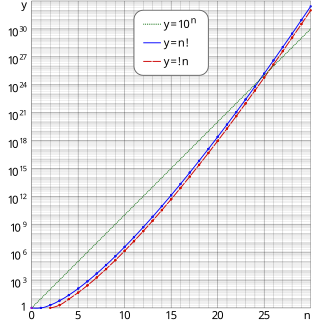

In combinatorial mathematics, a derangement is a permutation of the elements of a set in which no element appears in its original position. In other words, a derangement is a permutation that has no fixed points.

In computer science, the Akra–Bazzi method, or Akra–Bazzi theorem, is used to analyze the asymptotic behavior of the mathematical recurrences that appear in the analysis of divide and conquer algorithms where the sub-problems have substantially different sizes. It is a generalization of the master theorem for divide-and-conquer recurrences, which assumes that the sub-problems have equal size. It is named after mathematicians Mohamad Akra and Louay Bazzi.

Sperner's theorem, in discrete mathematics, describes the largest possible families of finite sets none of which contain any other sets in the family. It is one of the central results in extremal set theory. It is named after Emanuel Sperner, who published it in 1928.

In number theory, the integer square root (isqrt) of a non-negative integer n is the non-negative integer m which is the greatest integer less than or equal to the square root of n,

A fully polynomial-time approximation scheme (FPTAS) is an algorithm for finding approximate solutions to function problems, especially optimization problems. An FPTAS takes as input an instance of the problem and a parameter ε > 0. It returns as output a value which is at least times the correct value, and at most times the correct value.

In mathematics, particularly in combinatorics, a Stirling number of the second kind is the number of ways to partition a set of n objects into k non-empty subsets and is denoted by or . Stirling numbers of the second kind occur in the field of mathematics called combinatorics and the study of partitions. They are named after James Stirling.

In mathematics, especially in combinatorics, Stirling numbers of the first kind arise in the study of permutations. In particular, the unsigned Stirling numbers of the first kind count permutations according to their number of cycles.

In number theory and computer science, the partition problem, or number partitioning, is the task of deciding whether a given multiset S of positive integers can be partitioned into two subsets S1 and S2 such that the sum of the numbers in S1 equals the sum of the numbers in S2. Although the partition problem is NP-complete, there is a pseudo-polynomial time dynamic programming solution, and there are heuristics that solve the problem in many instances, either optimally or approximately. For this reason, it has been called "the easiest hard problem".

The Rand index or Rand measure in statistics, and in particular in data clustering, is a measure of the similarity between two data clusterings. A form of the Rand index may be defined that is adjusted for the chance grouping of elements, this is the adjusted Rand index. The Rand index is the accuracy of determining if a link belongs within a cluster or not.

The 3-partition problem is a strongly NP-complete problem in computer science. The problem is to decide whether a given multiset of integers can be partitioned into triplets that all have the same sum. More precisely:

In mathematics, the Dedekind numbers are a rapidly growing sequence of integers named after Richard Dedekind, who defined them in 1897. The Dedekind number M(n) is the number of monotone Boolean functions of n variables. Equivalently, it is the number of antichains of subsets of an n-element set, the number of elements in a free distributive lattice with n generators, and one more than the number of abstract simplicial complexes on a set with n elements.

Matroid partitioning is a problem arising in the mathematical study of matroids and in the design and analysis of algorithms. Its goal is to partition the elements of a matroid into as few independent sets as possible. An example is the problem of computing the arboricity of an undirected graph, the minimum number of forests needed to cover all of its edges. Matroid partitioning may be solved in polynomial time, given an independence oracle for the matroid. It may be generalized to show that a matroid sum is itself a matroid, to provide an algorithm for computing ranks and independent sets in matroid sums, and to compute the largest common independent set in the intersection of two given matroids.

In computer science, merge-insertion sort or the Ford–Johnson algorithm is a comparison sorting algorithm published in 1959 by L. R. Ford Jr. and Selmer M. Johnson. It uses fewer comparisons in the worst case than the best previously known algorithms, binary insertion sort and merge sort, and for 20 years it was the sorting algorithm with the fewest known comparisons. Although not of practical significance, it remains of theoretical interest in connection with the problem of sorting with a minimum number of comparisons. The same algorithm may have also been independently discovered by Stanisław Trybuła and Czen Ping.

In computer science, multiway number partitioning is the problem of partitioning a multiset of numbers into a fixed number of subsets, such that the sums of the subsets are as similar as possible. It was first presented by Ronald Graham in 1969 in the context of the identical-machines scheduling problem. The problem is parametrized by a positive integer k, and called k-way number partitioning. The input to the problem is a multiset S of numbers, whose sum is k*T.

Balanced number partitioning is a variant of multiway number partitioning in which there are constraints on the number of items allocated to each set. The input to the problem is a set of n items of different sizes, and two integers m, k. The output is a partition of the items into m subsets, such that the number of items in each subset is at most k. Subject to this, it is required that the sums of sizes in the m subsets are as similar as possible.