The calculus of variations is a field of mathematical analysis that uses variations, which are small changes in functions and functionals, to find maxima and minima of functionals: mappings from a set of functions to the real numbers. Functionals are often expressed as definite integrals involving functions and their derivatives. Functions that maximize or minimize functionals may be found using the Euler–Lagrange equation of the calculus of variations.
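For a functional $J[y] = \int_a^b L(x, y, y')\,dx$, the Euler–Lagrange equation reads as follows. The worked example uses the arc-length functional, which is our choice of illustration and not taken from the text above:

```latex
J[y] = \int_a^b L\bigl(x, y(x), y'(x)\bigr)\,dx,
\qquad
\frac{\partial L}{\partial y} - \frac{d}{dx}\,\frac{\partial L}{\partial y'} = 0.

% Example: shortest curve between two points, L = \sqrt{1 + y'^2}
\frac{\partial L}{\partial y} = 0,
\qquad
\frac{\partial L}{\partial y'} = \frac{y'}{\sqrt{1 + y'^{2}}}
\;\Longrightarrow\;
\frac{d}{dx}\,\frac{y'}{\sqrt{1 + y'^{2}}} = 0
\;\Longrightarrow\;
y' = \text{const}.
```

Every extremal therefore has constant slope, that is, it is a straight line.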

In mathematics, a self-adjoint operator on a complex vector space V with an inner product is a linear map A (from V to itself) that is its own adjoint. If V is finite-dimensional with a given orthonormal basis, this is equivalent to the condition that the matrix of A is a Hermitian matrix, i.e., equal to its conjugate transpose A∗. By the finite-dimensional spectral theorem, V has an orthonormal basis such that the matrix of A relative to this basis is a diagonal matrix with entries in the real numbers. In this article, we consider generalizations of this concept to operators on Hilbert spaces of arbitrary dimension.
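The finite-dimensional statement can be checked by hand for a 2×2 matrix. The following sketch is plain Python with no linear-algebra library, and the helper names are hypothetical. It diagonalizes a 2×2 Hermitian matrix in closed form: the eigenvalues come out real, and the normalized eigenvectors form an orthonormal basis, as the spectral theorem guarantees.

```python
import math

def is_hermitian(a, tol=1e-12):
    """True if the square matrix `a` (list of rows) equals its conjugate transpose."""
    n = len(a)
    return all(
        abs(complex(a[i][j]) - complex(a[j][i]).conjugate()) <= tol
        for i in range(n) for j in range(n)
    )

def inner(u, v):
    """Standard inner product <u, v> = sum(conj(u_i) * v_i), conjugate-linear in u."""
    return sum(complex(x).conjugate() * y for x, y in zip(u, v))

def matvec(a, v):
    """Matrix-vector product A v for a matrix given as a list of rows."""
    return [sum(a[i][j] * v[j] for j in range(len(v))) for i in range(len(a))]

def eigh_2x2(a):
    """Eigenvalues (ascending) and orthonormal eigenvectors of a 2x2 Hermitian matrix."""
    if not is_hermitian(a):
        raise ValueError("matrix is not Hermitian")
    p, d = complex(a[0][0]).real, complex(a[1][1]).real  # diagonal of a Hermitian matrix is real
    b = complex(a[0][1])
    mean, radius = (p + d) / 2, math.hypot((p - d) / 2, abs(b))
    values = (mean - radius, mean + radius)  # real numbers, as the spectral theorem predicts
    if b == 0:
        # Already diagonal: the standard basis diagonalizes it (order matches `values`).
        vectors = ([1, 0], [0, 1]) if p <= d else ([0, 1], [1, 0])
        return values, vectors
    vectors = []
    for lam in values:
        # Solves the first row of (A - lam I) v = 0: (p - lam) v0 + b v1 = 0.
        v = [b, lam - p]
        norm = math.sqrt(abs(b) ** 2 + (lam - p) ** 2)
        vectors.append([c / norm for c in v])
    return values, tuple(vectors)
```

For example, `eigh_2x2([[2, 1 - 1j], [1 + 1j, 3]])` gives eigenvalues 1 and 4 (up to rounding), with eigenvectors proportional to (1 − i, −1) and (1 − i, 2), which are orthogonal under `inner`.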

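The costate equation is related to the state equation used in optimal control. It is also referred to as the auxiliary, adjoint, influence, or multiplier equation. It is stated as a vector of first-order differential equations

$$\dot{\lambda}^{\mathsf{T}}(t) = -\frac{\partial H}{\partial x},$$

where the right-hand side is the vector of partial derivatives of the negative of the Hamiltonian H with respect to the state variables x.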

Optimal control theory is a branch of mathematical optimization that deals with finding a control for a dynamical system over a period of time such that an objective function is optimized. It has numerous applications in science, engineering and operations research. For example, the dynamical system might be a spacecraft with controls corresponding to rocket thrusters, and the objective might be to reach the moon with minimum fuel expenditure. Or the dynamical system could be a nation's economy, with the objective to minimize unemployment; the controls in this case could be fiscal and monetary policy. A dynamical system may also be introduced to embed operations research problems within the framework of optimal control theory.

In probability theory, the Borel–Kolmogorov paradox is a paradox relating to conditional probability with respect to an event of probability zero. It is named after Émile Borel and Andrey Kolmogorov.

In the theory of stochastic processes, the Karhunen–Loève theorem, also known as the Kosambi–Karhunen–Loève theorem, is a representation of a stochastic process as an infinite linear combination of orthogonal functions, analogous to a Fourier series representation of a function on a bounded interval. The transformation is also known as the Hotelling transform and the eigenvector transform, and is closely related to the principal component analysis (PCA) technique widely used in image processing and in data analysis in many fields.
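Brownian motion on [0, 1] is a standard case where the expansion is known in closed form: its covariance min(s, t) has eigenvalues λₖ = 1/((k − ½)²π²) and orthonormal eigenfunctions eₖ(t) = √2 sin((k − ½)πt). The sketch below uses Brownian motion and function names of our own choosing as an illustration. It samples a path from the truncated series with independent standard normal coefficients, and computes the truncated variance Σ λₖ eₖ(t)², which should approach t as more terms are kept.

```python
import math
import random

def kl_brownian_path(t_grid, n_terms, rng):
    """Sample W(t) on [0, 1] from the first n_terms of its Karhunen-Loeve expansion.

    W(t) ~ sum_k Z_k * sqrt(lam_k) * e_k(t), with Z_k i.i.d. standard normal,
    sqrt(lam_k) = 1 / omega_k, e_k(t) = sqrt(2) * sin(omega_k * t), omega_k = (k - 1/2) * pi.
    """
    z = [rng.gauss(0.0, 1.0) for _ in range(n_terms)]
    omegas = [(k - 0.5) * math.pi for k in range(1, n_terms + 1)]
    return [
        sum(zk * math.sqrt(2.0) * math.sin(om * t) / om for zk, om in zip(z, omegas))
        for t in t_grid
    ]

def kl_variance(t, n_terms):
    """Variance of the truncated series at t, sum_k lam_k * e_k(t)^2; tends to min(t, t) = t."""
    total = 0.0
    for k in range(1, n_terms + 1):
        om = (k - 0.5) * math.pi
        total += 2.0 * math.sin(om * t) ** 2 / om ** 2
    return total
```

A typical call is `kl_brownian_path([i / 100 for i in range(101)], 200, random.Random(0))`. Because the coefficients are uncorrelated, this is the continuous analogue of expressing data in its principal-component basis.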

Pontryagin's maximum principle is used in optimal control theory to find the best possible control for taking a dynamical system from one state to another, especially in the presence of constraints for the state or input controls. It states that it is necessary for any optimal control along with the optimal state trajectory to solve the so-called Hamiltonian system, which is a two-point boundary value problem, plus a maximum condition of the control Hamiltonian. These necessary conditions become sufficient under certain convexity conditions on the objective and constraint functions.
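A minimal shooting-method sketch can make the two-point boundary value problem concrete. The problem is hypothetical and chosen only for illustration: minimize ∫₀¹ u²/2 dt subject to ẋ = −x + u, x(0) = 0, x(1) = 1. With the cost-minimizing convention H = u²/2 + λ(−x + u), the optimality condition minimizes H over u; Pontryagin's original formulation maximizes a Hamiltonian of opposite sign. Setting ∂H/∂u = 0 gives u = −λ, and the costate obeys λ̇ = −∂H/∂x = λ. Only λ(0) is unknown, so the sketch bisects on it until the terminal condition holds. The exact answer is λ(0) = −1/sinh 1. Because the dynamics are linear and the cost is convex, these necessary conditions are also sufficient here.

```python
import math

def hamiltonian_rhs(x, lam):
    """Right-hand side of the Hamiltonian system with the optimal control u = -lam substituted."""
    dx = -x - lam   # state equation: x' = dH/dlam = -x + u
    dlam = lam      # costate equation: lam' = -dH/dx = lam
    return dx, dlam

def terminal_state(lam0, steps=1000):
    """Integrate from x(0) = 0, lam(0) = lam0 to t = 1 with classical RK4; return x(1)."""
    h = 1.0 / steps
    x, lam = 0.0, lam0
    for _ in range(steps):
        k1 = hamiltonian_rhs(x, lam)
        k2 = hamiltonian_rhs(x + 0.5 * h * k1[0], lam + 0.5 * h * k1[1])
        k3 = hamiltonian_rhs(x + 0.5 * h * k2[0], lam + 0.5 * h * k2[1])
        k4 = hamiltonian_rhs(x + h * k3[0], lam + h * k3[1])
        x += h * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0]) / 6
        lam += h * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1]) / 6
    return x

def shoot_costate(x_target=1.0, lo=-10.0, hi=10.0, iters=60):
    """Bisect on the unknown initial costate lam(0) until x(1) = x_target.

    Assumes x(1) - x_target changes sign on [lo, hi].
    """
    f_lo = terminal_state(lo) - x_target
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        f_mid = terminal_state(mid) - x_target
        if f_lo * f_mid <= 0:
            hi = mid          # sign change lies in [lo, mid]
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)
```

The optimal control is then recovered as u(t) = −λ(t) = −λ(0)eᵗ.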

A first class constraint is a dynamical quantity in a constrained Hamiltonian system whose Poisson bracket with all the other constraints vanishes on the constraint surface in phase space. To calculate the first class constraint, one assumes that there are no second class constraints, or that they have been calculated previously, and their Dirac brackets generated.

In mathematics, a stiff equation is a differential equation for which certain numerical methods for solving the equation are numerically unstable, unless the step size is taken to be extremely small. It has proven difficult to formulate a precise definition of stiffness, but the main idea is that the equation includes some terms that can lead to rapid variation in the solution.
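A standard way to see stiffness is the linear test equation y′ = λy with λ = −15 and y(0) = 1. These values are our choice of example. The exact solution e^(−15t) decays quickly. Forward (explicit) Euler multiplies y by 1 + hλ each step, so it is stable only when |1 + hλ| ≤ 1, that is, h ≤ 2/15 ≈ 0.133. Backward (implicit) Euler multiplies by 1/(1 − hλ), which is below 1 for every h > 0. The sketch compares the two.

```python
def explicit_euler(lam, y0, h, n):
    """Forward Euler for y' = lam * y: y_{k+1} = (1 + h*lam) * y_k."""
    y = y0
    for _ in range(n):
        y = y + h * lam * y
    return y

def implicit_euler(lam, y0, h, n):
    """Backward Euler for y' = lam * y: y_{k+1} = y_k + h*lam*y_{k+1}, solved exactly since f is linear."""
    y = y0
    for _ in range(n):
        y = y / (1 - h * lam)
    return y
```

With h = 0.25 and 8 steps (to t = 2), `explicit_euler(-15.0, 1.0, 0.25, 8)` grows to roughly 3.3 × 10³ because the amplification factor is −2.75. `implicit_euler` with the same step decays to about 4 × 10⁻⁶. The explicit method only behaves once the step is shrunk well below 2/15.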

In mathematics, the viscosity solution concept was introduced in the early 1980s by Pierre-Louis Lions and Michael G. Crandall as a generalization of the classical concept of what is meant by a 'solution' to a partial differential equation (PDE). It has been found that the viscosity solution is the natural solution concept to use in many applications of PDEs, including, for example, first-order equations arising in dynamic programming, differential games, or front evolution problems, as well as second-order equations such as those arising in stochastic optimal control or stochastic differential games.
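A hypothetical model problem shows why a generalized notion is needed: |u′(x)| = 1 on (−1, 1) with u(−1) = u(1) = 0. No C¹ solution exists, since by Rolle's theorem u′ would have to vanish somewhere. Infinitely many Lipschitz "zigzag" functions satisfy the equation almost everywhere. The viscosity solution singles out the distance to the boundary, 1 − |x|. Monotone, consistent schemes are known to converge to the viscosity solution. The sketch uses the 1-D upwind update uᵢ = min(uᵢ₋₁, uᵢ₊₁) + h, swept in both directions in the style of fast sweeping; the function name is our own.

```python
import math

def eikonal_1d(n):
    """Upwind solver for |u'| = 1 on [-1, 1] with u(-1) = u(1) = 0 on n uniform cells.

    Repeats left-to-right and right-to-left Gauss-Seidel sweeps of
    u_i = min(u_{i-1}, u_{i+1}) + h until nothing changes.
    """
    h = 2.0 / n
    xs = [-1.0 + i * h for i in range(n + 1)]
    u = [math.inf] * (n + 1)
    u[0] = u[n] = 0.0  # boundary condition
    changed = True
    while changed:
        changed = False
        for order in (range(1, n), range(n - 1, 0, -1)):
            for i in order:
                candidate = min(u[i - 1], u[i + 1]) + h
                if candidate < u[i]:
                    u[i] = candidate
                    changed = True
    return xs, u
```

On this grid the scheme reproduces 1 − |x| up to rounding. It never produces one of the zigzag solutions, which is the selection property the viscosity definition formalizes.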

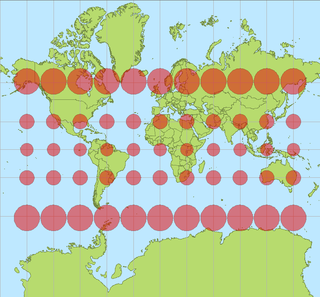

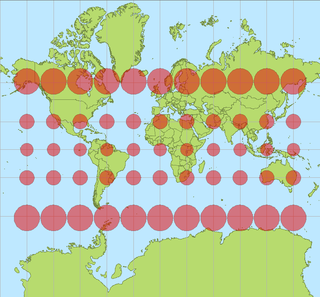

In cartography, a Tissot's indicatrix is a mathematical contrivance presented by French mathematician Nicolas Auguste Tissot in 1859 and 1871 in order to characterize local distortions due to map projection. It is the geometry that results from projecting a circle of infinitesimal radius from a curved geometric model, such as a globe, onto a map. Tissot proved that the resulting diagram is an ellipse whose axes indicate the two principal directions along which scale is maximal and minimal at that point on the map.
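The indicatrix can be computed numerically. Suppose a projection of the unit sphere is given as a function `project(lon, lat) -> (x, y)` in radians; this signature and the helper names are hypothetical. The map images of unit ground steps east and north then form a 2 × 2 matrix whose singular values are the semi-axes a ≥ b of Tissot's ellipse. The partial derivatives are approximated by central differences, which is our simplification rather than Tissot's analytic treatment, and the formula breaks down at the poles where cos(lat) = 0.

```python
import math

def tissot_axes(project, lon, lat, eps=1e-6):
    """Semi-axes (a, b), a >= b, of Tissot's indicatrix at (lon, lat) in radians, unit sphere.

    Columns of the matrix below are the map images of a unit ground step east
    (d lon = 1 / cos(lat)) and north (d lat = 1); its singular values are the
    maximal and minimal scale factors at that point.
    """
    c = math.cos(lat)
    xe, ye = project(lon + eps, lat)
    xw, yw = project(lon - eps, lat)
    xn, yn = project(lon, lat + eps)
    xs, ys = project(lon, lat - eps)
    m11, m21 = (xe - xw) / (2 * eps * c), (ye - yw) / (2 * eps * c)
    m12, m22 = (xn - xs) / (2 * eps), (yn - ys) / (2 * eps)
    # For a 2x2 matrix: a^2 + b^2 = squared Frobenius norm and a * b = |det|.
    s = m11 ** 2 + m12 ** 2 + m21 ** 2 + m22 ** 2
    det = m11 * m22 - m12 * m21
    root = math.sqrt(max(s * s - 4 * det * det, 0.0))
    return math.sqrt((s + root) / 2), math.sqrt(max((s - root) / 2, 0.0))

def mercator(lon, lat):
    """Spherical Mercator projection (unit radius)."""
    return lon, math.log(math.tan(math.pi / 4 + lat / 2))

def plate_carree(lon, lat):
    """Equirectangular projection with the equator as standard parallel."""
    return lon, lat
```

At latitude φ, Mercator gives a = b = sec φ (a circle, as expected for a conformal projection). The plate carrée gives a = sec φ and b = 1. An equal-area projection would instead give a · b = 1.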

In the mathematical study of heat conduction and diffusion, a heat kernel is the fundamental solution to the heat equation on a specified domain with appropriate boundary conditions. It is also one of the main tools in the study of the spectrum of the Laplace operator, and is thus of some auxiliary importance throughout mathematical physics. The heat kernel represents the evolution of temperature in a region whose boundary is held fixed at a particular temperature, such that an initial unit of heat energy is placed at a point at time t = 0.
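The simplest case to write down is the whole real line, with no boundary and the normalization u_t = u_xx. Both are assumptions, since the text above concerns general domains. There the heat kernel is K(t, x, y) = (4πt)^(−1/2) exp(−(x − y)²/(4t)). The sketch checks two of its defining properties numerically with a trapezoidal rule. First, the initial unit of heat is conserved (∫K dy = 1 for every t > 0). Second, evolving for time t and then for time s equals evolving for t + s.

```python
import math

def heat_kernel(t, x, y):
    """Heat kernel of u_t = u_xx on the real line: (4*pi*t)^(-1/2) * exp(-(x - y)^2 / (4t))."""
    return math.exp(-((x - y) ** 2) / (4.0 * t)) / math.sqrt(4.0 * math.pi * t)

def trapezoid(f, a=-20.0, b=20.0, n=4000):
    """Composite trapezoidal rule; [a, b] is wide enough here for the Gaussian tails to be negligible."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b)) + sum(f(a + i * h) for i in range(1, n))
    return total * h
```

For instance, `trapezoid(lambda z: heat_kernel(0.3, 0.1, z) * heat_kernel(0.2, z, 0.7))` agrees with `heat_kernel(0.5, 0.1, 0.7)` to many digits.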

The Hamiltonian is a function used to solve a problem of optimal control for a dynamical system. It can be understood as an instantaneous increment of the Lagrangian expression of the problem that is to be optimized over a certain time period. Inspired by, but distinct from, the Hamiltonian of classical mechanics, the Hamiltonian of optimal control theory was developed by Lev Pontryagin as part of his maximum principle. Pontryagin proved that a necessary condition for solving the optimal control problem is that the control should be chosen so as to optimize the Hamiltonian.
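The control Hamiltonian is commonly written as follows for the problem of minimizing ∫ I(x, u, t) dt subject to ẋ = f(x, u, t). The running cost I, dynamics f, costate λ and admissible control set U are labels we introduce here, and sign conventions differ between texts. Under this cost-minimizing convention the Hamiltonian is minimized. Texts that follow Pontryagin's original convention flip the sign of I and maximize instead.

```latex
H\bigl(x(t), u(t), \lambda(t), t\bigr)
  = I\bigl(x(t), u(t), t\bigr) + \lambda^{\mathsf{T}}(t)\, f\bigl(x(t), u(t), t\bigr),

\dot{x}(t) = \frac{\partial H}{\partial \lambda} = f\bigl(x(t), u(t), t\bigr),
\qquad
\dot{\lambda}^{\mathsf{T}}(t) = -\frac{\partial H}{\partial x},
\qquad
u^{*}(t) = \arg\min_{u \in U} H\bigl(x^{*}(t), u, \lambda(t), t\bigr).
```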

The value function of an optimization problem gives the value attained by the objective function at a solution, while only depending on the parameters of the problem. In a controlled dynamical system, the value function represents the optimal payoff of the system over the interval [t, t1] when started at the time-t state variable x(t)=x. If the objective function represents some cost that is to be minimized, the value function can be interpreted as the cost to finish the optimal program, and is thus referred to as the "cost-to-go function." In an economic context, where the objective function usually represents utility, the value function is conceptually equivalent to the indirect utility function.
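In discrete time the value function can be computed by backward induction on the Bellman equation. The sketch below uses a small hypothetical problem: integer states from −2 to 2, controls −1, 0 and +1, stage cost x² + u², terminal cost x², and a horizon of 2 steps. `V[t][x]` is then exactly the cost-to-go from state x at time t.

```python
def value_function(states, controls, step, stage_cost, terminal_cost, horizon):
    """Backward induction on the Bellman equation.

    V[horizon][x] = terminal_cost(x) and
    V[t][x] = min over u of stage_cost(x, u) + V[t + 1][step(x, u)].
    """
    V = [dict() for _ in range(horizon + 1)]
    V[horizon] = {x: terminal_cost(x) for x in states}
    for t in range(horizon - 1, -1, -1):
        V[t] = {
            x: min(stage_cost(x, u) + V[t + 1][step(x, u)] for u in controls)
            for x in states
        }
    return V

# Hypothetical example problem.
STATES = list(range(-2, 3))
CONTROLS = (-1, 0, 1)

def clamp_step(x, u):
    """Move by u but stay inside the state space [-2, 2]."""
    return max(-2, min(2, x + u))

V = value_function(STATES, CONTROLS, clamp_step,
                   stage_cost=lambda x, u: x * x + u * u,
                   terminal_cost=lambda x: x * x,
                   horizon=2)
```

Here `V[0][2]` is 7: from state 2 the cheapest plan steps to 1 (cost 4 + 1), after which the remaining cost-to-go `V[1][1]` is 2.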

In mathematics, the spectral theory of ordinary differential equations is the part of spectral theory concerned with the determination of the spectrum and eigenfunction expansion associated with a linear ordinary differential equation. In his dissertation, Hermann Weyl generalized the classical Sturm–Liouville theory on a finite closed interval to second order differential operators with singularities at the endpoints of the interval, possibly semi-infinite or infinite. Unlike the classical case, the spectrum may no longer consist of just a countable set of eigenvalues, but may also contain a continuous part. In this case the eigenfunction expansion involves an integral over the continuous part with respect to a spectral measure, given by the Titchmarsh–Kodaira formula. The theory was put in its final simplified form for singular differential equations of even degree by Kodaira and others, using von Neumann's spectral theorem. It has had important applications in quantum mechanics, operator theory and harmonic analysis on semisimple Lie groups.
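The classical regular case that Weyl generalized can be illustrated numerically. The sketch below is minimal, and the specific problem and function names are our own. It finds eigenvalues of −u″ = λu on [0, π] with u(0) = u(π) = 0, whose exact eigenvalues are λₙ = n². The method integrates from the left endpoint with RK4 and bisects on λ wherever u(π) changes sign. It only addresses a discrete spectrum on a finite interval and would not capture the continuous part that can appear in the singular case.

```python
import math

def endpoint_value(lam, length=math.pi, steps=1000):
    """Integrate u'' = -lam*u from u(0) = 0, u'(0) = 1 with RK4 and return u(length)."""
    h = length / steps
    u, v = 0.0, 1.0  # v = u'
    for _ in range(steps):
        k1u, k1v = v, -lam * u
        k2u, k2v = v + 0.5 * h * k1v, -lam * (u + 0.5 * h * k1u)
        k3u, k3v = v + 0.5 * h * k2v, -lam * (u + 0.5 * h * k2u)
        k4u, k4v = v + h * k3v, -lam * (u + h * k3u)
        u += h * (k1u + 2 * k2u + 2 * k3u + k4u) / 6
        v += h * (k1v + 2 * k2v + 2 * k3v + k4v) / 6
    return u

def dirichlet_eigenvalues(lam_max, dlam=0.1, start=0.05, iters=40):
    """Scan lam in steps of dlam, then bisect each sign change of u(length; lam).

    The offset `start` keeps scan points away from the exact eigenvalues n^2.
    """
    found = []
    lo, f_lo = start, endpoint_value(start)
    while lo < lam_max:
        hi = lo + dlam
        f_hi = endpoint_value(hi)
        if f_lo * f_hi < 0:
            a, b, fa = lo, hi, f_lo
            for _ in range(iters):
                mid = 0.5 * (a + b)
                fm = endpoint_value(mid)
                if fa * fm <= 0:
                    b = mid
                else:
                    a, fa = mid, fm
            found.append(0.5 * (a + b))
        lo, f_lo = hi, f_hi
    return found
```

`dirichlet_eigenvalues(10.0)` should return values close to 1, 4 and 9.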

In the calculus of variations the Legendre–Clebsch condition is a second-order condition which a solution of the Euler–Lagrange equation must satisfy in order to be a minimum.
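As a small worked check, consider the problem of minimizing ∫ₐᵇ L(x, y, y′) dx. The Legendre–Clebsch condition requires the second derivative of L with respect to y′ to be nonnegative along the extremal. The arc-length functional used below is our own choice of example:

```latex
\frac{\partial^{2} L}{\partial y'^{2}}\bigl(x, y(x), y'(x)\bigr) \ge 0
\quad \text{for all } x \in [a, b].

% Arc length, L = \sqrt{1 + y'^2}:
\frac{\partial L}{\partial y'} = \frac{y'}{(1 + y'^{2})^{1/2}},
\qquad
\frac{\partial^{2} L}{\partial y'^{2}} = \frac{1}{(1 + y'^{2})^{3/2}} > 0.
```

Because this holds for every slope, straight-line extremals pass the test. The condition is only necessary; sufficiency needs further conditions (for example Jacobi's condition), which are not shown here.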

In mathematics, the Dirichlet eigenvalues are the fundamental modes of vibration of an idealized drum with a given shape. The problem of whether one can hear the shape of a drum is: given the Dirichlet eigenvalues, what features of the shape of the drum can one deduce? Here a "drum" is thought of as an elastic membrane Ω, which is represented as a planar domain whose boundary is fixed. The Dirichlet eigenvalues are found by solving the following problem for an unknown function u ≠ 0 and eigenvalue λ:

$$\Delta u + \lambda u = 0 \quad \text{in } \Omega, \qquad u = 0 \quad \text{on } \partial\Omega.$$
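For a rectangular drum [0, a] × [0, b] the Dirichlet eigenvalues are known in closed form. Separation of variables gives eigenfunctions sin(mπx/a) sin(nπy/b) with λ_{m,n} = π²(m²/a² + n²/b²) for m, n ≥ 1. The sketch below lists the smallest ones; the function name and the choice of rectangles are ours. It shows that two rectangles of equal area already "sound" different.

```python
import math

def rectangle_dirichlet_eigenvalues(a, b, count):
    """Smallest `count` Dirichlet eigenvalues (with multiplicity) of the rectangle [0, a] x [0, b].

    Indices m, n <= count suffice: any lambda_{m,n} with m > count exceeds the
    `count` values lambda_{1,1}, ..., lambda_{count,1} (and symmetrically for n).
    """
    values = [
        math.pi ** 2 * (m * m / (a * a) + n * n / (b * b))
        for m in range(1, count + 1)
        for n in range(1, count + 1)
    ]
    return sorted(values)[:count]
```

The unit square starts 2π², 5π², 5π², 8π², where the repeated value reflects the square's symmetry. A 2 × 0.5 rectangle of the same area starts at 4.25π².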

In mathematics, the Neumann–Poincaré operator or Poincaré–Neumann operator, named after Carl Neumann and Henri Poincaré, is a non-self-adjoint compact operator introduced by Poincaré to solve boundary value problems for the Laplacian on bounded domains in Euclidean space. Within the language of potential theory it reduces the partial differential equation to an integral equation on the boundary to which the theory of Fredholm operators can be applied. The theory is particularly simple in two dimensions—the case treated in detail in this article—where it is related to complex function theory, the conjugate Beurling transform or complex Hilbert transform and the Fredholm eigenvalues of bounded planar domains.

In mathematical optimization, Zermelo's navigation problem, proposed in 1931 by Ernst Zermelo, is a classic optimal control problem that deals with a boat navigating on a body of water, travelling from a starting point to a destination point. The boat is capable of a certain maximum speed, and the goal is to derive the best possible control to reach the destination in the least possible time.
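The special case of a uniform current w can be solved in closed form. This is an assumption that simplifies the general problem, in which the current may vary with position. With a uniform current, the set of points reachable in time T is a disk of radius vT centered at the drifted point wT. The minimum time is therefore the smallest T with |d − wT| = vT, and the optimal heading is constant. The sketch solves the resulting quadratic (v² − |w|²)T² + 2(d·w)T − |d|² = 0 for a boat faster than the current; the function name is hypothetical.

```python
import math

def zermelo_uniform_current(d, w, v):
    """Minimum travel time and constant heading angle (radians) for a uniform current.

    d = (dx, dy): displacement from start to destination; w = (wx, wy): current velocity;
    v: maximum boat speed relative to the water, assumed larger than |w|.
    """
    dx, dy = d
    wx, wy = w
    a = v * v - (wx * wx + wy * wy)
    if a <= 0:
        raise ValueError("this sketch assumes the boat is faster than the current")
    b = 2 * (dx * wx + dy * wy)
    c = -(dx * dx + dy * dy)
    # Non-negative root of a*T^2 + b*T + c = 0 (a > 0 and c <= 0 guarantee it exists).
    t = (-b + math.sqrt(b * b - 4 * a * c)) / (2 * a)
    # Heading relative to the water points from the drifted position w*T to the destination.
    theta = math.atan2(dy - wy * t, dx - wx * t)
    return t, theta
```

With d = (0, 1), w = (0.6, 0) and v = 1, the minimum time is 1.25 and the heading is (−0.6, 0.8), so the boat steers partly upstream to cancel the drift.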

Batch normalization is a method used to make training of artificial neural networks faster and more stable through normalization of the layers' inputs by re-centering and re-scaling. It was proposed by Sergey Ioffe and Christian Szegedy in 2015.
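The following is a minimal training-mode forward pass in plain Python for a mini-batch of feature vectors. Each feature is re-centered by its batch mean and re-scaled by its batch standard deviation (using the biased, divide-by-m variance). It is then passed through a learnable scale γ and shift β. The ε default of 1e-5 is an assumption, chosen as a common library default. Inference-time running statistics and the backward pass are omitted.

```python
import math

def batch_norm_forward(batch, gamma, beta, eps=1e-5):
    """Training-mode batch normalization.

    batch: list of m samples, each a list of d features; gamma, beta: length-d lists.
    For each feature j: y = gamma[j] * (x - mean_j) / sqrt(var_j + eps) + beta[j].
    """
    m, d = len(batch), len(batch[0])
    out = [[0.0] * d for _ in range(m)]
    for j in range(d):
        col = [row[j] for row in batch]
        mean = sum(col) / m
        var = sum((x - mean) ** 2 for x in col) / m  # biased mini-batch variance
        inv_std = 1.0 / math.sqrt(var + eps)
        for i in range(m):
            out[i][j] = gamma[j] * (col[i] - mean) * inv_std + beta[j]
    return out
```

With γ = 1 and β = 0 every output column has mean 0 and variance just under 1. Other values of γ and β let the network undo the normalization where that helps.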