Related Research Articles

The Transmission Control Protocol (TCP) is one of the main protocols of the Internet protocol suite. It originated in the initial network implementation in which it complemented the Internet Protocol (IP). Therefore, the entire suite is commonly referred to as TCP/IP. TCP provides reliable, ordered, and error-checked delivery of a stream of octets (bytes) between applications running on hosts communicating via an IP network. Major internet applications such as the World Wide Web, email, remote administration, and file transfer rely on TCP, which is part of the Transport layer of the TCP/IP suite. SSL/TLS often runs on top of TCP.

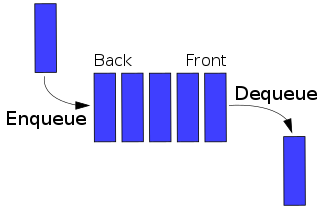

In telecommunications and computer engineering, the queuing delay or queueing delay is the time a job waits in a queue until it can be executed. It is a key component of network delay. In a switched network, queuing delay is the time between the completion of signaling by the call originator and the arrival of a ringing signal at the call receiver. Queuing delay may be caused by delays at the originating switch, intermediate switches, or the call receiver servicing switch. In a data network, queuing delay is the sum of the delays between the request for service and the establishment of a circuit to the called data terminal equipment (DTE). In a packet-switched network, queuing delay is the sum of the delays encountered by a packet between the time of insertion into the network and the time of delivery to the address.

In computer networking, the transport layer is a conceptual division of methods in the layered architecture of protocols in the network stack in the Internet protocol suite and the OSI model. The protocols of this layer provide end-to-end communication services for applications. It provides services such as connection-oriented communication, reliability, flow control, and multiplexing.

Traffic shaping is a bandwidth management technique used on computer networks which delays some or all datagrams to bring them into compliance with a desired traffic profile. Traffic shaping is used to optimize or guarantee performance, improve latency, or increase usable bandwidth for some kinds of packets by delaying other kinds. It is often confused with traffic policing, the distinct but related practice of packet dropping and packet marking.

Explicit Congestion Notification (ECN) is an extension to the Internet Protocol and to the Transmission Control Protocol and is defined in RFC 3168 (2001). ECN allows end-to-end notification of network congestion without dropping packets. ECN is an optional feature that may be used between two ECN-enabled endpoints when the underlying network infrastructure also supports it.

Network congestion in data networking and queueing theory is the reduced quality of service that occurs when a network node or link is carrying more data than it can handle. Typical effects include queueing delay, packet loss or the blocking of new connections. A consequence of congestion is that an incremental increase in offered load leads either only to a small increase or even a decrease in network throughput.

FAST TCP is a TCP congestion avoidance algorithm especially targeted at long-distance, high latency links, developed at the Netlab, California Institute of Technology and now being commercialized by FastSoft. FastSoft was acquired by Akamai Technologies in 2012.

The leaky bucket is an algorithm based on an analogy of how a bucket with a constant leak will overflow if either the average rate at which water is poured in exceeds the rate at which the bucket leaks or if more water than the capacity of the bucket is poured in all at once. It can be used to determine whether some sequence of discrete events conforms to defined limits on their average and peak rates or frequencies, e.g. to limit the actions associated with these events to these rates or delay them until they do conform to the rates. It may also be used to check conformance or limit to an average rate alone, i.e. remove any variation from the average.

Random early detection (RED), also known as random early discard or random early drop, is a queuing discipline for a network scheduler suited for congestion avoidance.

A long-tailed or heavy-tailed distribution is one that assigns relatively high probabilities to regions far from the mean or median. A more formal mathematical definition is given below. In the context of teletraffic engineering a number of quantities of interest have been shown to have a long-tailed distribution. For example, if we consider the sizes of files transferred from a web server, then, to a good degree of accuracy, the distribution is heavy-tailed, that is, there are a large number of small files transferred but, crucially, the number of very large files transferred remains a major component of the volume downloaded.

Transmission Control Protocol (TCP) uses a congestion control algorithm that includes various aspects of an additive increase/multiplicative decrease (AIMD) scheme, along with other schemes including slow start and a congestion window (CWND), to achieve congestion avoidance. The TCP congestion-avoidance algorithm is the primary basis for congestion control in the Internet. Per the end-to-end principle, congestion control is largely a function of internet hosts, not the network itself. There are several variations and versions of the algorithm implemented in protocol stacks of operating systems of computers that connect to the Internet.

TCP global synchronization in computer networks is a pattern of each sender decreasing and increasing transmission rates at the same time as other senders. It can happen to Transmission Control Protocol (TCP) flows during periods of congestion because each sender will reduce their transmission rate at the same time when packet loss occurs.

TCP tuning techniques adjust the network congestion avoidance parameters of Transmission Control Protocol (TCP) connections over high-bandwidth, high-latency networks. Well-tuned networks can perform up to 10 times faster in some cases. However, blindly following instructions without understanding their real consequences can hurt performance as well.

Packet loss occurs when one or more packets of data travelling across a computer network fail to reach their destination. Packet loss is either caused by errors in data transmission, typically across wireless networks, or network congestion. Packet loss is measured as a percentage of packets lost with respect to packets sent.

Bandwidth management is the process of measuring and controlling the communications on a network link, to avoid filling the link to capacity or overfilling the link, which would result in network congestion and poor performance of the network. Bandwidth is described by bit rate and measured in units of bits per second (bit/s) or bytes per second (B/s).

In routers and switches, active queue management (AQM) is the policy of dropping packets inside a buffer associated with a network interface controller (NIC) before that buffer becomes full, often with the goal of reducing network congestion or improving end-to-end latency. This task is performed by the network scheduler, which for this purpose uses various algorithms such as random early detection (RED), Explicit Congestion Notification (ECN), or controlled delay (CoDel). RFC 7567 recommends active queue management as a best practice.

Bufferbloat is the undesirable latency that comes from a router or other network equipment buffering too many data packets. Bufferbloat can also cause packet delay variation, as well as reduce the overall network throughput. When a router or switch is configured to use excessively large buffers, even very high-speed networks can become practically unusable for many interactive applications like voice over IP (VoIP), audio streaming, online gaming, and even ordinary web browsing.

CoDel is an active queue management (AQM) algorithm in network routing, developed by Van Jacobson and Kathleen Nichols and published as RFC8289. It is designed to overcome bufferbloat in networking hardware, such as routers, by setting limits on the delay network packets experience as they pass through buffers in this equipment. CoDel aims to improve on the overall performance of the random early detection (RED) algorithm by addressing some of its fundamental misconceptions, as perceived by Jacobson, and by being easier to manage.

A network scheduler, also called packet scheduler, queueing discipline (qdisc) or queueing algorithm, is an arbiter on a node in a packet switching communication network. It manages the sequence of network packets in the transmit and receive queues of the protocol stack and network interface controller. There are several network schedulers available for the different operating systems, that implement many of the existing network scheduling algorithms.

Kathleen Nichols is an American computer scientist and computer networking expert. Nichols is the founder and CEO of Pollere, Inc, a network architecture and performance company based in California, US. Before founding Pollere, Nichols was VP of Network Science at Packet Design, where she was part of the founding team. Prior to Packet Design she was director of advanced Internet architectures in the Office of CTO at Cisco Systems.

References

- ↑ Wei, D; Cao, P; Low, S. "TCP pacing revisited". Proceedings of IEEE INFOCOM. Vol. 2. 2006.

- ↑ Kleinrock, L (1975). Queueing systems. Wiley J. OCLC 25403139.

- ↑ Zhang, Lixia; Shenker, Scott; Clark, Daivd D. (August 1991). "Observations on the dynamics of a congestion control algorithm". Proceedings of the conference on Communications architecture & protocols. New York, NY, USA: ACM. pp. 133–147. doi:10.1145/115992.116006. ISBN 0897914449. S2CID 7824777.