In mathematics, convolution is an operation on two functions that produces a third function expressing how the shape of one is modified by the other. The term convolution refers both to the resulting function and to the process of computing it. Convolution is similar to cross-correlation. For real-valued functions of a continuous or discrete variable, it differs from cross-correlation only in that either f(x) or g(x) is reflected about the y-axis; thus it is a cross-correlation of f(x) and g(−x), or of f(−x) and g(x). For continuous functions, the cross-correlation operator is the adjoint of the convolution operator.
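
The relation between convolution and cross-correlation can be checked on short real sequences. The following is a minimal sketch, assuming finite lists stand in for the functions and that cross-correlation is defined as (f ⋆ g)[lag] = Σ f[k]·g[k + lag]; the helper names `convolve` and `cross_correlate` are illustrative and not taken from any library.

```python
def convolve(f, g):
    """Discrete convolution: out[n] = sum over k of f[k] * g[n - k]."""
    out = [0] * (len(f) + len(g) - 1)
    for i, fi in enumerate(f):
        for j, gj in enumerate(g):
            out[i + j] += fi * gj
    return out


def cross_correlate(f, g):
    """Cross-correlation of real sequences: out[lag] = sum over k of f[k] * g[k + lag].

    Lags run from -(len(f) - 1) to len(g) - 1, listed in increasing order.
    """
    out = []
    for lag in range(-(len(f) - 1), len(g)):
        total = 0
        for k, fk in enumerate(f):
            if 0 <= k + lag < len(g):
                total += fk * g[k + lag]
        out.append(total)
    return out


f = [1, 2, 3]
g = [0, 1, 2]

conv = convolve(f, g)
# Reflecting f (reversing the list) turns cross-correlation into convolution.
corr_reflected = cross_correlate(f[::-1], g)

print(conv)
print(corr_reflected)
```

Both lists agree, which is the discrete counterpart of saying that convolving f with g is the same as cross-correlating f(−x) with g(x).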

Functional analysis is a branch of mathematical analysis, the core of which is formed by the study of vector spaces endowed with some kind of limit-related structure and the linear functions defined on these spaces and respecting these structures in a suitable sense. The historical roots of functional analysis lie in the study of spaces of functions and the formulation of properties of transformations of functions such as the Fourier transform as transformations defining continuous, unitary etc. operators between function spaces. This point of view turned out to be particularly useful for the study of differential and integral equations.

In mathematics, particularly linear algebra and functional analysis, a spectral theorem is a result about when a linear operator or matrix can be diagonalized. This is extremely useful because computations involving a diagonalizable matrix can often be reduced to much simpler computations involving the corresponding diagonal matrix. The concept of diagonalization is relatively straightforward for operators on finite-dimensional vector spaces but requires some modification for operators on infinite-dimensional spaces. In general, the spectral theorem identifies a class of linear operators that can be modeled by multiplication operators, which are as simple as one can hope to find. In more abstract language, the spectral theorem is a statement about commutative C*-algebras. See also spectral theory for a historical perspective.
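
As an illustration of how diagonalization simplifies computation, the sketch below raises a small symmetric matrix to a power by working with its diagonal form. The matrix, its eigenvalues, and its eigenvectors are chosen by hand for this example; the helpers `matmul` and `transpose` are hypothetical names, not library functions.

```python
import math


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]


def transpose(X):
    return [list(row) for row in zip(*X)]


A = [[2.0, 1.0],
     [1.0, 2.0]]

# Orthonormal eigenvectors of A, stored as the columns of Q:
# (1, -1)/sqrt(2) has eigenvalue 1 and (1, 1)/sqrt(2) has eigenvalue 3.
s = 1 / math.sqrt(2)
Q = [[s, s],
     [-s, s]]
eigenvalues = [1.0, 3.0]

# Q^T A Q is diagonal, with the eigenvalues on the diagonal.
D = matmul(matmul(transpose(Q), A), Q)

# A^5 via the diagonal form: only the diagonal entries are raised to the power.
power = 5
D_power = [[eigenvalues[0] ** power, 0.0],
           [0.0, eigenvalues[1] ** power]]
A_power_spectral = matmul(matmul(Q, D_power), transpose(Q))

# The same power computed by repeated matrix multiplication, for comparison.
A_power_direct = [[1.0, 0.0], [0.0, 1.0]]
for _ in range(power):
    A_power_direct = matmul(A_power_direct, A)

print(A_power_spectral)
print(A_power_direct)
```

The two results agree up to floating-point rounding; for larger powers or larger matrices, the diagonal route replaces repeated matrix products with scalar powers.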

In mathematics, a self-adjoint operator on a finite-dimensional complex vector space V with inner product $\langle \cdot, \cdot \rangle$ is a linear map A that is its own adjoint: $\langle Av, w \rangle = \langle v, Aw \rangle$ for all vectors v and w. If V is finite-dimensional with a given orthonormal basis, this is equivalent to the condition that the matrix of A is a Hermitian matrix, i.e., equal to its conjugate transpose A∗. By the finite-dimensional spectral theorem, V has an orthonormal basis such that the matrix of A relative to this basis is a diagonal matrix with entries in the real numbers. In this article, we consider generalizations of this concept to operators on Hilbert spaces of arbitrary dimension.
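
The equivalence between the Hermitian condition on the matrix and the self-adjointness of the map can be checked numerically. This is a minimal sketch on C² with the standard inner product, assumed here to be conjugate-linear in the first argument; the matrix, the vectors, and the helper names are illustrative choices.

```python
def inner(v, w):
    """Standard inner product on C^n, conjugate-linear in the first argument (assumed convention)."""
    return sum(vi.conjugate() * wi for vi, wi in zip(v, w))


def apply(A, v):
    """Apply the matrix A (a list of rows) to the vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def conjugate_transpose(A):
    """Conjugate transpose of a square matrix given as a list of rows."""
    n = len(A)
    return [[A[j][i].conjugate() for j in range(n)] for i in range(n)]


# A Hermitian matrix: real diagonal, off-diagonal entries are complex conjugates.
A = [[2 + 0j, 1 - 1j],
     [1 + 1j, 3 + 0j]]

v = [1 + 2j, -1j]
w = [0.5 - 1j, 2 + 0j]

is_hermitian = A == conjugate_transpose(A)
lhs = inner(apply(A, v), w)   # <Av, w>
rhs = inner(v, apply(A, w))   # <v, Aw>

print(is_hermitian, lhs, rhs)
```

For this choice of A the two inner products coincide, as the definition requires; replacing A by a non-Hermitian matrix would generally break the equality.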

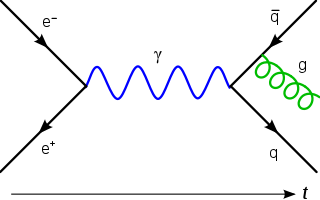

In physics, the Wightman axioms, named after Lars Gårding and Arthur Wightman, are an attempt at a mathematically rigorous formulation of quantum field theory. Arthur Wightman formulated the axioms in the early 1950s, but they were first published only in 1964 after Haag–Ruelle scattering theory affirmed their significance.

In mathematical analysis, Parseval's identity, named after Marc-Antoine Parseval, is a fundamental result on the summability of the Fourier series of a function. Geometrically, it is the Pythagorean theorem for inner-product spaces.
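
A finite-dimensional analogue of this identity can be verified directly. The sketch below uses the discrete Fourier transform rather than a Fourier series, which is an assumption made to keep the example computable: for a length-N signal, the sum of squared magnitudes equals 1/N times the sum of squared magnitudes of its DFT coefficients. The function name `dft` and the sample signal are illustrative.

```python
import cmath


def dft(x):
    """Naive discrete Fourier transform: X[k] = sum over n of x[n] * exp(-2*pi*i*k*n/N)."""
    N = len(x)
    return [sum(x[n] * cmath.exp(-2j * cmath.pi * k * n / N) for n in range(N))
            for k in range(N)]


signal = [1.0, 2.0, 0.0, -1.0, 0.5]
spectrum = dft(signal)

# "Length squared" measured in the time domain and in the frequency domain.
energy_time = sum(abs(value) ** 2 for value in signal)
energy_freq = sum(abs(coeff) ** 2 for coeff in spectrum) / len(signal)

print(energy_time, energy_freq)
```

The two energies agree up to rounding, reflecting that the (suitably normalized) transform preserves the inner-product norm.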

In mathematics and in signal processing, the Hilbert transform is a specific linear operator that takes a function u(t) of a real variable and produces another function of a real variable, H(u)(t). This linear operator is given by convolution with the function $1/(\pi t)$.
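
Written out, convolution with the kernel $1/(\pi t)$ takes the following form. Because the kernel is not integrable near the origin, the integral is understood as a Cauchy principal value; the integration variable $\tau$ is notation introduced here.

$$
H(u)(t) = \frac{1}{\pi}\, \mathrm{p.v.}\!\int_{-\infty}^{\infty} \frac{u(\tau)}{t - \tau}\, d\tau
$$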

In mathematics, a positive-definite function is, depending on the context, either of two types of function.

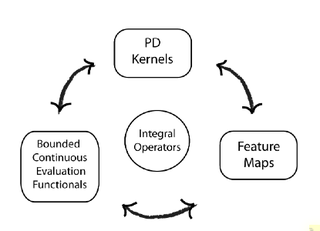

In functional analysis, a reproducing kernel Hilbert space (RKHS) is a Hilbert space of functions in which point evaluation is a continuous linear functional. Roughly speaking, this means that if two functions $f$ and $g$ in the RKHS are close in norm, i.e., $\|f - g\|$ is small, then $f$ and $g$ are also pointwise close, i.e., $|f(x) - g(x)|$ is small for all $x$. The reverse need not be true.
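
The step from closeness in norm to pointwise closeness can be made explicit. The sketch below assumes the standard notation, not used in the text above, in which $K_x$ denotes the element of the space that represents evaluation at $x$ (it exists by the Riesz representation theorem because evaluation is continuous) and $K(x, x) = \langle K_x, K_x \rangle$. The Cauchy–Schwarz inequality then gives

$$
|f(x) - g(x)| = |\langle f - g, K_x \rangle| \le \|K_x\|\, \|f - g\| = \sqrt{K(x, x)}\, \|f - g\| .
$$

So at each point $x$ the pointwise difference is controlled by the norm difference, with a constant that depends only on $x$.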

In mathematics, the Riesz–Thorin theorem, often referred to as the Riesz–Thorin interpolation theorem or the Riesz–Thorin convexity theorem, is a result about interpolation of operators. It is named after Marcel Riesz and his student G. Olof Thorin.

In mathematics, the Marcinkiewicz interpolation theorem, discovered by Józef Marcinkiewicz (1939), is a result bounding the norms of non-linear operators acting on $L^p$ spaces.

In mathematics and mathematical physics, potential theory is the study of harmonic functions.

In mathematics, Bochner's theorem characterizes the Fourier transform of a positive finite Borel measure on the real line. More generally in harmonic analysis, Bochner's theorem asserts that under Fourier transform a continuous positive definite function on a locally compact abelian group corresponds to a finite positive measure on the Pontryagin dual group.

In mathematics, a nuclear space is a topological vector space with many of the good properties of finite-dimensional vector spaces. The topology on them can be defined by a family of seminorms whose unit balls decrease rapidly in size. Vector spaces whose elements are "smooth" in some sense tend to be nuclear spaces; a typical example of a nuclear space is the set of smooth functions on a compact manifold.

The mathematical concept of a Hilbert space, named after David Hilbert, generalizes the notion of Euclidean space. It extends the methods of vector algebra and calculus from the two-dimensional Euclidean plane and three-dimensional space to spaces with any finite or infinite number of dimensions. A Hilbert space is an abstract vector space possessing the structure of an inner product that allows length and angle to be measured. Furthermore, Hilbert spaces are complete: there are enough limits in the space to allow the techniques of calculus to be used.

In mathematical analysis, the Hardy–Littlewood Tauberian theorem is a Tauberian theorem relating the asymptotics of the partial sums of a series to the asymptotics of its Abel summation. In this form, the theorem asserts that if the non-negative sequence $a_n$ is such that there is an asymptotic equivalence $\sum_{n=0}^{\infty} a_n e^{-ny} \sim 1/y$ as y ↓ 0, then there is also an asymptotic equivalence $\sum_{k=0}^{n} a_k \sim n$ as n → ∞.

In mathematics, singular integral operators on closed curves arise in problems in analysis, in particular complex analysis and harmonic analysis. The two main singular integral operators, the Hilbert transform and the Cauchy transform, can be defined for any smooth Jordan curve in the complex plane and are related by a simple algebraic formula. The Hilbert transform is an involution and the Cauchy transform an idempotent. The range of the Cauchy transform is the Hardy space of the bounded region enclosed by the Jordan curve. The theory for the original curve can be deduced from that on the unit circle, where, because of rotational symmetry, both operators are classical singular integral operators of convolution type. The Hilbert transform satisfies the jump relations of Plemelj and Sokhotski, which express the original function as the difference between the boundary values of holomorphic functions on the region and its complement. Singular integral operators have been studied on various classes of functions, including Hölder spaces, $L^p$ spaces and Sobolev spaces. In the case of $L^2$ spaces (the case treated in detail below), other operators associated with the closed curve, such as the Szegő projection onto Hardy space and the Neumann–Poincaré operator, can be expressed in terms of the Cauchy transform and its adjoint.