In algorithmic information theory, the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963 and is a generalization of classical information theory.

In statistics, the Kolmogorov–Smirnov test is a nonparametric test of the equality of continuous, one-dimensional probability distributions that can be used to compare a sample with a reference probability distribution, or to compare two samples. In essence, the test answers the question "How likely is it that we would see a collection of samples like this if they were drawn from that probability distribution?" or, in the second case, "How likely is it that we would see two sets of samples like this if they were drawn from the same probability distribution?". It is named after Andrey Kolmogorov and Nikolai Smirnov.

The bin packing problem is an optimization problem, in which items of different sizes must be packed into a finite number of bins or containers, each of a fixed given capacity, in a way that minimizes the number of bins used. The problem has many applications, such as filling up containers, loading trucks with weight capacity constraints, creating file backups in media, and technology mapping in FPGA semiconductor chip design.

Minimum Description Length (MDL) is a model selection principle where the shortest description of the data is the best model. MDL methods learn through a data compression perspective and are sometimes described as mathematical applications of Occam's razor. The MDL principle can be extended to other forms of inductive inference and learning, for example to estimation and sequential prediction, without explicitly identifying a single model of the data.

In algorithmic information theory, algorithmic probability, also known as Solomonoff probability, is a mathematical method of assigning a prior probability to a given observation. It was invented by Ray Solomonoff in the 1960s. It is used in inductive inference theory and analyses of algorithms. In his general theory of inductive inference, Solomonoff uses the method together with Bayes' rule to obtain probabilities of prediction for an algorithm's future outputs.

In information theory, Shannon's source coding theorem establishes the statistical limits to possible data compression for data whose source is an independent identically-distributed random variable, and the operational meaning of the Shannon entropy.

In computational complexity theory, PostBQP is a complexity class consisting of all of the computational problems solvable in polynomial time on a quantum Turing machine with postselection and bounded error.

The chain rule for Kolmogorov complexity is an analogue of the chain rule for information entropy, which states:

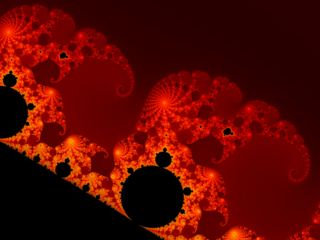

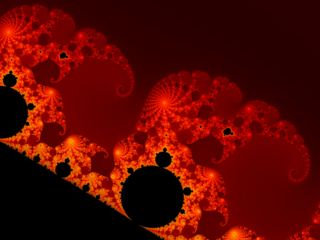

In mathematics, effective dimension is a modification of Hausdorff dimension and other fractal dimensions that places it in a computability theory setting. There are several variations of which the most common is effective Hausdorff dimension. Dimension, in mathematics, is a particular way of describing the size of an object. Hausdorff dimension generalizes the well-known integer dimensions assigned to points, lines, planes, etc. by allowing one to distinguish between objects of intermediate size between these integer-dimensional objects. For example, fractal subsets of the plane may have intermediate dimension between 1 and 2, as they are "larger" than lines or curves, and yet "smaller" than filled circles or rectangles. Effective dimension modifies Hausdorff dimension by requiring that objects with small effective dimension be not only small but also locatable in a computable sense. As such, objects with large Hausdorff dimension also have large effective dimension, and objects with small effective dimension have small Hausdorff dimension, but an object can have small Hausdorff but large effective dimension. An example is an algorithmically random point on a line, which has Hausdorff dimension 0 but effective dimension 1.

In mathematics, the FEE method, or fast E-function evaluation method, is the method of fast summation of series of a special form. It was constructed in 1990 by Ekaterina Karatsuba and is so-named because it makes fast computations of the Siegel E-functions possible, in particular of .

In mathematics the Function Field Sieve is one of the most efficient algorithms to solve the Discrete Logarithm Problem (DLP) in a finite field. It has heuristic subexponential complexity. Leonard Adleman developed it in 1994 and then elaborated it together with M. D. Huang in 1999. Previous work includes the work of D. Coppersmith about the DLP in fields of characteristic two.

The exponential mechanism is a technique for designing differentially private algorithms. It was developed by Frank McSherry and Kunal Talwar in 2007. Their work was recognized as a co-winner of the 2009 PET Award for Outstanding Research in Privacy Enhancing Technologies.

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event. It is named after French mathematician Siméon Denis Poisson. The Poisson distribution can also be used for the number of events in other specified interval types such as distance, area, or volume. It plays an important role for discrete-stable distributions.

In data structures, a range query consists of pre-processing some input data into a data structure to efficiently answer any number of queries on any subset of the input. Particularly, there is a group of problems that have been extensively studied where the input is an array of unsorted numbers and a query consists of computing some function, such as the minimum, on a specific range of the array.

Information distance is the distance between two finite objects expressed as the number of bits in the shortest program which transforms one object into the other one or vice versa on a universal computer. This is an extension of Kolmogorov complexity. The Kolmogorov complexity of a single finite object is the information in that object; the information distance between a pair of finite objects is the minimum information required to go from one object to the other or vice versa. Information distance was first defined and investigated in based on thermodynamic principles, see also. Subsequently, it achieved final form in. It is applied in the normalized compression distance and the normalized Google distance.

A hyperbolic geometric graph (HGG) or hyperbolic geometric network (HGN) is a special type of spatial network where (1) latent coordinates of nodes are sprinkled according to a probability density function into a hyperbolic space of constant negative curvature and (2) an edge between two nodes is present if they are close according to a function of the metric (typically either a Heaviside step function resulting in deterministic connections between vertices closer than a certain threshold distance, or a decaying function of hyperbolic distance yielding the connection probability). A HGG generalizes a random geometric graph (RGG) whose embedding space is Euclidean.

In computational learning theory, Occam learning is a model of algorithmic learning where the objective of the learner is to output a succinct representation of received training data. This is closely related to probably approximately correct (PAC) learning, where the learner is evaluated on its predictive power of a test set.

The distributional learning theory or learning of probability distribution is a framework in computational learning theory. It has been proposed from Michael Kearns, Yishay Mansour, Dana Ron, Ronitt Rubinfeld, Robert Schapire and Linda Sellie in 1994 and it was inspired from the PAC-framework introduced by Leslie Valiant.

In PAC learning, error tolerance refers to the ability of an algorithm to learn when the examples received have been corrupted in some way. In fact, this is a very common and important issue since in many applications it is not possible to access noise-free data. Noise can interfere with the learning process at different levels: the algorithm may receive data that have been occasionally mislabeled, or the inputs may have some false information, or the classification of the examples may have been maliciously adulterated.

In mathematics, the incompressibility method is a proof method like the probabilistic method, the counting method or the pigeonhole principle. To prove that an object in a certain class satisfies a certain property, select an object of that class that is incompressible. If it does not satisfy the property, it can be compressed by computable coding. Since it can be generally proven that almost all objects in a given class are incompressible, the argument demonstrates that almost all objects in the class have the property involved. To select an incompressible object is ineffective, and cannot be done by a computer program. However, a simple counting argument usually shows that almost all objects of a given class can be compressed by only a few bits.