A codec is a device or computer program that encodes or decodes a data stream or signal. Codec is a portmanteau of coder/decoder.

Latency, from a general point of view, is a time delay between the cause and the effect of some physical change in the system being observed. Lag, as it is known in gaming circles, refers to the latency between the input to a simulation and the visual or auditory response, often occurring because of network delay in online games.

Quality of service (QoS) is the description or measurement of the overall performance of a service, such as a telephony or computer network, or a cloud computing service, particularly the performance seen by the users of the network. To quantitatively measure quality of service, several related aspects of the network service are often considered, such as packet loss, bit rate, throughput, transmission delay, availability, jitter, etc.
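Jitter is one of the QoS aspects listed above. A widely used estimator is the interarrival jitter defined for RTP in RFC 3550 (section 6.4.1), which smooths the change in transit time between consecutive packets with a gain of 1/16. The minimal sketch below assumes arrival times have already been converted into the same clock units as the sender's RTP timestamps. The function name `interarrival_jitter` is hypothetical.

```python
def interarrival_jitter(send_ts, recv_ts):
    """Running interarrival jitter estimate in the style of RFC 3550, 6.4.1.

    send_ts: sender timestamps of consecutive packets (RTP clock units).
    recv_ts: arrival times of the same packets, in the same units (assumed).
    Returns the jitter estimate after each packet from the second one on.
    """
    if len(send_ts) != len(recv_ts):
        raise ValueError("timestamp lists must have equal length")
    jitter = 0.0
    history = []
    for i in range(1, len(send_ts)):
        # Change in relative transit time between consecutive packets.
        d = (recv_ts[i] - recv_ts[i - 1]) - (send_ts[i] - send_ts[i - 1])
        # Exponential smoothing with gain 1/16, as in RFC 3550.
        jitter += (abs(d) - jitter) / 16.0
        history.append(jitter)
    return history
```

When every packet has the same transit time, the estimate stays at zero. A packet that arrives 80 units late raises it to 5.0, and the estimate decays again only gradually afterwards.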

Speech coding is an application of data compression to digital audio signals containing speech. It combines speech-specific parameter estimation, which uses audio signal processing techniques to model the speech signal, with generic data compression algorithms that represent the resulting model parameters in a compact bitstream.
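A common form of speech-specific parameter estimation is linear prediction, which models each sample as a weighted sum of the previous samples. Linear prediction is also the basis of CELP-family codecs such as G.729. The sketch below shows one standard way to estimate the predictor coefficients: the autocorrelation method solved with the Levinson-Durbin recursion. It is illustrative only. The function name `lpc` is hypothetical, and real codecs add windowing, bandwidth expansion and quantization of the coefficients.

```python
import numpy as np

def lpc(frame, order):
    """Estimate a[1..order] so that x[n] ~ sum_k a[k] * x[n - k].

    Uses the autocorrelation method and the Levinson-Durbin recursion.
    Returns (coefficients, final prediction-error energy).
    """
    x = np.asarray(frame, dtype=float)
    n = len(x)
    # Autocorrelation values r[0..order].
    r = np.array([np.dot(x[: n - k], x[k:]) for k in range(order + 1)])
    a = np.zeros(order + 1)  # a[0] unused; a[1..order] are the predictor taps
    err = r[0]
    for i in range(1, order + 1):
        # Reflection coefficient for the order-i predictor.
        acc = r[i] - np.dot(a[1:i], r[i - 1:0:-1])
        k = acc / err
        new_a = a.copy()
        new_a[i] = k
        # Update lower-order coefficients: a_j - k * a_(i-j).
        new_a[1:i] = a[1:i] - k * a[i - 1:0:-1]
        a = new_a
        err *= 1.0 - k * k  # prediction error shrinks with each order
    return a[1:], err
```

For a signal generated by `x[n] = 0.9 * x[n-1] + noise`, a second-order fit returns a first coefficient close to 0.9 and a second coefficient close to zero. A speech coder would transmit (quantized) coefficients like these instead of the raw samples.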

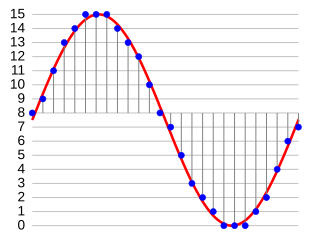

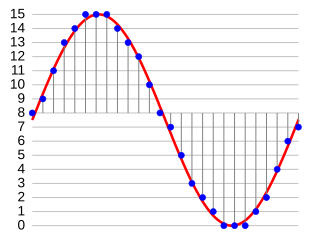

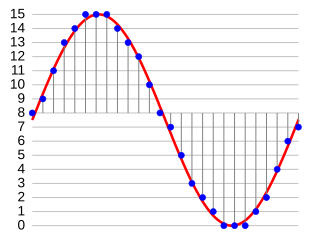

Digital audio is a representation of sound recorded in, or converted into, digital form. In digital audio, the sound wave of the audio signal is typically encoded as numerical samples in a continuous sequence. For example, in CD audio, samples are taken 44,100 times per second, each with 16-bit sample depth. Digital audio is also the name for the entire technology of sound recording and reproduction using audio signals that have been encoded in digital form. Following significant advances in digital audio technology during the 1970s and 1980s, it gradually replaced analog audio technology in many areas of audio engineering, record production and telecommunications in the 1990s and 2000s.
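The CD audio parameters above (44,100 samples per second, 16 bits per sample) can be illustrated by sampling a sine wave and rounding each sample to a signed 16-bit integer code. This is a minimal sketch of uniform PCM quantization that ignores dithering and anti-aliasing filtering. The function name `sample_sine` and the 0.5 amplitude are illustrative choices.

```python
import math

SAMPLE_RATE = 44_100  # samples per second, as in CD audio
BIT_DEPTH = 16        # bits per sample, as in CD audio

def sample_sine(freq_hz, duration_s, amplitude=0.5):
    """Sample a sine wave and quantize each sample to a signed 16-bit code."""
    max_code = 2 ** (BIT_DEPTH - 1) - 1  # 32767
    n_samples = round(SAMPLE_RATE * duration_s)
    samples = []
    for n in range(n_samples):
        t = n / SAMPLE_RATE  # time of the n-th sample in seconds
        value = amplitude * math.sin(2 * math.pi * freq_hz * t)
        samples.append(round(value * max_code))  # nearest integer code
    return samples

one_ms = sample_sine(1000.0, 0.001)  # 1 ms of a 1 kHz tone: 44 samples
```

At these settings one channel needs 44,100 × 16 = 705,600 bit/s. The stereo signal used on CDs therefore needs 1,411,200 bit/s.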

Voice over Internet Protocol (VoIP), also called IP telephony, is a method and group of technologies for the delivery of voice communication sessions over Internet Protocol (IP) networks, such as the Internet.
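VoIP media is commonly carried in RTP packets (RFC 3550). RTP is not named in the text above, so treat its use here as an assumption. Each packet starts with a 12-byte fixed header that carries the payload type, a sequence number, a media timestamp and a source identifier. The sketch below packs that header with Python's `struct` module. The function name `rtp_header` is hypothetical, and padding, header extensions and CSRC lists are omitted.

```python
import struct

def rtp_header(payload_type, seq, timestamp, ssrc, marker=False):
    """Pack a minimal 12-byte RTP fixed header (RFC 3550): version 2,
    no padding, no extension, no CSRC entries, network byte order."""
    version = 2
    byte0 = version << 6                                # V=2, P=0, X=0, CC=0
    byte1 = (int(marker) << 7) | (payload_type & 0x7F)  # M bit + 7-bit payload type
    return struct.pack("!BBHII", byte0, byte1, seq & 0xFFFF,
                       timestamp & 0xFFFFFFFF, ssrc & 0xFFFFFFFF)

# Three consecutive packets, each carrying 20 ms of 8 kHz audio (160 samples),
# using static payload type 0 (PCMU); the SSRC value is arbitrary.
headers = [rtp_header(0, seq=n, timestamp=n * 160, ssrc=0x1234ABCD) for n in range(3)]
```

The sequence number increases by one per packet, while the timestamp increases by the number of samples each packet carries. This lets the receiver detect loss and reorder packets independently of how the audio is framed.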

A mixing console or mixing desk is an electronic device for mixing audio signals, used in sound recording and reproduction and sound reinforcement systems. Inputs to the console include microphones, signals from electric or electronic instruments, or recorded sounds. Mixers may control analog or digital signals. Each input can be adjusted, for example in level and equalization, and the modified signals are summed to produce the combined output signals, which can then be broadcast, amplified through a sound reinforcement system or recorded.
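In a digital mixer, the summing stage described above reduces to scaling each input by its fader gain and adding the results sample by sample. The sketch below assumes floating-point samples in [-1.0, 1.0] and fader settings in decibels, and it simply clips the sum. Real consoles add per-channel EQ, panning and headroom management. The function names `db_to_gain` and `mix` are hypothetical.

```python
def db_to_gain(db):
    """Convert a fader setting in decibels to a linear amplitude gain."""
    return 10 ** (db / 20)

def mix(channels, fader_db):
    """Sum equal-length input channels after scaling each by its fader gain.

    channels: list of sample lists (floats in [-1.0, 1.0]).
    fader_db: one fader setting in dB per channel.
    """
    if len(channels) != len(fader_db):
        raise ValueError("need one fader setting per channel")
    length = len(channels[0])
    if any(len(ch) != length for ch in channels):
        raise ValueError("all channels must have the same length")
    gains = [db_to_gain(db) for db in fader_db]
    out = []
    for n in range(length):
        s = sum(g * ch[n] for g, ch in zip(gains, channels))
        out.append(max(-1.0, min(1.0, s)))  # clip to the valid range
    return out
```

A fader at 0 dB passes a channel unchanged, while -6 dB scales it by about 0.50. Two loud inputs can sum past full scale, which is why the output is clipped here.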

In analog telephony, a telephone hybrid is the component at the ends of a subscriber line of the public switched telephone network (PSTN) that converts between two-wire and four-wire forms of bidirectional audio paths. When used in broadcast facilities to enable the airing of telephone callers, the broadcast-quality telephone hybrid is known as a broadcast telephone hybrid or telephone balance unit.

G.729 is a royalty-free narrowband vocoder-based audio data compression algorithm using a frame length of 10 milliseconds. It is officially described as Coding of speech at 8 kbit/s using conjugate-structure algebraic-code-excited linear prediction (CS-ACELP), and was introduced in 1996. The wideband extension of G.729 is called G.729.1, which is equivalent to G.729 Annex J.
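The 8 kbit/s rate and 10 ms frame length determine the frame size: 8,000 bit/s × 0.010 s = 80 bits, or 10 bytes per frame. The sketch below also estimates on-the-wire bandwidth when several frames share one packet. It assumes 40 bytes of IPv4, UDP and RTP headers per packet and ignores link-layer overhead, so real figures will be somewhat higher. The function names are hypothetical.

```python
G729_BITRATE_BPS = 8_000  # G.729 bit rate
G729_FRAME_MS = 10        # G.729 frame length

def g729_frame_bytes():
    """Bytes produced by the encoder for one 10 ms frame."""
    return G729_BITRATE_BPS * G729_FRAME_MS // 1000 // 8

def g729_wire_bps(frames_per_packet, header_bytes=40):
    """Approximate IP-level bit rate when frames_per_packet frames share a packet.

    header_bytes=40 assumes IPv4 (20) + UDP (8) + RTP (12) headers.
    """
    payload = frames_per_packet * g729_frame_bytes()
    packets_per_second = 1000 / (frames_per_packet * G729_FRAME_MS)
    return (payload + header_bytes) * 8 * packets_per_second
```

With two frames (20 ms) per packet, the result is (20 + 40) × 8 × 50 = 24,000 bit/s. With one frame per packet, it is 40,000 bit/s. This shows why header overhead dominates at such a low codec rate.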

A VoIP phone or IP phone uses voice over IP technologies for placing and transmitting telephone calls over an IP network, such as the Internet. This is in contrast to a standard phone which uses the traditional public switched telephone network (PSTN).

Delay is an audio signal processing technique that records an input signal to a storage medium and then plays it back after a period of time. When the delayed playback is mixed with the live audio, it creates an echo-like effect, whereby the original audio is heard followed by the delayed audio. The delayed signal may be played back multiple times, or fed back into the recording, to create the sound of a repeating, decaying echo.
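The record, play back and feed back process described above maps directly onto a circular buffer. The sketch below is one simple digital delay: the buffer plays the role of the storage medium, `wet` sets how loudly the delayed signal is mixed with the live input, and `feedback` sets how much of each echo is written back into the buffer. The function and parameter names are hypothetical. A `feedback` value below 1.0 keeps the repeats decaying.

```python
def feedback_delay(dry, delay_samples, feedback=0.5, wet=0.5):
    """Echo effect: mix the input with a delayed copy that is fed back
    into the delay line so the echo repeats and decays."""
    if delay_samples < 1:
        raise ValueError("delay must be at least one sample")
    if not 0.0 <= feedback < 1.0:
        raise ValueError("feedback must be in [0, 1) for the echo to decay")
    buffer = [0.0] * delay_samples  # circular buffer acting as the storage medium
    pos = 0
    out = []
    for x in dry:
        delayed = buffer[pos]                 # sample recorded delay_samples ago
        out.append(x + wet * delayed)         # live signal plus the echo
        buffer[pos] = x + feedback * delayed  # record input plus fed-back echo
        pos = (pos + 1) % delay_samples
    return out
```

Feeding a single impulse through a 2-sample delay with `feedback=0.5` and `wet=1.0` yields echoes of 1.0, 0.5 and 0.25, each two samples apart. In practice the delay would be much longer, for example 13,230 samples for 300 ms at 44.1 kHz.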

Perceptual Evaluation of Audio Quality (PEAQ) is a standardized algorithm for objectively measuring perceived audio quality, developed in 1994–1998 by a joint venture of experts within Task Group 6Q of the International Telecommunication Union's Radiocommunication Sector (ITU-R). It was originally released as ITU-R Recommendation BS.1387 in 1998 and last updated in 2023. It utilizes software to simulate perceptual properties of the human ear and then integrates multiple model output variables into a single metric.

Audio-to-video synchronization refers to the relative timing of audio (sound) and video (image) parts during creation, post-production (mixing), transmission, reception and play-back processing. AV synchronization can be an issue in television, videoconferencing, or film.

CobraNet is a combination of software, hardware, and network protocols designed to deliver uncompressed, multi-channel, low-latency digital audio over a standard Ethernet network. Developed in the 1990s, CobraNet is widely regarded as the first commercially successful audio-over-Ethernet implementation.

A software effect processor is a computer program that alters the sound from a digital source through audio signal processing in real time. It is the digital counterpart of hardware effects processors and an integral part of audio editing software such as Adobe Audition.
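Real-time software effects usually receive audio in short buffers from a host program and must carry their internal state from one buffer to the next so that the output has no discontinuities at buffer boundaries. The sketch below shows this pattern with a one-pole low-pass filter. The class name, method name and block-based calling convention are hypothetical and do not represent the plug-in API of Adobe Audition or any other host.

```python
import math

class OnePoleLowPass:
    """Minimal real-time effect: a one-pole low-pass filter that processes
    audio block by block and keeps its state between calls."""

    def __init__(self, cutoff_hz, sample_rate):
        # Smoothing coefficient for y[n] = y[n-1] + alpha * (x[n] - y[n-1]).
        self.alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
        self.state = 0.0  # last output sample, carried across blocks

    def process(self, block):
        """Filter one buffer of samples and return the processed buffer."""
        out = []
        y = self.state
        for x in block:
            y += self.alpha * (x - y)
            out.append(y)
        self.state = y  # remember where this block ended
        return out
```

Because the state is preserved, feeding a signal through `process` in blocks of any size gives exactly the same samples as processing it in one call. This property is what lets a host choose its buffer size freely.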

G.718 is an ITU-T Recommendation for an embedded, scalable speech and audio codec that provides high-quality narrowband speech at the lower bit rates and high-quality wideband speech over the complete range of bit rates. G.718 is also designed to be highly robust to frame erasures, which improves speech quality in Internet Protocol (IP) transport applications on fixed, wireless and mobile networks. Despite its embedded nature, the codec also performs well with both narrowband and wideband generic audio signals. Its embedded, scalable structure allows maximum flexibility in the transport of voice packets through today's IP networks and future media-aware networks. The same structure will allow the codec to be extended with superwideband and stereo capability through additional layers, which are currently under development in ITU-T Study Group 16. The bitstream may be truncated at the decoder side or by any component of the communication system to adjust the bit rate to the desired value instantaneously, without the need for out-of-band signalling. The encoder produces an embedded bitstream structured in five layers corresponding to the five available bit rates: 8, 12, 16, 24 and 32 kbit/s.
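Truncating an embedded bitstream means keeping the core layer and as many enhancement layers as the target rate allows, then discarding the rest. The sketch below illustrates this idea under simplifying assumptions that are not taken from the G.718 specification: 20 ms frames, and layers stored core-first on byte boundaries, so that each cumulative rate maps to a fixed prefix length. The function names are hypothetical, and the actual G.718 bitstream format may differ.

```python
G718_LAYER_RATES_KBPS = [8, 12, 16, 24, 32]  # cumulative bit rate after each layer
G718_FRAME_MS = 20                           # assumed frame length

def g718_prefix_bytes(rate_kbps, frame_ms=G718_FRAME_MS):
    """Bytes per frame needed to carry all layers up to rate_kbps."""
    return rate_kbps * frame_ms // 8  # kbit/s times ms gives bits

def g718_truncate(frame, target_kbps):
    """Drop enhancement layers from one encoded frame so it fits target_kbps,
    assuming a core-first, byte-aligned layer layout (hypothetical)."""
    allowed = [r for r in G718_LAYER_RATES_KBPS if r <= target_kbps]
    if not allowed:
        raise ValueError("target is below the 8 kbit/s core layer")
    return frame[: g718_prefix_bytes(max(allowed))]
```

Under these assumptions, a full 32 kbit/s frame is 80 bytes. A network element limited to 20 kbit/s keeps the first 40 bytes (the 16 kbit/s layers), and no signalling to the encoder is required.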

Adaptive differential pulse-code modulation (ADPCM) is a variant of differential pulse-code modulation (DPCM) that varies the size of the quantization step to allow further reduction of the required data bandwidth for a given signal-to-noise ratio.
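The sketch below is a simplified, didactic ADPCM codec and is not IMA ADPCM or G.726. It predicts each sample from the previous reconstructed sample, quantizes the difference to a 4-bit code (a sign bit plus a 3-bit magnitude), and adapts the step size after every code, shrinking it for small codes and growing it for large ones. The multiplier table, step limits and function names are illustrative assumptions. The key design point is that the encoder and decoder run the same state update, so the decoder tracks the encoder without any side information.

```python
# Step-size multipliers indexed by the 3-bit magnitude: small codes shrink
# the step, large codes grow it (a Jayant-style rule; values are illustrative).
STEP_MULTIPLIERS = [0.9, 0.9, 0.9, 0.9, 1.2, 1.6, 2.0, 2.4]
MIN_STEP, MAX_STEP = 1.0, 4096.0

def _update(pred, step, code):
    """Shared encoder/decoder state update for one 4-bit code."""
    sign = -1 if code & 0x8 else 1
    mag = code & 0x7
    pred += sign * (mag + 0.5) * step          # reconstructed sample
    pred = max(-32768.0, min(32767.0, pred))   # stay within 16-bit range
    step = max(MIN_STEP, min(MAX_STEP, step * STEP_MULTIPLIERS[mag]))
    return pred, step

def adpcm_encode(samples, initial_step=16.0):
    pred, step = 0.0, initial_step
    codes = []
    for x in samples:
        diff = x - pred
        mag = min(7, int(abs(diff) / step))    # quantize with the current step
        code = (0x8 if diff < 0 else 0) | mag
        codes.append(code)
        pred, step = _update(pred, step, code)  # mirror the decoder's state
    return codes

def adpcm_decode(codes, initial_step=16.0):
    pred, step = 0.0, initial_step
    out = []
    for code in codes:
        pred, step = _update(pred, step, code)
        out.append(pred)
    return out
```

Each 16-bit sample becomes a 4-bit code, a 4:1 reduction. The adaptive step lets the same 16 code values cover both quiet passages and loud passages.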

aptX is a family of proprietary audio codec compression algorithms owned by Qualcomm, with a heavy emphasis on wireless audio applications.

Echo suppression and echo cancellation are methods used in telephony to improve voice quality by preventing echo from being created or removing it after it is already present. In addition to improving subjective audio quality, echo suppression increases the capacity achieved through silence suppression by preventing echo from traveling across a telecommunications network. Echo suppressors were developed in the 1950s in response to the first use of satellites for telecommunications.
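The paragraph above does not say how echo is removed once it is present. One widely used approach to echo cancellation is an adaptive FIR filter trained with the normalized least-mean-squares (NLMS) algorithm. The filter learns the echo path from the far-end signal and subtracts its estimated echo from the near-end signal. The sketch below assumes no near-end speech (double-talk detection is omitted) and an echo path no longer than `taps` samples. The function name and parameter values are illustrative.

```python
import numpy as np

def nlms_echo_canceller(far_end, mic, taps=32, mu=0.5, eps=1e-6):
    """Adaptive echo cancellation with the NLMS algorithm.

    far_end: reference signal sent toward the echo path.
    mic: returned signal containing the echo of far_end.
    Returns the residual (mic minus the estimated echo).
    """
    far_end = np.asarray(far_end, dtype=float)
    mic = np.asarray(mic, dtype=float)
    w = np.zeros(taps)      # adaptive estimate of the echo path
    x_buf = np.zeros(taps)  # most recent far-end samples, newest first
    out = np.zeros(len(mic))
    for n in range(len(mic)):
        x_buf = np.roll(x_buf, 1)
        x_buf[0] = far_end[n]
        echo_estimate = np.dot(w, x_buf)
        e = mic[n] - echo_estimate  # residual after cancellation
        out[n] = e
        # NLMS update: step size normalized by the reference signal power.
        w += (mu / (eps + np.dot(x_buf, x_buf))) * e * x_buf
    return out
```

With a white-noise reference and a short fixed echo path, the residual falls to a small fraction of the original echo within a few thousand samples. A production canceller would add double-talk handling and often a residual echo suppressor.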

Jamulus is open-source (GPL) networked music performance software that enables musicians located anywhere on the internet to rehearse, jam and perform together live. It is written in C++ by Volker Fischer and contributors, is based on the Qt framework, and uses the Opus audio codec. It was known as "llcon" until 2013.