Related Research Articles

Artificial intelligence (AI), in its broadest sense, is intelligence exhibited by machines, particularly computer systems. It is a field of research in computer science that develops and studies methods and software which enable machines to perceive their environment and use learning and intelligence to take actions that maximize their chances of achieving defined goals. Such machines may be called AIs.

Microsoft Bing, commonly referred to as Bing, is a search engine owned and operated by Microsoft. The service traces its roots back to Microsoft's earlier search engines, including MSN Search, Windows Live Search, and Live Search. Bing offers a broad spectrum of search services, encompassing web, video, image, and map search products, all developed using ASP.NET.

Deep learning is the subset of machine learning methods based on neural networks with representation learning. The adjective "deep" refers to the use of multiple layers in the network. Methods used can be either supervised, semi-supervised or unsupervised.

Google Brain was a deep learning artificial intelligence research team under the umbrella of Google AI, a research division at Google dedicated to artificial intelligence. Formed in 2011, it combined open-ended machine learning research with information systems and large-scale computing resources. It created tools such as TensorFlow, which allow neural networks to be used by the public, and multiple internal AI research projects, and aimed to create research opportunities in machine learning and natural language processing. It was merged into former Google sister company DeepMind to form Google DeepMind in April 2023.

DeepMind Technologies Limited, doing business as Google DeepMind, is a British-American artificial intelligence research laboratory which serves as a subsidiary of Google. Founded in the UK in 2010, it was acquired by Google in 2014. The company is based in London, with research centres in Canada, France, Germany, and the United States.

Fei-Fei Li is a China-born American computer scientist, known for establishing ImageNet, the dataset that enabled rapid advances in computer vision in the 2010s. She is the Sequoia Capital professor of computer science at Stanford University and former board director at Twitter. Li is a co-director of the Stanford Institute for Human-Centered Artificial Intelligence and a co-director of the Stanford Vision and Learning Lab. She served as the director of the Stanford Artificial Intelligence Laboratory from 2013 to 2018.

Multimodal learning, in the context of machine learning, is a type of deep learning using a combination of various modalities of data, such as text, audio, or images, in order to create a more robust model of the real-world phenomena in question. In contrast, singular modal learning would analyze text or imaging data independently. Multimodal machine learning combines these fundamentally different statistical analyses using specialized modeling strategies and algorithms, resulting in a model that comes closer to representing the real world.

OpenAI is an American artificial intelligence (AI) research organization founded in December 2015, researching artificial intelligence with the goal of developing "safe and beneficial" artificial general intelligence, which it defines as "highly autonomous systems that outperform humans at most economically valuable work". As one of the leading organizations of the AI boom, it has developed several large language models, advanced image generation models, and previously, released open-source models. Its release of ChatGPT has been credited with starting the AI boom.

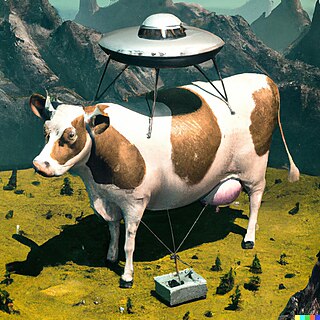

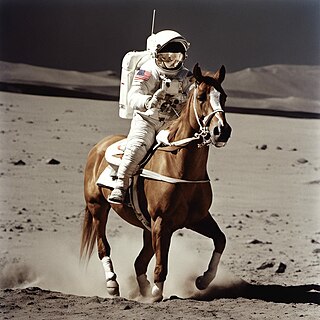

Artificial intelligence art is any visual artwork created through the use of artificial intelligence (AI) programs such as text-to-image models. AI art began to gain popularity in the mid- to late-20th century through the boom of artificial intelligence.

A transformer is a deep learning architecture developed by Google and based on the multi-head attention mechanism, proposed in the 2017 paper "Attention Is All You Need". Text is converted to numerical representations called tokens, and each token is converted into a vector by looking it up in a word embedding table. At each layer, each token is then contextualized within the scope of the context window with other (unmasked) tokens via a parallel multi-head attention mechanism, allowing the signal for key tokens to be amplified and less important tokens to be diminished. The transformer paper builds on the softmax-based attention mechanism proposed by Bahdanau et al. in 2014 for machine translation, and on the Fast Weight Controller, similar to a transformer, proposed in 1992.
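The lookup-then-contextualize step described above can be illustrated with a minimal sketch. It assumes a single attention head, no masking, and no learned projection matrices, so it is far simpler than a real transformer layer; the `embedding_table` values and the function names are hypothetical and chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn a list of raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors: high-scoring tokens
        # contribute more, low-scoring tokens contribute less.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Hypothetical toy embedding table: token id -> 2-dimensional vector.
embedding_table = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
tokens = [0, 1, 2]
x = [embedding_table[t] for t in tokens]

# Self-attention: every token attends to every token in the window.
contextualized = attention(x, x, x)
```

Each row of `contextualized` is a blend of all three token vectors, weighted by how strongly that token's query matches each key.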

This article presents a detailed timeline of events in the history of computing from 2020 to the present. For narratives explaining the overall developments, see the history of computing.

Prompt engineering is the process of structuring an instruction that can be interpreted and understood by a generative AI model. A prompt is natural language text describing the task that an AI should perform.

Stable Diffusion is a deep learning, text-to-image model released in 2022 based on diffusion techniques. It is considered to be a part of the ongoing artificial intelligence boom.

A text-to-image model is a machine learning model which takes an input natural language description and produces an image matching that description.

A text-to-video model is a machine learning model which takes a natural language description as input and produces one or more videos from it.

In the field of artificial intelligence (AI), a hallucination or artificial hallucination is a response generated by AI which contains false or misleading information presented as fact. This term draws a loose analogy with human psychology, where hallucination typically involves false percepts. However, there’s a key difference: AI hallucination is associated with unjustified responses or beliefs rather than perceptual experiences.

A large language model (LLM) is a computational model notable for its ability to achieve general-purpose language generation and other natural language processing tasks such as classification. Based on language models, LLMs acquire these abilities by learning statistical relationships from text documents during a computationally intensive self-supervised and semi-supervised training process. LLMs can be used for text generation, a form of generative AI, by taking an input text and repeatedly predicting the next token or word.
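The "repeatedly predicting the next token" loop can be sketched with a toy stand-in for the model. The example below assumes a bigram count table in place of a neural network and greedy decoding in place of sampling; the corpus and the function names `train_bigram` and `generate` are hypothetical and serve only to show the shape of autoregressive generation.

```python
from collections import Counter, defaultdict


def train_bigram(corpus_tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(corpus_tokens, corpus_tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, prompt, max_new_tokens):
    """Greedy autoregressive decoding: append the likeliest next token, repeat."""
    output = list(prompt)
    for _ in range(max_new_tokens):
        followers = counts.get(output[-1])
        if not followers:
            break  # no statistics for this token, so stop early
        next_token = followers.most_common(1)[0][0]
        output.append(next_token)  # the new token becomes part of the input
    return output


# Hypothetical toy corpus, split on whitespace instead of a real tokenizer.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)
generated = generate(model, ["the"], 3)
```

An actual LLM conditions on the whole context window rather than only the last token, but the outer loop has the same structure: predict, append, and feed the extended sequence back in.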

Generative artificial intelligence is artificial intelligence capable of generating text, images, videos, or other data using generative models, often in response to prompts. Generative AI models learn the patterns and structure of their input training data and then generate new data that has similar characteristics.

Gemini is a family of multimodal large language models developed by Google DeepMind, serving as the successor to LaMDA and PaLM 2. Comprising Gemini Ultra, Gemini Pro, and Gemini Nano, it was announced on December 6, 2023, positioned as a competitor to OpenAI's GPT-4. It powers the chatbot of the same name.

References

- Krithika, K. L. (December 20, 2023). "Google Unveils VideoPoet, a New LLM for Video Generation". Analytics India Magazine. Retrieved April 29, 2024.

- Kondratyuk, Dan; Yu, Lijun; Gu, Xiuye; Lezama, José; Huang, Jonathan; Hornung, Rachel; Adam, Hartwig; Akbari, Hassan; Alon, Yair; Birodkar, Vighnesh; Cheng, Yong; Chiu, Ming-Chang; Dillon, Josh; Essa, Irfan; Gupta, Agrim; Hahn, Meera; Hauth, Anja; Hendon, David; Martinez, Alonso; Minnen, David; Ross, David; Schindler, Grant; Sirotenko, Mikhail; Sohn, Kihyuk; Somandepalli, Krishna; Wang, Huisheng; Yan, Jimmy; Yang, Ming-Hsuan; Yang, Xuan; Seybold, Bryan; Jiang, Lu (December 21, 2023). "VideoPoet: A Large Language Model for Zero-Shot Video Generation". arXiv:2312.14125 [cs.CV].

- "Google has introduced VideoPOET breaking new ground in coherent video generation". Gizmochina. December 21, 2023.

- "VideoPoet". Google Research. Retrieved April 29, 2024.

- Franzen, Carl (December 20, 2023). "Google's new multimodal AI video generator VideoPoet looks incredible". VentureBeat.