Neuromorphic computing is an approach to computing that is inspired by the structure and function of the human brain. A neuromorphic computer or chip is any device that uses physical artificial neurons to perform computations. More recently, the term neuromorphic has been used to describe analog, digital, mixed-mode analog/digital VLSI, and software systems that implement models of neural systems. At the hardware level, neuromorphic computing can be realized with oxide-based memristors, spintronic memories, threshold switches, and transistors, among others. Software-based neuromorphic systems of spiking neural networks can be trained using error backpropagation, e.g., with Python-based frameworks such as snnTorch, or using canonical learning rules from the biological learning literature, e.g., with BindsNet.

Carver Andress Mead is an American scientist and engineer. He currently holds the position of Gordon and Betty Moore Professor Emeritus of Engineering and Applied Science at the California Institute of Technology (Caltech), having taught there for over 40 years.

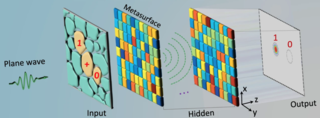

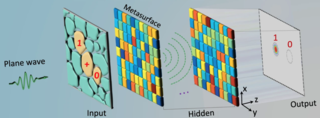

An optical neural network (ONN) is a physical implementation of an artificial neural network with optical components.

An image sensor or imager is a sensor that detects and conveys information used to form an image. It does so by converting the variable attenuation of light waves into signals, small bursts of current that convey the information. The waves can be light or other electromagnetic radiation. Image sensors are used in electronic imaging devices of both analog and digital types, which include digital cameras, camera modules, camera phones, optical mouse devices, medical imaging equipment, night vision equipment such as thermal imaging devices, radar, sonar, and others. As technology changes, electronic and digital imaging tends to replace chemical and analog imaging.

An active-pixel sensor (APS) is an image sensor, invented by Peter J.W. Noble in 1968, in which each pixel sensor unit cell has a photodetector and one or more active transistors. In a metal–oxide–semiconductor (MOS) active-pixel sensor, MOS field-effect transistors (MOSFETs) are used as amplifiers. There are different types of APS, including the early NMOS APS and the now much more common complementary MOS (CMOS) APS, also known as the CMOS sensor. CMOS sensors are used in digital camera technologies such as cell phone cameras, web cameras, most modern digital pocket cameras, most digital single-lens reflex cameras (DSLRs), mirrorless interchangeable-lens cameras (MILCs), and lensless imaging for cells.

In digital imaging, a color filter array (CFA), or color filter mosaic (CFM), is a mosaic of tiny color filters placed over the pixel sensors of an image sensor to capture color information.
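As a minimal illustration of how a mosaic of filters reduces each pixel to a single color sample, the sketch below applies one common CFA layout, the Bayer RGGB pattern. The choice of the Bayer pattern and the function name `bayer_mosaic` are assumptions made for illustration; the text above does not name a specific arrangement.

```python
def bayer_mosaic(rgb_image):
    """Keep one color channel per pixel, following an RGGB Bayer layout.

    rgb_image is a list of rows, each row a list of (r, g, b) tuples.
    The RGGB layout is an assumed example; other CFA layouts exist.
    """
    # Channel index kept at each position of the repeating 2x2 tile:
    # row 0: R G, row 1: G B
    pattern = [[0, 1], [1, 2]]
    return [
        [pixel[pattern[y % 2][x % 2]] for x, pixel in enumerate(row)]
        for y, row in enumerate(rgb_image)
    ]


# A uniform 2x2 image: every pixel is (r=10, g=20, b=30).
image = [[(10, 20, 30)] * 2 for _ in range(2)]
mosaic = bayer_mosaic(image)
print(mosaic)  # [[10, 20], [20, 30]]
```

Each sensor pixel records only the channel its filter passes; the missing channels are normally estimated afterwards by interpolation (demosaicing), which this sketch does not attempt.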

The asynchronous array of simple processors (AsAP) architecture comprises a 2-D array of reduced-complexity programmable processors with small scratchpad memories, interconnected by a reconfigurable mesh network. AsAP was developed by researchers in the VLSI Computation Laboratory (VCL) at the University of California, Davis, and achieves high performance and energy efficiency while using a relatively small circuit area. It was made in 2006.

Spiking neural networks (SNNs) are artificial neural networks (ANNs) that more closely mimic natural neural networks. In addition to neuronal and synaptic state, SNNs incorporate the concept of time into their operating model. The idea is that neurons in the SNN do not transmit information at each propagation cycle, but rather transmit information only when a membrane potential (an intrinsic quality of the neuron related to its membrane electrical charge) reaches a specific value, called the threshold. When the membrane potential reaches the threshold, the neuron fires and generates a signal that travels to other neurons, which in turn increase or decrease their potentials in response to this signal. A neuron model that fires at the moment of threshold crossing is also called a spiking neuron model.
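The threshold-and-fire behaviour described above can be sketched with a discrete-time leaky integrate-and-fire neuron. This is one simple spiking neuron model chosen for illustration, not the only one; the function name `simulate_lif` and the parameter values (`threshold`, `leak`, `v_reset`) are assumptions.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    At every step the membrane potential decays by the factor `leak`,
    then integrates the incoming current. When it reaches `threshold`,
    the neuron fires and the potential is reset to `v_reset`.
    """
    v = v_reset
    spike_times = []
    for t, current in enumerate(input_current):
        v = leak * v + current
        if v >= threshold:
            spike_times.append(t)
            v = v_reset
    return spike_times


# A constant input of 0.3 per step charges the neuron until it fires.
print(simulate_lif([0.3] * 10))  # [3, 7]
```

Between spikes the neuron emits nothing, which is the sense in which information is carried by the timing of threshold crossings rather than by an output at every propagation cycle.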

Object detection is a computer technology related to computer vision and image processing that deals with detecting instances of semantic objects of a certain class in digital images and videos. Well-researched domains of object detection include face detection and pedestrian detection. Object detection has applications in many areas of computer vision, including image retrieval and video surveillance.

SpiNNaker is a massively parallel, manycore supercomputer architecture designed by the Advanced Processor Technologies Research Group (APT) at the Department of Computer Science, University of Manchester. It is composed of 57,600 processing nodes, each with 18 ARM9 processors and 128 MB of mobile DDR SDRAM, totalling 1,036,800 cores and over 7 TB of RAM. The computing platform is based on spiking neural networks, useful in simulating the human brain.
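The totals quoted above follow directly from the per-node figures, as this short check shows. The conversion assumes 1 MB = 2^20 bytes and 1 TB = 2^40 bytes; with decimal units the memory total comes out slightly higher.

```python
NODES = 57_600            # processing nodes, from the text
CORES_PER_NODE = 18       # ARM9 processors per node
SDRAM_MB_PER_NODE = 128   # mobile DDR SDRAM per node

total_cores = NODES * CORES_PER_NODE
total_sdram_mb = NODES * SDRAM_MB_PER_NODE
# Assumption: binary units, so 1 TB = 1024 * 1024 MB.
total_sdram_tb = total_sdram_mb / (1024 * 1024)

print(total_cores)      # 1036800
print(total_sdram_tb)   # 7.03125
```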

A cognitive computer is a computer that hardwires artificial intelligence and machine learning algorithms into an integrated circuit that closely reproduces the behavior of the human brain. It generally adopts a neuromorphic engineering approach. Synonyms include neuromorphic chip and cognitive chip.

Donhee Ham is the John A. and Elizabeth S. Armstrong Professor of Engineering and Applied Sciences at Harvard University and a Fellow of Samsung Electronics.

The MNIST database is a large database of handwritten digits that is commonly used for training various image processing systems. The database is also widely used for training and testing in the field of machine learning. It was created by "re-mixing" the samples from NIST's original datasets. The creators felt that since NIST's training dataset was taken from American Census Bureau employees, while the testing dataset was taken from American high school students, it was not well-suited for machine learning experiments. Furthermore, the black and white images from NIST were normalized to fit into a 28x28 pixel bounding box and anti-aliased, which introduced grayscale levels.

A convolutional neural network (CNN) is a regularized type of feed-forward neural network that learns features by itself via filter (kernel) optimization. Vanishing and exploding gradients, seen during backpropagation in earlier neural networks, are mitigated by the regularization that comes from using shared weights over fewer connections. For example, each neuron in a fully connected layer would require 10,000 weights to process an image of 100 × 100 pixels. With cascaded convolution kernels, however, only 25 weights per kernel are required to process 5 × 5 tiles. Higher-layer features are extracted from wider context windows than lower-layer features.
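The weight counts in the example above, and the way stacked kernels widen the context window, can be checked with a few lines of arithmetic. This is a sketch under simplifying assumptions: single-channel input, no bias terms, stride 1, and no pooling. The function names are hypothetical.

```python
def dense_weights_per_neuron(height, width):
    """Weights one fully connected neuron needs to see every input pixel."""
    return height * width


def conv_kernel_weights(kernel_size):
    """Weights in one square kernel, shared across all image positions."""
    return kernel_size * kernel_size


def receptive_field(num_layers, kernel_size):
    """Input window width seen by one unit after stacking stride-1 kernels."""
    return 1 + num_layers * (kernel_size - 1)


print(dense_weights_per_neuron(100, 100))  # 10000
print(conv_kernel_weights(5))              # 25
print([receptive_field(n, 5) for n in (1, 2, 3)])  # [5, 9, 13]
```

Under these assumptions each additional 5 × 5 layer adds four pixels to the window, which is why higher layers draw on wider context than lower ones.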

In physics and probability, the filters, random fields, and maximum entropy (FRAME) model is a Markov random field model of stationary spatial processes in which the energy function is the sum of translation-invariant potential functions that are one-dimensional non-linear transformations of linear filter responses. The FRAME model was originally developed by Song-Chun Zhu, Ying Nian Wu, and David Mumford for modeling stochastic texture patterns such as grass, tree leaves, brick walls, and water waves. The model is the maximum entropy distribution that reproduces the observed marginal histograms of responses from a bank of filters, where, for each filter tuned to a specific scale and orientation, the marginal histogram is pooled over all the pixels in the image domain. The FRAME model has also been proved equivalent to the micro-canonical ensemble, which was named the Julesz ensemble. A Gibbs sampler is used to synthesize texture images by drawing samples from the FRAME model.
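Written out, the verbal description above corresponds to a Gibbs distribution of roughly the following form. The notation is an assumption introduced here for illustration: $\mathbf{I}$ is the image on domain $\mathcal{D}$, $F_1, \dots, F_K$ are the linear filters, $\lambda_k$ is the one-dimensional potential function for filter $k$, and $Z(\Lambda)$ is the normalizing constant. Sign conventions for the potentials vary between presentations.

$$
p(\mathbf{I}; \Lambda) = \frac{1}{Z(\Lambda)} \exp\left\{ -\sum_{k=1}^{K} \sum_{x \in \mathcal{D}} \lambda_k\big( (F_k * \mathbf{I})(x) \big) \right\}, \qquad \Lambda = (\lambda_1, \dots, \lambda_K)
$$

The inner sum over $x$ applies the same potential at every pixel, which is the translation invariance mentioned above, and each $\lambda_k$ acts only on the scalar response of filter $F_k$.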

Video super-resolution (VSR) is the process of generating high-resolution video frames from given low-resolution video frames. Unlike single-image super-resolution (SISR), the goal is not only to restore fine details while preserving coarse ones, but also to preserve motion consistency.

Edoardo Charbon is a Swiss electrical engineer. He is a professor of quantum engineering at EPFL and the head of the Laboratory of Advanced Quantum Architecture (AQUA) at the School of Engineering.

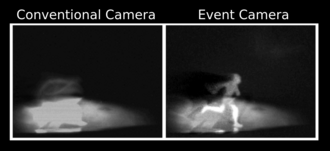

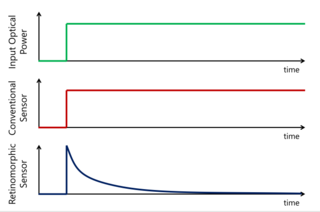

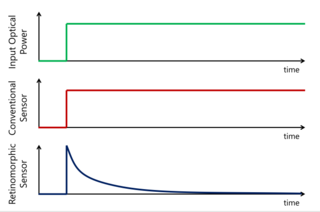

Retinomorphic sensors are a type of event-driven optical sensor that produces a signal in response to changes in light intensity, rather than to light intensity itself. This is in contrast to conventional optical sensors, such as charge-coupled device (CCD) or complementary metal–oxide–semiconductor (CMOS) based sensors, which output a signal that increases with increasing light intensity. Because they respond only to changes in the scene, such as movement, it is hoped that retinomorphic sensors will enable faster tracking of moving objects than conventional image sensors. They have potential applications in autonomous vehicles, robotics, and neuromorphic engineering.
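The difference between responding to intensity and responding to changes in intensity can be illustrated with a toy model of a single pixel sampled over time. This is a simplified software sketch, not a description of any real device; the function name `change_response` and the `threshold` value are assumptions.

```python
def change_response(intensities, threshold=0.1):
    """Emit +1, -1 or 0 per time step based on the change in light intensity.

    A conventional pixel would report the intensity values themselves;
    this toy event-driven pixel reports only whether the intensity rose
    or fell by more than `threshold` since the previous sample.
    """
    events = []
    previous = intensities[0]
    for current in intensities[1:]:
        delta = current - previous
        if delta > threshold:
            events.append(1)      # brightness increased
        elif delta < -threshold:
            events.append(-1)     # brightness decreased
        else:
            events.append(0)      # no significant change, no signal
        previous = current
    return events


print(change_response([1.0, 1.0, 2.0, 2.0, 0.5]))  # [0, 1, 0, -1]
print(change_response([5.0, 5.0, 5.0]))            # [0, 0]
```

A static scene produces no output in this model no matter how bright it is, so downstream processing only has to handle the samples where something changed.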

Small object detection is a particular case of object detection in which various techniques are employed to detect small objects in digital images and videos. "Small objects" are objects that have a small pixel footprint in the input image. In areas such as aerial imagery, state-of-the-art object detection techniques underperform because the objects of interest are small.

Peter L. P. Dillon is an American physicist and the inventor of integral color image sensors and single-chip color video cameras. The curator of the Technology Collection at the George Eastman Museum, Todd Gustavson, has stated that "the color sensor technology developed by Peter Dillon has revolutionized all forms of color photography. These color sensors are now ubiquitous in products such as smart phone cameras, digital cameras and camcorders, digital cinema cameras, medical cameras, automobile cameras, and drones". Dillon joined Kodak Research Labs in 1959 and retired from Kodak in 1991. He lives in Pittsford, New York.