Quality of service (QoS) is the description or measurement of the overall performance of a service, such as a telephony or computer network or a cloud computing service, particularly the performance seen by the users of the network. To quantitatively measure quality of service, several related aspects of the network service are often considered, such as packet loss, bit rate, throughput, transmission delay, availability, jitter, etc.

Network throughput refers to the rate of successful message delivery over a communication channel, such as Ethernet or packet radio, in a communication network.The data that these messages contain may be delivered over physical or logical links, or through network nodes. Throughput is usually measured in bits per second, and sometimes in data packets per second or data packets per time slot.

In software quality assurance, performance testing is in general a testing practice performed to determine how a system performs in terms of responsiveness and stability under a particular workload. It can also serve to investigate, measure, validate or verify other quality attributes of the system, such as scalability, reliability and resource usage.

In computer networking, the transport layer is a conceptual division of methods in the layered architecture of protocols in the network stack in the Internet protocol suite and the OSI model. The protocols of this layer provide end-to-end communication services for applications. It provides services such as connection-oriented communication, reliability, flow control, and multiplexing.

Network congestion in data networking and queueing theory is the reduced quality of service that occurs when a network node or link is carrying more data than it can handle. Typical effects include queueing delay, packet loss or the blocking of new connections. A consequence of congestion is that an incremental increase in offered load leads either only to a small increase or even a decrease in network throughput.

In data communications, flow control is the process of managing the rate of data transmission between two nodes to prevent a fast sender from overwhelming a slow receiver. It provides a mechanism for the receiver to control the transmission speed, so that the receiving node is not overwhelmed with data from transmitting node. Flow control should be distinguished from congestion control, which is used for controlling the flow of data when congestion has actually occurred. Flow control mechanisms can be classified by whether or not the receiving node sends feedback to the sending node.

Transmission Control Protocol (TCP) uses a network congestion-avoidance algorithm that includes various aspects of an additive increase/multiplicative decrease (AIMD) scheme, along with other schemes including slow start and congestion window (CWND), to achieve congestion avoidance. The TCP congestion-avoidance algorithm is the primary basis for congestion control in the Internet. Per the end-to-end principle, congestion control is largely a function of internet hosts, not the network itself. There are several variations and versions of the algorithm implemented in protocol stacks of operating systems of computers that connect to the Internet.

Fair queuing is a family of scheduling algorithms used in some process and network schedulers. The algorithm is designed to achieve fairness when a limited resource is shared, for example to prevent flows with large packets or processes that generate small jobs from consuming more throughput or CPU time than other flows or processes.

A computer network is a set of computers sharing resources located on or provided by network nodes. The computers use common communication protocols over digital interconnections to communicate with each other. These interconnections are made up of telecommunication network technologies, based on physically wired, optical, and wireless radio-frequency methods that may be arranged in a variety of network topologies.

In communication networks, multiplexing and the division of scarce resources, max-min fairness is said to be achieved by an allocation if and only if the allocation is feasible and an attempt to increase the allocation of any participant necessarily results in the decrease in the allocation of some other participant with an equal or smaller allocation.

Weighted fair queueing (WFQ) is a network scheduling algorithm. WFQ is both a packet-based implementation of the generalized processor sharing (GPS) policy, and a natural extension of fair queuing (FQ). Whereas FQ shares the link's capacity in equal subparts, WFQ allows schedulers to specify, for each flow, which fraction of the capacity will be given.

Three-phase traffic theory is a theory of traffic flow developed by Boris Kerner between 1996 and 2002. It focuses mainly on the explanation of the physics of traffic breakdown and resulting congested traffic on highways. Kerner describes three phases of traffic, while the classical theories based on the fundamental diagram of traffic flow have two phases: free flow and congested traffic. Kerner’s theory divides congested traffic into two distinct phases, synchronized flow and wide moving jam, bringing the total number of phases to three:

A wide variety of different wireless data technologies exist, some in direct competition with one another, others designed for specific applications. Wireless technologies can be evaluated by a variety of different metrics of which some are described in this entry.

In computing, computer performance is the amount of useful work accomplished by a computer system. Outside of specific contexts, computer performance is estimated in terms of accuracy, efficiency and speed of executing computer program instructions. When it comes to high computer performance, one or more of the following factors might be involved:

In a communication network, sometimes a max-min fairness of the network is desired, usually opposed to the basic first-come first-served policy. With max-min fairness, data flow between any two nodes is maximized, but only at the cost of more or equally expensive data flows. To put it another way, in case of network congestion any data flow is only impacted by smaller or equal flows.

In software engineering, a bottleneck occurs when the capacity of an application or a computer system is limited by a single component, like the neck of a bottle slowing down the overall water flow. The bottleneck has the lowest throughput of all parts of the transaction path.

In packet switching networks, traffic flow, packet flow or network flow is a sequence of packets from a source computer to a destination, which may be another host, a multicast group, or a broadcast domain. RFC 2722 defines traffic flow as "an artificial logical equivalent to a call or connection." RFC 3697 defines traffic flow as "a sequence of packets sent from a particular source to a particular unicast, anycast, or multicast destination that the source desires to label as a flow. A flow could consist of all packets in a specific transport connection or a media stream. However, a flow is not necessarily 1:1 mapped to a transport connection." Flow is also defined in RFC 3917 as "a set of IP packets passing an observation point in the network during a certain time interval." Packet flow temporal efficiency can be affected by one-way delay (OWD) that is described as a combination of the following components:

A single point of failure (SPOF) is a part of a system that, if it fails, will stop the entire system from working. SPOFs are undesirable in any system with a goal of high availability or reliability, be it a business practice, software application, or other industrial system.

Bottleneck literally refers to the narrowed portion (neck) of a bottle near its opening, which limit the rate of outflow, and may describe any object of a similar shape. The literal neck of a bottle was originally used to play what is now known as slide guitar.

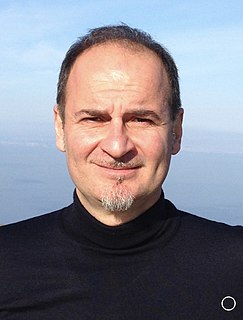

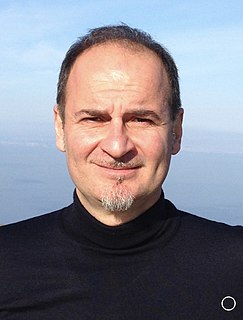

Saverio Mascolo is an Italian information engineer, academic and researcher. He is the former Head of the Department of Electrical Engineering and Information Science and the professor of Automatic Control at Department of Ingegneria Elettrica e dell'Informazione (DEI) at Politecnico di Bari, Italy.