Computer simulation modeling

Modeling of computer experiments typically uses a Bayesian framework. Bayesian statistics is an interpretation of the field of statistics where all evidence about the true state of the world is explicitly expressed in the form of probabilities. In the realm of computer experiments, the Bayesian interpretation implies that we must form a prior distribution that represents our prior belief about the structure of the computer model. The use of this philosophy for computer experiments started in the 1980s and is nicely summarized by Sacks et al. (1989). While the Bayesian approach is widely used, frequentist approaches have also been discussed recently.

The basic idea of this framework is to model the computer simulation as an unknown function of a set of inputs. The computer simulation is implemented as a piece of computer code that can be evaluated to produce a collection of outputs. Examples of inputs to these simulations are coefficients in the underlying model, initial conditions and forcing functions. It is natural to see the simulation as a deterministic function that maps these inputs into a collection of outputs. On the basis of seeing our simulator this way, it is common to refer to the collection of inputs as $x$, the computer simulation itself as $f$, and the resulting output as $f(x)$. Both $x$ and $f(x)$ are vector quantities, and they can be very large collections of values, often indexed by space, or by time, or by both space and time.

Although $f$ is known in principle, in practice this is not the case. Many simulators comprise tens of thousands of lines of high-level computer code, which is not accessible to intuition. For some simulations, such as climate models, evaluation of the output for a single set of inputs can require millions of computer hours.
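The view of a simulator as a deterministic map from inputs to outputs can be illustrated with a minimal sketch. The function `simulator` below is a hypothetical toy (a damped oscillator integrated with explicit Euler steps), not a model from the literature; its inputs stand in for a model coefficient and an initial condition, and its output is a vector indexed by time.

```python
import numpy as np


def simulator(x, n_steps=50, dt=0.1):
    """Hypothetical toy simulator f: maps inputs x to a time-indexed output f(x).

    x[0] is a damping coefficient (a coefficient of the underlying model),
    x[1] is the initial displacement (an initial condition).
    """
    damping, y0 = x
    y, v = float(y0), 0.0
    out = np.empty(n_steps)
    for i in range(n_steps):
        a = -y - damping * v              # acceleration of a damped oscillator
        y, v = y + dt * v, v + dt * a     # explicit Euler update
        out[i] = y
    return out


x = np.array([0.3, 1.0])   # one setting of the inputs
fx = simulator(x)          # f(x): a vector of 50 values indexed by time
fx_again = simulator(x)    # the same inputs reproduce the same output
```

Because the code is deterministic, repeated runs at the same inputs carry no new information; the uncertainty about $f$ comes only from not having evaluated it at other inputs.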

Gaussian process prior

The typical model for a computer code output is a Gaussian process. For notational simplicity, assume $f(x)$ is a scalar. Owing to the Bayesian framework, we fix our belief that the function $f$ follows a Gaussian process, $f \sim \operatorname{GP}(m(\cdot), C(\cdot, \cdot))$, where $m$ is the mean function and $C$ is the covariance function. Popular mean functions are low-order polynomials, and a popular covariance function is the Matérn covariance, which includes both the exponential ($\nu = 1/2$) and Gaussian (as $\nu \rightarrow \infty$) covariances.
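As a rough illustration of such a prior, the following sketch draws sample functions from a Gaussian process with a linear mean and a Matérn covariance, using NumPy only. The function names (`matern_cov`, `sample_gp_prior`, `linear_mean`), the length scale, the variance, and the jitter term are all assumptions made for this example. Only the closed-form cases $\nu = 1/2$ (exponential), $3/2$, $5/2$ and the Gaussian limit $\nu \rightarrow \infty$ are implemented.

```python
import numpy as np


def matern_cov(x1, x2, nu=1.5, length_scale=1.0, variance=1.0):
    """Matern covariance between 1-D input arrays x1 and x2 (closed-form cases only)."""
    d = np.abs(x1[:, None] - x2[None, :]) / length_scale
    if nu == 0.5:                       # exponential covariance
        k = np.exp(-d)
    elif nu == 1.5:
        s = np.sqrt(3.0) * d
        k = (1.0 + s) * np.exp(-s)
    elif nu == 2.5:
        s = np.sqrt(5.0) * d
        k = (1.0 + s + s**2 / 3.0) * np.exp(-s)
    elif np.isinf(nu):                  # Gaussian (squared-exponential) limit
        k = np.exp(-0.5 * d**2)
    else:
        raise ValueError("only nu in {0.5, 1.5, 2.5, inf} is implemented here")
    return variance * k


def linear_mean(x):
    """Hypothetical low-order polynomial mean function m(x) = 1 + 2x."""
    return 1.0 + 2.0 * x


def sample_gp_prior(x, mean_fn, cov_fn, n_draws=3, seed=0, jitter=1e-8):
    """Draw n_draws functions from GP(m, C), evaluated at the points x."""
    rng = np.random.default_rng(seed)
    m = mean_fn(x)
    C = cov_fn(x, x) + jitter * np.eye(len(x))   # jitter aids numerical stability
    L = np.linalg.cholesky(C)                    # C = L @ L.T
    z = rng.standard_normal((len(x), n_draws))
    return m[:, None] + L @ z                    # each column is one prior draw


x = np.linspace(0.0, 1.0, 30)
draws = sample_gp_prior(
    x, linear_mean, lambda a, b: matern_cov(a, b, nu=2.5, length_scale=0.3)
)
```

Smaller values of $\nu$ give rougher sample paths and larger values give smoother ones, which is one reason the Matérn family is a convenient default when the smoothness of the simulator output is uncertain.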

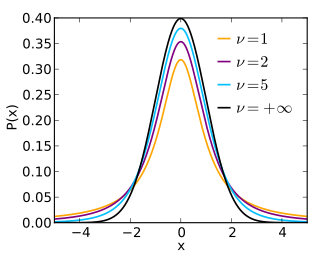

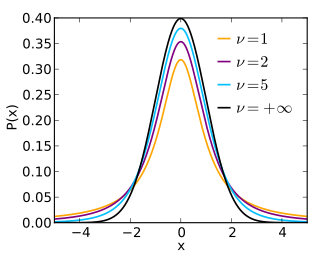
