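In statistical mechanics and mathematics, a Boltzmann distribution is a probability distribution or probability measure that gives the probability that a system will be in a certain state as a function of that state's energy and the temperature of the system. The distribution is expressed in the form:

$$p_i \propto \exp\left(-\frac{\varepsilon_i}{kT}\right)$$

where $p_i$ is the probability of the system being in state $i$, $\varepsilon_i$ is the energy of that state, $k$ is the Boltzmann constant, and $T$ is the thermodynamic temperature.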
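As an illustration, the following minimal sketch computes normalized Boltzmann probabilities, assuming the standard form in which each state's weight is exp(-E/kT). The function name `boltzmann_probabilities` and the three-level system are hypothetical, chosen only for this example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_probabilities(energies, temperature):
    """Return normalized Boltzmann probabilities for state energies in joules."""
    beta = 1.0 / (K_B * temperature)
    e_min = min(energies)  # shift by the lowest energy for numerical stability
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z = sum(weights)  # normalization constant (partition function up to a factor)
    return [w / z for w in weights]


# Hypothetical three-level system with level spacing k_B * 300 K, held at 300 K
levels = [0.0, K_B * 300.0, 2.0 * K_B * 300.0]
probs = boltzmann_probabilities(levels, 300.0)
```

With these assumed values, each level is less likely than the one below it by a factor of exp(-1).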

Entropy is a scientific concept as well as a measurable physical property that is most commonly associated with a state of disorder, randomness, or uncertainty. The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics, and to the principles of information theory. It has found far-ranging applications in chemistry and physics, in biological systems and their relation to life, in cosmology, economics, sociology, weather science, climate change, and information systems including the transmission of information in telecommunication.

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$:

$$H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x)$$
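A short sketch of this average, assuming the usual Shannon form (the negative sum of p log p over outcomes) and the convention that zero-probability outcomes contribute nothing. The name `shannon_entropy` and the coin examples are illustrative only.

```python
import math


def shannon_entropy(probabilities, base=2.0):
    """Shannon entropy of a discrete distribution; base 2 gives bits."""
    total = sum(probabilities)
    if not math.isclose(total, 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped, following the convention 0 * log 0 = 0
    return -sum(p * math.log(p, base) for p in probabilities if p > 0.0)


fair_coin = shannon_entropy([0.5, 0.5])    # maximal uncertainty for two outcomes
biased_coin = shannon_entropy([0.9, 0.1])  # a more predictable coin
```

The fair coin carries one bit of entropy, while the biased coin carries less because its outcome is easier to guess.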

In physics, statistical mechanics is a mathematical framework that applies statistical methods and probability theory to large assemblies of microscopic entities. It does not assume or postulate any natural laws, but explains the macroscopic behavior of nature from the behavior of such ensembles.

In physics, specifically statistical mechanics, an ensemble is an idealization consisting of a large number of virtual copies of a system, considered all at once, each of which represents a possible state that the real system might be in. In other words, a statistical ensemble is a set of systems of particles used in statistical mechanics to describe a single system. The concept of an ensemble was introduced by J. Willard Gibbs in 1902.

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with largest entropy, in the context of precisely stated prior data.
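One classic illustration is a die whose only known property is its average roll. The sketch below assumes the well-known result that, under a mean constraint, the maximum-entropy distribution has the exponential form p_i proportional to exp(lambda * i), and finds lambda by bisection. The function name `maxent_die` and the chosen target means are hypothetical.

```python
import math


def maxent_die(target_mean, faces=(1, 2, 3, 4, 5, 6), tol=1e-12):
    """Maximum-entropy distribution over `faces` with a prescribed mean.

    Assumes target_mean lies strictly between min(faces) and max(faces).
    """

    def mean_for(lam):
        weights = [math.exp(lam * f) for f in faces]
        z = sum(weights)
        return sum(f * w for f, w in zip(faces, weights)) / z

    # The mean increases monotonically with lam, so bisection converges
    lo, hi = -50.0, 50.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if mean_for(mid) < target_mean:
            lo = mid
        else:
            hi = mid

    lam = 0.5 * (lo + hi)
    weights = [math.exp(lam * f) for f in faces]
    z = sum(weights)
    return [w / z for w in weights]


uniform = maxent_die(3.5)  # no information beyond the fair-die mean
loaded = maxent_die(4.5)   # mean shifted toward the high faces
```

When the constraint matches the fair-die mean of 3.5, the result is the uniform distribution; a higher mean tilts probability smoothly toward larger faces without adding any other structure.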

The fluctuation theorem (FT), which originated from statistical mechanics, deals with the relative probability that the entropy of a system which is currently away from thermodynamic equilibrium will increase or decrease over a given amount of time. While the second law of thermodynamics predicts that the entropy of an isolated system should tend to increase until it reaches equilibrium, it became apparent after the discovery of statistical mechanics that the second law is only a statistical one, suggesting that there should always be some nonzero probability that the entropy of an isolated system might spontaneously decrease; the fluctuation theorem precisely quantifies this probability.

In information theory and statistics, negentropy is used as a measure of distance to normality. The concept and phrase "negative entropy" were introduced by Erwin Schrödinger in his 1944 popular-science book What is Life? Léon Brillouin later shortened the phrase to negentropy. In 1974, Albert Szent-Györgyi proposed replacing the term negentropy with syntropy. That term may have originated in the 1940s with the Italian mathematician Luigi Fantappiè, who tried to construct a unified theory of biology and physics. Buckminster Fuller tried to popularize this usage, but negentropy remains common.

In classical statistical mechanics, the H-theorem, introduced by Ludwig Boltzmann in 1872, describes the tendency of the quantity H to decrease in a nearly ideal gas of molecules. As this quantity H was meant to represent the entropy of thermodynamics, the H-theorem was an early demonstration of the power of statistical mechanics, as it claimed to derive the second law of thermodynamics (a statement about fundamentally irreversible processes) from reversible microscopic mechanics. It is thought to prove the second law of thermodynamics, albeit under the assumption of low-entropy initial conditions.

In statistical mechanics, the grand canonical ensemble is the statistical ensemble that is used to represent the possible states of a mechanical system of particles that are in thermodynamic equilibrium with a reservoir. The system is said to be open in the sense that the system can exchange energy and particles with a reservoir, so that various possible states of the system can differ in both their total energy and total number of particles. The system's volume, shape, and other external coordinates are kept the same in all possible states of the system.
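To make the role of particle exchange concrete, the sketch below treats a single orbital of energy `energy` that can hold between 0 and `max_n` particles, and weights each occupancy n by exp(-n (energy - mu) / kT). The chemical potential `mu`, the function name `mean_occupancy`, and the numerical values are assumptions made for this example; with `max_n=1` the result reduces to the familiar Fermi-Dirac occupancy.

```python
import math


def mean_occupancy(energy, mu, kT, max_n):
    """Average particle number in one orbital coupled to a reservoir."""
    # Grand canonical weight of the state holding n particles
    weights = [math.exp(-n * (energy - mu) / kT) for n in range(max_n + 1)]
    xi = sum(weights)  # grand partition function of this single orbital
    return sum(n * w for n, w in enumerate(weights)) / xi


# Hypothetical values in arbitrary energy units
half_filled = mean_occupancy(energy=1.0, mu=1.0, kT=0.5, max_n=1)
above_mu = mean_occupancy(energy=2.0, mu=1.0, kT=0.5, max_n=1)
```

An orbital sitting exactly at the chemical potential is half filled on average, and orbitals above it are progressively emptier.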

In statistical mechanics, the microcanonical ensemble is a statistical ensemble that represents the possible states of a mechanical system whose total energy is exactly specified. The system is assumed to be isolated in the sense that it cannot exchange energy or particles with its environment, so that the energy of the system does not change with time.

In statistical mechanics, a canonical ensemble is the statistical ensemble that represents the possible states of a mechanical system in thermal equilibrium with a heat bath at a fixed temperature. The system can exchange energy with the heat bath, so that the states of the system will differ in total energy.
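Because the system's energy is not fixed, it has both a mean and a spread. The sketch below estimates both for a hypothetical two-level system, assuming Boltzmann weights exp(-E/kT) and energy units in which the level spacing is 1. The name `canonical_energy_stats` is illustrative.

```python
import math


def canonical_energy_stats(energies, kT):
    """Return (mean, variance) of the energy at thermal energy kT."""
    e_min = min(energies)  # shift for numerical stability; probabilities are unchanged
    weights = [math.exp(-(e - e_min) / kT) for e in energies]
    z = sum(weights)
    probs = [w / z for w in weights]
    mean = sum(p * e for p, e in zip(probs, energies))
    variance = sum(p * (e - mean) ** 2 for p, e in zip(probs, energies))
    return mean, variance


two_level = [0.0, 1.0]  # hypothetical ground and excited state
mean_e, var_e = canonical_energy_stats(two_level, kT=1.0)
```

A nonzero variance is what distinguishes this ensemble from one in which the total energy is exactly specified.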

Equilibrium thermodynamics is the systematic study of transformations of matter and energy in systems in terms of a concept called thermodynamic equilibrium. The word equilibrium implies a state of balance. Equilibrium thermodynamics, in its origins, derives from analysis of the Carnot cycle. Here, typically a system, such as a cylinder of gas, initially in its own state of internal thermodynamic equilibrium, is set out of balance via heat input from a combustion reaction. Then, through a series of steps, as the system settles into its final equilibrium state, work is extracted.

In physics, maximum entropy thermodynamics views equilibrium thermodynamics and statistical mechanics as inference processes. More specifically, MaxEnt applies inference techniques rooted in Shannon information theory, Bayesian probability, and the principle of maximum entropy. These techniques are relevant to any situation requiring prediction from incomplete or insufficient data. MaxEnt thermodynamics began with two papers by Edwin T. Jaynes published in the 1957 Physical Review.

In statistical mechanics, a microstate is a specific microscopic configuration of a thermodynamic system that the system may occupy with a certain probability in the course of its thermal fluctuations. In contrast, the macrostate of a system refers to its macroscopic properties, such as its temperature, pressure, volume and density. Treatments on statistical mechanics define a macrostate as follows: a particular set of values of energy, the number of particles, and the volume of an isolated thermodynamic system is said to specify a particular macrostate of it. In this description, microstates appear as different possible ways the system can achieve a particular macrostate.
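A toy model can make the distinction concrete. The sketch below is not a thermodynamic system; it assumes a row of coins in which a microstate is the full sequence of heads and tails and the macrostate is only the number of heads. The function name `multiplicities` is hypothetical.

```python
from collections import Counter
from itertools import product
import math


def multiplicities(n_coins):
    """Map each macrostate (number of heads) to its count of microstates."""
    # Enumerate every microstate: 1 = heads, 0 = tails
    counts = Counter(sum(state) for state in product((0, 1), repeat=n_coins))
    return dict(sorted(counts.items()))


table = multiplicities(4)
# Cross-check against the binomial coefficient C(4, k)
matches_binomial = all(table[k] == math.comb(4, k) for k in table)
```

For four coins, the macrostate "two heads" can be realized by six different microstates, while "four heads" can be realized by only one.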

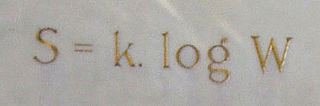

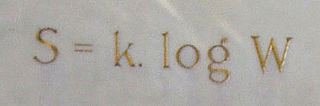

The mathematical expressions for thermodynamic entropy in the statistical thermodynamics formulation established by Ludwig Boltzmann and J. Willard Gibbs in the 1870s are similar to those for the information entropy of Claude Shannon and Ralph Hartley, developed in the 1940s.

The concept of entropy developed in response to the observation that a certain amount of functional energy released from combustion reactions is always lost to dissipation or friction and is thus not transformed into useful work. Early heat-powered engines such as Thomas Savery's (1698), the Newcomen engine (1712) and the Cugnot steam tricycle (1769) were inefficient, converting less than two percent of the input energy into useful work output; a great deal of useful energy was dissipated or lost. Over the next two centuries, physicists investigated this puzzle of lost energy; the result was the concept of entropy.

The concept of entropy was first developed by German physicist Rudolf Clausius in the mid-nineteenth century as a thermodynamic property that predicts that certain spontaneous processes are irreversible or impossible. In statistical mechanics, entropy is formulated as a statistical property using probability theory. The statistical entropy perspective was introduced in 1870 by Austrian physicist Ludwig Boltzmann, who established a new field of physics that provided the descriptive linkage between the macroscopic observation of nature and the microscopic view based on the rigorous treatment of large ensembles of microstates that constitute thermodynamic systems.

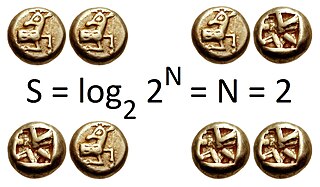

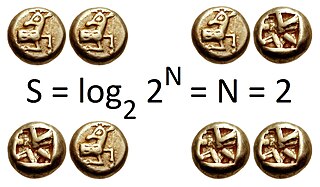

In statistical mechanics, Boltzmann's equation is a probability equation relating the entropy $S$, also written as $S_\mathrm{B}$, of an ideal gas to the multiplicity (commonly denoted $\Omega$ or $W$), the number of real microstates corresponding to the gas's macrostate:

$$S = k_\mathrm{B} \ln W$$
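A brief numerical sketch, assuming the standard form S = k_B ln W with W the multiplicity. The function name `boltzmann_entropy` and the example multiplicity (the number of ways to choose 50 items out of 100) are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_entropy(multiplicity):
    """Entropy in J/K of a macrostate realized by `multiplicity` microstates."""
    if multiplicity < 1:
        raise ValueError("multiplicity must be at least 1")
    return K_B * math.log(multiplicity)


w = math.comb(100, 50)  # hypothetical multiplicity of one macrostate
s_single = boltzmann_entropy(w)
# Two independent copies have multiplicity w * w, so their entropies add
s_pair = boltzmann_entropy(w * w)
```

The logarithm is what makes entropy additive: multiplying multiplicities for independent systems corresponds to summing their entropies.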

In network science, the network entropy is a disorder measure derived from information theory to describe the level of randomness and the amount of information encoded in a graph. It is a relevant metric to quantitatively characterize real complex networks and can also be used to quantify network complexity.
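Several definitions of network entropy are in use. The sketch below illustrates just one of them, assuming the Shannon entropy of the degree distribution, with the graph given as an undirected edge list. The function name `degree_distribution_entropy` and the two small graphs are hypothetical.

```python
import math
from collections import Counter


def degree_distribution_entropy(edges):
    """Shannon entropy (in nats) of the degree distribution of an undirected graph.

    Nodes that appear in no edge are not represented in an edge list and are ignored.
    """
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    n = len(degree)
    # Fraction of nodes having each degree value
    degree_counts = Counter(degree.values())
    return -sum((c / n) * math.log(c / n) for c in degree_counts.values())


ring = [(0, 1), (1, 2), (2, 3), (3, 0)]  # every node has degree 2
star = [(0, 1), (0, 2), (0, 3), (0, 4)]  # one hub and four leaves

ring_entropy = degree_distribution_entropy(ring)
star_entropy = degree_distribution_entropy(star)
```

Under this particular measure, the ring has zero entropy because all nodes share one degree, while the star has positive entropy because its degrees are heterogeneous.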