A system on a chip or system-on-chip (SoC) is an integrated circuit that integrates most or all components of a computer or other electronic system. These components almost always include an on-chip central processing unit (CPU), memory interfaces, input/output devices, input/output interfaces, and secondary storage interfaces, often alongside other components such as radio modems and a graphics processing unit (GPU), all on a single substrate or microchip. SoCs may contain digital, analog, mixed-signal, and often radio-frequency signal processing functions.

Power management is a feature of some electrical appliances, especially copiers, computers, computer CPUs, computer GPUs and computer peripherals such as monitors and printers, that turns off the power or switches the system to a low-power state when inactive. In computing this is known as PC power management and is built around a standard called ACPI, which supersedes APM. All recent computers have ACPI support.

In computer science and mathematical optimization, a metaheuristic is a higher-level procedure or heuristic designed to find, generate, tune, or select a heuristic that may provide a sufficiently good solution to an optimization problem or a machine learning problem, especially with incomplete or imperfect information or limited computation capacity. Metaheuristics sample a subset of a solution set that is otherwise too large to be completely enumerated or otherwise explored. Metaheuristics may make relatively few assumptions about the optimization problem being solved and so may be usable for a variety of problems.
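The sampling idea can be illustrated with simulated annealing, one well-known metaheuristic. The following is a minimal sketch, not a reference implementation: the function names, the cooling schedule, and the toy cost function are all assumptions chosen for illustration.

```python
import math
import random

def simulated_annealing(cost, neighbor, start, steps=5000, t0=1.0, cooling=0.999, seed=0):
    """Minimise `cost` by sampling candidate solutions instead of enumerating them."""
    rng = random.Random(seed)
    current, current_cost = start, cost(start)
    best, best_cost = current, current_cost
    temperature = t0
    for _ in range(steps):
        candidate = neighbor(current, rng)
        candidate_cost = cost(candidate)
        delta = candidate_cost - current_cost
        # Always accept improvements; accept worse moves with a probability
        # that shrinks as the temperature falls.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, current_cost = candidate, candidate_cost
            if current_cost < best_cost:
                best, best_cost = current, current_cost
        temperature *= cooling
    return best, best_cost

# Hypothetical toy problem: find x minimising (x - 3)^2.
def toy_cost(x):
    return (x - 3.0) ** 2

def toy_neighbor(x, rng):
    # Propose a nearby solution by a small random step.
    return x + rng.uniform(-0.5, 0.5)

best_x, best_cost = simulated_annealing(toy_cost, toy_neighbor, start=-10.0)
```

The procedure only needs a cost function and a way to propose neighbouring solutions, which reflects how few assumptions a metaheuristic makes about the problem.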

A multi-core processor is a microprocessor on a single integrated circuit with two or more separate processing units, called cores, each of which reads and executes program instructions. The instructions are ordinary CPU instructions but the single processor can run instructions on separate cores at the same time, increasing overall speed for programs that support multithreading or other parallel computing techniques. Manufacturers typically integrate the cores onto a single integrated circuit die or onto multiple dies in a single chip package. The microprocessors currently used in almost all personal computers are multi-core.

EEMBC, the Embedded Microprocessor Benchmark Consortium, is a non-profit, member-funded organization formed in 1997, focused on the creation of standard benchmarks for the hardware and software used in embedded systems. The goal of its members is to make EEMBC benchmarks an industry standard for evaluating the capabilities of embedded processors, compilers, and the associated embedded system implementations, according to objective, clearly defined, application-based criteria. EEMBC members may contribute to the development of benchmarks, vote at various stages before public distribution, and accelerate testing of their platforms through early access to benchmarks and associated specifications.

Thread Level Speculation (TLS), also known as Speculative Multithreading or Speculative Parallelization, is a technique to speculatively execute a section of computer code that is anticipated to be executed later, in parallel with the normal execution, on a separate independent thread. Such a speculative thread may need to make assumptions about the values of input variables. If these prove to be invalid, then the portions of the speculative thread that rely on these input variables will need to be discarded and squashed. If the assumptions are correct, the program can complete in a shorter time, provided the thread could be scheduled efficiently.
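The commit-or-squash control flow can be sketched in software. The example below is an illustration under stated assumptions: `run_with_speculation`, `producer`, and `consumer` are hypothetical names, the consumer is assumed to have no side effects, and real TLS systems typically rely on hardware or compiler support rather than a thread pool. It shows the control flow only, not a performance gain.

```python
from concurrent.futures import ThreadPoolExecutor

def run_with_speculation(producer, consumer, predicted_input):
    """Run `consumer` speculatively on a predicted value while `producer` runs.

    Returns (result, committed), where `committed` says whether the
    speculative result was kept.
    """
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Start the later code section early, using an assumed input value.
        speculative = pool.submit(consumer, predicted_input)
        # Normal (non-speculative) execution produces the real input.
        actual_input = producer()
        if actual_input == predicted_input:
            # Assumption held: commit the speculative result.
            return speculative.result(), True
        # Assumption failed: ignore the speculative result and re-execute.
        # cancel() only prevents a task that has not started; a running
        # task simply finishes and its result is discarded.
        speculative.cancel()
        return consumer(actual_input), False

def square(x):
    return x * x

hit = run_with_speculation(lambda: 4, square, predicted_input=4)
miss = run_with_speculation(lambda: 4, square, predicted_input=5)
```

Both calls return the correct result; only the first one benefits from the speculative work.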

In computer science, fault injection is a testing technique for understanding how computing systems behave when stressed in unusual ways. This can be achieved using physical- or software-based means, or using a hybrid approach. Widely studied physical fault injections include the application of high voltages, extreme temperatures and electromagnetic pulses on electronic components, such as computer memory and central processing units. By exposing components to conditions beyond their intended operating limits, computing systems can be coerced into mis-executing instructions and corrupting critical data.
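A software-based injection can be as simple as flipping one bit in a data buffer and observing whether the system notices. The sketch below assumes a hypothetical additive checksum as the system under test; the function names are illustrative only.

```python
import random

def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Return a copy of `data` with a single bit inverted."""
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)

def checksum(data: bytes) -> int:
    """Toy integrity check: sum of all bytes modulo 256."""
    return sum(data) % 256

def inject_and_check(data: bytes, trials: int = 100, seed: int = 0) -> int:
    """Inject one random bit flip per trial; count how many are detected."""
    rng = random.Random(seed)
    expected = checksum(data)
    detected = 0
    for _ in range(trials):
        faulty = flip_bit(data, rng.randrange(len(data) * 8))
        if checksum(faulty) != expected:
            detected += 1
    return detected

detected = inject_and_check(b"hello world")
```

A single bit flip changes one byte by a power of two between 1 and 128, so this checksum detects every single-bit fault; injecting two or more flips per trial would expose the cases it misses.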

Manycore processors are special kinds of multi-core processors designed for a high degree of parallel processing, containing numerous simpler, independent processor cores. Manycore processors are used extensively in embedded computers and high-performance computing.

In computing, algorithmic skeletons, or parallelism patterns, are a high-level parallel programming model for parallel and distributed computing.
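As a rough illustration, a skeleton packages a parallelism pattern as a higher-order function so that the caller supplies only the sequential pieces. The sketch below assumes two hypothetical skeletons, `map_skeleton` and `map_reduce_skeleton`, built on Python's standard thread pool; it is not taken from any particular skeleton framework.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import reduce

def map_skeleton(worker, items, max_workers=4):
    """Apply `worker` to every item; the pattern hides the thread handling."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the input order of the results.
        return list(pool.map(worker, items))

def map_reduce_skeleton(worker, combine, items, initial, max_workers=4):
    """Compose the map pattern with a sequential reduction step."""
    return reduce(combine, map_skeleton(worker, items, max_workers), initial)

squares = map_skeleton(lambda x: x * x, range(5))
total = map_reduce_skeleton(lambda x: x * x, lambda a, b: a + b, range(5), 0)
```

The caller never creates threads or locks; swapping the thread pool for a process pool or a distributed backend would not change the calling code.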

The Slurm Workload Manager, formerly known as Simple Linux Utility for Resource Management (SLURM), or simply Slurm, is a free and open-source job scheduler for Linux and Unix-like kernels, used by many of the world's supercomputers and computer clusters.

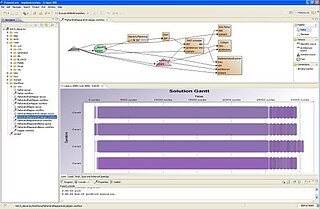

PREESM is an open-source rapid prototyping and code generation tool. It is primarily employed to simulate signal processing applications and generate code for multi-core Digital Signal Processors. PREESM is developed at the Institute of Electronics and Telecommunications-Rennes (IETR) in collaboration with Texas Instruments France in Nice.

For many years parallel hardware was available only for distributed computing, but it has recently become available in low-end computers as well. Software programmers therefore increasingly need to write parallel applications. Programmers naturally tend to think sequentially and so are less familiar with writing multi-threaded or parallel processing applications. Parallel programming requires handling issues such as synchronization and deadlock avoidance, which demands expertise beyond the programmer's knowledge of the application domain. Programmers consequently prefer to write sequential code, which most popular programming languages support, and this lets them concentrate on the application itself. There is therefore a need to convert such sequential applications into parallel applications with the help of automated tools. The need is significant because a large amount of legacy code written over the past few decades must be reused and parallelized.

Tachyon is a parallel/multiprocessor ray tracing program. It is a parallel ray tracing library for use on distributed memory parallel computers, shared memory computers, and clusters of workstations. Tachyon implements rendering features such as ambient occlusion lighting, depth-of-field focal blur, shadows, reflections, and others. It was originally developed for the Intel iPSC/860 by John Stone for his M.S. thesis at the University of Missouri-Rolla. Tachyon subsequently became a more functional and complete ray tracing engine, and it is now incorporated into a number of other open source software packages such as VMD and SageMath. Tachyon is released under a permissive license.

The power consumption of electronic systems has been a real challenge for hardware and software designers as well as users, especially in portable devices such as cell phones and laptop computers. Power consumption has also been an issue for industries that use computer systems heavily, such as Internet service providers running servers or companies whose many employees use computers and other computational devices. Researchers have proposed many different approaches to estimating power consumption efficiently. This survey paper focuses on the methods by which power consumption can be estimated or measured in real time.

Kepler is the codename for a GPU microarchitecture developed by Nvidia, first introduced at retail in April 2012, as the successor to the Fermi microarchitecture. Kepler was Nvidia's first microarchitecture to focus on energy efficiency. Most GeForce 600 series, most GeForce 700 series, and some GeForce 800M series GPUs were based on Kepler, all manufactured in 28 nm. Kepler also found use in the GK20A, the GPU component of the Tegra K1 SoC, as well as in the Quadro Kxxx series, the Quadro NVS 510, and Nvidia Tesla computing modules. Kepler was followed by the Maxwell microarchitecture and used alongside Maxwell in the GeForce 700 series and GeForce 800M series.

A data center is a pool of resources interconnected using a communication network. The Data Center Network (DCN) holds a pivotal role in a data center, as it interconnects all of the data center resources. DCNs need to be scalable and efficient to connect tens or even hundreds of thousands of servers and handle the growing demands of cloud computing. Today's data centers are constrained by the interconnection network.

pSeven is a DSE software platform developed by DATADVANCE that features design, simulation and analysis capabilities and assists in design decisions. It provides integration with third party CAD and CAE software tools, multi-objective and robust optimization algorithms, data analysis, and uncertainty quantification tools.

Ying Lu is an Associate Professor of Computer Science and Engineering at the University of Nebraska-Lincoln.

In machine learning, hyperparameter optimization or tuning is the problem of choosing a set of optimal hyperparameters for a learning algorithm. A hyperparameter is a parameter whose value is used to control the learning process. By contrast, the values of other parameters are learned.
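The distinction between hyperparameters and learned parameters can be made concrete with a grid search, one of the simplest tuning strategies. The following is a minimal sketch on a hypothetical toy problem: the model `y = w * x`, the data, and the grid values are all assumptions for illustration.

```python
from itertools import product

def train(xs, ys, learning_rate, epochs):
    """Fit y = w * x by gradient descent; `w` is the learned parameter."""
    w = 0.0
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        w -= learning_rate * grad
    return w

def mse(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def grid_search(train_set, val_set, grid):
    """Try every hyperparameter combination; keep the best validation score."""
    best = None
    for lr, epochs in product(grid["learning_rate"], grid["epochs"]):
        w = train(*train_set, lr, epochs)
        score = mse(w, *val_set)
        if best is None or score < best[0]:
            best = (score, {"learning_rate": lr, "epochs": epochs}, w)
    return best

# Toy data generated from y = 2x; the true weight is 2.
train_set = ([1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0])
val_set = ([5.0, 6.0], [10.0, 12.0])
grid = {"learning_rate": [0.001, 0.05, 0.2], "epochs": [10, 50]}

score, params, w = grid_search(train_set, val_set, grid)
```

Here `learning_rate` and `epochs` control the learning process and are chosen by the search, while `w` is learned from the data. On this toy data the smallest learning rate converges too slowly and the largest one diverges, which is why tuning matters.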

Hardware watermarking, also known as IP core watermarking, is the process of embedding covert marks as design attributes inside a hardware or IP core design itself. Hardware watermarking can refer to the watermarking of either DSP cores or combinational/sequential circuits. Both forms of hardware watermarking are very popular. In DSP core watermarking, a secret mark is embedded within the logic elements of the DSP core itself. DSP core watermarking usually implants this secret mark in the form of a robust signature, either in the RTL design or during High Level Synthesis (HLS) design. The watermarking process of a DSP core leverages the High Level Synthesis framework and implants a secret mark in one of the high level synthesis phases, such as scheduling, allocation and binding. DSP core watermarking is performed to protect a DSP core from hardware threats such as IP piracy, forgery and false claims of ownership. Some examples of DSP cores are FIR filter, IIR filter, FFT, DFT, JPEG, HWT, etc. A few of the most important properties of a DSP core watermarking process are as follows: (a) low embedding cost, (b) secret mark, (c) low creation time, (d) strong tamper tolerance, (e) fault tolerance.