In physics, optical depth or optical thickness is the natural logarithm of the ratio of incident to transmitted radiant power through a material. Thus, the larger the optical depth, the smaller the amount of transmitted radiant power through the material. Spectral optical depth or spectral optical thickness is the natural logarithm of the ratio of incident to transmitted spectral radiant power through a material. Optical depth is dimensionless, and in particular is not a length, though it is a monotonically increasing function of optical path length, and approaches zero as the path length approaches zero. The use of the term "optical density" for optical depth is discouraged.
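
The definition above can be illustrated with a minimal sketch. The function names `optical_depth` and `transmittance` are hypothetical, chosen only for this example; the powers may be in any unit as long as both use the same one.

```python
import math


def optical_depth(incident_power: float, transmitted_power: float) -> float:
    """Return ln(incident / transmitted), a dimensionless number."""
    if incident_power <= 0 or transmitted_power <= 0:
        raise ValueError("radiant powers must be positive")
    return math.log(incident_power / transmitted_power)


def transmittance(tau: float) -> float:
    """Invert the definition: fraction of power transmitted at depth tau."""
    return math.exp(-tau)


# A larger optical depth corresponds to less transmitted power.
thin = optical_depth(1.0, 0.9)
thick = optical_depth(1.0, 0.5)
```

Here `thick` exceeds `thin`, matching the statement that transmitted power falls as optical depth grows.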

In the theory of relativity, four-acceleration is a four-vector that is analogous to classical acceleration. Four-acceleration has applications in areas such as the annihilation of antiprotons, resonance of strange particles and radiation of an accelerated charge.

In mathematics, the Weierstrass elliptic functions are elliptic functions that take a particularly simple form. They are named for Karl Weierstrass. This class of functions is also referred to as ℘-functions, and they are usually denoted by the symbol ℘, a uniquely fancy script p. They play an important role in the theory of elliptic functions. A ℘-function together with its derivative can be used to parameterize elliptic curves, and together they generate the field of elliptic functions with respect to a given period lattice.
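
The parameterization mentioned above rests on the differential equation satisfied by ℘. As a brief illustration, writing $g_2$ and $g_3$ for the standard invariants of the period lattice (notation assumed here, not taken from the text above):

```latex
\[
  \wp'(z)^2 = 4\,\wp(z)^3 - g_2\,\wp(z) - g_3 ,
\]
so the map $z \mapsto \bigl(\wp(z), \wp'(z)\bigr)$ sends points of the complex
plane modulo the lattice to points $(x, y)$ on the cubic curve
\[
  y^2 = 4x^3 - g_2\,x - g_3 .
\]
```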

The Hamiltonian constraint arises from any theory that admits a Hamiltonian formulation and is reparametrisation-invariant. The Hamiltonian constraint of general relativity is an important non-trivial example.

The Hodrick–Prescott filter is a mathematical tool used in macroeconomics, especially in real business cycle theory, to remove the cyclical component of a time series from raw data. It is used to obtain a smoothed-curve representation of a time series, one that is more sensitive to long-term than to short-term fluctuations. The sensitivity of the trend to short-term fluctuations is adjusted by modifying a multiplier λ.
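
As a rough sketch of how the multiplier acts, the trend is commonly obtained by minimizing the sum of squared deviations from the data plus the multiplier times the sum of squared second differences of the trend, which leads to a linear system. The function name `hp_trend` is hypothetical, and the default of 1600 is only the value conventionally quoted for quarterly data. Dense Gaussian elimination is used purely to keep the example dependency-free; it is not an efficient implementation.

```python
def hp_trend(y, lam=1600.0):
    """Trend component: solve (I + lam * D^T D) trend = y.

    D is the second-difference operator. Larger lam gives a smoother trend.
    """
    n = len(y)
    b = [float(v) for v in y]
    if n < 3:
        return b  # no second differences to penalize
    # Build A = I + lam * D^T D row by row of D.
    a = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for k in range(n - 2):
        coeffs = ((k, 1.0), (k + 1, -2.0), (k + 2, 1.0))
        for i, ci in coeffs:
            for j, cj in coeffs:
                a[i][j] += lam * ci * cj
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            if f != 0.0:
                for c in range(col, n):
                    a[r][c] -= f * a[col][c]
                b[r] -= f * b[col]
    # Back substitution.
    trend = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = b[i] - sum(a[i][c] * trend[c] for c in range(i + 1, n))
        trend[i] = s / a[i][i]
    return trend


series = [2.0 * t + 1.0 for t in range(10)]
trend = hp_trend(series)
cycle = [v - m for v, m in zip(series, trend)]
```

A purely linear series has zero second differences, so in this example the trend reproduces the data and the cyclical component is zero up to rounding.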

In system analysis, among other fields of study, a linear time-invariant (LTI) system is a system that produces an output signal from any input signal subject to the constraints of linearity and time-invariance; these terms are briefly defined below. These properties apply (exactly or approximately) to many important physical systems, in which case the response y(t) of the system to an arbitrary input x(t) can be found directly using convolution: y(t) = (x ∗ h)(t), where h(t) is called the system's impulse response and ∗ represents convolution (not to be confused with multiplication, for which the symbol is frequently used in computer languages). What's more, there are systematic methods for solving any such system (determining h(t)), whereas systems not meeting both properties are generally more difficult (or impossible) to solve analytically. A good example of an LTI system is any electrical circuit consisting of resistors, capacitors, inductors and linear amplifiers.
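
The convolution relation can be sketched in discrete time, where the integral becomes a finite sum. This is an illustrative analogue under the assumption of finite-length sequences that start at index 0; the function name `convolve` and the sample impulse response are hypothetical.

```python
def convolve(x, h):
    """Discrete convolution: y[n] = sum over k of x[k] * h[n - k]."""
    y = [0.0] * (len(x) + len(h) - 1) if x and h else []
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


h = [0.5, 0.25]                          # assumed impulse response
impulse = convolve([1.0, 0.0, 0.0], h)   # unit impulse in, h out
delayed = convolve([0.0, 1.0, 0.0], h)   # delayed input, delayed output
scaled = convolve([2.0, 0.0, 0.0], h)    # scaled input, scaled output
```

The three calls show the defining properties: the response to a unit impulse is `h` itself, delaying the input delays the output by the same amount (time-invariance), and doubling the input doubles the output (linearity).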

The simply typed lambda calculus, a form of type theory, is a typed interpretation of the lambda calculus with only one type constructor that builds function types. It is the canonical and simplest example of a typed lambda calculus. The simply typed lambda calculus was originally introduced by Alonzo Church in 1940 as an attempt to avoid paradoxical use of the untyped lambda calculus.

In physics, Larmor precession is the precession of the magnetic moment of an object about an external magnetic field. The phenomenon is conceptually similar to the precession of a tilted classical gyroscope in an external torque-exerting gravitational field. Objects with a magnetic moment also have angular momentum and an effective internal electric current proportional to their angular momentum; these include electrons, protons, other fermions, many atomic and nuclear systems, as well as classical macroscopic systems. The external magnetic field exerts a torque on the magnetic moment.
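
In commonly used notation (the symbols here are assumptions for illustration: $\vec{\mu}$ for the magnetic moment, $\vec{J}$ for the angular momentum, $\vec{B}$ for the external field, and $\gamma$ for the gyromagnetic ratio relating the two via $\vec{\mu} = \gamma \vec{J}$), the torque and the resulting motion can be written as:

```latex
\[
  \vec{\Gamma} = \vec{\mu} \times \vec{B} = \gamma\, \vec{J} \times \vec{B},
  \qquad
  \frac{d\vec{J}}{dt} = \vec{\Gamma} .
\]
Because the torque is always perpendicular to $\vec{J}$, the magnitude of
$\vec{J}$ stays constant and the vector precesses about $\vec{B}$ with
angular frequency of magnitude
\[
  \omega = |\gamma|\, B .
\]
```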

In mathematics and transportation engineering, traffic flow is the study of interactions between travellers and infrastructure, with the aim of understanding and developing an optimal transport network with efficient movement of traffic and minimal traffic congestion problems.

Fermi–Walker transport is a process in general relativity used to define a coordinate system or reference frame such that all curvature in the frame is due to the presence of mass/energy density and not to arbitrary spin or rotation of the frame.

In mathematics, a commutation theorem for traces explicitly identifies the commutant of a specific von Neumann algebra acting on a Hilbert space in the presence of a trace.

In mathematics, a Jacobi form is an automorphic form on the Jacobi group, which is the semidirect product of the symplectic group Sp(n;R) and the Heisenberg group. The theory was first systematically studied by Eichler & Zagier (1985).

A traffic bottleneck is a localized disruption of vehicular traffic on a street, road, or highway. As opposed to a traffic jam, a bottleneck is a result of a specific physical condition, often the design of the road, badly timed traffic lights, or sharp curves. Bottlenecks can also be caused by temporary situations, such as vehicular accidents.

The three-detector problem is a problem in traffic flow theory. Given a homogeneous freeway and the vehicle counts at two detector stations, we seek the vehicle counts at some intermediate location. A solution can be applied to incident detection and diagnosis by comparing the observed and predicted data, so a realistic solution to this problem is important. G. F. Newell proposed a simple method to solve this problem. In Newell's method, one gets the cumulative count curve (N-curve) of any intermediate location just by shifting the N-curves of the upstream and downstream detectors. Newell's method was developed before the variational theory of traffic flow was proposed to deal systematically with vehicle counts. This article shows how Newell's method fits in the context of variational theory.
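
The shifting idea can be sketched as follows, under assumptions that the text above does not state: a triangular fundamental diagram with free-flow speed, backward wave speed and jam density, and taking the smaller of the two shifted curves. The upstream curve is shifted forward in time by the free-flow travel time. The downstream curve is shifted by the backward-wave travel time and raised by the number of vehicles that fit between the two locations at jam density. All names and numbers below are hypothetical.

```python
def newell_intermediate_count(n_up, n_down, x_up, x_down, x, t,
                              free_speed, wave_speed, jam_density):
    """Estimate the cumulative count N(t, x) between two detectors.

    n_up, n_down: callables giving cumulative counts at x_up and x_down.
    wave_speed is the (positive) magnitude of the backward wave speed.
    """
    if not x_up <= x <= x_down:
        raise ValueError("x must lie between the two detectors")
    # Upstream curve shifted by the free-flow travel time.
    free_flow = n_up(t - (x - x_up) / free_speed)
    # Downstream curve shifted by the wave travel time, plus jam storage.
    congested = (n_down(t - (x_down - x) / wave_speed)
                 + jam_density * (x_down - x))
    return min(free_flow, congested)


# Assumed free-flow scenario: 0.5 veh/s passes a 1000 m section at 25 m/s.
flow, length, v_f = 0.5, 1000.0, 25.0
upstream = lambda t: flow * t
downstream = lambda t: flow * (t - length / v_f)

count = newell_intermediate_count(upstream, downstream, 0.0, length,
                                  400.0, 100.0, v_f, 5.0, 0.15)
```

In this uncongested scenario the upstream curve governs, so the estimate equals the upstream count 16 seconds earlier, the free-flow travel time over 400 m.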

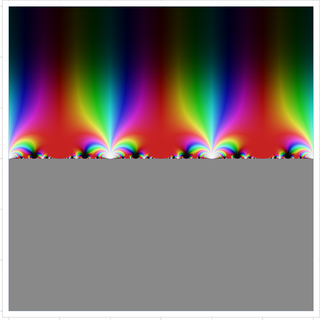

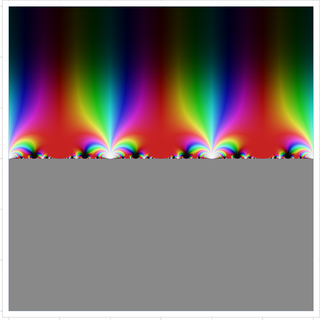

In mathematics, the modular lambda function λ(τ) is a highly symmetric holomorphic function on the complex upper half-plane. It is invariant under the fractional linear action of the congruence group Γ(2), and generates the function field of the corresponding quotient, i.e., it is a Hauptmodul for the modular curve X(2). Over any point τ, its value can be described as a cross ratio of the branch points of a ramified double cover of the projective line by the elliptic curve ℂ/⟨1, τ⟩, where the map is defined as the quotient by the [−1] involution.

In traffic flow theory, the Newell–Daganzo merge model describes a simple procedure for determining the flows exiting two branch roadways and merging to flow through a single roadway. The model is simple in that it does not consider the actual merging process between vehicles as the two branch roadways come together. The only information required to calculate the flows leaving the two branch roadways is the capacities of the two branch roadways and the exiting capacity, the demands into the system, and a value describing how the two input flows interact. This latter input term is called the split priority, or merge ratio, and is defined as the ratio of the two input flows when both are operating in congested conditions.
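
A minimal sketch of the procedure, assuming the median-of-three formulation often used for this kind of merge. The function names are hypothetical, and the priority is expressed here as the share of the exit capacity given to branch 1 under congestion, so a merge ratio r (branch 1 to branch 2) would correspond to a share of r / (1 + r).

```python
def median3(a, b, c):
    """Middle value of three numbers."""
    return sorted((a, b, c))[1]


def newell_daganzo_merge(demand_1, demand_2, capacity_1, capacity_2,
                         exit_capacity, priority_1):
    """Return the flows (q1, q2) leaving the two branch roadways."""
    # A branch can never send more than its own capacity.
    d1 = min(demand_1, capacity_1)
    d2 = min(demand_2, capacity_2)
    # Uncongested merge: both demands are served in full.
    if d1 + d2 <= exit_capacity:
        return d1, d2
    # Congested merge: each branch gets its priority share, unless the
    # other branch leaves part of its share unused.
    q1 = median3(d1, exit_capacity - d2, priority_1 * exit_capacity)
    q2 = median3(d2, exit_capacity - d1, (1 - priority_1) * exit_capacity)
    return q1, q2


free = newell_daganzo_merge(600, 500, 2000, 2000, 2000, 0.5)
both_congested = newell_daganzo_merge(1500, 1000, 2000, 2000, 2000, 0.5)
one_congested = newell_daganzo_merge(1800, 400, 2000, 2000, 2000, 0.5)
```

In the last case branch 2 demands less than its share, so it is served in full and branch 1 takes the remaining exit capacity.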

Vehicular traffic can be either free or congested. Traffic occurs in time and space, i.e., it is a spatiotemporal process. However, traffic can usually be measured only at some road locations. For efficient traffic control and other intelligent transportation systems, traffic congestion must be reconstructed at all other road locations, where traffic measurements are not available. Traffic congestion can be reconstructed in space and time based on Boris Kerner's three-phase traffic theory with the use of the ASDA and FOTO models introduced by Kerner. Both the theory and the ASDA/FOTO models are based on common spatiotemporal features of traffic congestion observed in measured traffic data.

In physics, general covariant transformations are symmetries of gravitation theory on a world manifold. They are gauge transformations whose parameter functions are vector fields on that manifold. From the physical viewpoint, general covariant transformations are treated as particular (holonomic) reference frame transformations in general relativity. In mathematics, general covariant transformations are defined as particular automorphisms of so-called natural fiber bundles.

In quantum mechanics, the Redfield equation is a Markovian master equation that describes the time evolution of the reduced density matrix ρ of a strongly coupled quantum system that is weakly coupled to an environment. The equation is named in honor of Alfred G. Redfield, who first applied it, doing so for nuclear magnetic resonance spectroscopy.

Tau functions are an important ingredient in the modern theory of integrable systems, and have numerous applications in a variety of other domains. They were originally introduced by Ryogo Hirota in his direct method approach to soliton equations, based on expressing them in an equivalent bilinear form. The term tau function, or τ-function, was first used systematically by Mikio Sato and his students in the specific context of the Kadomtsev–Petviashvili equation and related integrable hierarchies. It is a central ingredient in the theory of solitons. Tau functions also appear as matrix model partition functions in the spectral theory of random matrices, and may also serve as generating functions, in the sense of combinatorics and enumerative geometry, especially in relation to moduli spaces of Riemann surfaces and the enumeration of branched coverings, or so-called Hurwitz numbers.