In probability and statistics, a random variable, random quantity, aleatory variable, or stochastic variable is a variable whose possible values are outcomes of a random phenomenon. More specifically, a random variable is defined as a function that maps the outcomes of an unpredictable process to numerical quantities, typically real numbers. It is a variable, in the sense that it depends on the outcome of an underlying process providing the input to this function, and it is random in the sense that the underlying process is assumed to be random.

In probability theory, the central limit theorem (CLT) establishes that, in some situations, when independent random variables are added, their properly normalized sum tends toward a normal distribution even if the original variables themselves are not normally distributed. The theorem is a key concept in probability theory because it implies that probabilistic and statistical methods that work for normal distributions can be applicable to many problems involving other types of distributions.
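The statement can be checked numerically. The following is a minimal sketch, assuming i.i.d. Uniform(0, 1) summands; the function name `normalized_sum`, the seed, and the sample sizes are illustrative choices rather than part of the theorem.

```python
import random
import statistics


def normalized_sum(n, rng):
    """Return (S_n - n*mu) / (sigma*sqrt(n)) for n i.i.d. Uniform(0, 1) draws."""
    mu = 0.5                 # mean of Uniform(0, 1)
    sigma = (1 / 12) ** 0.5  # standard deviation of Uniform(0, 1)
    total = sum(rng.random() for _ in range(n))
    return (total - n * mu) / (sigma * n ** 0.5)


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [normalized_sum(50, rng) for _ in range(20000)]

sample_mean = statistics.fmean(samples)
sample_std = statistics.pstdev(samples)
# Fraction of samples within one unit of zero; about 0.683 for a standard normal.
within_one = sum(abs(z) <= 1 for z in samples) / len(samples)

print(sample_mean, sample_std, within_one)
```

Although each summand is uniform rather than normal, the normalized sums should show a mean near 0, a standard deviation near 1, and roughly 68% of their mass within one unit of zero.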

In mathematics, the Lp spaces are function spaces defined using a natural generalization of the p-norm for finite-dimensional vector spaces. They are sometimes called Lebesgue spaces, named after Henri Lebesgue, although according to the Bourbaki group they were first introduced by Frigyes Riesz. Lp spaces form an important class of Banach spaces in functional analysis, and of topological vector spaces. Because of their key role in the mathematical analysis of measure and probability spaces, Lebesgue spaces are also used in the theoretical discussion of problems in physics, statistics, finance, engineering, and other disciplines.
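The finite-dimensional p-norm that these spaces generalize can be sketched as follows. The helper name `p_norm` and the example vector are assumptions made for illustration; the function-space version replaces the sum by an integral.

```python
def p_norm(x, p):
    """Return the p-norm of a finite sequence x, for 1 <= p <= infinity."""
    if p < 1:
        raise ValueError("p must be at least 1 for a norm")
    if p == float("inf"):
        # The limiting case: the largest absolute component.
        return max(abs(v) for v in x)
    return sum(abs(v) ** p for v in x) ** (1 / p)


x = [3.0, -4.0]
norm_1 = p_norm(x, 1)               # sum of absolute values
norm_2 = p_norm(x, 2)               # Euclidean length
norm_inf = p_norm(x, float("inf"))  # maximum absolute component

print(norm_1, norm_2, norm_inf)
```

For a fixed vector the p-norm does not increase as p grows, which the three values above illustrate.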

In probability theory and statistics, the multivariate normal distribution, multivariate Gaussian distribution, or joint normal distribution is a generalization of the one-dimensional (univariate) normal distribution to higher dimensions. One definition is that a random vector is said to be k-variate normally distributed if every linear combination of its k components has a univariate normal distribution. Its importance derives mainly from the multivariate central limit theorem. The multivariate normal distribution is often used to describe, at least approximately, any set of (possibly) correlated real-valued random variables each of which clusters around a mean value.
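The linear-combination characterization suggests a simple way to generate such vectors. The sketch below draws bivariate normal samples by applying a lower-triangular factor to independent standard normals; the names `sample_bivariate_normal` and `chol`, the mean, and the covariance matrix are all assumed for illustration.

```python
import random
import statistics


def sample_bivariate_normal(mean, chol, rng):
    """Draw one sample mean + L z, where z has independent N(0, 1) entries."""
    z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
    x1 = mean[0] + chol[0][0] * z1
    x2 = mean[1] + chol[1][0] * z1 + chol[1][1] * z2
    return x1, x2


rng = random.Random(2)
mean = (1.0, -1.0)
# Lower-triangular L with L L^T = [[1.0, 0.8], [0.8, 1.0]].
chol = ((1.0, 0.0), (0.8, 0.6))

draws = [sample_bivariate_normal(mean, chol, rng) for _ in range(20000)]
xs = [d[0] for d in draws]
ys = [d[1] for d in draws]

mx, my = statistics.fmean(xs), statistics.fmean(ys)
sample_cov = sum((x - mx) * (y - my) for x, y in draws) / (len(draws) - 1)

# The linear combination 2*X1 - X2 has variance 4 + 1 - 2*2*0.8 = 1.8.
combo_var = statistics.variance([2 * x - y for x, y in draws])

print(sample_cov, combo_var)
```

The sample covariance should land near 0.8, and the variance of the linear combination near 1.8, in line with the target covariance matrix.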

In probability theory, a log-normal distribution is a continuous probability distribution of a random variable whose logarithm is normally distributed. Thus, if the random variable X is log-normally distributed, then Y = ln(X) has a normal distribution. Likewise, if Y has a normal distribution, then the exponential function of Y, X = exp(Y), has a log-normal distribution. A random variable which is log-normally distributed takes only positive real values. The distribution is occasionally referred to as the Galton distribution or Galton's distribution, after Francis Galton. The log-normal distribution also has been associated with other names, such as McAlister, Gibrat and Cobb–Douglas.
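The relationship X = exp(Y) can be illustrated with a short simulation. This is a sketch under assumed parameter values (`mu`, `sigma`, the seed, and the sample size are arbitrary choices).

```python
import math
import random
import statistics

rng = random.Random(1)
mu, sigma = 0.5, 0.25  # mean and standard deviation of Y = ln(X)

# Exponentiating normal draws gives log-normal draws.
xs = [math.exp(rng.gauss(mu, sigma)) for _ in range(10000)]

# Taking logarithms recovers the normal parameters.
logs = [math.log(x) for x in xs]
log_mean = statistics.fmean(logs)
log_std = statistics.stdev(logs)

# The mean of X itself is exp(mu + sigma^2 / 2), not exp(mu).
x_mean = statistics.fmean(xs)
expected_x_mean = math.exp(mu + sigma ** 2 / 2)

print(min(xs) > 0, log_mean, log_std, x_mean, expected_x_mean)
```

Every draw is positive, and the mean of X exceeds exp(mu) because exponentiation stretches the upper tail.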

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of a statistical model, given observations. The method obtains the parameter estimates by finding the parameter values that maximize the likelihood function. The estimates are called maximum likelihood estimates, which is also abbreviated as MLE.
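As a concrete case, the sketch below assumes a normal model with unknown mean and variance, for which the maximizers are available in closed form. The function names and the data values are hypothetical.

```python
import math


def normal_log_likelihood(data, mu, sigma2):
    """Log-likelihood of i.i.d. N(mu, sigma2) observations."""
    n = len(data)
    squared_error = sum((x - mu) ** 2 for x in data)
    return -0.5 * n * math.log(2 * math.pi * sigma2) - squared_error / (2 * sigma2)


def normal_mle(data):
    """Closed-form maximum likelihood estimates for a normal model."""
    n = len(data)
    mu_hat = sum(data) / n
    # The MLE of the variance divides by n, not n - 1.
    sigma2_hat = sum((x - mu_hat) ** 2 for x in data) / n
    return mu_hat, sigma2_hat


data = [2.1, 1.9, 2.4, 2.0, 1.6]  # made-up observations
mu_hat, sigma2_hat = normal_mle(data)
best = normal_log_likelihood(data, mu_hat, sigma2_hat)

print(mu_hat, sigma2_hat, best)
```

Evaluating `normal_log_likelihood` at any other parameter pair gives a value no larger than `best`, which is what makes the pair a maximum likelihood estimate.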

In mathematics, a Gaussian function, often simply referred to as a Gaussian, is a function of the form f(x) = a · exp(−(x − b)² / (2c²)), for arbitrary real constants a, b and non-zero c.

In probability theory and statistics, a Gaussian process is a stochastic process (a collection of random variables indexed by time or space) such that every finite collection of those random variables has a multivariate normal distribution, i.e. every finite linear combination of them is normally distributed. The distribution of a Gaussian process is the joint distribution of all those random variables, and as such, it is a distribution over functions with a continuous domain, e.g. time or space.

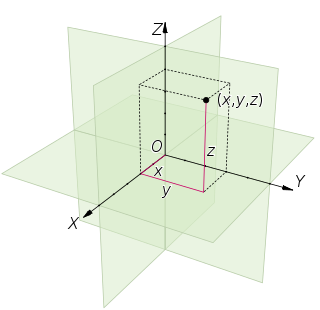

In probability theory, a random element is a generalization of the concept of random variable to more complicated spaces than the simple real line. The concept was introduced by Maurice Fréchet (1948) who commented that the “development of probability theory and expansion of area of its applications have led to necessity to pass from schemes where (random) outcomes of experiments can be described by number or a finite set of numbers, to schemes where outcomes of experiments represent, for example, vectors, functions, processes, fields, series, transformations, and also sets or collections of sets.”

In mathematics, a Gaussian measure is a Borel measure on finite-dimensional Euclidean space R^n, closely related to the normal distribution in statistics. There is also a generalization to infinite-dimensional spaces. Gaussian measures are named after the German mathematician Carl Friedrich Gauss. One reason why Gaussian measures are so ubiquitous in probability theory is the central limit theorem. Loosely speaking, it states that if a random variable X is obtained by summing a large number N of independent random variables of order 1, then X is of order √N and its law is approximately Gaussian.

An abstract Wiener space is a mathematical object in measure theory, used to construct a "decent" measure on an infinite-dimensional vector space. It is named after the American mathematician Norbert Wiener. Wiener's original construction only applied to the space of real-valued continuous paths on the unit interval, known as classical Wiener space; Leonard Gross provided the generalization to the case of a general separable Banach space.

In mathematics, Dvoretzky's theorem is an important structural theorem about normed vector spaces proved by Aryeh Dvoretzky in the early 1960s, answering a question of Alexander Grothendieck. In essence, it says that every sufficiently high-dimensional normed vector space will have low-dimensional subspaces that are approximately Euclidean. Equivalently, every high-dimensional bounded symmetric convex set has low-dimensional sections that are approximately ellipsoids.

In mathematics, uniform integrability is an important concept in real analysis, functional analysis and measure theory, and plays a vital role in the theory of martingales. The definition used in measure theory is closely related to, but not identical to, the definition typically used in probability.

In the theory of stochastic processes, the filtering problem is a mathematical model for a number of state estimation problems in signal processing and related fields. The general idea is to establish a "best estimate" for the true value of some system from an incomplete, potentially noisy set of observations on that system. The problem of optimal non-linear filtering was solved by Ruslan L. Stratonovich; see also the work of Harold J. Kushner and of Moshe Zakai, who introduced a simplified dynamics for the unnormalized conditional law of the filter, known as the Zakai equation. The solution, however, is infinite-dimensional in the general case. Certain approximations and special cases are well understood: for example, linear filters are optimal for Gaussian random variables, and are known as the Wiener filter and the Kalman–Bucy filter. More generally, because the solution is infinite-dimensional, it requires finite-dimensional approximations to be implemented on a computer with finite memory. A finite-dimensional approximate nonlinear filter may be based more on heuristics, such as the extended Kalman filter or the assumed density filters, or be more methodologically oriented, such as the projection filters, some sub-families of which are shown to coincide with the assumed density filters.
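The linear Gaussian case can be illustrated in its simplest form. The sketch below is a discrete-time, scalar Kalman filter, not the continuous-time Kalman–Bucy filter; the random-walk state model, the function name `kalman_1d`, the parameter names, and the measurement values are all assumptions made for illustration.

```python
def kalman_1d(measurements, meas_var, process_var, x0, p0):
    """Scalar Kalman filter for the assumed model x_k = x_{k-1} + w_k, z_k = x_k + v_k.

    w_k and v_k are zero-mean Gaussian noises with variances process_var
    and meas_var; x0 and p0 are the prior mean and variance of the state.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: the mean is unchanged, the uncertainty grows.
        p = p + process_var
        # Update: blend prediction and measurement using the Kalman gain.
        gain = p / (p + meas_var)
        x = x + gain * (z - x)
        p = (1 - gain) * p
        estimates.append(x)
    return estimates, p


# Noisy readings of a constant state (made-up numbers).
readings = [1.0, 3.0, 2.0, 2.0]
estimates, final_var = kalman_1d(
    readings, meas_var=1.0, process_var=0.0, x0=0.0, p0=1e9
)

print(estimates[-1], final_var)
```

With no process noise and a very diffuse prior, the filter reduces to a running average of the readings, and the posterior variance shrinks like the measurement variance divided by the number of readings.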

In functional analysis, the dual norm is a measure of the "size" of each continuous linear functional defined on a normed vector space.
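In symbols, the usual definition can be written as below, where X denotes the normed vector space, X* its continuous dual, and f a continuous linear functional on X. The notation is one common convention and is assumed here.

```latex
\|f\|_{X^*} \;=\; \sup\bigl\{\, |f(x)| \;:\; x \in X,\ \|x\|_X \le 1 \,\bigr\}
```

For example, on finite sequences the dual of the p-norm is the q-norm with 1/p + 1/q = 1, which is the content of Hölder's inequality.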

In computational learning theory, Rademacher complexity, named after Hans Rademacher, measures the richness of a class of real-valued functions with respect to a probability distribution.
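For a finite class evaluated on a small sample, the empirical version of this quantity can be computed exactly by enumerating every sign vector. The sketch below assumes the definition without an absolute value inside the supremum; the function name `empirical_rademacher` and the toy classes are hypothetical.

```python
from itertools import product


def empirical_rademacher(function_values):
    """Exact empirical Rademacher complexity of a finite function class.

    function_values holds one row per function f, each row being
    (f(x_1), ..., f(x_m)) on a fixed sample of size m. The result is the
    average, over all 2^m sign vectors s, of max_f (1/m) * sum_i s_i * f(x_i).
    """
    m = len(function_values[0])
    total = 0.0
    for signs in product((-1, 1), repeat=m):
        total += max(
            sum(s * v for s, v in zip(signs, row)) for row in function_values
        ) / m
    return total / 2 ** m


single = empirical_rademacher([(1, 1)])             # one function only
pair = empirical_rademacher([(1, 1), (-1, -1)])     # a function and its negation
full = empirical_rademacher(
    [(1, 1), (1, -1), (-1, 1), (-1, -1)]            # every sign pattern
)

print(single, pair, full)
```

A class with a single function cannot correlate with random signs on average, while a class that realizes every sign pattern on the sample attains the maximal value of 1.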

In mathematics, specifically in measure theory, Fernique's theorem is a result about Gaussian measures on Banach spaces. It extends the finite-dimensional result that a Gaussian random variable has exponential tails. The result was proved in 1970 by the mathematician Xavier Fernique.

In the mathematical study of functional analysis, the Banach–Mazur distance is a way to define a distance on the set Q(n) of n-dimensional normed spaces. With this distance, the set of isometry classes of n-dimensional normed spaces becomes a compact metric space, called the Banach–Mazur compactum.

In mathematics, the Pettis integral or Gelfand–Pettis integral, named after Israel M. Gelfand and Billy James Pettis, extends the definition of the Lebesgue integral to vector-valued functions on a measure space, by exploiting duality. The integral was introduced by Gelfand for the case when the measure space is an interval with Lebesgue measure. The integral is also called the weak integral in contrast to the Bochner integral, which is the strong integral.