Related Research Articles

Mathematical optimization or mathematical programming is the selection of a best element, with regard to some criteria, from some set of available alternatives. It is generally divided into two subfields: discrete optimization and continuous optimization. Optimization problems arise in all quantitative disciplines from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries.

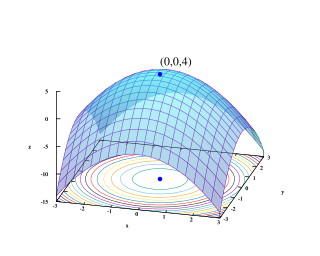

Global optimization is a branch of applied mathematics and numerical analysis that attempts to find the global minima or maxima of a function or a set of functions on a given set. It is usually described as a minimization problem because the maximization of a real-valued function $g(x)$ is equivalent to the minimization of the function $f(x) = -g(x)$.
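The equivalence between maximizing a function and minimizing its negative can be illustrated with a minimal sketch. The function `g`, the grid, and the helper names below are hypothetical choices made for this example only; exhaustive search over a finite grid stands in for a real global optimization method.

```python
def argmin_on_grid(f, points):
    """Return the grid point with the smallest value of f (exhaustive search)."""
    return min(points, key=f)


def argmax_via_min(g, points):
    """Maximize g by minimizing f(x) = -g(x) over the same points."""
    return argmin_on_grid(lambda x: -g(x), points)


def g(x):
    # Hypothetical objective: a concave parabola with its maximum at x = 2.
    return -(x - 2.0) ** 2 + 3.0


# Grid on [0, 5] with step 0.01 (an assumption made for this illustration).
grid = [i / 100.0 for i in range(0, 501)]

x_star = argmax_via_min(g, grid)
```

Here `x_star` coincides with the point found by maximizing `g` directly over the same grid, which is why solvers typically need to implement only one of the two directions.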

In mathematics, nonlinear programming (NLP) is the process of solving an optimization problem where some of the constraints are not linear equalities or the objective function is not a linear function. An optimization problem is the calculation of the extrema of an objective function over a set of unknown real variables, subject to the satisfaction of a system of equalities and inequalities, collectively termed constraints. It is the subfield of mathematical optimization that deals with problems that are not linear.

The general algebraic modeling system (GAMS) is a high-level modeling system for mathematical optimization. GAMS is designed for modeling and solving linear, nonlinear, and mixed-integer optimization problems. The system is tailored for complex, large-scale modeling applications and allows the user to build large maintainable models that can be adapted to new situations. The system is available for use on various computer platforms. Models are portable from one platform to another.

In mathematics, the relaxation of a (mixed) integer linear program is the problem that arises by removing the integrality constraint of each variable.
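As a rough illustration, consider a small 0-1 knapsack problem, which is an integer linear program. The sketch below compares the integer optimum (found by brute force) with the optimum of its relaxation, where each variable may take any value in [0, 1]. The data and function names are hypothetical; the relaxation is solved with the classical greedy rule for the fractional knapsack, which is specific to this problem class and is not a general LP method.

```python
from itertools import product

# Hypothetical instance: item values, item weights, and knapsack capacity.
values = [60, 100, 120]
weights = [10, 20, 30]
capacity = 50


def solve_integer(values, weights, capacity):
    """Brute-force the 0-1 program: every x_i must be 0 or 1."""
    best = 0
    for x in product((0, 1), repeat=len(values)):
        if sum(w * xi for w, xi in zip(weights, x)) <= capacity:
            best = max(best, sum(v * xi for v, xi in zip(values, x)))
    return best


def solve_relaxation(values, weights, capacity):
    """Solve the relaxation 0 <= x_i <= 1 greedily by value/weight ratio."""
    items = sorted(zip(values, weights), key=lambda vw: vw[0] / vw[1], reverse=True)
    remaining = capacity
    total = 0.0
    for v, w in items:
        if w <= remaining:
            total += v              # take the whole item (x_i = 1)
            remaining -= w
        else:
            total += v * remaining / w  # take a fraction (0 < x_i < 1)
            break
    return total


integer_opt = solve_integer(values, weights, capacity)
relaxed_opt = solve_relaxation(values, weights, capacity)
```

Because every integer-feasible point is also feasible for the relaxation, `relaxed_opt` can never be smaller than `integer_opt` in a maximization problem. This bounding property is what makes relaxations useful inside branch-and-bound methods.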

Algebraic modeling languages (AML) are high-level computer programming languages for describing and solving high-complexity problems for large-scale mathematical computation. One particular advantage of some algebraic modeling languages like AIMMS, AMPL, GAMS, Gekko, MathProg, Mosel, and OPL is the similarity of their syntax to the mathematical notation of optimization problems. This allows for a very concise and readable definition of problems in the domain of optimization, supported by language elements such as sets, indices, algebraic expressions, powerful sparse index and data handling, and variables and constraints with arbitrary names. The algebraic formulation of a model does not contain any hints on how to process it.

The Zuse Institute Berlin is a research institute for applied mathematics and computer science on the campus of Freie Universität Berlin in Dahlem, Berlin, Germany.

BARON is a computational system for solving non-convex optimization problems to global optimality. Purely continuous, purely integer, and mixed-integer nonlinear problems can be solved by the solver. Linear programming (LP), nonlinear programming (NLP), mixed integer programming (MIP), and mixed integer nonlinear programming (MINLP) are supported. In a comparison of different solvers, BARON solved the most benchmark problems and required the least amount of time per problem.

MOSEK is a software package for the solution of linear, mixed-integer linear, quadratic, mixed-integer quadratic, quadratically constrained, conic, and convex nonlinear mathematical optimization problems. The solver is widely applicable and is commonly used for solving problems in areas such as engineering, finance, and computer science.

In applied mathematics, branch and price is a method of combinatorial optimization for solving integer linear programming (ILP) and mixed integer linear programming (MILP) problems with many variables. The method is a hybrid of branch and bound and column generation methods.

Variable neighborhood search (VNS), proposed by Mladenović & Hansen in 1997, is a metaheuristic method for solving a set of combinatorial optimization and global optimization problems. It explores distant neighborhoods of the current incumbent solution, and moves from there to a new one if and only if an improvement was made. The local search method is applied repeatedly to get from solutions in the neighborhood to local optima. VNS was designed for approximating solutions of discrete and continuous optimization problems; accordingly, it is aimed at solving linear programs, integer programs, mixed-integer programs, nonlinear programs, and similar problem classes.
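The loop described above (shake into a neighborhood, run a local search, move only on improvement) can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the objective `f`, the integer domain, the ±1 local search, the neighborhood definition (points within distance `k`), and all parameter values are hypothetical choices for this example.

```python
import random


def f(x):
    # Hypothetical multimodal objective on the integers; under +/-1 moves
    # it has several local minima (for example at x = 9 and x = 12).
    return (x - 7) ** 2 + 20 * (x % 3)


def local_search(f, x, lower, upper):
    """Best-improvement descent using +/-1 moves inside [lower, upper]."""
    while True:
        neighbors = [n for n in (x - 1, x + 1) if lower <= n <= upper]
        best = min(neighbors, key=f)
        if f(best) < f(x):
            x = best
        else:
            return x


def shake(x, k, lower, upper, rng):
    """Pick a random point at distance at most k from x, clamped to the domain."""
    step = rng.randint(1, k) * rng.choice((-1, 1))
    return max(lower, min(upper, x + step))


def vns(f, x0, lower, upper, k_max=5, max_rounds=200, seed=0):
    rng = random.Random(seed)
    x = local_search(f, x0, lower, upper)
    for _ in range(max_rounds):
        k = 1
        while k <= k_max:
            candidate = local_search(f, shake(x, k, lower, upper, rng), lower, upper)
            if f(candidate) < f(x):
                x, k = candidate, 1   # improvement: move and restart at k = 1
            else:
                k += 1                # no improvement: try a larger neighborhood
    return x


LOWER, UPPER = -50, 50
start = local_search(f, 40, LOWER, UPPER)  # plain local search, for comparison
best = vns(f, 40, LOWER, UPPER)
```

By construction, `best` is never worse than the result of the plain local search in `start`, since the incumbent is replaced only when the objective strictly decreases.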

APOPT is a software package for solving large-scale optimization problems.

MINOS is a Fortran software package for solving linear and nonlinear mathematical optimization problems. MINOS may be used for linear programming, quadratic programming, and more general objective functions and constraints, and for finding a feasible point for a set of linear or nonlinear equalities and inequalities.

Convex Over and Under ENvelopes for Nonlinear Estimation (Couenne) is an open-source library for solving global optimization problems, also termed mixed-integer nonlinear optimization problems. A global optimization problem requires minimizing a function, called the objective function, subject to a set of constraints. Both the objective function and the constraints might be nonlinear and nonconvex. To solve these problems, Couenne uses a reformulation procedure and provides a linear programming approximation of any nonconvex optimization problem.
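To give a flavor of what a linear approximation of a nonconvex term looks like, the sketch below uses McCormick envelopes for the bilinear product x·y over a box. McCormick envelopes are one standard construction of linear under- and overestimators; they are shown here purely as an illustration and are not claimed to describe Couenne's internals. The bounds, grid, and function name are hypothetical.

```python
def mccormick_bounds(x, y, x_lo, x_hi, y_lo, y_hi):
    """Return (under, over) linear estimates of x*y valid on the given box."""
    # Underestimators follow from (x - x_lo)(y - y_lo) >= 0 and
    # (x_hi - x)(y_hi - y) >= 0.
    under = max(x_lo * y + x * y_lo - x_lo * y_lo,
                x_hi * y + x * y_hi - x_hi * y_hi)
    # Overestimators follow from (x_hi - x)(y - y_lo) >= 0 and
    # (x - x_lo)(y_hi - y) >= 0.
    over = min(x_hi * y + x * y_lo - x_hi * y_lo,
               x_lo * y + x * y_hi - x_lo * y_hi)
    return under, over


# Hypothetical box: x in [-1, 2], y in [0, 3], sampled on a 0.25 grid.
X_LO, X_HI, Y_LO, Y_HI = -1.0, 2.0, 0.0, 3.0
grid_x = [X_LO + 0.25 * i for i in range(13)]
grid_y = [Y_LO + 0.25 * j for j in range(13)]

violations = []   # grid points where the envelope fails to enclose x*y
max_gap = 0.0     # widest distance between over- and underestimator
for x in grid_x:
    for y in grid_y:
        under, over = mccormick_bounds(x, y, X_LO, X_HI, Y_LO, Y_HI)
        if not (under - 1e-12 <= x * y <= over + 1e-12):
            violations.append((x, y))
        max_gap = max(max_gap, over - under)
```

The envelope is exact at the corners of the box and loosest in the interior, which is why global solvers that rely on such relaxations typically shrink variable bounds through branching to tighten the approximation.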

Artelys Knitro is a commercial software package for solving large scale nonlinear mathematical optimization problems.

Algebraic modeling languages like AIMMS, AMPL, GAMS, MPL and others have been developed to facilitate the description of a problem in mathematical terms and to link the abstract formulation with data-management systems on the one hand and appropriate algorithms for solution on the other. Robust algorithms and modeling language interfaces have been developed for a large variety of mathematical programming problems such as linear programs (LPs), nonlinear programs (NPs), mixed integer programs (MIPs), mixed complementarity programs (MCPs) and others. Researchers are constantly updating the types of problems and algorithms that they wish to use in specific domain applications.

ANTIGONE is a deterministic global optimization solver for general Mixed-Integer Nonlinear Programs (MINLP).

Octeract Engine is a proprietary massively parallel deterministic global optimization solver for general Mixed-Integer Nonlinear Programs (MINLP).
