In numerical analysis, Newton's method, also known as the Newton–Raphson method, named after Isaac Newton and Joseph Raphson, is a root-finding algorithm which produces successively better approximations to the roots of a real-valued function. The most basic version starts with a real-valued function f, its derivative f′, and an initial guess x0 for a root of f. If f satisfies certain assumptions and the initial guess is close, then

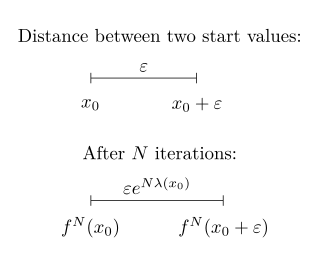

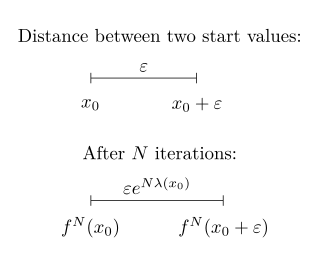

In mathematics, the Lyapunov exponent or Lyapunov characteristic exponent of a dynamical system is a quantity that characterizes the rate of separation of infinitesimally close trajectories. Quantitatively, two trajectories in phase space with initial separation vector diverge at a rate given by

In numerical analysis, the secant method is a root-finding algorithm that uses a succession of roots of secant lines to better approximate a root of a function f. The secant method can be thought of as a finite-difference approximation of Newton's method. However, the secant method predates Newton's method by over 3000 years.

In mathematics and computing, the Levenberg–Marquardt algorithm, also known as the damped least-squares (DLS) method, is used to solve non-linear least squares problems. These minimization problems arise especially in least squares curve fitting. The LMA interpolates between the Gauss–Newton algorithm (GNA) and the method of gradient descent. The LMA is more robust than the GNA, which means that in many cases it finds a solution even if it starts very far off the final minimum. For well-behaved functions and reasonable starting parameters, the LMA tends to be slower than the GNA. LMA can also be viewed as Gauss–Newton using a trust region approach.

The Gauss–Newton algorithm is used to solve non-linear least squares problems, which is equivalent to minimizing a sum of squared function values. It is an extension of Newton's method for finding a minimum of a non-linear function. Since a sum of squares must be nonnegative, the algorithm can be viewed as using Newton's method to iteratively approximate zeroes of the components of the sum, and thus minimizing the sum. In this sense, the algorithm is also an effective method for solving overdetermined systems of equations. It has the advantage that second derivatives, which can be challenging to compute, are not required.

In optimization, the line search strategy is one of two basic iterative approaches to find a local minimum of an objective function . The other approach is trust region.

In numerical analysis, the Crank–Nicolson method is a finite difference method used for numerically solving the heat equation and similar partial differential equations. It is a second-order method in time. It is implicit in time, can be written as an implicit Runge–Kutta method, and it is numerically stable. The method was developed by John Crank and Phyllis Nicolson in the mid 20th century.

In numerical optimization, the Broyden–Fletcher–Goldfarb–Shanno (BFGS) algorithm is an iterative method for solving unconstrained nonlinear optimization problems. Like the related Davidon–Fletcher–Powell method, BFGS determines the descent direction by preconditioning the gradient with curvature information. It does so by gradually improving an approximation to the Hessian matrix of the loss function, obtained only from gradient evaluations via a generalized secant method.

Quasi-Newton methods are methods used to either find zeroes or local maxima and minima of functions, as an alternative to Newton's method. They can be used if the Jacobian or Hessian is unavailable or is too expensive to compute at every iteration. The "full" Newton's method requires the Jacobian in order to search for zeros, or the Hessian for finding extrema. Some iterative methods that reduce to Newton's method, such as SLSQP, may be considered quasi-Newtonian.

In numerical optimization, the nonlinear conjugate gradient method generalizes the conjugate gradient method to nonlinear optimization. For a quadratic function

In computational chemistry, a constraint algorithm is a method for satisfying the Newtonian motion of a rigid body which consists of mass points. A restraint algorithm is used to ensure that the distance between mass points is maintained. The general steps involved are: (i) choose novel unconstrained coordinates, (ii) introduce explicit constraint forces, (iii) minimize constraint forces implicitly by the technique of Lagrange multipliers or projection methods.

Numerical continuation is a method of computing approximate solutions of a system of parameterized nonlinear equations,

The Kantorovich theorem, or Newton–Kantorovich theorem, is a mathematical statement on the semi-local convergence of Newton's method. It was first stated by Leonid Kantorovich in 1948. It is similar to the form of the Banach fixed-point theorem, although it states existence and uniqueness of a zero rather than a fixed point.

Equilibrium constants are determined in order to quantify chemical equilibria. When an equilibrium constant K is expressed as a concentration quotient,

The Symmetric Rank 1 (SR1) method is a quasi-Newton method to update the second derivative (Hessian) based on the derivatives (gradients) calculated at two points. It is a generalization to the secant method for a multidimensional problem. This update maintains the symmetry of the matrix but does not guarantee that the update be positive definite.

The Davidon–Fletcher–Powell formula finds the solution to the secant equation that is closest to the current estimate and satisfies the curvature condition. It was the first quasi-Newton method to generalize the secant method to a multidimensional problem. This update maintains the symmetry and positive definiteness of the Hessian matrix.

Non-linear least squares is the form of least squares analysis used to fit a set of m observations with a model that is non-linear in n unknown parameters (m ≥ n). It is used in some forms of nonlinear regression. The basis of the method is to approximate the model by a linear one and to refine the parameters by successive iterations. There are many similarities to linear least squares, but also some significant differences. In economic theory, the non-linear least squares method is applied in (i) the probit regression, (ii) threshold regression, (iii) smooth regression, (iv) logistic link regression, (v) Box–Cox transformed regressors ().

In physics, Lagrangian mechanics is a formulation of classical mechanics founded on the stationary-action principle. It was introduced by the Italian-French mathematician and astronomer Joseph-Louis Lagrange in his presentation to the Turin Academy of Science in 1760 culminating in his 1788 grand opus, Mécanique analytique.

Powell's dog leg method, also called Powell's hybrid method, is an iterative optimisation algorithm for the solution of non-linear least squares problems, introduced in 1970 by Michael J. D. Powell. Similarly to the Levenberg–Marquardt algorithm, it combines the Gauss–Newton algorithm with gradient descent, but it uses an explicit trust region. At each iteration, if the step from the Gauss–Newton algorithm is within the trust region, it is used to update the current solution. If not, the algorithm searches for the minimum of the objective function along the steepest descent direction, known as Cauchy point. If the Cauchy point is outside of the trust region, it is truncated to the boundary of the latter and it is taken as the new solution. If the Cauchy point is inside the trust region, the new solution is taken at the intersection between the trust region boundary and the line joining the Cauchy point and the Gauss-Newton step.

The Barzilai-Borwein method is an iterative gradient descent method for unconstrained optimization using either of two step sizes derived from the linear trend of the most recent two iterates. This method, and modifications, are globally convergent under mild conditions, and perform competitively with conjugate gradient methods for many problems. Not depending on the objective itself, it can also solve some systems of linear and non-linear equations.