Computer vision tasks include methods for acquiring, processing, analyzing and understanding digital images, and for extracting high-dimensional data from the real world in order to produce numerical or symbolic information, e.g. in the form of decisions. Understanding in this context means the transformation of visual images into descriptions of the world that make sense to thought processes and can elicit appropriate action. This image understanding can be seen as the disentangling of symbolic information from image data using models constructed with the aid of geometry, physics, statistics, and learning theory.

A point cloud is a discrete set of data points in space. The points may represent a 3D shape or object. Each point position has its set of Cartesian coordinates. Point clouds are generally produced by 3D scanners or by photogrammetry software, which measure many points on the external surfaces of objects around them. As the output of 3D scanning processes, point clouds are used for many purposes, including to create 3D computer-aided design (CAD) or geographic information systems (GIS) models for manufactured parts, for metrology and quality inspection, and for a multitude of visualizing, animating, rendering, and mass customization applications.
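As an illustration of the data structure described above, a point cloud can be held as a plain list of Cartesian (x, y, z) tuples. The sketch below is only a minimal example under that assumption; the helper names `centroid` and `bounding_box` and the sample coordinates are hypothetical and do not come from any particular scanner or library.

```python
from typing import List, Tuple

# Assumption: one point is an (x, y, z) tuple of Cartesian coordinates.
Point = Tuple[float, float, float]


def centroid(points: List[Point]) -> Point:
    """Return the arithmetic mean position of all points."""
    n = len(points)
    if n == 0:
        raise ValueError("empty point cloud")
    sx = sum(p[0] for p in points)
    sy = sum(p[1] for p in points)
    sz = sum(p[2] for p in points)
    return (sx / n, sy / n, sz / n)


def bounding_box(points: List[Point]) -> Tuple[Point, Point]:
    """Return the axis-aligned (min corner, max corner) enclosing the cloud."""
    if not points:
        raise ValueError("empty point cloud")
    xs, ys, zs = zip(*points)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


# Hypothetical sample data: four points measured on an object's surface.
cloud = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 4.0, 0.0), (0.0, 4.0, 6.0)]
print(centroid(cloud))
print(bounding_box(cloud))
```

Simple summaries such as the centroid and bounding box are typical first steps before a cloud is aligned, meshed, or compared against a CAD model for inspection.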

Stereoscopy is a technique for creating or enhancing the illusion of depth in an image by means of stereopsis for binocular vision. The word stereoscopy derives from Greek στερεός (stereos) 'firm, solid', and σκοπέω (skopeō) 'to look, to see'. Any stereoscopic image is called a stereogram. Originally, stereogram referred to a pair of stereo images which could be viewed using a stereoscope.

Bullet time is a visual effect or visual impression of detaching the time and space of a camera from that of its visible subject. It is a depth-enhanced simulation of variable-speed action and performance found in films, broadcast advertisements, and realtime graphics within video games and other special media. It is characterized by its extreme transformation of both time and space. This is almost impossible with conventional slow motion, as the physical camera would have to move implausibly fast; the concept implies that only a "virtual camera", often illustrated within the confines of a computer-generated environment such as a virtual world or virtual reality, would be capable of "filming" bullet-time types of moments. Technical and historical variations of this effect have been referred to as time slicing, view morphing, temps mort and virtual cinematography.

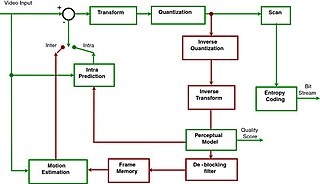

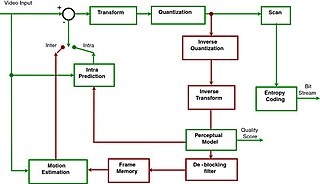

Advanced Video Coding (AVC), also referred to as H.264 or MPEG-4 Part 10, is a video compression standard based on block-oriented, motion-compensated coding. It is by far the most commonly used format for the recording, compression, and distribution of video content, used by 91% of video industry developers as of September 2019. It supports a maximum resolution of 8K UHD.

A camcorder is a self-contained portable electronic device with video and recording as its primary function. It is typically equipped with an articulating screen mounted on the left side, a belt to facilitate holding on the right side, hot-swappable battery facing towards the user, hot-swappable recording media, and an internally contained quiet optical zoom lens.

In 3D computer graphics, hidden-surface determination is the process of identifying what surfaces and parts of surfaces can be seen from a particular viewing angle. A hidden-surface determination algorithm is a solution to the visibility problem, which was one of the first major problems in the field of 3D computer graphics. The process of hidden-surface determination is sometimes called hiding, and such an algorithm is sometimes called a hider. When referring to line rendering it is known as hidden-line removal. Hidden-surface determination is necessary to render a scene correctly, so that one may not view features hidden behind the model itself, allowing only the naturally viewable portion of the graphic to be visible.
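One widely used way to solve the visibility problem is depth buffering (z-buffering), which the text above does not name specifically; it is used here purely as an illustrative choice. The sketch assumes a toy scene of axis-aligned rectangles, each at a constant depth, and the function name `render_depth_buffered` and the scene values are hypothetical.

```python
import math


def render_depth_buffered(width, height, rects):
    """Rasterize rectangles, keeping only the nearest surface at each pixel.

    rects: iterable of (x0, y0, x1, y1, depth, label), with x1 and y1
    exclusive. A smaller depth value means the surface is closer to the viewer.
    """
    depth = [[math.inf] * width for _ in range(height)]
    frame = [["."] * width for _ in range(height)]
    for x0, y0, x1, y1, z, label in rects:
        for y in range(max(y0, 0), min(y1, height)):
            for x in range(max(x0, 0), min(x1, width)):
                # Depth test: overwrite only if this surface is nearer.
                if z < depth[y][x]:
                    depth[y][x] = z
                    frame[y][x] = label
    return ["".join(row) for row in frame]


# Hypothetical scene: rectangle B is nearer and partly hides rectangle A.
scene = [
    (0, 0, 3, 2, 5.0, "A"),  # far rectangle
    (2, 1, 4, 3, 1.0, "B"),  # near rectangle overlapping A
]
image = render_depth_buffered(4, 3, scene)
for row in image:
    print(row)
```

Because the depth test is applied per pixel, the result does not depend on the order in which surfaces are drawn, which is one reason this approach is common in real-time rendering.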

In computer graphics, a shader is a computer program that calculates the appropriate levels of light, darkness, and color during the rendering of a 3D scene—a process known as shading. Shaders have evolved to perform a variety of specialized functions in computer graphics special effects and video post-processing, as well as general-purpose computing on graphics processing units.
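To make the idea of computing light and color levels concrete, the sketch below evaluates Lambertian diffuse shading for a single surface point. It is written in Python for readability rather than in a GPU shading language, and it assumes a single directional light with no ambient term; the function names and color values are hypothetical.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0:
        raise ValueError("zero-length vector")
    return tuple(c / length for c in v)


def lambert_shade(normal, light_dir, base_color):
    """Return the diffuse RGB color of a surface point.

    normal: surface normal at the point.
    light_dir: direction from the surface point toward the light.
    base_color: (r, g, b) integers in the range 0..255.
    """
    n = normalize(normal)
    l = normalize(light_dir)
    # Lambert's cosine law: brightness follows the cosine of the angle
    # between normal and light, clamped so back-facing points stay dark.
    intensity = max(0.0, sum(a * b for a, b in zip(n, l)))
    return tuple(round(c * intensity) for c in base_color)


print(lambert_shade((0, 0, 1), (0, 0, 1), (200, 100, 50)))   # light head-on
print(lambert_shade((0, 0, 1), (0, 0, -1), (200, 100, 50)))  # light behind
```

A real fragment shader would run an equivalent calculation once per pixel on the GPU, usually combined with further terms such as ambient and specular lighting.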

A volumetric display device is a display device that forms a visual representation of an object in three physical dimensions, as opposed to the planar image of traditional screens that simulate depth through a number of different visual effects. One definition offered by pioneers in the field is that volumetric displays create 3D imagery via the emission, scattering, or relaying of illumination from well-defined regions in (x,y,z) space.

In computer vision and image processing, motion estimation is the process of determining motion vectors that describe the transformation from one 2D image to another; usually from adjacent frames in a video sequence. It is an ill-posed problem as the motion happens in three dimensions (3D) but the images are a projection of the 3D scene onto a 2D plane. The motion vectors may relate to the whole image or specific parts, such as rectangular blocks, arbitrary shaped patches or even per pixel. The motion vectors may be represented by a translational model or many other models that can approximate the motion of a real video camera, such as rotation and translation in all three dimensions and zoom.
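For the block-based, purely translational case mentioned above, one common approach is exhaustive block matching with a sum-of-absolute-differences (SAD) cost. The sketch below is a minimal illustration under those assumptions, using small grayscale frames stored as nested lists; the function names, frame contents, and search range are hypothetical.

```python
def sad(prev, curr, bx, by, dx, dy, size):
    """Sum of absolute differences between a block in curr and a shifted block in prev."""
    total = 0
    for y in range(size):
        for x in range(size):
            total += abs(curr[by + y][bx + x] - prev[by + y + dy][bx + x + dx])
    return total


def estimate_block_motion(prev, curr, bx, by, size, search):
    """Find the displacement (dx, dy) into prev that best matches a block of curr.

    Returns (dx, dy, cost). The vector points from the block's position in the
    current frame to its best match in the previous frame.
    """
    h, w = len(prev), len(prev[0])
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip candidates that would fall outside the previous frame.
            if by + dy < 0 or by + dy + size > h:
                continue
            if bx + dx < 0 or bx + dx + size > w:
                continue
            cost = sad(prev, curr, bx, by, dx, dy, size)
            if best is None or cost < best[0]:
                best = (cost, dx, dy)
    if best is None:
        raise ValueError("no valid candidate inside the search window")
    return best[1], best[2], best[0]


def make_frame(w, h, px, py):
    """Hypothetical test frame: black background with a 2x2 textured patch."""
    frame = [[0] * w for _ in range(h)]
    patch = [[10, 20], [30, 40]]
    for y in range(2):
        for x in range(2):
            frame[py + y][px + x] = patch[y][x]
    return frame


prev_frame = make_frame(6, 6, 1, 1)  # patch at x=1, y=1
curr_frame = make_frame(6, 6, 3, 2)  # patch moved to x=3, y=2
motion = estimate_block_motion(prev_frame, curr_frame, bx=3, by=2, size=2, search=2)
print(motion)
```

Exhaustive search is easy to follow but costly; practical encoders typically use faster search patterns and sub-pixel refinement, which are outside the scope of this sketch.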

Real-time computer graphics or real-time rendering is the sub-field of computer graphics focused on producing and analyzing images in real time. The term can refer to anything from rendering an application's graphical user interface (GUI) to real-time image analysis, but is most often used in reference to interactive 3D computer graphics, typically using a graphics processing unit (GPU). One example of this concept is a video game that rapidly renders changing 3D environments to produce an illusion of motion.

3D computer graphics, sometimes called CGI, 3-D-CGI or three-dimensional computer graphics, are graphics that use a three-dimensional representation of geometric data that is stored in the computer for the purposes of performing calculations and rendering digital images, usually 2D images but sometimes 3D images. The resulting images may be stored for viewing later or displayed in real time.

TDVision Systems, Inc., was a company that designed products and system architectures for stereoscopic video coding, stereoscopic video games, and head mounted displays. The company was founded by Manuel Gutierrez Novelo and Isidoro Pessah in Mexico in 2001 and moved to the United States in 2004.

2D-plus-Depth is a stereoscopic video coding format that is used for 3D displays, such as Philips WOWvx. Philips discontinued work on the WOWvx line in 2009, citing "current market developments". Currently, this Philips technology is used by the company SeeCubic, led by former key 3D engineers and scientists of Philips. SeeCubic offers autostereoscopic 3D displays that use the 2D-plus-Depth format for 3D video input.

Multiview Video Coding (MVC) is a stereoscopic video coding standard for video compression that allows video sequences captured simultaneously from multiple camera angles to be encoded in a single video stream. It uses the 2D-plus-Delta method and is an amendment to the H.264 video compression standard, developed jointly by MPEG and VCEG, with contributions from a number of companies, primarily Panasonic and LG Electronics.

DVB 3D-TV is a standard, partially released at the end of 2010, that includes techniques and procedures for sending a three-dimensional video signal over existing DVB transmission standards. At present there is a commercial requirements text for 3D TV broadcasters and set-top box manufacturers, but it contains no technical information.

Computer-generated imagery (CGI) is a specific technology or application of computer graphics for creating or improving images in art, printed media, simulators, videos and video games. These images are either static or dynamic. CGI refers to both 2D computer graphics and 3D computer graphics used to design characters, virtual worlds, or scenes and special effects. The application of CGI to creating or improving animations is called computer animation, or CGI animation.

This is a glossary of terms relating to computer graphics.

Volumetric capture or volumetric video is a technique that captures a three-dimensional space, such as a location or performance. This type of volumography acquires data that can be viewed on flat screens as well as using 3D displays and VR goggles. Consumer-facing formats are numerous and the required motion capture techniques lean on computer graphics, photogrammetry, and other computation-based methods. The viewer generally experiences the result in a real-time engine and has direct input in exploring the generated volume.