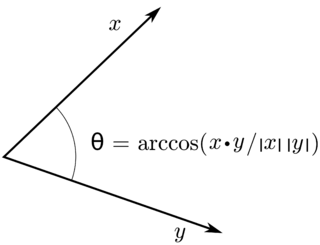

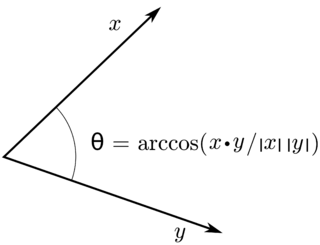

In mathematics, an inner product space is a real vector space or a complex vector space with an operation called an inner product. The inner product of two vectors in the space is a scalar, often denoted with angle brackets such as in . Inner products allow formal definitions of intuitive geometric notions, such as lengths, angles, and orthogonality of vectors. Inner product spaces generalize Euclidean vector spaces, in which the inner product is the dot product or scalar product of Cartesian coordinates. Inner product spaces of infinite dimension are widely used in functional analysis. Inner product spaces over the field of complex numbers are sometimes referred to as unitary spaces. The first usage of the concept of a vector space with an inner product is due to Giuseppe Peano, in 1898.

In mathematics, and more specifically in linear algebra, a linear map is a mapping between two vector spaces that preserves the operations of vector addition and scalar multiplication. The same names and the same definition are also used for the more general case of modules over a ring; see Module homomorphism.

In mathematics, a set B of vectors in a vector space V is called a basis if every element of V may be written in a unique way as a finite linear combination of elements of B. The coefficients of this linear combination are referred to as components or coordinates of the vector with respect to B. The elements of a basis are called basis vectors.

In mathematics, an operator is generally a mapping or function that acts on elements of a space to produce elements of another space. There is no general definition of an operator, but the term is often used in place of function when the domain is a set of functions or other structured objects. Also, the domain of an operator is often difficult to characterize explicitly, and may be extended so as to act on related objects.

In mathematics and physics, a vector space is a set whose elements, often called vectors, can be added together and multiplied ("scaled") by numbers called scalars. Scalars are often real numbers, but can be complex numbers or, more generally, elements of any field. The operations of vector addition and scalar multiplication must satisfy certain requirements, called vector axioms. Real vector spaces and complex vector spaces are kinds of vector spaces based on different kinds of scalars: real numbers and complex numbers.

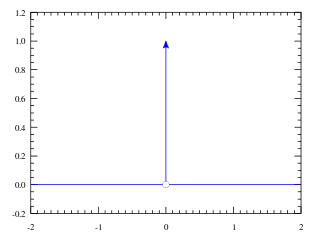

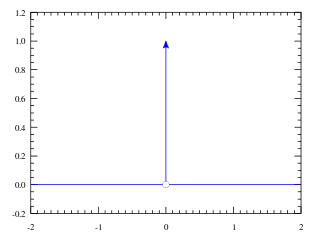

In mathematical analysis, the Dirac delta function, also known as the unit impulse, is a generalized function on the real numbers, whose value is zero everywhere except at zero, and whose integral over the entire real line is equal to one. Since there is no function having this property, modelling the delta "function" rigorously involves the use of limits or, as is common in mathematics, measure theory and the theory of distributions.

In physics, engineering and mathematics, the Fourier transform (FT) is an integral transform that takes a function as input and outputs another function that describes the extent to which various frequencies are present in the original function. The output of the transform is a complex-valued function of frequency. The term Fourier transform refers to both this complex-valued function and the mathematical operation. When a distinction needs to be made, the output of the operation is sometimes called the frequency domain representation of the original function. The Fourier transform is analogous to decomposing the sound of a musical chord into the intensities of its constituent pitches.

In mathematics, Cauchy's integral formula, named after Augustin-Louis Cauchy, is a central statement in complex analysis. It expresses the fact that a holomorphic function defined on a disk is completely determined by its values on the boundary of the disk, and it provides integral formulas for all derivatives of a holomorphic function. Cauchy's formula shows that, in complex analysis, "differentiation is equivalent to integration": complex differentiation, like integration, behaves well under uniform limits – a result that does not hold in real analysis.

In the mathematical field of differential geometry, a metric tensor is an additional structure on a manifold M that allows defining distances and angles, just as the inner product on a Euclidean space allows defining distances and angles there. More precisely, a metric tensor at a point p of M is a bilinear form defined on the tangent space at p, and a metric field on M consists of a metric tensor at each point p of M that varies smoothly with p.

In geometry, a three-dimensional space is a mathematical space in which three values (coordinates) are required to determine the position of a point. Most commonly, it is the three-dimensional Euclidean space, that is, the Euclidean space of dimension three, which models physical space. More general three-dimensional spaces are called 3-manifolds. The term may also refer colloquially to a subset of space, a three-dimensional region, a solid figure.

In theoretical physics, scalar field theory can refer to a relativistically invariant classical or quantum theory of scalar fields. A scalar field is invariant under any Lorentz transformation.

The gradient theorem, also known as the fundamental theorem of calculus for line integrals, says that a line integral through a gradient field can be evaluated by evaluating the original scalar field at the endpoints of the curve. The theorem is a generalization of the second fundamental theorem of calculus to any curve in a plane or space rather than just the real line.

In mathematics, the disintegration theorem is a result in measure theory and probability theory. It rigorously defines the idea of a non-trivial "restriction" of a measure to a measure zero subset of the measure space in question. It is related to the existence of conditional probability measures. In a sense, "disintegration" is the opposite process to the construction of a product measure.

In mathematics, Hilbert spaces allow the methods of linear algebra and calculus to be generalized from (finite-dimensional) Euclidean vector spaces to spaces that may be infinite-dimensional. Hilbert spaces arise naturally and frequently in mathematics and physics, typically as function spaces. Formally, a Hilbert space is a vector space equipped with an inner product that induces a distance function for which the space is a complete metric space. A Hilbert space is a special case of a Banach space.

In mathematics, the Pettis integral or Gelfand–Pettis integral, named after Israel M. Gelfand and Billy James Pettis, extends the definition of the Lebesgue integral to vector-valued functions on a measure space, by exploiting duality. The integral was introduced by Gelfand for the case when the measure space is an interval with Lebesgue measure. The integral is also called the weak integral in contrast to the Bochner integral, which is the strong integral.

Lagrangian field theory is a formalism in classical field theory. It is the field-theoretic analogue of Lagrangian mechanics. Lagrangian mechanics is used to analyze the motion of a system of discrete particles each with a finite number of degrees of freedom. Lagrangian field theory applies to continua and fields, which have an infinite number of degrees of freedom.

In mathematics, convenient vector spaces are locally convex vector spaces satisfying a very mild completeness condition.

In physics, a sinusoidal plane wave is a special case of plane wave: a field whose value varies as a sinusoidal function of time and of the distance from some fixed plane. It is also called a monochromatic plane wave, with constant frequency.

An integral bilinear form is a bilinear functional that belongs to the continuous dual space of , the injective tensor product of the locally convex topological vector spaces (TVSs) X and Y. An integral linear operator is a continuous linear operator that arises in a canonical way from an integral bilinear form.

This is a glossary for the terminology in a mathematical field of functional analysis.

![The arc length functional has as its domain the vector space of rectifiable curves - a subspace of

C

(

[

0

,

1

]

,

R

3

)

{\displaystyle C([0,1],\mathbb {R} ^{3})}

- and outputs a real scalar. This is an example of a non-linear functional. Arclength.svg](http://upload.wikimedia.org/wikipedia/commons/thumb/5/5a/Arclength.svg/400px-Arclength.svg.png)

![The Riemann integral is a linear functional on the vector space of functions defined on [a, b] that are Riemann-integrable from a to b. Integral as region under curve.svg](http://upload.wikimedia.org/wikipedia/commons/thumb/f/f2/Integral_as_region_under_curve.svg/220px-Integral_as_region_under_curve.svg.png)