Introduction

In a linear dynamical system, the variation of a state vector (an $N$-dimensional vector denoted $\mathbf{x}$) equals a constant matrix (denoted $\mathbf{A}$) multiplied by $\mathbf{x}$. This variation can take two forms: either as a flow, in which $\mathbf{x}$ varies continuously with time

$$\frac{d}{dt}\mathbf{x}(t) = \mathbf{A}\,\mathbf{x}(t)$$

or as a mapping, in which $\mathbf{x}$ varies in discrete steps

$$\mathbf{x}_{m+1} = \mathbf{A}\,\mathbf{x}_{m}$$

These equations are linear in the following sense: if $\mathbf{x}(t)$ and $\mathbf{y}(t)$ are two valid solutions, then so is any linear combination of the two solutions, e.g., $\mathbf{z}(t) = \alpha\,\mathbf{x}(t) + \beta\,\mathbf{y}(t)$, where $\alpha$ and $\beta$ are any two scalars. The matrix $\mathbf{A}$ need not be symmetric.
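As a minimal sketch of the mapping form and of the linearity property, the following pure-Python snippet repeatedly multiplies a state by a small non-symmetric matrix and checks that a linear combination of two solutions is again a solution. The helper names `mat_vec` and `iterate_map`, the 2×2 matrix `A`, and the scalars `alpha` and `beta` are illustrative assumptions, not taken from the text.

```python
def mat_vec(A, x):
    """Multiply matrix A (a list of rows) by vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def iterate_map(A, x0, steps):
    """Apply the linear map x -> A x to x0 `steps` times."""
    x = list(x0)
    for _ in range(steps):
        x = mat_vec(A, x)
    return x


# Hypothetical example values; A is deliberately non-symmetric.
A = [[0.5, 1.0],
     [0.0, 0.5]]
x0 = [1.0, 0.0]
y0 = [0.0, 1.0]
alpha, beta = 2.0, -3.0
steps = 5

# Evolve the two solutions and their linear combination separately.
z0 = [alpha * a + beta * b for a, b in zip(x0, y0)]
x_m = iterate_map(A, x0, steps)
y_m = iterate_map(A, y0, steps)
z_m = iterate_map(A, z0, steps)

# Superposition: evolving the combination matches combining the evolutions.
combo = [alpha * a + beta * b for a, b in zip(x_m, y_m)]
max_gap = max(abs(a - b) for a, b in zip(z_m, combo))
```

Here `max_gap` should be zero up to floating-point rounding, which is the superposition property stated above in numerical form.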

Linear dynamical systems can be solved exactly, in contrast to most nonlinear ones. Occasionally, a nonlinear system can be solved exactly by a change of variables to a linear system. Moreover, the solutions of (almost) any nonlinear system can be well-approximated by an equivalent linear system near its fixed points. Hence, understanding linear systems and their solutions is a crucial first step to understanding the more complex nonlinear systems.
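To illustrate the approximation near a fixed point, the sketch below uses the logistic map as a one-dimensional nonlinear example (an assumption chosen for illustration; it does not appear in the text). It compares the true deviation from the fixed point with the prediction of the linearized map, whose single "matrix" entry is the derivative of the map at the fixed point. The parameter `r`, the initial offset `delta0`, and the step count are hypothetical values.

```python
def logistic(x, r):
    """One step of the nonlinear logistic map x -> r x (1 - x)."""
    return r * x * (1.0 - x)


r = 2.5                            # hypothetical parameter value
x_star = 1.0 - 1.0 / r             # nonzero fixed point of the map
slope = r * (1.0 - 2.0 * x_star)   # derivative at the fixed point (equals 2 - r)

delta0 = 0.01                      # small initial offset from the fixed point
steps = 5

x = x_star + delta0                # state of the nonlinear system
delta_lin = delta0                 # state of the linearized system
for _ in range(steps):
    x = logistic(x, r)
    delta_lin = slope * delta_lin

delta_nonlin = x - x_star
gap = abs(delta_nonlin - delta_lin)
```

For a small enough `delta0` the two deviations stay close; the gap grows if the initial offset is made larger, which is where the linear approximation stops being reliable.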

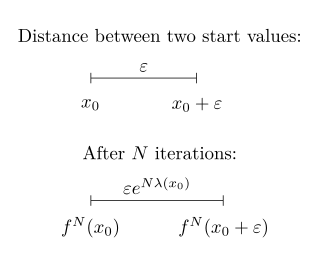

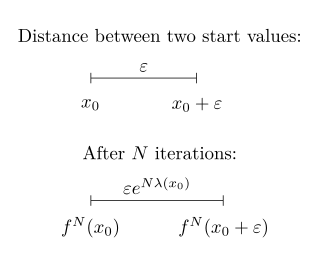

In mathematics, the Lyapunov exponent or Lyapunov characteristic exponent of a dynamical system is a quantity that characterizes the rate of separation of infinitesimally close trajectories. Quantitatively, two trajectories in phase space with initial separation vector $\delta\mathbf{Z}_0$ diverge at a rate given by $|\delta\mathbf{Z}(t)| \approx e^{\lambda t}\,|\delta\mathbf{Z}_0|$, where $\lambda$ is the Lyapunov exponent.

In physics, a Langevin equation is a stochastic differential equation describing how a system evolves when subjected to a combination of deterministic and fluctuating ("random") forces. The dependent variables in a Langevin equation typically are collective (macroscopic) variables changing only slowly in comparison to the other (microscopic) variables of the system. The fast (microscopic) variables are responsible for the stochastic nature of the Langevin equation. One application is to Brownian motion, which models the fluctuating motion of a small particle in a fluid.

In mathematics, an eigenfunction of a linear operator $D$ defined on some function space is any non-zero function $f$ in that space that, when acted upon by $D$, is only multiplied by some scaling factor called an eigenvalue. As an equation, this condition can be written as $Df = \lambda f$ for some scalar eigenvalue $\lambda$.

Linear elasticity is a mathematical model of how solid objects deform and become internally stressed due to prescribed loading conditions. It is a simplification of the more general nonlinear theory of elasticity and a branch of continuum mechanics.

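In control theory, the discrete Lyapunov equation is of the form $A X A^{H} - X + Q = 0$, where $Q$ is a Hermitian matrix and $A^{H}$ is the conjugate transpose of $A$.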
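A minimal sketch of solving this kind of equation, assuming the real-matrix form $A X A^{T} - X + Q = 0$ and a matrix $A$ whose eigenvalues all lie inside the unit circle. Under those assumptions the series $X = \sum_{k \ge 0} A^{k} Q (A^{T})^{k}$ converges and satisfies the equation. The helper names, the truncation at 200 terms, and the example matrices are hypothetical choices.

```python
def matmul(P, R):
    """Product of two matrices given as lists of rows."""
    return [[sum(P[i][k] * R[k][j] for k in range(len(R)))
             for j in range(len(R[0]))] for i in range(len(P))]


def transpose(P):
    return [list(col) for col in zip(*P)]


def mat_add(P, R):
    return [[p + r for p, r in zip(pr, rr)] for pr, rr in zip(P, R)]


def lyapunov_series(A, Q, terms=200):
    """Sum the first `terms` terms of X = sum_k A^k Q (A^T)^k."""
    At = transpose(A)
    X = [[0.0] * len(Q) for _ in Q]
    term = [row[:] for row in Q]          # current term, starts at Q
    for _ in range(terms):
        X = mat_add(X, term)
        term = matmul(matmul(A, term), At)
    return X


# Hypothetical stable matrix (eigenvalues 0.5 and 0.3) and Q = identity.
A = [[0.5, 0.2],
     [0.0, 0.3]]
Q = [[1.0, 0.0],
     [0.0, 1.0]]

X = lyapunov_series(A, Q)

# Residual of A X A^T - X + Q, which should vanish for a solution.
AXAt = matmul(matmul(A, X), transpose(A))
residual = max(abs(AXAt[i][j] - X[i][j] + Q[i][j])
               for i in range(2) for j in range(2))
```

The series approach is chosen here for transparency rather than efficiency; numerical libraries typically use direct methods instead.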

In linear algebra, a generalized eigenvector of an $n \times n$ matrix $A$ is a vector that satisfies criteria more relaxed than those for an (ordinary) eigenvector.

In system analysis, among other fields of study, a linear time-invariant (LTI) system is a system that produces an output signal from any input signal subject to the constraints of linearity and time-invariance; these terms are briefly defined below. These properties apply (exactly or approximately) to many important physical systems, in which case the response y(t) of the system to an arbitrary input x(t) can be found directly using convolution: y(t) = (x ∗ h)(t) where h(t) is called the system's impulse response and ∗ represents convolution (not to be confused with multiplication). What's more, there are systematic methods for solving any such system (determining h(t)), whereas systems not meeting both properties are generally more difficult (or impossible) to solve analytically. A good example of an LTI system is any electrical circuit consisting of resistors, capacitors, inductors and linear amplifiers.
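The convolution relation can be sketched in discrete time, where the integral becomes a finite sum $y[n] = \sum_k x[k]\,h[n-k]$. The discrete setting, the function name `convolve`, and the example impulse response are assumptions made for illustration; the text itself describes the continuous-time case.

```python
def convolve(x, h):
    """Discrete convolution y[n] = sum_k x[k] * h[n - k] of two finite sequences."""
    y = [0.0] * (len(x) + len(h) - 1)
    for k, xk in enumerate(x):
        for j, hj in enumerate(h):
            y[k + j] += xk * hj
    return y


# Hypothetical impulse response: adds each sample to the previous one.
h = [1.0, 1.0]
x = [1.0, 2.0, 3.0]
y = convolve(x, h)

# Time-invariance: delaying the input by one sample delays the output by one sample.
x_delayed = [0.0] + x
y_delayed = convolve(x_delayed, h)
```

Feeding a unit impulse `[1.0]` into `convolve` returns `h` itself, which is why `h` is called the impulse response.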

In mathematics, the discrete Laplace operator is an analog of the continuous Laplace operator, defined so that it has meaning on a graph or a discrete grid. For the case of a finite-dimensional graph, the discrete Laplace operator is more commonly called the Laplacian matrix.
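As a small sketch, the Laplacian matrix of an undirected graph can be built as $L = D - A$, where $D$ is the diagonal matrix of vertex degrees and $A$ is the adjacency matrix (the usual convention, stated here as an assumption since the text gives no formula). The function name `laplacian_matrix` and the three-vertex path graph are hypothetical.

```python
def laplacian_matrix(n, edges):
    """Laplacian L = D - A of an undirected graph with vertices 0..n-1."""
    L = [[0] * n for _ in range(n)]
    for i, j in edges:
        L[i][i] += 1   # each edge raises the degree of both endpoints
        L[j][j] += 1
        L[i][j] -= 1   # off-diagonal entries are minus the adjacency
        L[j][i] -= 1
    return L


# Hypothetical example: the path graph 0 - 1 - 2.
path_edges = [(0, 1), (1, 2)]
L = laplacian_matrix(3, path_edges)
row_sums = [sum(row) for row in L]
```

Every row of `L` sums to zero, so the constant vector is always an eigenvector with eigenvalue zero.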

In applied mathematics, in particular the context of nonlinear system analysis, a phase plane is a visual display of certain characteristics of certain kinds of differential equations; a coordinate plane with axes being the values of the two state variables, say (x, y), or (q, p) etc. (any pair of variables). It is a two-dimensional case of the general n-dimensional phase space.

In linear algebra, an eigenvector or characteristic vector of a linear transformation is a nonzero vector that changes at most by a constant factor when that linear transformation is applied to it. The corresponding eigenvalue, often represented by $\lambda$, is the multiplying factor.
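One simple way to find an eigenvector numerically is power iteration, sketched below. It is named here as an illustrative technique, not one taken from the text, and it assumes the matrix has a single eigenvalue of largest magnitude and that the starting vector is not orthogonal to the corresponding eigenvector. The function names and the symmetric 2×2 example are hypothetical.

```python
import math


def mat_vec(A, x):
    """Multiply matrix A (a list of rows) by vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def power_iteration(A, iterations=100):
    """Estimate the dominant eigenvalue and a unit eigenvector of A."""
    v = [1.0] + [0.0] * (len(A) - 1)
    for _ in range(iterations):
        w = mat_vec(A, v)
        norm = math.sqrt(sum(c * c for c in w))
        v = [c / norm for c in w]
    # Rayleigh quotient v^T A v; v already has unit length.
    Av = mat_vec(A, v)
    eigenvalue = sum(a * b for a, b in zip(Av, v))
    return eigenvalue, v


# Hypothetical example with eigenvalues 3 and 1.
A = [[2.0, 1.0],
     [1.0, 2.0]]
lam, v = power_iteration(A)

# Check the defining property A v = lam v component by component.
Av = mat_vec(A, v)
residual = max(abs(a - lam * c) for a, c in zip(Av, v))
```

The vector `v` changes only by the factor `lam` under multiplication by `A`, which is the defining property described above.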

In continuum mechanics, the Cauchy stress tensor, also called true stress tensor or simply stress tensor, completely defines the state of stress at a point inside a material in the deformed state, placement, or configuration. The second-order tensor $\boldsymbol{\sigma}$ consists of nine components and relates a unit-length direction vector $\mathbf{e}$ to the traction vector $\mathbf{T}^{(\mathbf{e})}$ across an imaginary surface perpendicular to $\mathbf{e}$: $\mathbf{T}^{(\mathbf{e})} = \mathbf{e} \cdot \boldsymbol{\sigma}$.

In mathematics, preconditioning is the application of a transformation, called the preconditioner, that conditions a given problem into a form that is more suitable for numerical solving methods. Preconditioning is typically related to reducing a condition number of the problem. The preconditioned problem is then usually solved by an iterative method.

In the field of multivariate statistics, kernel principal component analysis (kernel PCA) is an extension of principal component analysis (PCA) using techniques of kernel methods. Using a kernel, the originally linear operations of PCA are performed in a reproducing kernel Hilbert space.

A multi-compartment model is a type of mathematical model used for describing the way materials or energies are transmitted among the compartments of a system. Sometimes, the physical system that we try to model in equations is too complex, so it is much easier to discretize the problem and reduce the number of parameters. Each compartment is assumed to be a homogeneous entity within which the entities being modeled are equivalent. A multi-compartment model is classified as a lumped parameters model. Similar to more general mathematical models, multi-compartment models can treat variables as continuous, such as a differential equation, or as discrete, such as a Markov chain. Depending on the system being modeled, they can be treated as stochastic or deterministic.

In mathematics, an eigenvalue perturbation problem is that of finding the eigenvectors and eigenvalues of a system that is perturbed from one with known eigenvectors and eigenvalues. This is useful for studying how sensitive the original system's eigenvectors and eigenvalues are to changes in the system. This type of analysis was popularized by Lord Rayleigh in his investigation of harmonic vibrations of a string perturbed by small inhomogeneities.

In linear algebra, eigendecomposition is the factorization of a matrix into a canonical form, whereby the matrix is represented in terms of its eigenvalues and eigenvectors. Only diagonalizable matrices can be factorized in this way. When the matrix being factorized is a normal or real symmetric matrix, the decomposition is called "spectral decomposition", derived from the spectral theorem.

In mathematics, a nonlinear eigenproblem, sometimes nonlinear eigenvalue problem, is a generalization of the (ordinary) eigenvalue problem to equations that depend nonlinearly on the eigenvalue. Specifically, it refers to equations of the form $M(\lambda)\,x = 0$, where $x \neq 0$ is a vector and $M$ is a matrix-valued function of the number $\lambda$.

A differential equation is a mathematical equation for an unknown function of one or several variables that relates the values of the function itself and its derivatives of various orders. A matrix differential equation contains more than one function stacked into vector form with a matrix relating the functions to their derivatives.
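A minimal sketch of a matrix differential equation, assuming the constant-coefficient form $\dot{\mathbf{x}} = \mathbf{A}\mathbf{x}$ with a hypothetical 2×2 matrix that generates a rotation. The snippet integrates the system with the classical fourth-order Runge-Kutta method (a standard numerical scheme chosen for illustration, not one named in the text) and compares the result with the exact solution $(\cos t, -\sin t)$ for the initial state $(1, 0)$.

```python
import math


def mat_vec(A, x):
    """Multiply matrix A (a list of rows) by vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def rk4_step(A, x, h):
    """Advance dx/dt = A x by one classical Runge-Kutta step of size h."""
    k1 = mat_vec(A, x)
    k2 = mat_vec(A, [xi + 0.5 * h * k for xi, k in zip(x, k1)])
    k3 = mat_vec(A, [xi + 0.5 * h * k for xi, k in zip(x, k2)])
    k4 = mat_vec(A, [xi + h * k for xi, k in zip(x, k3)])
    return [xi + (h / 6.0) * (a + 2.0 * b + 2.0 * c + d)
            for xi, a, b, c, d in zip(x, k1, k2, k3, k4)]


# Hypothetical system: x1' = x2, x2' = -x1.
A = [[0.0, 1.0],
     [-1.0, 0.0]]
x = [1.0, 0.0]

n_steps = 1000
h = 1.0 / n_steps            # integrate from t = 0 to t = 1
for _ in range(n_steps):
    x = rk4_step(A, x, h)

exact = [math.cos(1.0), -math.sin(1.0)]
error = max(abs(a - b) for a, b in zip(x, exact))
```

Stacking the two unknown functions into one vector lets a single matrix encode how each derivative depends on every function.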

Riemann invariants are mathematical transformations made on a system of conservation equations to make them more easily solvable. Riemann invariants are constant along the characteristic curves of the partial differential equations, hence the name invariant. They were first obtained by Bernhard Riemann in his work on plane waves in gas dynamics.

Tau functions are an important ingredient in the modern mathematical theory of integrable systems, and have numerous applications in a variety of other domains. They were originally introduced by Ryogo Hirota in his direct method approach to soliton equations, based on expressing them in an equivalent bilinear form.