The John von Neumann Theory Prize of the Institute for Operations Research and the Management Sciences (INFORMS) is awarded annually to an individual who has made fundamental and sustained contributions to theory in operations research and the management sciences.

Linear programming is a method to achieve the best outcome in a mathematical model whose requirements are represented by linear relationships. Linear programming is a special case of mathematical programming.
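As a minimal sketch of what "best outcome under linear requirements" means, the snippet below solves a small two-variable linear program by brute force: it intersects every pair of constraint lines, keeps the feasible intersection points, and picks the one with the largest objective value. The example problem (maximize 3x + 5y subject to x ≤ 4, 2y ≤ 12, 3x + 2y ≤ 18, x, y ≥ 0) and the helper name `solve_lp_2d` are illustrative assumptions, not taken from the text. The approach assumes a bounded feasible region and is not how practical solvers work.

```python
from itertools import combinations

# Each constraint is (a, b, rhs), meaning a*x + b*y <= rhs.
# Hypothetical example data, chosen only for illustration.
constraints = [
    (1.0, 0.0, 4.0),    # x <= 4
    (0.0, 2.0, 12.0),   # 2y <= 12
    (3.0, 2.0, 18.0),   # 3x + 2y <= 18
    (-1.0, 0.0, 0.0),   # x >= 0
    (0.0, -1.0, 0.0),   # y >= 0
]
objective = (3.0, 5.0)  # maximize 3x + 5y


def solve_lp_2d(objective, constraints, tol=1e-9):
    """Maximize a linear objective over a bounded 2-D polygon by vertex enumeration."""
    best_point, best_value = None, float("-inf")
    for (a1, b1, c1), (a2, b2, c2) in combinations(constraints, 2):
        det = a1 * b2 - a2 * b1
        if abs(det) < tol:
            continue  # parallel boundary lines never meet in a single point
        # Cramer's rule for the intersection of the two boundary lines.
        x = (c1 * b2 - c2 * b1) / det
        y = (a1 * c2 - a2 * c1) / det
        # Keep the point only if it satisfies every constraint.
        if all(a * x + b * y <= rhs + tol for a, b, rhs in constraints):
            value = objective[0] * x + objective[1] * y
            if value > best_value:
                best_point, best_value = (x, y), value
    return best_point, best_value


lp_point, lp_value = solve_lp_2d(objective, constraints)
```

Enumerating vertices works here because a linear objective over a bounded polygon attains its maximum at a vertex. The number of candidate points grows combinatorially with problem size, which is why algorithms such as the simplex method or interior-point methods are used instead.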

Mathematical optimization or mathematical programming is the selection of a best element, with regard to some criterion, from some set of available alternatives. Optimization problems arise in all quantitative disciplines, from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries.

Interior-point methods are a certain class of algorithms that solve linear and nonlinear convex optimization problems.

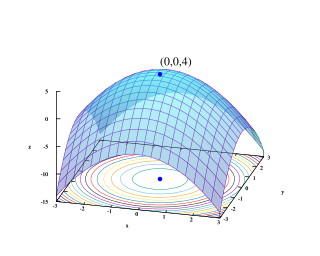

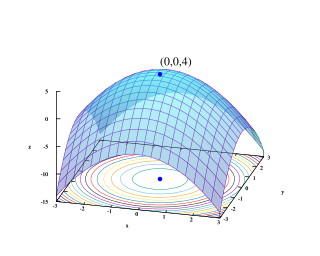

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets. Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard.

In mathematical optimization, the ellipsoid method is an iterative method for minimizing convex functions. When specialized to solving feasible linear optimization problems with rational data, the ellipsoid method is an algorithm which finds an optimal solution in a number of steps that is polynomial in the input size.
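The iteration can be sketched with the textbook central-cut update, shown here for unconstrained minimization of a smooth convex function. The function name `ellipsoid_minimize`, the quadratic test objective, and the starting radius are assumptions made for illustration. The sketch also assumes that the dimension is at least 2 and that the initial ball contains a minimizer.

```python
def ellipsoid_minimize(f, grad, x0, radius, iters):
    """Central-cut ellipsoid method; the ellipsoid is {z : (z-x)^T P^{-1} (z-x) <= 1}."""
    n = len(x0)  # the update below requires n >= 2
    x = list(x0)
    # Start from a ball of the given radius centred at x0.
    P = [[radius ** 2 if i == j else 0.0 for j in range(n)] for i in range(n)]
    best_x, best_f = list(x), f(x)
    for _ in range(iters):
        g = grad(x)
        Pg = [sum(P[i][j] * g[j] for j in range(n)) for i in range(n)]
        gPg = sum(g[i] * Pg[i] for i in range(n))
        if gPg <= 0.0:
            break  # zero gradient (or numerical breakdown): stop
        s = gPg ** 0.5
        Pgt = [v / s for v in Pg]  # P times the normalized gradient
        # Move the centre into the half-space that must contain a minimizer.
        x = [x[i] - Pgt[i] / (n + 1) for i in range(n)]
        # Shrink to the smallest ellipsoid covering that half of the old one.
        scale = n * n / (n * n - 1.0)
        P = [[scale * (P[i][j] - 2.0 / (n + 1) * Pgt[i] * Pgt[j])
              for j in range(n)] for i in range(n)]
        fx = f(x)
        if fx < best_f:
            best_x, best_f = list(x), fx
    return best_x, best_f


# Hypothetical test problem: a convex quadratic with minimizer (1, -2).
def quad(x):
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2


def quad_grad(x):
    return [2.0 * (x[0] - 1.0), 2.0 * (x[1] + 2.0)]


ell_x, ell_f = ellipsoid_minimize(quad, quad_grad, [0.0, 0.0], radius=10.0, iters=200)
```

The objective value at the centre is not monotone across iterations, so the sketch keeps track of the best point seen. Each step shrinks the ellipsoid's volume by a fixed factor that depends only on the dimension, which is the source of the polynomial iteration bound.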

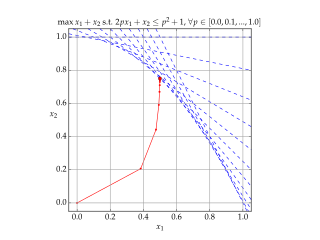

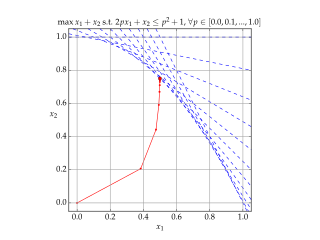

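In convex optimization, a linear matrix inequality (LMI) is an expression of the form

$$\operatorname{LMI}(y) := A_0 + y_1 A_1 + \cdots + y_m A_m \succeq 0,$$

where $y = (y_1, \ldots, y_m)$ is a real vector, $A_0, \ldots, A_m$ are symmetric matrices, and $B \succeq 0$ means that $B$ is positive semidefinite.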

In optimization, a self-concordant function is a function $f : \mathbb{R} \to \mathbb{R}$ for which

$$|f'''(x)| \le 2 f''(x)^{3/2}.$$
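As a quick check of the usual defining inequality $|f'''(x)| \le 2 f''(x)^{3/2}$, consider the logarithmic barrier $f(x) = -\ln x$ on $x > 0$. This is a standard example and is not taken from the text above:

$$f''(x) = \frac{1}{x^{2}}, \qquad f'''(x) = -\frac{2}{x^{3}}, \qquad |f'''(x)| = \frac{2}{x^{3}} = 2\left(\frac{1}{x^{2}}\right)^{3/2} = 2 f''(x)^{3/2}.$$

The inequality holds with equality, so the logarithmic barrier is self-concordant. This is one reason it appears so often in the analysis of interior-point methods.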

Ellis Lane Johnson is Professor Emeritus and Coca-Cola Chaired Professor in the H. Milton Stewart School of Industrial and Systems Engineering at Georgia Institute of Technology in Atlanta, Georgia.

Yinyu Ye is a Chinese American theoretical computer scientist working on mathematical optimization. He is a specialist in interior point methods, especially in convex minimization and linear programming. He is a professor of Management Science and Engineering and Kwoh-Ting Li Chair Professor of Engineering at Stanford University. He also holds a courtesy appointment in the Department of Electrical Engineering. Ye also is a co-founder of minMax Optimization Inc.

Laurence Alexander Wolsey is an English mathematician working in the field of integer programming. He is a former president and research director of the Center for Operations Research and Econometrics (CORE) at Université catholique de Louvain in Belgium. He is professor emeritus of applied mathematics at the engineering school of the same university.

Yurii Nesterov is a Russian mathematician, an internationally recognized expert in convex optimization, especially in the development of efficient algorithms and numerical optimization analysis. He is currently a professor at the University of Louvain (UCLouvain).

Gérard Pierre Cornuéjols is the IBM University Professor of Operations Research in the Carnegie Mellon University Tepper School of Business. His research interests include facility location, integer programming, balanced matrices, and perfect graphs.

The Center for Operations Research and Econometrics (CORE) is an interdisciplinary research institute of the University of Louvain (UCLouvain) located in Louvain-la-Neuve, Belgium. Since 2010, it has been part of the Louvain Institute of Data Analysis and Modeling in economics and statistics (LIDAM), along with the Institute for Economic and Social Research (IRES), Louvain Finance (LFIN) and the Institute of Statistics, Biostatistics and Actuarial Sciences (ISBA).

Jorge Nocedal is an internationally known applied mathematician and computer scientist, and the Walter P. Murphy professor at Northwestern University. He received the John von Neumann Theory Prize in 2017 and was elected a member of the National Academy of Engineering in 2020.

The Dantzig Prize is given every three years to one or more individuals for research which, by virtue of its originality, breadth, and depth, has a major impact on the field of mathematical programming. It is named in honor of George B. Dantzig and is awarded jointly by the Society for Industrial and Applied Mathematics (SIAM) and the Mathematical Optimization Society (MOS). The prize fund was established in 1979, and the prize was first awarded in 1982.

In mathematical optimization, oracle complexity is a standard theoretical framework to study the computational requirements for solving classes of optimization problems. It is suitable for analyzing iterative algorithms which proceed by computing local information about the objective function at various points. The framework has been used to provide tight worst-case guarantees on the number of required iterations, for several important classes of optimization problems.

James Milton Renegar Jr. is an American mathematician, specializing in optimization algorithms for linear programming and nonlinear programming.

In convex geometry and polyhedral combinatorics, the extension complexity of a convex polytope $P$ is the smallest number of facets among convex polytopes $Q$ that have $P$ as a projection. In this context, $Q$ is called an extended formulation of $P$; it may have much higher dimension than $P$.

In mathematics, mirror descent is an iterative optimization algorithm for finding a local minimum of a differentiable function.
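A minimal sketch of one common instance follows: mirror descent on the probability simplex with the negative-entropy mirror map, which yields the multiplicative "exponentiated gradient" update. The function name `mirror_descent_simplex`, the linear test objective, and the step size are assumptions chosen for illustration. Other mirror maps give different updates.

```python
import math


def mirror_descent_simplex(grad, x0, step, iters):
    """Entropic mirror descent: multiplicative update followed by renormalization."""
    x = list(x0)
    for _ in range(iters):
        g = grad(x)
        # Gradient step in the dual (log) coordinates, mapped back with exp.
        w = [xi * math.exp(-step * gi) for xi, gi in zip(x, g)]
        total = sum(w)
        # Normalizing is the Bregman (KL) projection back onto the simplex.
        x = [wi / total for wi in w]
    return x


# Hypothetical objective: f(x) = c . x, minimized at the vertex with the smallest cost.
costs = [0.9, 0.2, 0.5]
md_x = mirror_descent_simplex(lambda x: costs, [1.0 / 3.0] * 3, step=0.5, iters=200)
```

With this mirror map the iterates stay strictly positive and sum to one without an explicit Euclidean projection. In this example the mass concentrates on the coordinate with the smallest cost.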