Bus engine replacement model

The bus engine replacement model developed in the seminal paper Rust (1987) is one of the first dynamic stochastic models of discrete choice estimated using real data, and continues to serve as a classical example of problems of this type. [4]

The model is a simple regenerative optimal stopping stochastic dynamic problem faced by the decision maker, Harold Zurcher, superintendent of maintenance at the Madison Metropolitan Bus Company in Madison, Wisconsin. For every bus in operation in each time period, Harold Zurcher has to decide whether to replace the engine and bear the associated replacement cost, or to continue operating the bus at an ever-rising cost of operation, which includes insurance and the cost of lost ridership in the case of a breakdown.

Let $x_t$ denote the odometer reading (mileage) at period $t$, $c(x_t,\theta)$ the cost of operating the bus, which depends on the vector of parameters $\theta$, $RC$ the cost of replacing the engine, and $\beta$ the discount factor. Then the per-period utility is given by

$$U(x_t,\xi_t,d,\theta) = \begin{cases} -c(x_t,\theta) + \xi_{t,\text{keep}}, & \text{if } d = \text{keep}, \\ -RC - c(0,\theta) + \xi_{t,\text{replace}}, & \text{if } d = \text{replace}, \end{cases} \;=\; u(x_t,d,\theta) + \xi_{t,d}$$

where $d$ denotes the decision (keep or replace) and $\xi_{t,\text{keep}}$ and $\xi_{t,\text{replace}}$ represent the component of the utility observed by Harold Zurcher, but not John Rust. It is assumed that $\xi_{t,\text{keep}}$ and $\xi_{t,\text{replace}}$ are independent and identically distributed with the Type I extreme value distribution, and that $\xi_{t,\bullet}$ are independent of $\xi_{t-1,\bullet}$ conditional on $x_t$.
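The per-period utility can be illustrated with a short Python sketch. The linear cost function `operating_cost` (that is, $c(x,\theta)=\theta_1 x$) and all function names are assumptions made for illustration only; the text above does not fix a functional form for the cost.

```python
import math
import random

def operating_cost(x, theta1):
    """Hypothetical linear cost c(x, theta) = theta1 * x (an illustrative assumption)."""
    return theta1 * x

def flow_utility(x, d, theta1, rc):
    """Deterministic part u(x, d, theta) of the per-period utility."""
    if d == "keep":
        return -operating_cost(x, theta1)
    if d == "replace":
        # Replacement costs RC and the bus then runs with a fresh engine (mileage 0).
        return -rc - operating_cost(0, theta1)
    raise ValueError("d must be 'keep' or 'replace'")

def draw_type1_ev(rng):
    """One standard Type I extreme value (Gumbel) draw via inverse-CDF sampling."""
    u = rng.random()
    while u == 0.0:          # avoid log(0)
        u = rng.random()
    return -math.log(-math.log(u))

def total_utility(x, d, theta1, rc, shocks):
    """U = u(x, d, theta) + xi_d, where shocks maps each decision to its xi."""
    return flow_utility(x, d, theta1, rc) + shocks[d]
```

For example, `flow_utility(10, "keep", 0.5, 8.0)` evaluates the cost of running a bus at mileage 10, while the `"replace"` branch does not depend on the current mileage at all.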

Then the optimal decisions satisfy the Bellman equation

$$V(x,\xi,\theta) = \max_{d \in \{\text{keep},\,\text{replace}\}} \left\{ u(x,d,\theta) + \xi_d + \beta \iint V(x',\xi',\theta)\, q(d\xi' \mid x')\, p(dx' \mid x,d,\theta) \right\}$$

where $p(dx' \mid x,d,\theta)$ and $q(d\xi' \mid x')$ are respectively the transition densities for the observed and unobserved state variables. Time indices are dropped in the Bellman equation because the model is formulated in an infinite-horizon setting, so the unknown optimal policy is stationary, i.e. independent of time.

Given the distributional assumption on $q(d\xi' \mid x')$, the probability of a particular choice $d$ is given by

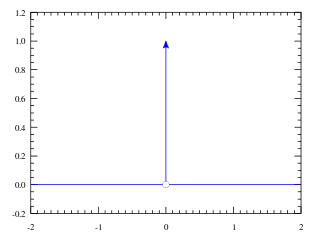

where $EV(x,d,\theta)$ is the unique solution to the functional equation

$$EV(x,d,\theta) = \int \left[ \log \left( \sum_{d' \in \{\text{keep},\,\text{replace}\}} \exp\{ u(x',d',\theta) + \beta\, EV(x',d',\theta) \} \right) \right] p(dx' \mid x,d,\theta).$$

It can be shown that the latter functional equation defines a contraction mapping if the state space of $x_t$ is bounded, so there will be a unique solution $EV(x,d,\theta)$ for any $\theta$, and further the implicit function theorem holds, so $EV(x,d,\theta)$ is also a smooth function of $\theta$ for each $(x,d)$.
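Because the functional equation is a contraction, its fixed point can be approximated by repeatedly applying the right-hand side. The following Python sketch does this on a discretized mileage grid and then forms logit choice probabilities. The grid size `n`, the mileage increment probabilities `incr`, the linear cost $\theta_1 x$, and all function names are illustrative assumptions, not part of the original model description. Since replacement resets mileage to zero, the sketch uses the fact that $EV(x,\text{replace},\theta)$ equals $EV(0,\text{keep},\theta)$ and stores only the "keep" values.

```python
import math

def ev_operator(ev, theta1, rc, beta, incr):
    """Apply the right-hand side of the functional equation once.

    ev[x] stands for EV(x, keep); EV(x, replace) equals ev[0] for every x.
    """
    n = len(ev)
    logsum = []
    for x in range(n):
        v_keep = -theta1 * x + beta * ev[x]   # u(x', keep) + beta * EV(x', keep)
        v_replace = -rc + beta * ev[0]        # u(x', replace) + beta * EV(x', replace)
        m = max(v_keep, v_replace)            # subtract the max for numerical stability
        logsum.append(m + math.log(math.exp(v_keep - m) + math.exp(v_replace - m)))
    # Integrate over next-period mileage x' = min(x + j, n - 1) with probability incr[j].
    return [sum(p * logsum[min(x + j, n - 1)] for j, p in enumerate(incr))
            for x in range(n)]

def solve_ev(theta1, rc, beta=0.95, n=30, incr=(0.3, 0.6, 0.1),
             tol=1e-10, max_iter=100000):
    """Successive approximations: iterate the operator until it stops changing."""
    ev = [0.0] * n
    for _ in range(max_iter):
        new_ev = ev_operator(ev, theta1, rc, beta, incr)
        if max(abs(a - b) for a, b in zip(new_ev, ev)) < tol:
            return new_ev
        ev = new_ev
    raise RuntimeError("fixed point iteration did not converge")

def replace_probabilities(ev, theta1, rc, beta=0.95):
    """Logit probability of replacing the engine at each grid point."""
    probs = []
    for x in range(len(ev)):
        v_keep = -theta1 * x + beta * ev[x]
        v_replace = -rc + beta * ev[0]
        probs.append(1.0 / (1.0 + math.exp(v_keep - v_replace)))
    return probs
```

Plain successive approximation converges at a rate governed by the discount factor, so it becomes slow as $\beta$ approaches one; it is used here only because it is the simplest method that follows directly from the contraction property.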

Estimation with nested fixed point algorithm

The contraction mapping above can be solved numerically for the fixed point $EV(x,d,\theta)$ that yields choice probabilities $P(d \mid x,\theta)$ for any given value of $\theta$. The log-likelihood function can then be formulated as

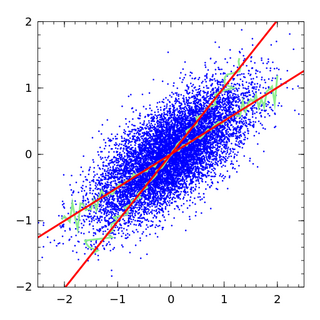

where $x_{i,t}$ and $d_{i,t}$ represent data on state variables (odometer readings) and decisions (keep or replace) for $i=1,\dots,N$ individual buses, each in $t=1,\dots,T$ periods.
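As a rough illustration of how such a log-likelihood is evaluated on panel data, the sketch below sums a choice term $\log P(d_{i,t} \mid x_{i,t},\theta)$ and a mileage transition term over buses and periods. This two-term structure, the discretized mileage states, the increment probabilities `incr`, and the function name are assumptions made for the example; dropping the transition term gives the partial likelihood of the choices alone.

```python
import math

def log_likelihood(buses, p_replace, incr):
    """Sum log choice probabilities and log transition probabilities.

    buses     : list of per-bus histories, each a list of (x, d) pairs,
                with x an integer mileage state and d 'keep' or 'replace'
    p_replace : p_replace[x] is the model's P(replace | x)
    incr      : incr[j] is the probability that mileage rises by j states
    """
    total = 0.0
    for history in buses:
        for t, (x, d) in enumerate(history):
            p = p_replace[x]
            total += math.log(p if d == "replace" else 1.0 - p)
            if t > 0:
                x_prev, d_prev = history[t - 1]
                # After a replacement, mileage restarts from state 0.
                start = 0 if d_prev == "replace" else x_prev
                step = x - start
                if not 0 <= step < len(incr):
                    raise ValueError("observed transition has zero probability")
                total += math.log(incr[step])
    return total
```

In an actual estimation the vector `p_replace` would come from solving the fixed point problem at the current parameter value rather than being supplied by hand.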

The joint algorithm for solving the fixed point problem given a particular value of the parameter $\theta$ and maximizing the log-likelihood $L(\theta)$ with respect to $\theta$ was named the nested fixed point algorithm (NFXP) by John Rust.

Rust's implementation of the nested fixed point algorithm is highly optimized for this problem, using Newton–Kantorovich iterations to calculate $P(d \mid x,\theta)$ and quasi-Newton methods, such as the Berndt–Hall–Hall–Hausman algorithm, for likelihood maximization. [5]
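The nesting itself can be shown in a deliberately simplified Python sketch: an outer search over parameters, with the fixed point re-solved inside every likelihood evaluation. This is not Rust's implementation. It swaps in plain successive approximations for the Newton–Kantorovich iterations and a coarse grid search for the quasi-Newton step, uses only the choice part of the likelihood, and assumes a linear cost $\theta_1 x$, a small mileage grid and fixed increment probabilities; all names are hypothetical.

```python
import math

def solve_ev(theta1, rc, beta, incr, n, tol=1e-9, max_iter=100000):
    """Inner loop: fixed point of the EV equation, with ev[x] = EV(x, keep)."""
    ev = [0.0] * n
    for _ in range(max_iter):
        logsum = []
        for x in range(n):
            v_keep = -theta1 * x + beta * ev[x]
            v_replace = -rc + beta * ev[0]
            m = max(v_keep, v_replace)
            logsum.append(m + math.log(math.exp(v_keep - m) + math.exp(v_replace - m)))
        new_ev = [sum(p * logsum[min(x + j, n - 1)] for j, p in enumerate(incr))
                  for x in range(n)]
        if max(abs(a - b) for a, b in zip(new_ev, ev)) < tol:
            return new_ev
        ev = new_ev
    raise RuntimeError("inner fixed point did not converge")

def choice_loglik(data, theta1, rc, beta=0.95, incr=(0.3, 0.6, 0.1), n=10):
    """Choice log-likelihood at (theta1, rc); re-solves the fixed point each call."""
    ev = solve_ev(theta1, rc, beta, incr, n)
    ll = 0.0
    for x, d in data:
        v_keep = -theta1 * x + beta * ev[x]
        v_replace = -rc + beta * ev[0]
        p_replace = 1.0 / (1.0 + math.exp(v_keep - v_replace))
        ll += math.log(p_replace if d == "replace" else 1.0 - p_replace)
    return ll

def nfxp_grid_search(data, theta1_grid, rc_grid):
    """Outer loop: pick the grid point with the highest log-likelihood."""
    best = None
    for theta1 in theta1_grid:
        for rc in rc_grid:
            ll = choice_loglik(data, theta1, rc)
            if best is None or ll > best[0]:
                best = (ll, theta1, rc)
    return best
```

The cost structure is visible in `choice_loglik`: every trial parameter value triggers a full inner solve, which is why efficient inner and outer solvers matter so much for this approach.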

Estimation with MPEC

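In the nested fixed point algorithm, $P(d \mid x,\theta)$ is recalculated for each guess of the parameters $\theta$. The MPEC method instead solves the constrained optimization problem, in which the values of $EV$ are treated as additional optimization variables: [4]

$$\max \; L(\theta) \quad \text{subject to} \quad EV(x,d,\theta) = \int \left[ \log \left( \sum_{d' \in \{\text{keep},\,\text{replace}\}} \exp\{ u(x',d',\theta) + \beta\, EV(x',d',\theta) \} \right) \right] p(dx' \mid x,d,\theta)$$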

This method is faster to compute than non-optimized implementations of the nested fixed point algorithm, and takes about as long as highly optimized implementations. [5]
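To make the contrast with the nested approach concrete, the sketch below writes the MPEC objective and constraint as two separate Python functions of the joint unknowns $(\theta, EV)$, which is the form a constrained optimizer would consume. No solver is called here, and the linear cost $\theta_1 x$, the discretized mileage grid, the increment probabilities and the function names are all illustrative assumptions.

```python
import math

def choice_values(x, theta1, rc, beta, ev):
    """Choice-specific values, with ev[x] = EV(x, keep) and EV(x, replace) = ev[0]."""
    return -theta1 * x + beta * ev[x], -rc + beta * ev[0]

def objective(params, data, beta=0.95):
    """Choice log-likelihood as a function of (theta1, rc, ev) jointly.

    No fixed point is solved: ev is simply taken as given.
    """
    theta1, rc, ev = params
    ll = 0.0
    for x, d in data:
        v_keep, v_replace = choice_values(x, theta1, rc, beta, ev)
        p_replace = 1.0 / (1.0 + math.exp(v_keep - v_replace))
        ll += math.log(p_replace if d == "replace" else 1.0 - p_replace)
    return ll

def constraint_residual(params, beta=0.95, incr=(0.3, 0.6, 0.1)):
    """EV minus the right-hand side of the functional equation.

    The residual is zero in every component exactly when ev is the fixed point.
    """
    theta1, rc, ev = params
    n = len(ev)
    logsum = []
    for x in range(n):
        v_keep, v_replace = choice_values(x, theta1, rc, beta, ev)
        m = max(v_keep, v_replace)
        logsum.append(m + math.log(math.exp(v_keep - m) + math.exp(v_replace - m)))
    rhs = [sum(p * logsum[min(x + j, n - 1)] for j, p in enumerate(incr))
           for x in range(n)]
    return [e - r for e, r in zip(ev, rhs)]
```

A constrained solver would maximize `objective` while driving `constraint_residual` to zero, so the fixed point only needs to hold at the final solution rather than at every trial value of the parameters.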

Estimation with non-solution methods

The conditional choice probabilities method of Hotz and Miller can be applied in this setting. Hotz, Miller, Sanders, and Smith proposed a computationally simpler version of the method, and tested it on a study of the bus engine replacement problem. The method works by estimating conditional choice probabilities using simulation, then backing out the implied differences in value functions. [8]
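The "backing out" step can be illustrated for the two-choice case. Under the Type I extreme value assumption, the difference in choice-specific values equals the log odds of the choice probabilities, $v(x,\text{replace}) - v(x,\text{keep}) = \log P(\text{replace} \mid x) - \log P(\text{keep} \mid x)$. The Python sketch below applies this inversion to simple frequency estimates of the conditional choice probabilities. It is a minimal illustration with hypothetical names, not the simulation-based procedure of Hotz, Miller, Sanders, and Smith.

```python
import math
from collections import Counter

def ccp_value_differences(data):
    """Estimate v(x, replace) - v(x, keep) from observed choices.

    data : list of (x, d) pairs with d equal to 'keep' or 'replace'.
    States where only one choice is observed are skipped, because the
    log odds are not finite there.
    """
    visits = Counter(x for x, _ in data)
    replacements = Counter(x for x, d in data if d == "replace")
    diffs = {}
    for x, n_x in visits.items():
        p_replace = replacements[x] / n_x      # frequency estimate of P(replace | x)
        if 0.0 < p_replace < 1.0:
            diffs[x] = math.log(p_replace) - math.log(1.0 - p_replace)
    return diffs
```

No fixed point is solved at any stage, which is the sense in which this family of estimators is called a non-solution method.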