In mathematical physics and mathematics, the Pauli matrices are a set of three 2 × 2 complex matrices that are traceless, Hermitian, involutory and unitary. Usually indicated by the Greek letter sigma, they are occasionally denoted by tau when used in connection with isospin symmetries.

In linear algebra, an orthogonal matrix, or orthonormal matrix, is a real square matrix whose columns and rows are orthonormal vectors.

In mathematics, specifically in linear algebra, matrix multiplication is a binary operation that produces a matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices A and B is denoted as AB.

In mechanics and geometry, the 3D rotation group, often denoted SO(3), is the group of all rotations about the origin of three-dimensional Euclidean space under the operation of composition.

Unit quaternions, known as versors, provide a convenient mathematical notation for representing spatial orientations and rotations of elements in three dimensional space. Specifically, they encode information about an axis-angle rotation about an arbitrary axis. Rotation and orientation quaternions have applications in computer graphics, computer vision, robotics, navigation, molecular dynamics, flight dynamics, orbital mechanics of satellites, and crystallographic texture analysis.

In mathematics, the matrix representation of conic sections permits the tools of linear algebra to be used in the study of conic sections. It provides easy ways to calculate a conic section's axis, vertices, tangents and the pole and polar relationship between points and lines of the plane determined by the conic. The technique does not require putting the equation of a conic section into a standard form, thus making it easier to investigate those conic sections whose axes are not parallel to the coordinate system.

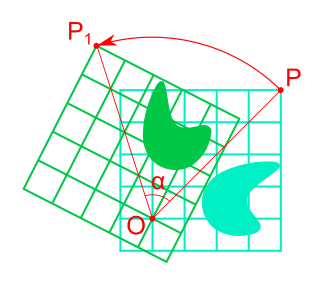

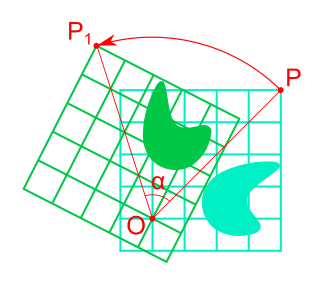

Rotation in mathematics is a concept originating in geometry. Any rotation is a motion of a certain space that preserves at least one point. It can describe, for example, the motion of a rigid body around a fixed point. Rotation can have a sign (as in the sign of an angle): a clockwise rotation is a negative magnitude so a counterclockwise turn has a positive magnitude. A rotation is different from other types of motions: translations, which have no fixed points, and (hyperplane) reflections, each of them having an entire (n − 1)-dimensional flat of fixed points in a n-dimensional space.

In linear algebra, a rotation matrix is a transformation matrix that is used to perform a rotation in Euclidean space. For example, using the convention below, the matrix

In geometry, Euler's rotation theorem states that, in three-dimensional space, any displacement of a rigid body such that a point on the rigid body remains fixed, is equivalent to a single rotation about some axis that runs through the fixed point. It also means that the composition of two rotations is also a rotation. Therefore the set of rotations has a group structure, known as a rotation group.

In mathematics, the Cayley transform, named after Arthur Cayley, is any of a cluster of related things. As originally described by Cayley (1846), the Cayley transform is a mapping between skew-symmetric matrices and special orthogonal matrices. The transform is a homography used in real analysis, complex analysis, and quaternionic analysis. In the theory of Hilbert spaces, the Cayley transform is a mapping between linear operators.

In mathematics, the polar decomposition of a square real or complex matrix is a factorization of the form , where is a unitary matrix and is a positive semi-definite Hermitian matrix, both square and of the same size.

In geometry and linear algebra, a Cartesian tensor uses an orthonormal basis to represent a tensor in a Euclidean space in the form of components. Converting a tensor's components from one such basis to another is done through an orthogonal transformation.

In mathematics, the group of rotations about a fixed point in four-dimensional Euclidean space is denoted SO(4). The name comes from the fact that it is the special orthogonal group of order 4.

In statistics, Procrustes analysis is a form of statistical shape analysis used to analyse the distribution of a set of shapes. The name Procrustes refers to a bandit from Greek mythology who made his victims fit his bed either by stretching their limbs or cutting them off.

In bioinformatics, the root mean square deviation of atomic positions, or simply root mean square deviation (RMSD), is the measure of the average distance between the atoms (usually the backbone atoms) of superimposed molecules. In the study of globular protein conformations, one customarily measures the similarity in three-dimensional structure by the RMSD of the Cα atomic coordinates after optimal rigid body superposition.

In computer vision, the essential matrix is a matrix, that relates corresponding points in stereo images assuming that the cameras satisfy the pinhole camera model.

In mathematics, the dual quaternions are an 8-dimensional real algebra isomorphic to the tensor product of the quaternions and the dual numbers. Thus, they may be constructed in the same way as the quaternions, except using dual numbers instead of real numbers as coefficients. A dual quaternion can be represented in the form A + εB, where A and B are ordinary quaternions and ε is the dual unit, which satisfies ε2 = 0 and commutes with every element of the algebra. Unlike quaternions, the dual quaternions do not form a division algebra.

The orthogonal Procrustes problem is a matrix approximation problem in linear algebra. In its classical form, one is given two matrices and and asked to find an orthogonal matrix which most closely maps to . Specifically, the orthogonal Procrustes problem is an optimization problem given by

In applied mathematics, Wahba's problem, first posed by Grace Wahba in 1965, seeks to find a rotation matrix between two coordinate systems from a set of (weighted) vector observations. Solutions to Wahba's problem are often used in satellite attitude determination utilising sensors such as magnetometers and multi-antenna GPS receivers. The cost function that Wahba's problem seeks to minimise is as follows:

In mathematics and mechanics, the Euler–Rodrigues formula describes the rotation of a vector in three dimensions. It is based on Rodrigues' rotation formula, but uses a different parametrization.