A randomized controlled trial is a form of scientific experiment used to control factors not under direct experimental control. Examples of RCTs are clinical trials that compare the effects of drugs, surgical techniques, medical devices, diagnostic procedures, diets or other medical treatments.

Cochrane is a British international charitable organisation formed to synthesize medical research findings to facilitate evidence-based choices about health interventions involving health professionals, patients and policy makers. It includes 53 review groups that are based at research institutions worldwide. Cochrane has approximately 30,000 volunteer experts from around the world.

Low back pain or lumbago is a common disorder involving the muscles, nerves, and bones of the back, in between the lower edge of the ribs and the lower fold of the buttocks. Pain can vary from a dull constant ache to a sudden sharp feeling. Low back pain may be classified by duration as acute, sub-chronic, or chronic. The condition may be further classified by the underlying cause as either mechanical, non-mechanical, or referred pain. The symptoms of low back pain usually improve within a few weeks from the time they start, with 40–90% of people recovered by six weeks.

A medical guideline is a document with the aim of guiding decisions and criteria regarding diagnosis, management, and treatment in specific areas of healthcare. Such documents have been in use for thousands of years during the entire history of medicine. However, in contrast to previous approaches, which were often based on tradition or authority, modern medical guidelines are based on an examination of current evidence within the paradigm of evidence-based medicine. They usually include summarized consensus statements on best practice in healthcare. A healthcare provider is obliged to know the medical guidelines of their profession, and has to decide whether to follow the recommendations of a guideline for an individual treatment.

A systematic review is a scholarly synthesis of the evidence on a clearly presented topic using critical methods to identify, define and assess research on the topic. A systematic review extracts and interprets data from published studies on the topic, then analyzes, describes, critically appraises and summarizes interpretations into a refined evidence-based conclusion. For example, a systematic review of randomized controlled trials is a way of summarizing and implementing evidence-based medicine.

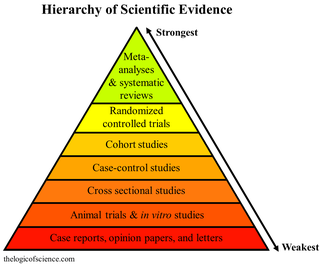

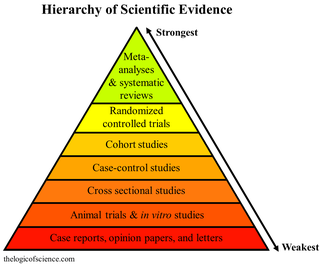

A hierarchy of evidence, comprising levels of evidence (LOEs), also called evidence levels (ELs), is a heuristic used to rank the relative strength of results obtained from experimental research, especially medical research. There is broad agreement on the relative strength of large-scale, epidemiological studies. More than 80 different hierarchies have been proposed for assessing medical evidence. The design of the study and the endpoints measured affect the strength of the evidence. In clinical research, the best evidence for treatment efficacy is mainly from meta-analyses of randomized controlled trials (RCTs). With regard to the study of side effects, systematic reviews of completed, high-quality randomized controlled trials, such as those published by the Cochrane Collaboration, rank the same as systematic reviews of completed, high-quality observational studies. Evidence hierarchies are often applied in evidence-based practices and are integral to evidence-based medicine (EBM).

In medicine, a case report is a detailed report of the symptoms, signs, diagnosis, treatment, and follow-up of an individual patient. Case reports may contain a demographic profile of the patient, but usually describe an unusual or novel occurrence. Some case reports also contain a literature review of other reported cases. Case reports are professional narratives that provide feedback on clinical practice guidelines and offer a framework for early signals of effectiveness, adverse events, and cost. They can be shared for medical, scientific, or educational purposes.

Sir Iain Geoffrey Chalmers is a British health services researcher, one of the founders of the Cochrane Collaboration, and coordinator of the James Lind Initiative, which includes the James Lind Library and James Lind Alliance.

David Lawrence Sackett was an American-Canadian physician and a pioneer in evidence-based medicine. He is known as one of the fathers of Evidence-Based Medicine. He founded the first department of clinical epidemiology in Canada at McMaster University, and the Oxford Centre for Evidence-Based Medicine. He is well known for his textbooks Clinical Epidemiology and Evidence-Based Medicine.

Critical appraisal in evidence-based medicine is the use of explicit, transparent methods to assess the data in published research, applying the rules of evidence to factors such as internal validity, adherence to reporting standards, conclusions, generalizability and risk of bias. Critical appraisal methods form a central part of the systematic review process. They are used in evidence synthesis to assist clinical decision-making, and are increasingly used in evidence-based social care and education provision.

Evidence-based dentistry (EBD) is the dental part of the more general movement toward evidence-based medicine and other evidence-based practices. Pervasive access to information on the internet covers many aspects of dentistry, for both dentists and patients. This has created a need to ensure that the evidence referenced is valid, reliable and of good quality.

Alessandro Liberati was an Italian healthcare researcher and clinical epidemiologist, and founder of the Italian Cochrane Centre.

Health care quality is a level of value provided by any health care resource, as determined by some measurement. As with quality in other fields, it is an assessment of whether something is good enough and whether it is suitable for its purpose. The goal of health care is to provide medical resources of high quality to all who need them; that is, to ensure good quality of life, cure illnesses when possible, to extend life expectancy, and so on. Researchers use a variety of quality measures to attempt to determine health care quality, including counts of a therapy's reduction or lessening of diseases identified by medical diagnosis, a decrease in the number of risk factors which people have following preventive care, or a survey of health indicators in a population who are accessing certain kinds of care.

The discipline of evidence-based toxicology (EBT) strives to transparently, consistently, and objectively assess available scientific evidence in order to answer questions in toxicology, the study of the adverse effects of chemical, physical, or biological agents on living organisms and the environment, including the prevention and amelioration of such effects. EBT has the potential to address concerns in the toxicological community about the limitations of current approaches to assessing the state of the science. These include concerns related to transparency in decision making, synthesis of different types of evidence, and the assessment of bias and credibility. Evidence-based toxicology has its roots in the larger movement towards evidence-based practices.

The Centre for Evidence-Based Medicine (CEBM), based in the Nuffield Department of Primary Care Health Sciences at the University of Oxford, is an academic-led centre dedicated to the practice, teaching, and dissemination of high quality evidence-based medicine to improve healthcare in everyday clinical practice. CEBM was founded by David Sackett in 1995. It was subsequently directed by Brian Haynes and Paul Glasziou. Since 2010 it has been led by Professor Carl Heneghan, a clinical epidemiologist and general practitioner.

Kay Dickersin is an academic who trained first in cell biology and subsequently in epidemiology. She went on to a career studying factors that influence research integrity, in particular publication bias and outcome reporting bias. She is Professor Emerita in the Department of Epidemiology at the Johns Hopkins Bloomberg School of Public Health, where she was Director of the Center for Clinical Trials and Evidence Synthesis. She was also Director of the US Cochrane Center and the US Satellite of the Cochrane Eyes and Vision Group within the Cochrane Collaboration. Dickersin has received multiple awards for her research.

Allegiance bias in the behavioral sciences is a bias resulting from an investigator's or researcher's allegiance to a specific school of thought. Researchers and investigators are exposed to many branches of psychology and schools of thought, and naturally adopt the one that fits their own paradigm of thinking. Allegiance bias arises when this leads therapists, researchers and others to believe that their school of thought or treatment is superior to others. This belief can bias their research in trials of treatment effectiveness or in investigative situations, because they may have committed themselves to treatments they have seen work in their past experience. It can lead to errors in interpreting the results of their research. Their "pledge" to stay within their own paradigm of thinking may affect their ability to find more effective treatments for the patient or situation they are investigating.

The treatment and management of COVID-19 combines supportive care, which includes treatment to relieve symptoms, fluid therapy and oxygen support as needed, with a growing list of approved medications. Highly effective vaccines have reduced mortality related to SARS-CoV-2; however, for those awaiting vaccination, as well as for the estimated millions of immunocompromised persons who are unlikely to respond robustly to vaccination, treatment remains important. Some people may experience persistent symptoms or disability after recovery from the infection, known as long COVID, but there is still limited information on the best management and rehabilitation for this condition.

Robert Brian Haynes OC is a Canadian physician, clinical epidemiologist, researcher and an academic. He is professor emeritus at McMaster University and one of the founders of evidence-based medicine.

Benjamin Djulbegovic is an American physician-scientist whose academic and research focus revolves around optimizing clinical research and the practice of medicine by comprehending the nature of medical evidence and decision-making. In his work, he has integrated concepts from evidence-based medicine (EBM), predictive analytics, health outcomes research, and the decision sciences.