Ubiquitous computing is a concept in software engineering, hardware engineering and computer science where computing is made to appear anytime and everywhere. In contrast to desktop computing, ubiquitous computing can occur using any device, in any location, and in any format. A user interacts with the computer, which can exist in many different forms, including laptop computers, tablets, smartphones and terminals in everyday objects such as a refrigerator or a pair of glasses. The underlying technologies that support ubiquitous computing include the Internet, advanced middleware, operating systems, mobile code, sensors, microprocessors, new I/O and user interfaces, computer networks, mobile protocols, location and positioning, and new materials.

In human–computer interaction, WIMP stands for "windows, icons, menus, pointer", denoting a style of interaction using these elements of the user interface. Other expansions are sometimes used, such as substituting "mouse" and "mice" for menus, or "pull-down menu" and "pointing" for pointer.

A handheld projector is an image projector in a handheld device. It was developed as a computer display device for compact portable devices such as mobile phones, personal digital assistants, and digital cameras, which have sufficient storage capacity to handle presentation materials but are too small to accommodate a display screen that an audience can see easily. Handheld projectors involve miniaturized hardware, and software that can project digital images onto a nearby viewing surface.

Gesture recognition is an area of research and development in computer science and language technology concerned with the recognition and interpretation of human gestures. A subdiscipline of computer vision, it employs mathematical algorithms to interpret gestures. Gestures can originate from any bodily motion or state, but commonly originate from the face or hand. One area of the field is emotion recognition derived from facial expressions and hand gestures. Users can make simple gestures to control or interact with devices without physically touching them. Many approaches use cameras and computer vision algorithms to interpret sign language; however, the identification and recognition of posture, gait, proxemics, and human behaviors is also the subject of gesture recognition techniques. Gesture recognition is a path for computers to begin to better understand and interpret human body language, which was previously not possible through text or unenhanced graphical user interfaces (GUIs).

A tangible user interface (TUI) is a user interface in which a person interacts with digital information through the physical environment. The initial name was Graspable User Interface, which is no longer used. The purpose of TUI development is to empower collaboration, learning, and design by giving physical forms to digital information, thus taking advantage of the human ability to grasp and manipulate physical objects and materials.

Ben Shneiderman is an American computer scientist, a Distinguished University Professor in the University of Maryland Department of Computer Science, which is part of the University of Maryland College of Computer, Mathematical, and Natural Sciences at the University of Maryland, College Park, and the founding director (1983-2000) of the University of Maryland Human-Computer Interaction Lab. He conducted fundamental research in the field of human–computer interaction, developing new ideas, methods, and tools such as the direct manipulation interface, and his eight rules of design.

In the field of human–computer interaction, a Wizard of Oz experiment is a research experiment in which subjects interact with a computer system that subjects believe to be autonomous, but which is actually being operated or partially operated by an unseen human being.

Attentive user interfaces (AUI) are user interfaces that manage the user's attention. For instance, an AUI can manage notifications, deciding when to interrupt the user, the kind of warnings, and the level of detail of the messages presented to the user. By generating only the relevant information, attentive user interfaces can display information in a way that increases the effectiveness of the interaction.
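The notification decision described above can be illustrated with a minimal sketch. It is not taken from any particular AUI system: the `Notification` class, the three-level priority scale, the `user_busy` flag, and the returned action names are all hypothetical, chosen only to show how an interface might weigh urgency against the user's current attention.

```python
from dataclasses import dataclass


@dataclass
class Notification:
    message: str
    priority: int  # hypothetical scale: 0 = low, 1 = normal, 2 = urgent


def decide(notification: Notification, user_busy: bool) -> str:
    """Pick how to present a notification (illustrative policy only).

    Returns one of three hypothetical actions:
    "interrupt" (show now, full detail), "summary" (show now, brief),
    or "defer" (hold until the user is no longer busy).
    """
    if notification.priority >= 2:
        return "interrupt"  # urgent messages always get through
    if user_busy:
        return "defer"      # do not break the user's focus
    # The user is available: low-priority items are shown only briefly.
    return "summary" if notification.priority == 0 else "interrupt"


print(decide(Notification("Build finished", 1), user_busy=True))
```

A real attentive interface would estimate `user_busy` from sensed signals such as gaze or activity rather than receive it as a flag.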

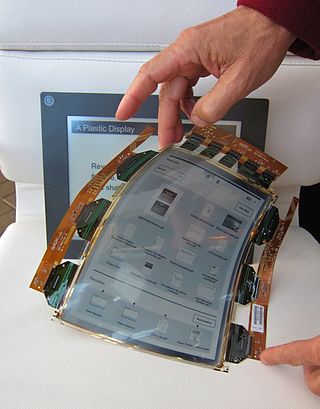

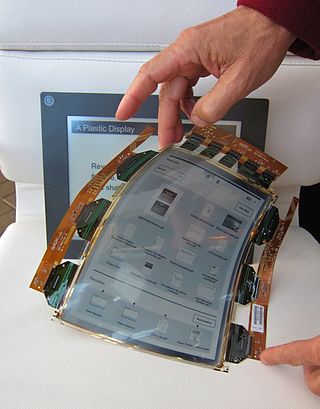

A flexible display or rollable display is an electronic visual display which is flexible in nature, as opposed to the traditional flat screen displays used in most electronic devices. In recent years there has been a growing interest from numerous consumer electronics manufacturers to apply this display technology in e-readers, mobile phones and other consumer electronics. Such screens can be rolled up like a scroll without the image or text being distorted. Technologies involved in building a rollable display include electronic ink, Gyricon, Organic LCD, and OLED.

In computing, multi-touch is technology that enables a surface to recognize the presence of more than one point of contact with the surface at the same time. Multi-touch originated at CERN, MIT, University of Toronto, Carnegie Mellon University and Bell Labs in the 1970s. CERN started using multi-touch screens as early as 1976 for the controls of the Super Proton Synchrotron. A form of gesture recognition, capacitive multi-touch displays were popularized by Apple's iPhone in 2007. Plural-point awareness may be used to implement additional functionality, such as pinch to zoom or to activate certain subroutines attached to predefined gestures.
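As an illustration of pinch to zoom, one common approach is to derive a zoom factor from the ratio of the current distance between two contact points to their distance when the gesture began. The sketch below assumes touches are given as `(x, y)` tuples; the function names are hypothetical and not tied to any particular touch API.

```python
import math


def distance(p, q):
    """Euclidean distance between two (x, y) touch points."""
    return math.hypot(q[0] - p[0], q[1] - p[1])


def pinch_scale(start_touches, current_touches):
    """Return the zoom factor implied by a two-finger pinch.

    Each argument is a pair of (x, y) points. A result above 1.0 means
    the fingers moved apart (zoom in); below 1.0 means they moved
    together (zoom out).
    """
    start = distance(*start_touches)
    if start == 0:
        raise ValueError("initial touch points must not coincide")
    return distance(*current_touches) / start


# Fingers start 100 units apart and spread to 200 units apart.
scale = pinch_scale(((100, 100), (200, 100)), ((50, 100), (250, 100)))
print(scale)  # 2.0
```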

In computing, post-WIMP comprises work on user interfaces, mostly graphical user interfaces, which attempt to go beyond the paradigm of windows, icons, menus and a pointing device, i.e. WIMP interfaces.

A projection augmented model (PA model) is an element sometimes employed in virtual reality systems. It consists of a physical three-dimensional model onto which a computer image is projected to create a realistic-looking object. Importantly, the physical model is the same geometric shape as the object that the PA model depicts.

In human–computer interaction, an organic user interface (OUI) is defined as a user interface with a non-flat display. After Engelbart and Sutherland's graphical user interface (GUI), which was based on the cathode ray tube (CRT), and Kay and Weiser's ubiquitous computing, which is based on the flat panel liquid-crystal display (LCD), OUI represents one possible third wave of display interaction paradigms, pertaining to multi-shaped and flexible displays. In an OUI, the display surface is always the focus of interaction, and may actively or passively change shape upon analog inputs. These inputs are provided through direct physical gestures, rather than through indirect point-and-click control. Note that the term "Organic" in OUI was derived from organic architecture, referring to the adoption of natural form to design a better fit with human ecology. The term also alludes to the use of organic electronics for this purpose.

In computing, 3D interaction is a form of human-machine interaction where users are able to move and perform interaction in 3D space. Both human and machine process information where the physical position of elements in the 3D space is relevant.

In computing, a natural user interface (NUI) or natural interface is a user interface that is effectively invisible, and remains invisible as the user continuously learns increasingly complex interactions. The word "natural" is used because most computer interfaces use artificial control devices whose operation has to be learned. Examples include voice assistants such as Alexa and Siri, touch and multitouch interactions on today's mobile phones and tablets, and touch interfaces invisibly integrated into textiles and furniture.

The DiamondTouch table is a multi-touch, interactive PC interface product from Circle Twelve Inc. It is a human interface device that has the capability of allowing multiple people to interact simultaneously while identifying which person is touching where. The technology was originally developed at Mitsubishi Electric Research Laboratories (MERL) in 2001 and later licensed to Circle Twelve Inc in 2008. The DiamondTouch table is used to facilitate face-to-face collaboration, brainstorming, and decision-making, and users include construction management company Parsons Brinckerhoff, the Methodist Hospital, and the US National Geospatial-Intelligence Agency (NGA).

Roeland "Roel" Vertegaal is a Dutch-Canadian interaction designer, scientist, musician and entrepreneur working in the area of Human-Computer Interaction. He is best known for his pioneering work on flexible and paper computers, with systems such as PaperWindows (2004), PaperPhone (2010) and PaperTab (2012).

Jacob O. Wobbrock is a Professor in the University of Washington Information School and, by courtesy, in the Paul G. Allen School of Computer Science & Engineering at the University of Washington. He is Director of the ACE Lab, Associate Director and founding Co-Director Emeritus of the CREATE research center, and a founding member of the DUB Group and the MHCI+D degree program.

Andrew Cockburn is currently working as a Professor in the Department of Computer Science and Software Engineering at the University of Canterbury in Christchurch, New Zealand. He is in charge of the Human Computer Interactions Lab where he conducts research focused on designing and testing user interfaces that integrate with inherent human factors.

Shumin Zhai is a Chinese-born American Canadian human–computer interaction (HCI) research scientist and inventor. He is known for his research on input devices and interaction methods, swipe-gesture-based touchscreen keyboards, eye-tracking interfaces, and models of human performance in human–computer interaction. His studies have contributed both to foundational models and understandings of HCI and to practical user interface designs and flagship products. He previously worked at IBM, where he invented the ShapeWriter text entry method for smartphones, which is a predecessor to the modern Swype keyboard. Dr. Zhai's publications have won the ACM UIST Lasting Impact Award and the IEEE Computer Society Best Paper Award, among others. Dr. Zhai is currently a Principal Scientist at Google, where he leads and directs research, design, and development of human-device input methods and haptics systems.