Entropy is a scientific concept, as well as a measurable physical property, that is most commonly associated with a state of disorder, randomness, or uncertainty. The term and the concept are used in diverse fields, from classical thermodynamics, where it was first recognized, to the microscopic description of nature in statistical physics, and to the principles of information theory. It has found far-ranging applications in chemistry and physics, in biological systems and their relation to life, in cosmology, economics, sociology, weather science, climate change, and information systems including the transmission of information in telecommunication.

The second law of thermodynamics is a physical law based on universal experience concerning heat and energy interconversions. One simple statement of the law is that heat always moves from hotter objects to colder objects, unless energy in some form is supplied to reverse the direction of heat flow. Another definition is: "Not all heat energy can be converted into work in a cyclic process."
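The second statement can be made quantitative with the Carnot bound, a standard textbook consequence of the second law that is not stated in the text above. The sketch below assumes an idealized reversible engine operating between two reservoirs; the function name and the example temperatures are illustrative only.

```python
def carnot_efficiency(t_hot_kelvin, t_cold_kelvin):
    """Upper bound on the fraction of absorbed heat that a cyclic engine
    can convert into work, for reservoirs at the given absolute temperatures."""
    if t_cold_kelvin <= 0 or t_hot_kelvin <= t_cold_kelvin:
        raise ValueError("require 0 < t_cold_kelvin < t_hot_kelvin")
    return 1.0 - t_cold_kelvin / t_hot_kelvin


# Hypothetical reservoirs: a 500 K heat source and a 300 K heat sink.
print(carnot_efficiency(500.0, 300.0))
```

The bound is strictly below 1 for any cold reservoir above absolute zero, which is one way of expressing that not all heat can be converted into work in a cycle.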

In statistical mechanics, Maxwell–Boltzmann statistics describes the distribution of classical material particles over various energy states in thermal equilibrium. It is applicable when the temperature is high enough or the particle density is low enough to render quantum effects negligible.
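As an illustration, the following sketch computes Maxwell–Boltzmann occupation fractions for a set of discrete, non-degenerate energy levels, using the standard weight exp(-E/kT). The function name and the example levels are assumptions made for this sketch and are not taken from the text above.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def boltzmann_populations(energies_joule, temperature_kelvin):
    """Return the equilibrium fraction of particles in each level,
    proportional to exp(-E_i / (k_B * T)), for non-degenerate levels."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive")
    beta = 1.0 / (K_B * temperature_kelvin)
    # Shift by the lowest energy so the exponentials cannot overflow.
    e_min = min(energies_joule)
    weights = [math.exp(-beta * (e - e_min)) for e in energies_joule]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical two-level example: the upper level lies k_B * T above the lower.
populations = boltzmann_populations([0.0, K_B * 300.0], 300.0)
print(populations)
```

In this example the ratio of the upper to the lower population is exp(-1), since the level spacing equals kT.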

The third law of thermodynamics states that the entropy of a closed system at thermodynamic equilibrium approaches a constant value when its temperature approaches absolute zero. This constant value cannot depend on any other parameters characterizing the system, such as pressure or applied magnetic field. At absolute zero the system must be in a state with the minimum possible energy.

In physics, a partition function describes the statistical properties of a system in thermodynamic equilibrium. Partition functions are functions of the thermodynamic state variables, such as the temperature and volume. Most of the aggregate thermodynamic variables of the system, such as the total energy, free energy, entropy, and pressure, can be expressed in terms of the partition function or its derivatives. The partition function is dimensionless.
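A minimal sketch of how aggregate quantities follow from the partition function, assuming a system with a finite list of discrete energy levels and units in which the Boltzmann constant equals 1. The function name and the returned dictionary keys are illustrative choices, not a standard API.

```python
import math


def canonical_thermodynamics(energies, temperature):
    """Compute ln Z, internal energy U, Helmholtz free energy F and entropy S
    for discrete energy levels in the canonical ensemble (k_B = 1)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    beta = 1.0 / temperature
    # Factor out the lowest level to keep the exponentials well behaved.
    e_min = min(energies)
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z_shifted = sum(weights)
    log_z = -beta * e_min + math.log(z_shifted)
    probabilities = [w / z_shifted for w in weights]
    internal_energy = sum(p * e for p, e in zip(probabilities, energies))
    free_energy = -temperature * log_z                          # F = -T ln Z
    entropy = (internal_energy - free_energy) / temperature     # S = (U - F) / T
    return {"log_Z": log_z, "U": internal_energy, "F": free_energy, "S": entropy}


# Hypothetical two-level system with level spacing 1, evaluated at T = 1.
print(canonical_thermodynamics([0.0, 1.0], 1.0))
```

For a two-level system the entropy computed this way tends to ln 2 at high temperature, where both levels become equally likely.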

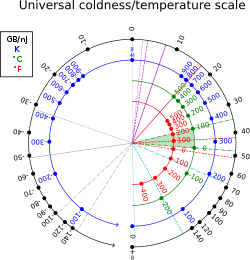

Certain systems can achieve negative thermodynamic temperature; that is, their temperature can be expressed as a negative quantity on the Kelvin or Rankine scales. This should be distinguished from temperatures expressed as negative numbers on non-thermodynamic Celsius or Fahrenheit scales, which are nevertheless higher than absolute zero.
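One standard textbook illustration, not taken from the text above, is a collection of N independent two-level units whose total energy is bounded from above. With entropy S = ln Ω and the definition 1/T = dS/dE (units with the Boltzmann constant equal to 1), the temperature becomes negative once more than half of the units are excited. The sketch below estimates 1/T with a finite difference; all names are hypothetical.

```python
import math


def log_multiplicity(n_total, n_excited):
    """ln of the number of ways to choose which units are excited."""
    return (math.lgamma(n_total + 1)
            - math.lgamma(n_excited + 1)
            - math.lgamma(n_total - n_excited + 1))


def inverse_temperature(n_total, n_excited, level_spacing=1.0):
    """Central-difference estimate of 1/T = dS/dE with S = ln(multiplicity).
    The energy is taken to be n_excited * level_spacing."""
    if not 0 < n_excited < n_total:
        raise ValueError("need 0 < n_excited < n_total")
    delta_s = (log_multiplicity(n_total, n_excited + 1)
               - log_multiplicity(n_total, n_excited - 1))
    return delta_s / (2.0 * level_spacing)


for excited in (250, 500, 750):
    print(excited, inverse_temperature(1000, excited))
```

Below half filling the estimate is positive, at half filling it is zero to within rounding, and above half filling (a population inversion) it is negative.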

In thermodynamics, the Onsager reciprocal relations express the equality of certain ratios between flows and forces in thermodynamic systems out of equilibrium, but where a notion of local equilibrium exists.

The fluctuation–dissipation theorem (FDT) or fluctuation–dissipation relation (FDR) is a powerful tool in statistical physics for predicting the behavior of systems that obey detailed balance. For such a system, the theorem states that thermodynamic fluctuations in a physical variable predict the response of that same variable, as quantified by its admittance or impedance, and vice versa. The fluctuation–dissipation theorem applies to both classical and quantum mechanical systems.

The laws of thermodynamics are a set of scientific laws that define a group of physical quantities, such as temperature, energy, and entropy, that characterize thermodynamic systems in thermodynamic equilibrium. The laws also use various parameters for thermodynamic processes, such as thermodynamic work and heat, and establish relationships between them. They state empirical facts that form a basis for precluding the possibility of certain phenomena, such as perpetual motion. In addition to their use in thermodynamics, they are important fundamental laws of physics in general, and are applicable in other natural sciences.

An ideal Bose gas is a quantum-mechanical phase of matter, analogous to a classical ideal gas. It is composed of bosons, which have an integer value of spin and obey Bose–Einstein statistics. The statistical mechanics of bosons was developed by Satyendra Nath Bose for a photon gas and extended to massive particles by Albert Einstein, who realized that an ideal gas of bosons, unlike a classical ideal gas, would form a condensate at a low enough temperature. This condensate is known as a Bose–Einstein condensate.
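How low "low enough" is can be estimated from the standard textbook formula for a uniform, non-interacting Bose gas in three dimensions, T_c = (2πħ²/(m k_B)) (n/ζ(3/2))^(2/3). The formula is not given in the text above, and the function name and example values below are assumptions made for this sketch.

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s
K_B = 1.380649e-23      # Boltzmann constant, J/K
ZETA_3_2 = 2.612375     # Riemann zeta function at 3/2, rounded


def bec_critical_temperature(number_density, particle_mass):
    """Condensation temperature in kelvin of a uniform ideal Bose gas,
    given number density in m^-3 and particle mass in kg."""
    if number_density <= 0 or particle_mass <= 0:
        raise ValueError("density and mass must be positive")
    prefactor = 2.0 * math.pi * HBAR ** 2 / (particle_mass * K_B)
    return prefactor * (number_density / ZETA_3_2) ** (2.0 / 3.0)


# Hypothetical example: atoms of mass 1.443e-25 kg (roughly rubidium-87)
# at an assumed density of 1e20 atoms per cubic metre.
print(bec_critical_temperature(1.0e20, 1.443e-25))
```

The result scales as the density to the power 2/3 and inversely with the particle mass, so denser gases of lighter bosons condense at higher temperatures.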

In statistical mechanics, the grand canonical ensemble is the statistical ensemble that is used to represent the possible states of a mechanical system of particles that are in thermodynamic equilibrium with a reservoir. The system is said to be open in the sense that the system can exchange energy and particles with a reservoir, so that various possible states of the system can differ in both their total energy and total number of particles. The system's volume, shape, and other external coordinates are kept the same in all possible states of the system.

In statistical mechanics, the microcanonical ensemble is a statistical ensemble that represents the possible states of a mechanical system whose total energy is exactly specified. The system is assumed to be isolated in the sense that it cannot exchange energy or particles with its environment, so that the energy of the system does not change with time.

The principle of detailed balance can be used in kinetic systems which are decomposed into elementary processes. It states that at equilibrium, each elementary process is in equilibrium with its reverse process.
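For a discrete Markov chain, the principle can be checked numerically: with stationary probabilities π and transition probabilities P, detailed balance requires π_i P_ij = π_j P_ji for every pair of states. The sketch below is a minimal check under that formulation; the function name and both example chains are hypothetical.

```python
def satisfies_detailed_balance(transition, stationary, tol=1e-12):
    """Return True if pi[i] * P[i][j] equals pi[j] * P[j][i]
    (within tol) for every pair of distinct states i, j."""
    n = len(stationary)
    for i in range(n):
        for j in range(i + 1, n):
            forward = stationary[i] * transition[i][j]
            backward = stationary[j] * transition[j][i]
            if abs(forward - backward) > tol:
                return False
    return True


# Two-state chain: every elementary step is balanced by its reverse.
two_state = [[0.9, 0.1], [0.2, 0.8]]
two_state_pi = [2.0 / 3.0, 1.0 / 3.0]

# Three-state cycle 0 -> 1 -> 2 -> 0: the uniform distribution is stationary,
# but probability circulates, so no step is balanced by its reverse.
cycle = [[0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 0.0, 0.0]]
cycle_pi = [1.0 / 3.0, 1.0 / 3.0, 1.0 / 3.0]

print(satisfies_detailed_balance(two_state, two_state_pi))  # True
print(satisfies_detailed_balance(cycle, cycle_pi))          # False
```

The second chain shows that a stationary distribution alone is not enough: detailed balance is a stronger, pairwise condition.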

In statistical mechanics, a microstate is a specific microscopic configuration of a thermodynamic system that the system may occupy with a certain probability in the course of its thermal fluctuations. In contrast, the macrostate of a system refers to its macroscopic properties, such as its temperature, pressure, volume and density. Treatments of statistical mechanics define a macrostate as follows: a particular set of values of energy, the number of particles, and the volume of an isolated thermodynamic system is said to specify a particular macrostate of it. In this description, microstates appear as different possible ways the system can achieve a particular macrostate.
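A toy model makes the distinction concrete. In the sketch below, a microstate is one particular up/down assignment of a small set of two-state spins, and the macrostate is taken to be the number of spins pointing up, which stands in for the energy in this simplified picture. The model and the function name are assumptions chosen for illustration.

```python
import math
from itertools import product


def microstates_per_macrostate(n_spins):
    """Enumerate every spin configuration (microstate) and count how many
    share each value of the number of up spins (macrostate)."""
    counts = {}
    for configuration in product((0, 1), repeat=n_spins):
        n_up = sum(configuration)
        counts[n_up] = counts.get(n_up, 0) + 1
    return counts


counts = microstates_per_macrostate(4)
print(counts)  # {0: 1, 1: 4, 2: 6, 3: 4, 4: 1}

# The brute-force counts agree with the binomial coefficients.
print(all(counts[k] == math.comb(4, k) for k in counts))  # True
```

The macrostate with half the spins up is realized by the largest number of microstates, while the fully aligned macrostates are each realized by exactly one.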

The concept of entropy developed in response to the observation that a certain amount of functional energy released from combustion reactions is always lost to dissipation or friction and is thus not transformed into useful work. Early heat-powered engines such as Thomas Savery's (1698), the Newcomen engine (1712) and the Cugnot steam tricycle (1769) were inefficient, converting less than two percent of the input energy into useful work output; a great deal of useful energy was dissipated or lost. Over the next two centuries, physicists investigated this puzzle of lost energy; the result was the concept of entropy.

The concept of entropy was first developed by German physicist Rudolf Clausius in the mid-nineteenth century as a thermodynamic property that predicts that certain spontaneous processes are irreversible or impossible. In statistical mechanics, entropy is formulated as a statistical property using probability theory. The statistical entropy perspective was introduced in 1870 by Austrian physicist Ludwig Boltzmann, who established a new field of physics that provided the descriptive linkage between the macroscopic observation of nature and the microscopic view based on the rigorous treatment of large ensembles of microstates that constitute thermodynamic systems.

In thermodynamics, the fundamental thermodynamic relations are four fundamental equations that show how four important thermodynamic quantities depend on variables that can be controlled and measured experimentally. They are thus essentially equations of state, and with them experimental data can be used to determine sought-after quantities such as G (Gibbs free energy) or H (enthalpy). The relation is generally expressed as an infinitesimal change in internal energy in terms of infinitesimal changes in entropy and volume for a closed system in thermal equilibrium: dU = T dS − P dV, where U is the internal energy, T the temperature, S the entropy, P the pressure, and V the volume.
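For reference, the four equations are commonly written as follows for a closed system of fixed composition doing only pressure-volume work. The symbols (U internal energy, H enthalpy, F Helmholtz free energy, G Gibbs free energy) and the sign conventions are the usual textbook ones and are assumed here rather than fixed by the text.

$$
\begin{aligned}
dU &= T\,dS - P\,dV \\
dH &= T\,dS + V\,dP \\
dF &= -S\,dT - P\,dV \\
dG &= -S\,dT + V\,dP
\end{aligned}
$$

Each line follows from the first by a Legendre transformation, using the definitions H = U + PV, F = U − TS, and G = H − TS.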

In thermodynamics, entropy is a numerical quantity that shows that many physical processes can go in only one direction in time. For example, someone can pour cream into coffee and mix it, but they cannot "unmix" it; they can burn a piece of wood, but cannot "unburn" it. The word 'entropy' has entered popular usage to refer to a lack of order or predictability, or to a gradual decline into disorder. A more physical interpretation of thermodynamic entropy refers to the spread of energy or matter, or to the extent and diversity of microscopic motion.

In statistical mechanics, thermal fluctuations are random deviations of an atomic system from its average state that occur in a system at equilibrium. All thermal fluctuations become larger and more frequent as the temperature increases, and likewise they decrease as the temperature approaches absolute zero.
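The temperature dependence can be illustrated with the variance of the energy in the canonical ensemble. The sketch below assumes a system with discrete energy levels and units in which the Boltzmann constant equals 1; the two-level example and the function name are hypothetical.

```python
import math


def energy_variance(energies, temperature):
    """Variance of the energy over Boltzmann-weighted levels (k_B = 1),
    used here as a simple measure of the size of thermal fluctuations."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    beta = 1.0 / temperature
    # Shift by the lowest level so the exponentials cannot overflow.
    e_min = min(energies)
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    total = sum(weights)
    probabilities = [w / total for w in weights]
    mean = sum(p * e for p, e in zip(probabilities, energies))
    return sum(p * (e - mean) ** 2 for p, e in zip(probabilities, energies))


# Hypothetical two-level system with level spacing 1.
for temperature in (0.01, 0.1, 1.0, 10.0):
    print(temperature, energy_variance([0.0, 1.0], temperature))
```

In this toy system the variance vanishes as the temperature approaches zero and grows with temperature, although it saturates at high temperature because the energy spectrum is bounded.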

The Gibbs rotational ensemble represents the possible states of a mechanical system in thermal and rotational equilibrium at a given temperature and angular velocity. The Jaynes procedure can be used to obtain this ensemble. An ensemble is the set of microstates corresponding to a given macrostate.