A central processing unit (CPU)—also called a central processor or main processor—is the most important processor in a given computer. Its electronic circuitry executes instructions of a computer program, such as arithmetic, logic, controlling, and input/output (I/O) operations. This role contrasts with that of external components, such as main memory and I/O circuitry, and specialized coprocessors such as graphics processing units (GPUs).

Static random-access memory is a type of random-access memory (RAM) that uses latching circuitry (flip-flop) to store each bit. SRAM is volatile memory; data is lost when power is removed.

Dynamic random-access memory is a type of random-access semiconductor memory that stores each bit of data in a memory cell, usually consisting of a tiny capacitor and a transistor, both typically based on metal–oxide–semiconductor (MOS) technology. While most DRAM memory cell designs use a capacitor and transistor, some only use two transistors. In the designs where a capacitor is used, the capacitor can either be charged or discharged; these two states are taken to represent the two values of a bit, conventionally called 0 and 1. The electric charge on the capacitors gradually leaks away; without intervention the data on the capacitor would soon be lost. To prevent this, DRAM requires an external memory refresh circuit which periodically rewrites the data in the capacitors, restoring them to their original charge. This refresh process is the defining characteristic of dynamic random-access memory, in contrast to static random-access memory (SRAM) which does not require data to be refreshed. Unlike flash memory, DRAM is volatile memory, since it loses its data quickly when power is removed. However, DRAM does exhibit limited data remanence.

In automata theory, sequential logic is a type of logic circuit whose output depends on the present value of its input signals and on the sequence of past inputs, the input history. This is in contrast to combinational logic, whose output is a function of only the present input. That is, sequential logic has state (memory) while combinational logic does not.

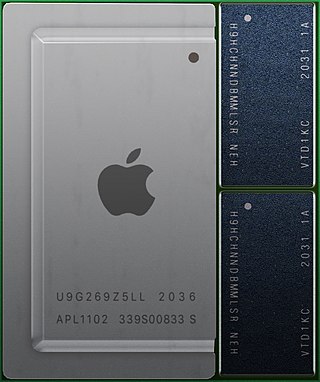

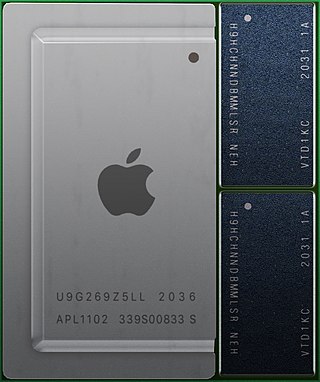

A system on a chip or system-on-chip is an integrated circuit that integrates most or all components of a computer or other electronic system. These components almost always include on-chip central processing unit (CPU), memory interfaces, input/output devices, input/output interfaces, and secondary storage interfaces, often alongside other components such as radio modems and a graphics processing unit (GPU) – all on a single substrate or microchip. SoCs may contain digital, and also analog, mixed-signal, and often radio frequency signal processing functions.

In electronics and especially synchronous digital circuits, a clock signal is an electronic logic signal which oscillates between a high and a low state at a constant frequency and is used like a metronome to synchronize actions of digital circuits. In a synchronous logic circuit, the most common type of digital circuit, the clock signal is applied to all storage devices, flip-flops and latches, and causes them all to change state simultaneously, preventing race conditions.

This is an index of articles relating to electronics and electricity or natural electricity and things that run on electricity and things that use or conduct electricity.

JTAG is an industry standard for verifying designs and testing printed circuit boards after manufacture.

Column address strobe latency, also called CAS latency or CL, is the delay in clock cycles between the READ command and the moment data is available. In asynchronous DRAM, the interval is specified in nanoseconds. In synchronous DRAM, the interval is specified in clock cycles. Because the latency is dependent upon a number of clock ticks instead of absolute time, the actual time for an SDRAM module to respond to a CAS event might vary between uses of the same module if the clock rate differs.

In digital electronics, a synchronous circuit is a digital circuit in which the changes in the state of memory elements are synchronized by a clock signal. In a sequential digital logic circuit, data are stored in memory devices called flip-flops or latches. The output of a flip-flop is constant until a pulse is applied to its "clock" input, upon which the input of the flip-flop is latched into its output. In a synchronous logic circuit, an electronic oscillator called the clock generates a string (sequence) of pulses, the "clock signal". This clock signal is applied to every storage element, so in an ideal synchronous circuit, every change in the logical levels of its storage components is simultaneous. Ideally, the input to each storage element has reached its final value before the next clock occurs, so the behaviour of the whole circuit can be predicted exactly. Practically, some delay is required for each logical operation, resulting in a maximum speed limitations at which each synchronous system can run.

Asynchronous circuit is a sequential digital logic circuit that does not use a global clock circuit or signal generator to synchronize its components. Instead, the components are driven by a handshaking circuit which indicates a completion of a set of instructions. Handshaking works by simple data transfer protocols. Many synchronous circuits were developed in early 1950s as part of bigger asynchronous systems. Asynchronous circuits and theory surrounding is a part of several steps in integrated circuit design, a field of digital electronics engineering.

A synchronous programming language is a computer programming language optimized for programming reactive systems. Computer systems can be sorted in three main classes: (1) transformational systems that take some inputs, process them, deliver their outputs, and terminate their execution; a typical example is a compiler; (2) interactive systems that interact continuously with their environment, at their own speed; a typical example is the web; and (3) reactive systems that interact continuously with their environment, at a speed imposed by the environment; a typical example is the automatic flight control system of modern airplanes. Reactive systems must therefore react to stimuli from the environment within strict time bounds. For this reason they are often also called real-time systems, and are found often in embedded systems.

In electronics, metastability is the ability of a digital electronic system to persist for an unbounded time in an unstable equilibrium or metastable state. In digital logic circuits, a digital signal is required to be within certain voltage or current limits to represent a '0' or '1' logic level for correct circuit operation; if the signal is within a forbidden intermediate range it may cause faulty behavior in logic gates the signal is applied to. In metastable states, the circuit may be unable to settle into a stable '0' or '1' logic level within the time required for proper circuit operation. As a result, the circuit can act in unpredictable ways, and may lead to a system failure, sometimes referred to as a "glitch". Metastability is an instance of the Buridan's ass paradox.

In computer architecture, clock gating is a popular power management technique used in many synchronous circuits for reducing dynamic power dissipation, by removing the clock signal when the circuit is not in use or ignores clock signal. Clock gating saves power by pruning the clock tree, at the cost of adding more logic to a circuit. Pruning the clock disables portions of the circuitry so that the flip-flops in them do not have to switch states. Switching states consumes power. When not being switched, the switching power consumption goes to zero, and only leakage currents are incurred.

Arbiters are electronic devices that allocate access to shared resources.

The primary focus of this article is asynchronous control in digital electronic systems. In a synchronous system, operations are coordinated by one, or more, centralized clock signals. An asynchronous system, in contrast, has no global clock. Asynchronous systems do not depend on strict arrival times of signals or messages for reliable operation. Coordination is achieved using event-driven architecture triggered by network packet arrival, changes (transitions) of signals, handshake protocols, and other methods.

A network on a chip or network-on-chip is a network-based communications subsystem on an integrated circuit ("microchip"), most typically between modules in a system on a chip (SoC). The modules on the IC are typically semiconductor IP cores schematizing various functions of the computer system, and are designed to be modular in the sense of network science. The network on chip is a router-based packet switching network between SoC modules.

In digital electronic design a clock domain crossing (CDC), or simply clock crossing, is the traversal of a signal in a synchronous digital circuit from one clock domain into another. If a signal does not assert long enough and is not registered, it may appear asynchronous on the incoming clock boundary.

The asynchronous array of simple processors (AsAP) architecture comprises a 2-D array of reduced complexity programmable processors with small scratchpad memories interconnected by a reconfigurable mesh network. AsAP was developed by researchers in the VLSI Computation Laboratory (VCL) at the University of California, Davis and achieves high performance and energy-efficiency, while using a relatively small circuit area. It was made in 2006.

SpiNNaker is a massively parallel, manycore supercomputer architecture designed by the Advanced Processor Technologies Research Group (APT) at the Department of Computer Science, University of Manchester. It is composed of 57,600 processing nodes, each with 18 ARM9 processors and 128 MB of mobile DDR SDRAM, totalling 1,036,800 cores and over 7 TB of RAM. The computing platform is based on spiking neural networks, useful in simulating the human brain.