The knapsack problem is the following problem in combinatorial optimization:

The travelling salesman problem, also known as the travelling salesperson problem (TSP), asks the following question: "Given a list of cities and the distances between each pair of cities, what is the shortest possible route that visits each city exactly once and returns to the origin city?" It is an NP-hard problem in combinatorial optimization, important in theoretical computer science and operations research.

In machine learning, support vector machines are supervised max-margin models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues SVMs are one of the most studied models, being based on statistical learning frameworks or VC theory proposed by Vapnik and Chervonenkis (1974).

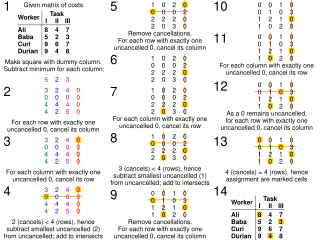

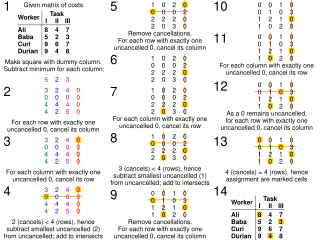

The assignment problem is a fundamental combinatorial optimization problem. In its most general form, the problem is as follows:

An inverse problem in science is the process of calculating from a set of observations the causal factors that produced them: for example, calculating an image in X-ray computed tomography, source reconstruction in acoustics, or calculating the density of the Earth from measurements of its gravity field. It is called an inverse problem because it starts with the effects and then calculates the causes. It is the inverse of a forward problem, which starts with the causes and then calculates the effects.

Multidimensional scaling (MDS) is a means of visualizing the level of similarity of individual cases of a data set. MDS is used to translate distances between each pair of objects in a set into a configuration of points mapped into an abstract Cartesian space.

Combinatorial optimization is a subfield of mathematical optimization that consists of finding an optimal object from a finite set of objects, where the set of feasible solutions is discrete or can be reduced to a discrete set. Typical combinatorial optimization problems are the travelling salesman problem ("TSP"), the minimum spanning tree problem ("MST"), and the knapsack problem. In many such problems, such as the ones previously mentioned, exhaustive search is not tractable, and so specialized algorithms that quickly rule out large parts of the search space or approximation algorithms must be resorted to instead.

In computer science, parameterized complexity is a branch of computational complexity theory that focuses on classifying computational problems according to their inherent difficulty with respect to multiple parameters of the input or output. The complexity of a problem is then measured as a function of those parameters. This allows the classification of NP-hard problems on a finer scale than in the classical setting, where the complexity of a problem is only measured as a function of the number of bits in the input. This appears to have been first demonstrated in Gurevich, Stockmeyer & Vishkin (1984). The first systematic work on parameterized complexity was done by Downey & Fellows (1999).

The quadratic assignment problem (QAP) is one of the fundamental combinatorial optimization problems in the branch of optimization or operations research in mathematics, from the category of the facilities location problems first introduced by Koopmans and Beckmann.

A fully polynomial-time approximation scheme (FPTAS) is an algorithm for finding approximate solutions to function problems, especially optimization problems. An FPTAS takes as input an instance of the problem and a parameter ε > 0. It returns as output a value which is at least times the correct value, and at most times the correct value.

In evolutionary computation, differential evolution (DE) is a method that optimizes a problem by iteratively trying to improve a candidate solution with regard to a given measure of quality. Such methods are commonly known as metaheuristics as they make few or no assumptions about the optimized problem and can search very large spaces of candidate solutions. However, metaheuristics such as DE do not guarantee an optimal solution is ever found.

Optimal job scheduling is a class of optimization problems related to scheduling. The inputs to such problems are a list of jobs and a list of machines. The required output is a schedule – an assignment of jobs to machines. The schedule should optimize a certain objective function. In the literature, problems of optimal job scheduling are often called machine scheduling, processor scheduling, multiprocessor scheduling, or just scheduling.

In applied mathematics, the maximum generalized assignment problem is a problem in combinatorial optimization. This problem is a generalization of the assignment problem in which both tasks and agents have a size. Moreover, the size of each task might vary from one agent to the other.

In the case of systems composed of subsystems, the classification of quantum-entangledstates is richer than in the bipartite case. Indeed, in multipartite entanglement apart from fully separable states and fully entangled states, there also exists the notion of partially separable states.

In the mathematical fields of graph theory and combinatorial optimization, the bipartite dimension or biclique cover number of a graph G = (V, E) is the minimum number of bicliques (that is complete bipartite subgraphs), needed to cover all edges in E. A collection of bicliques covering all edges in G is called a biclique edge cover, or sometimes biclique cover. The bipartite dimension of G is often denoted by the symbol d(G).

David Bernard Shmoys is a Professor in the School of Operations Research and Information Engineering and the Department of Computer Science at Cornell University. He obtained his Ph.D. from the University of California, Berkeley in 1984. His major focus has been in the design and analysis of algorithms for discrete optimization problems.

In the study of algorithms, an LP-type problem is an optimization problem that shares certain properties with low-dimensional linear programs and that may be solved by similar algorithms. LP-type problems include many important optimization problems that are not themselves linear programs, such as the problem of finding the smallest circle containing a given set of planar points. They may be solved by a combination of randomized algorithms in an amount of time that is linear in the number of elements defining the problem, and subexponential in the dimension of the problem.

Biogeography-based optimization (BBO) is an evolutionary algorithm (EA) that optimizes a function by stochastically and iteratively improving candidate solutions with regard to a given measure of quality, or fitness function. BBO belongs to the class of metaheuristics since it includes many variations, and since it does not make any assumptions about the problem and can therefore be applied to a wide class of problems.

Dispersive flies optimisation (DFO) is a bare-bones swarm intelligence algorithm which is inspired by the swarming behaviour of flies hovering over food sources. DFO is a simple optimiser which works by iteratively trying to improve a candidate solution with regard to a numerical measure that is calculated by a fitness function. Each member of the population, a fly or an agent, holds a candidate solution whose suitability can be evaluated by their fitness value. Optimisation problems are often formulated as either minimisation or maximisation problems.