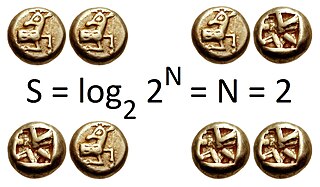

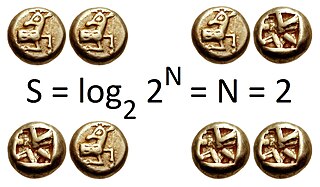

In information theory, the entropy of a random variable quantifies the average level of uncertainty or information associated with the variable's potential states or possible outcomes. This measures the expected amount of information needed to describe the state of the variable, considering the distribution of probabilities across all potential states. Given a discrete random variable , which takes values in the set and is distributed according to , the entropy is where denotes the sum over the variable's possible values. The choice of base for , the logarithm, varies for different applications. Base 2 gives the unit of bits, while base e gives "natural units" nat, and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable.

In mathematics, the conjugate transpose, also known as the Hermitian transpose, of an complex matrix is an matrix obtained by transposing and applying complex conjugation to each entry. There are several notations, such as or , , or .

In thermodynamics, the Helmholtz free energy is a thermodynamic potential that measures the useful work obtainable from a closed thermodynamic system at a constant temperature (isothermal). The change in the Helmholtz energy during a process is equal to the maximum amount of work that the system can perform in a thermodynamic process in which temperature is held constant. At constant temperature, the Helmholtz free energy is minimized at equilibrium.

In probability theory and information theory, the mutual information (MI) of two random variables is a measure of the mutual dependence between the two variables. More specifically, it quantifies the "amount of information" obtained about one random variable by observing the other random variable. The concept of mutual information is intimately linked to that of entropy of a random variable, a fundamental notion in information theory that quantifies the expected "amount of information" held in a random variable.

In mathematics, the Hausdorff distance, or Hausdorff metric, also called Pompeiu–Hausdorff distance, measures how far two subsets of a metric space are from each other. It turns the set of non-empty compact subsets of a metric space into a metric space in its own right. It is named after Felix Hausdorff and Dimitrie Pompeiu.

In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable given that the value of another random variable is known. Here, information is measured in shannons, nats, or hartleys. The entropy of conditioned on is written as .

In mathematical statistics, the Kullback–Leibler (KL) divergence, denoted , is a type of statistical distance: a measure of how much a model probability distribution Q is different from a true probability distribution P. Mathematically, it is defined as

In information theory, the Rényi entropy is a quantity that generalizes various notions of entropy, including Hartley entropy, Shannon entropy, collision entropy, and min-entropy. The Rényi entropy is named after Alfréd Rényi, who looked for the most general way to quantify information while preserving additivity for independent events. In the context of fractal dimension estimation, the Rényi entropy forms the basis of the concept of generalized dimensions.

In mathematics, the Bernoulli scheme or Bernoulli shift is a generalization of the Bernoulli process to more than two possible outcomes. Bernoulli schemes appear naturally in symbolic dynamics, and are thus important in the study of dynamical systems. Many important dynamical systems exhibit a repellor that is the product of the Cantor set and a smooth manifold, and the dynamics on the Cantor set are isomorphic to that of the Bernoulli shift. This is essentially the Markov partition. The term shift is in reference to the shift operator, which may be used to study Bernoulli schemes. The Ornstein isomorphism theorem shows that Bernoulli shifts are isomorphic when their entropy is equal.

In information theory, information dimension is an information measure for random vectors in Euclidean space, based on the normalized entropy of finely quantized versions of the random vectors. This concept was first introduced by Alfréd Rényi in 1959.

In thermodynamics, the fundamental thermodynamic relation are four fundamental equations which demonstrate how four important thermodynamic quantities depend on variables that can be controlled and measured experimentally. Thus, they are essentially equations of state, and using the fundamental equations, experimental data can be used to determine sought-after quantities like G or H (enthalpy). The relation is generally expressed as a microscopic change in internal energy in terms of microscopic changes in entropy, and volume for a closed system in thermal equilibrium in the following way.

In quantum information theory, quantum relative entropy is a measure of distinguishability between two quantum states. It is the quantum mechanical analog of relative entropy.

The study of facility location problems (FLP), also known as location analysis, is a branch of operations research and computational geometry concerned with the optimal placement of facilities to minimize transportation costs while considering factors like avoiding placing hazardous materials near housing, and competitors' facilities. The techniques also apply to cluster analysis.

In theoretical computer science, graph theory, and mathematics, the conductance is a parameter of a Markov chain that is closely tied to its mixing time, that is, how rapidly the chain converges to its stationary distribution, should it exist. Equivalently, the conductance can be viewed as a parameter of a directed graph, in which case it can be used to analyze how quickly random walks in the graph converge.

The partition function or configuration integral, as used in probability theory, information theory and dynamical systems, is a generalization of the definition of a partition function in statistical mechanics. It is a special case of a normalizing constant in probability theory, for the Boltzmann distribution. The partition function occurs in many problems of probability theory because, in situations where there is a natural symmetry, its associated probability measure, the Gibbs measure, has the Markov property. This means that the partition function occurs not only in physical systems with translation symmetry, but also in such varied settings as neural networks, and applications such as genomics, corpus linguistics and artificial intelligence, which employ Markov networks, and Markov logic networks. The Gibbs measure is also the unique measure that has the property of maximizing the entropy for a fixed expectation value of the energy; this underlies the appearance of the partition function in maximum entropy methods and the algorithms derived therefrom.

The entropic vector or entropic function is a concept arising in information theory. It represents the possible values of Shannon's information entropy that subsets of one set of random variables may take. Understanding which vectors are entropic is a way to represent all possible inequalities between entropies of various subsets. For example, for any two random variables , their joint entropy is at most the sum of the entropies of and of :

Inequalities are very important in the study of information theory. There are a number of different contexts in which these inequalities appear.

In statistical mechanics, the cluster expansion is a power series expansion of the partition function of a statistical field theory around a model that is a union of non-interacting 0-dimensional field theories. Unlike the usual perturbation expansion which usually leads to a divergent asymptotic series, the cluster expansion may converge within a non-trivial region, in particular when the interaction is small and short-ranged.

In probability theory and information theory, adjusted mutual information, a variation of mutual information may be used for comparing clusterings. It corrects the effect of agreement solely due to chance between clusterings, similar to the way the adjusted rand index corrects the Rand index. It is closely related to variation of information: when a similar adjustment is made to the VI index, it becomes equivalent to the AMI. The adjusted measure however is no longer metrical.

t-distributed stochastic neighbor embedding (t-SNE) is a statistical method for visualizing high-dimensional data by giving each datapoint a location in a two or three-dimensional map. It is based on Stochastic Neighbor Embedding originally developed by Geoffrey Hinton and Sam Roweis, where Laurens van der Maaten and Hinton proposed the t-distributed variant. It is a nonlinear dimensionality reduction technique for embedding high-dimensional data for visualization in a low-dimensional space of two or three dimensions. Specifically, it models each high-dimensional object by a two- or three-dimensional point in such a way that similar objects are modeled by nearby points and dissimilar objects are modeled by distant points with high probability.