Types


The resolution of digital cameras can be described in many different ways.

Pixel count

The term resolution is often treated as equivalent to pixel count in digital imaging, but international standards in the digital camera field specify that it should instead be called "Number of Total Pixels" in relation to image sensors, and "Number of Recorded Pixels" for what is fully captured. Hence, CIPA DCG-001 calls for notation such as "Number of Recorded Pixels 1000 × 1500". [1] [2] According to the same standards, the "Number of Effective Pixels" of an image sensor or digital camera is the count of pixel sensors that contribute to the final image (including pixels that are not in the final image but nevertheless support the image filtering process), as opposed to the number of total pixels, which also includes unused or light-shielded pixels around the edges.

An image N pixels high by M pixels wide can have any resolution less than N lines per picture height, or N TV lines. But when pixel counts are referred to as "resolution", the convention is to describe the pixel resolution with a pair of positive integers, where the first number is the number of pixel columns (width) and the second is the number of pixel rows (height), for example 7680 × 6876. Another popular convention is to cite resolution as the total number of pixels in the image, typically given in megapixels, which is calculated by multiplying pixel columns by pixel rows and dividing by one million. Other conventions describe pixels per unit length or per unit area, such as pixels per inch or per square inch. None of these pixel counts is a true resolution in the sense of the finest detail an imaging system can distinguish, but they are widely referred to as such; they serve as upper bounds on image resolution.
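The width-first "W × H" notation and the megapixel convention can be sketched in a few lines of Python. The function names `megapixels` and `describe` are hypothetical helpers chosen for illustration, and the one-decimal rounding is an assumption about how the figure would be quoted.

```python
def megapixels(width_px, height_px):
    """Total pixel count in millions: columns x rows / 1,000,000."""
    if width_px <= 0 or height_px <= 0:
        raise ValueError("pixel dimensions must be positive integers")
    return width_px * height_px / 1_000_000


def describe(width_px, height_px):
    """Format a size using the width-first 'W × H' convention plus megapixels."""
    return f"{width_px} × {height_px} ({megapixels(width_px, height_px):.1f} MP)"


print(describe(7680, 6876))  # 7680 × 6876 (52.8 MP)
```

Both numbers describe only how many samples the image holds, which is why they act as upper bounds rather than measures of resolved detail.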

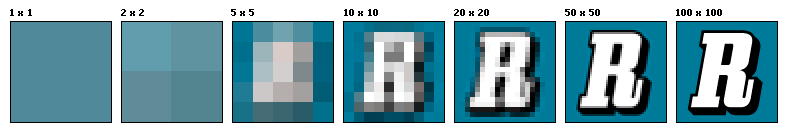

The illustration shows how the same image might appear at different pixel resolutions if the pixels were rendered crudely as sharp squares (normally a smooth image reconstruction from pixels would be preferred, but sharp squares make the individual pixels easier to see).
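Rendering pixels as sharp squares amounts to nearest-neighbor (pixel-replication) enlargement: each source value is copied into a block of identical output pixels. The sketch below assumes a grayscale image stored as a list of rows of integers; `upscale_nearest` is a hypothetical name, not a library function.

```python
def upscale_nearest(image, factor):
    """Enlarge a grayscale image by repeating each pixel in a factor x factor block."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    out = []
    for row in image:
        # Repeat each value horizontally, then repeat the widened row vertically.
        wide_row = [value for value in row for _ in range(factor)]
        out.extend(list(wide_row) for _ in range(factor))
    return out


tiny = [[0, 255],
        [255, 0]]
for row in upscale_nearest(tiny, 2):
    print(row)
```

The enlarged image has four times as many pixels but no new detail, which is why smooth reconstruction is normally preferred for viewing.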

An image that is 2048 pixels wide and 1536 pixels high has a total of 2048 × 1536 = 3,145,728 pixels, or about 3.1 megapixels. One could refer to it as 2048 by 1536 or as a 3.1-megapixel image. It would be a very low-quality image (72 ppi) if printed about 28.4 inches wide, but a very good-quality image (300 ppi) if printed about 7 inches wide.
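The print sizes above follow from dividing each pixel dimension by the chosen pixels-per-inch value. This sketch reproduces that arithmetic; `print_size_inches` is a hypothetical helper, and the 72 and 300 ppi figures are the ones quoted in the text.

```python
def print_size_inches(pixels, ppi):
    """Physical length in inches when `pixels` samples are printed at `ppi`."""
    if ppi <= 0:
        raise ValueError("ppi must be positive")
    return pixels / ppi


width_px, height_px = 2048, 1536
print(f"total pixels: {width_px * height_px:,}")
for ppi in (72, 300):
    w = print_size_inches(width_px, ppi)
    h = print_size_inches(height_px, ppi)
    print(f"{ppi} ppi: {w:.1f} in × {h:.1f} in")
```

This prints 3,145,728 total pixels, a 28.4 in × 21.3 in print at 72 ppi, and a 6.8 in × 5.1 in print at 300 ppi.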

The number of photodiodes in a color digital camera image sensor is often a multiple of the number of pixels in the image it produces, because information from an array of color image sensors is used to reconstruct the color of a single pixel. The image has to be interpolated or demosaiced to produce all three colors for each output pixel.
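The simplest way to combine neighboring color sensors into one pixel is "superpixel" demosaicing, sketched below under the assumption of a Bayer mosaic with an RGGB layout. Each 2×2 cell of photodiodes becomes one RGB output pixel, so the photodiode count is four times the pixel count. Practical cameras usually interpolate instead, producing one full-color pixel per photodiode; `superpixel_demosaic` is a hypothetical name used only for illustration.

```python
def superpixel_demosaic(mosaic):
    """Collapse each 2x2 cell of an RGGB Bayer mosaic into one (R, G, B) pixel.

    Assumed cell layout:
        R G
        G B
    """
    rows, cols = len(mosaic), len(mosaic[0])
    if rows % 2 or cols % 2:
        raise ValueError("mosaic dimensions must be even")
    rgb = []
    for y in range(0, rows, 2):
        out_row = []
        for x in range(0, cols, 2):
            r = mosaic[y][x]
            g = (mosaic[y][x + 1] + mosaic[y + 1][x]) / 2  # average the two green sites
            b = mosaic[y + 1][x + 1]
            out_row.append((r, g, b))
        rgb.append(out_row)
    return rgb


mosaic = [
    [200, 120, 190, 110],
    [100,  50,  90,  40],
]
print(superpixel_demosaic(mosaic))  # 8 photodiodes -> 2 RGB pixels
```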

Spatial resolution

The terms blurriness and sharpness are used for digital images, but other descriptors are used for the hardware that captures and displays the images.

Spatial resolution in radiology is the ability of the imaging modality to differentiate two objects. Low spatial resolution techniques will be unable to differentiate between two objects that are relatively close together.

The measure of how closely lines can be resolved in an image is called spatial resolution, and it depends on properties of the system creating the image, not just the pixel resolution in pixels per inch (ppi). For practical purposes, the clarity of the image is decided by its spatial resolution, not the number of pixels in an image. In effect, spatial resolution is the number of independent pixel values per unit length.
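One way to see why pixel count is only an upper bound is the sampling (Nyquist) limit: a grid with pixel pitch p cannot record more than one line pair per two pixels, that is, 1/(2p) line pairs per unit length. The lens, focus, and other system properties can only lower the resolution below this figure. The sketch assumes pitch in micrometers and a hypothetical helper name.

```python
def nyquist_lp_per_mm(pixel_pitch_um):
    """Highest spatial frequency (line pairs per mm) a pixel grid can sample.

    One line pair needs at least two pixels, so the limit is 1 / (2 * pitch).
    With pitch in micrometers: 1000 um/mm / (2 * pitch_um).
    """
    if pixel_pitch_um <= 0:
        raise ValueError("pixel pitch must be positive")
    return 1000 / (2 * pixel_pitch_um)


print(nyquist_lp_per_mm(4))  # 125.0 line pairs/mm for a 4 um pitch
```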

The spatial resolution of consumer displays ranges from 50 to 800 pixel lines per inch. With scanners, optical resolution is sometimes used to distinguish spatial resolution from the number of pixels per inch.
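A display's pixel density is commonly computed from its pixel dimensions and its diagonal size: the diagonal length in pixels divided by the diagonal length in inches. This sketch assumes square pixels; `display_ppi` is a hypothetical name and the 24-inch 1920 × 1080 panel is only an example.

```python
import math


def display_ppi(width_px, height_px, diagonal_in):
    """Pixels per inch along the diagonal, assuming square pixels."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in


print(f"{display_ppi(1920, 1080, 24):.1f} ppi")  # about 91.8 ppi
```

The result falls within the 50 to 800 pixels-per-inch range quoted above.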

In remote sensing, spatial resolution is typically limited by diffraction, as well as by aberrations, imperfect focus, and atmospheric distortion. The ground sample distance (GSD) of an image, the pixel spacing on the Earth's surface, is typically considerably smaller than the resolvable spot size.
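The comparison between ground sample distance and resolvable spot size can be sketched with two textbook approximations: GSD = pixel pitch × altitude / focal length (nadir view, flat terrain), and a diffraction-limited spot from the Rayleigh angle 1.22 λ / D projected to the ground. All parameter values below are hypothetical, and aberrations, defocus, and atmospheric distortion, which would only enlarge the spot, are ignored.

```python
def ground_sample_distance_m(pixel_pitch_m, altitude_m, focal_length_m):
    """Pixel spacing projected onto the ground (nadir view, flat terrain)."""
    return pixel_pitch_m * altitude_m / focal_length_m


def diffraction_spot_m(wavelength_m, aperture_m, altitude_m):
    """Ground size of the Rayleigh angle 1.22 * wavelength / aperture diameter."""
    return 1.22 * wavelength_m / aperture_m * altitude_m


# Hypothetical sensor: 8 um pixels, 10 m focal length, 0.5 m aperture, 500 km altitude.
gsd = ground_sample_distance_m(8e-6, 500e3, 10.0)
spot = diffraction_spot_m(550e-9, 0.5, 500e3)
print(f"GSD {gsd:.2f} m, diffraction-limited spot {spot:.2f} m")
```

With these assumed values the GSD (0.40 m) is smaller than the diffraction-limited spot (0.67 m), so the optics rather than the pixel grid set the resolvable detail.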

In astronomy, spatial resolution is often measured in data points per arcsecond subtended at the point of observation, because the physical distance between objects in the image depends on their distance from the observer, which varies widely with the object of interest. In electron microscopy, on the other hand, line or fringe resolution is the minimum detectable separation between adjacent parallel lines (e.g., between planes of atoms), whereas point resolution is the minimum separation between adjacent points that can be both detected and interpreted, for instance as adjacent columns of atoms. The former often helps one detect periodicity in specimens, whereas the latter (although more difficult to achieve) is key to visualizing how individual atoms interact.
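For a telescope and camera, the angular sampling can be estimated with the small-angle plate-scale relation: arcseconds per pixel = 206,265 × pixel pitch / focal length (same length units), and data points per arcsecond is its reciprocal. The sketch assumes pitch in micrometers and focal length in millimeters; the 3.76 µm and 1000 mm values are hypothetical.

```python
import math

ARCSEC_PER_RADIAN = math.degrees(1.0) * 3600  # about 206,265


def plate_scale_arcsec_per_px(pixel_pitch_um, focal_length_mm):
    """Angle subtended by one pixel, using the small-angle approximation."""
    pitch_mm = pixel_pitch_um / 1000.0
    return ARCSEC_PER_RADIAN * pitch_mm / focal_length_mm


scale = plate_scale_arcsec_per_px(3.76, 1000.0)
print(f"{scale:.3f} arcsec/pixel, {1 / scale:.2f} pixels/arcsec")
```

This gives roughly 0.776 arcseconds per pixel, or about 1.29 data points per arcsecond.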

In stereoscopic 3D images, spatial resolution could be defined as the spatial information recorded or captured by the two viewpoints of a stereo camera (left and right cameras).

Spectral resolution

Pixel encoding limits the information stored in a digital image. The term color profile is used for digital images, but other descriptors are used for the hardware that captures and displays them.

Spectral resolution is the ability to resolve spectral features and bands into their separate components. Color images distinguish light of different spectra. Multispectral images can resolve even finer differences of spectrum or wavelength by measuring and storing more than the three color channels of common RGB images.
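A toy example shows why more bands resolve finer spectral differences: two spectra can give identical totals in three broad bands yet differ when split into six narrower ones. The sketch idealizes bands as contiguous, equal-width, non-overlapping bins of evenly spaced samples, which real RGB or multispectral filters are not; `band_sums` and the sample values are hypothetical.

```python
def band_sums(spectrum, n_bands):
    """Integrate evenly spaced spectral samples into n contiguous, equal-width bands."""
    if len(spectrum) % n_bands:
        raise ValueError("number of samples must be divisible by n_bands")
    width = len(spectrum) // n_bands
    return [sum(spectrum[i:i + width]) for i in range(0, len(spectrum), width)]


a = [1, 3, 2, 2, 4, 0]
b = [3, 1, 2, 2, 0, 4]
print(band_sums(a, 3), band_sums(b, 3))    # [4, 4, 4] [4, 4, 4]: indistinguishable
print(band_sums(a, 6) == band_sums(b, 6))  # False: six bands separate them
```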

Temporal resolution

Temporal resolution (TR) is the precision of a measurement with respect to time.

Movie cameras and high-speed cameras can resolve events at different points in time. The time resolution used for movies is usually 24 to 48 frames per second (frames/s), whereas high-speed cameras may resolve 50 to 300 frames/s, or even more.
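The frame rate translates directly into the time between successive frames, which is the finest time step the recording can resolve. This sketch simply takes the reciprocal for the frame rates mentioned above; `frame_interval_ms` is a hypothetical helper.

```python
def frame_interval_ms(fps):
    """Time between consecutive frames, in milliseconds."""
    if fps <= 0:
        raise ValueError("frame rate must be positive")
    return 1000.0 / fps


for fps in (24, 48, 300):
    print(f"{fps} frames/s -> {frame_interval_ms(fps):.2f} ms between frames")
```

This prints intervals of about 41.67 ms, 20.83 ms, and 3.33 ms respectively.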

The Heisenberg uncertainty principle describes a fundamental limit on the spatial resolution of information about a particle's position: the more precisely its momentum is measured or known, the less precisely its position can be known.
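In its standard quantitative form, the principle bounds the product of the position and momentum uncertainties by the reduced Planck constant, so a smaller momentum uncertainty forces a larger lower bound on the position uncertainty:

```latex
\Delta x \, \Delta p \;\ge\; \frac{\hbar}{2}
\qquad\Longrightarrow\qquad
\Delta x \;\ge\; \frac{\hbar}{2\,\Delta p}
```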

This fundamental limitation can, in turn, be a factor in the maximum imaging resolution at subatomic scales, as can be encountered using scanning electron microscopes.

Radiometric resolution

Radiometric resolution determines how finely a system can represent or distinguish differences of intensity, and is usually expressed as a number of levels or a number of bits, for example, 8 bits or 256 levels, which is typical of computer image files. The higher the radiometric resolution, the more subtle differences of intensity or reflectivity can be represented, at least in theory. In practice, the effective radiometric resolution is typically limited by the noise level, rather than by the number of bits of representation.
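The bits-to-levels relation is 2 raised to the number of bits. The noise limitation can be approximated, as a rough rule of thumb rather than a standard definition, by asking how many noise-sized steps fit into the full signal range and taking the base-2 logarithm. The 12-bit range and 16-count noise level below are hypothetical values.

```python
import math


def levels(bits):
    """Number of distinct intensity levels representable with `bits` bits."""
    return 2 ** bits


def noise_limited_bits(full_scale, noise_rms):
    """Rough effective bit depth: log2 of how many noise-sized steps fit in the range."""
    if full_scale <= 0 or noise_rms <= 0:
        raise ValueError("full scale and noise must be positive")
    return math.log2(full_scale / noise_rms)


print(levels(8))                          # 256
print(noise_limited_bits(levels(12), 16))  # 8.0
```

Under these assumptions a 12-bit representation (4096 levels) with 16 counts of RMS noise carries only about 8 bits of usable intensity information.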