In mathematical optimization, the method of Lagrange multipliers is a strategy for finding the local maxima and minima of a function subject to equality constraints. It is named after the mathematician Joseph-Louis Lagrange. The basic idea is to convert a constrained problem into a form such that the derivative test of an unconstrained problem can still be applied. The relationship between the gradient of the function and gradients of the constraints rather naturally leads to a reformulation of the original problem, known as the Lagrangian function.

H∞methods are used in control theory to synthesize controllers to achieve stabilization with guaranteed performance. To use H∞ methods, a control designer expresses the control problem as a mathematical optimization problem and then finds the controller that solves this optimization. H∞ techniques have the advantage over classical control techniques in that H∞ techniques are readily applicable to problems involving multivariate systems with cross-coupling between channels; disadvantages of H∞ techniques include the level of mathematical understanding needed to apply them successfully and the need for a reasonably good model of the system to be controlled. It is important to keep in mind that the resulting controller is only optimal with respect to the prescribed cost function and does not necessarily represent the best controller in terms of the usual performance measures used to evaluate controllers such as settling time, energy expended, etc. Also, non-linear constraints such as saturation are generally not well-handled. These methods were introduced into control theory in the late 1970s-early 1980s by George Zames, J. William Helton , and Allen Tannenbaum.

In mathematical analysis, a function of bounded variation, also known as BV function, is a real-valued function whose total variation is bounded (finite): the graph of a function having this property is well behaved in a precise sense. For a continuous function of a single variable, being of bounded variation means that the distance along the direction of the y-axis, neglecting the contribution of motion along x-axis, traveled by a point moving along the graph has a finite value. For a continuous function of several variables, the meaning of the definition is the same, except for the fact that the continuous path to be considered cannot be the whole graph of the given function, but can be every intersection of the graph itself with a hyperplane parallel to a fixed x-axis and to the y-axis.

Global optimization is a branch of applied mathematics and numerical analysis that attempts to find the global minima or maxima of a function or a set of functions on a given set. It is usually described as a minimization problem because the maximization of the real-valued function is equivalent to the minimization of the function .

Topology optimization is a mathematical method that optimizes material layout within a given design space, for a given set of loads, boundary conditions and constraints with the goal of maximizing the performance of the system. Topology optimization is different from shape optimization and sizing optimization in the sense that the design can attain any shape within the design space, instead of dealing with predefined configurations.

Geometry processing, or mesh processing, is an area of research that uses concepts from applied mathematics, computer science and engineering to design efficient algorithms for the acquisition, reconstruction, analysis, manipulation, simulation and transmission of complex 3D models. As the name implies, many of the concepts, data structures, and algorithms are directly analogous to signal processing and image processing. For example, where image smoothing might convolve an intensity signal with a blur kernel formed using the Laplace operator, geometric smoothing might be achieved by convolving a surface geometry with a blur kernel formed using the Laplace-Beltrami operator.

In mathematics, a flow formalizes the idea of the motion of particles in a fluid. Flows are ubiquitous in science, including engineering and physics. The notion of flow is basic to the study of ordinary differential equations. Informally, a flow may be viewed as a continuous motion of points over time. More formally, a flow is a group action of the real numbers on a set.

In mathematics, more particularly in functional analysis, differential topology, and geometric measure theory, a k-current in the sense of Georges de Rham is a functional on the space of compactly supported differential k-forms, on a smooth manifold M. Currents formally behave like Schwartz distributions on a space of differential forms, but in a geometric setting, they can represent integration over a submanifold, generalizing the Dirac delta function, or more generally even directional derivatives of delta functions (multipoles) spread out along subsets of M.

In optics, a caustic or caustic network is the envelope of light rays reflected or refracted by a curved surface or object, or the projection of that envelope of rays on another surface. The caustic is a curve or surface to which each of the light rays is tangent, defining a boundary of an envelope of rays as a curve of concentrated light. Therefore, in the photo to the right, caustics can be seen as patches of light or their bright edges. These shapes often have cusp singularities.

In mathematics and its applications, the signed distance function of a set Ω in a metric space determines the distance of a given point x from the boundary of Ω, with the sign determined by whether x is in Ω. The function has positive values at points x inside Ω, it decreases in value as x approaches the boundary of Ω where the signed distance function is zero, and it takes negative values outside of Ω. However, the alternative convention is also sometimes taken instead.

In mathematics, Bochner spaces are a generalization of the concept of spaces to functions whose values lie in a Banach space which is not necessarily the space or of real or complex numbers.

Linear Programming Boosting (LPBoost) is a supervised classifier from the boosting family of classifiers. LPBoost maximizes a margin between training samples of different classes and hence also belongs to the class of margin-maximizing supervised classification algorithms. Consider a classification function

The topological derivative is, conceptually, a derivative of a shape functional with respect to infinitesimal changes in its topology, such as adding an infinitesimal hole or crack. When used in higher dimensions than one, the term topological gradient is also used to name the first-order term of the topological asymptotic expansion, dealing only with infinitesimal singular domain perturbations. It has applications in shape optimization, topology optimization, image processing and mechanical modeling.

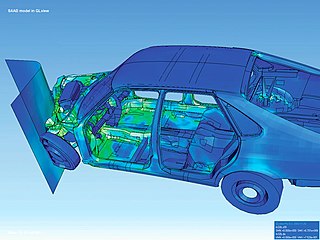

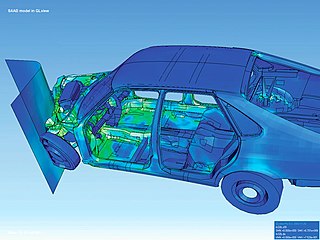

The finite element method (FEM) is a popular method for numerically solving differential equations arising in engineering and mathematical modeling. Typical problem areas of interest include the traditional fields of structural analysis, heat transfer, fluid flow, mass transport, and electromagnetic potential.

The adjoint state method is a numerical method for efficiently computing the gradient of a function or operator in a numerical optimization problem. It has applications in geophysics, seismic imaging, photonics and more recently in neural networks.

In mathematics, a free boundary problem is a partial differential equation to be solved for both an unknown function and an unknown domain . The segment of the boundary of which is not known at the outset of the problem is the free boundary.

The closest point method (CPM) is an embedding method for solving partial differential equations on surfaces. The closest point method uses standard numerical approaches such as finite differences, finite element or spectral methods in order to solve the embedding partial differential equation (PDE) which is equal to the original PDE on the surface. The solution is computed in a band surrounding the surface in order to be computationally efficient. In order to extend the data off the surface, the closest point method uses a closest point representation. This representation extends function values to be constant along directions normal to the surface.

In mathematics, the walk-on-spheres method (WoS) is a numerical probabilistic algorithm, or Monte-Carlo method, used mainly in order to approximate the solutions of some specific boundary value problem for partial differential equations (PDEs). The WoS method was first introduced by Mervin E. Muller in 1956 to solve Laplace's equation, and was since then generalized to other problems.

YaDICs is a program written to perform digital image correlation on 2D and 3D tomographic images. The program was designed to be both modular, by its plugin strategy and efficient, by it multithreading strategy. It incorporates different transformations, optimizing strategy, Global and/or local shape functions ...

In numerical mathematics, the gradient discretisation method (GDM) is a framework which contains classical and recent numerical schemes for diffusion problems of various kinds: linear or non-linear, steady-state or time-dependent. The schemes may be conforming or non-conforming, and may rely on very general polygonal or polyhedral meshes.