A chatbot is a software application that aims to mimic human conversation through text or voice interactions, typically online. The term "ChatterBot" was originally coined by Michael Mauldin in 1994 to describe conversational programs. Modern chatbots are artificial intelligence (AI) systems capable of maintaining a conversation with a user in natural language and of simulating the way a human would behave as a conversational partner. Such systems often use deep learning and natural language processing.

Neuromorphic computing is an approach to computing that is inspired by the structure and function of the human brain. A neuromorphic computer or chip is any device that uses physical artificial neurons to perform computations. In recent years, the term neuromorphic has been used to describe analog, digital, mixed-mode analog/digital VLSI, and software systems that implement models of neural systems. At the hardware level, neuromorphic computing can be realized with oxide-based memristors, spintronic memories, threshold switches, and transistors, among other devices. Software-based neuromorphic systems built from spiking neural networks can be trained with error backpropagation, e.g., using Python-based frameworks such as snnTorch, or with canonical learning rules from the biological learning literature, e.g., using BindsNET.
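To make the idea of a spiking neuron more concrete, here is a minimal sketch of a discrete-time leaky integrate-and-fire (LIF) neuron in plain Python. It does not use the snnTorch or BindsNET APIs; the function name `simulate_lif` and the parameter values `beta` (membrane decay per step) and `threshold` are illustrative assumptions.

```python
def simulate_lif(input_current, beta=0.9, threshold=1.0):
    """Simulate one discrete-time leaky integrate-and-fire (LIF) neuron.

    beta: fraction of membrane potential kept each step (hypothetical value).
    threshold: potential at which the neuron emits a spike (hypothetical value).
    Returns the spike train (0/1 per step) and the membrane potential trace.
    """
    mem = 0.0
    spikes, trace = [], []
    for current in input_current:
        # Leak part of the stored potential, then integrate the new input.
        mem = beta * mem + current
        if mem >= threshold:
            spikes.append(1)
            # Reset by subtraction after firing.
            mem -= threshold
        else:
            spikes.append(0)
        trace.append(mem)
    return spikes, trace


spikes, trace = simulate_lif([0.3] * 10)
print(spikes)  # [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
```

With a constant input, the membrane potential builds up over several steps, crosses the threshold, fires, and resets. Because the hard threshold has zero gradient almost everywhere, frameworks such as snnTorch train spiking networks with backpropagation by substituting a surrogate gradient for the spike function.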

An artificial general intelligence (AGI) is a hypothetical type of intelligent agent that could learn to accomplish any intellectual task that human beings or other animals can perform. Alternatively, AGI has been defined as an autonomous system that surpasses human capabilities in the majority of economically valuable tasks. Creating AGI is a primary goal of some artificial intelligence research and of companies such as OpenAI, DeepMind, and Anthropic. AGI is a common topic in science fiction and futures studies.

This is a timeline of artificial intelligence, sometimes alternatively called synthetic intelligence.

OpenAI is an American artificial intelligence (AI) research laboratory consisting of the non-profit OpenAI Incorporated and its for-profit subsidiary corporation OpenAI Limited Partnership. OpenAI conducts AI research with the declared intention of promoting and developing a friendly AI. OpenAI systems run on an Azure-based supercomputing platform from Microsoft.

In the field of artificial intelligence (AI), AI alignment research aims to steer AI systems towards humans’ intended goals, preferences, or ethical principles. An AI system is considered aligned if it advances the intended objectives. A misaligned AI system is competent at advancing some objectives, but not the intended ones.

This article presents a detailed timeline of events in the history of computing from 2020 to the present. For narratives explaining the overall developments, see the history of computing.

Synthetic media is a catch-all term for the artificial production, manipulation, and modification of data and media by automated means, especially through the use of artificial intelligence algorithms, for example to mislead people or to change an original meaning. Synthetic media as a field has grown rapidly since the creation of generative adversarial networks, primarily through the rise of deepfakes, as well as music synthesis, text generation, human image synthesis, speech synthesis, and more. Although experts use the term "synthetic media," the media often refer to individual methods such as deepfakes and text synthesis by their own terminology rather than by this umbrella term. Significant attention arose towards the field of synthetic media starting in 2017, when Motherboard reported on the emergence of AI-altered pornographic videos that inserted the faces of famous actresses. Potential hazards of synthetic media include the spread of misinformation, further loss of trust in institutions such as media and government, the mass automation of creative and journalistic jobs, and a retreat into AI-generated fantasy worlds. Synthetic media is an applied form of artificial imagination.

Generative Pre-trained Transformer 3 (GPT-3) is an autoregressive language model released by OpenAI in 2020 that uses deep learning to produce human-like text. When given a prompt, it will generate text that continues the prompt.
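"Autoregressive" means the model generates one token at a time, appending each output to the input before predicting the next one. The sketch below shows that loop with greedy decoding. The `TOY_MODEL` lookup table and the helper names are hypothetical stand-ins for a trained network; a real model such as GPT-3 conditions on the whole context, not only the last token, and usually samples rather than always taking the most likely token.

```python
# Hypothetical toy "model": maps the previous token to next-token probabilities.
TOY_MODEL = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "dog": {"ran": 0.8, "sat": 0.2},
    "sat": {"down": 0.9, "<eos>": 0.1},
    "ran": {"away": 0.9, "<eos>": 0.1},
    "down": {"<eos>": 1.0},
    "away": {"<eos>": 1.0},
}


def next_token_distribution(context):
    """Return a probability distribution over the next token given the context."""
    last = context[-1] if context else None
    # Unknown contexts simply end the sequence in this toy setup.
    return TOY_MODEL.get(last, {"<eos>": 1.0})


def generate(prompt, max_new_tokens=10):
    """Greedy autoregressive decoding: repeatedly append the most likely token."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        dist = next_token_distribution(tokens)
        token = max(dist, key=dist.get)
        if token == "<eos>":
            break
        tokens.append(token)  # the output becomes part of the next input
    return " ".join(tokens)


print(generate("the"))  # the cat sat down
```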

Generative Pre-trained Transformer 2 (GPT-2) is an open-source artificial intelligence large language model created by OpenAI in February 2019. GPT-2 translates text, answers questions, summarizes passages, and generates text output on a level that, while sometimes indistinguishable from that of humans, can become repetitive or nonsensical when generating long passages. It is a general-purpose learner; it was not specifically trained to do any of these tasks, and its ability to perform them is an extension of its general ability to accurately synthesize the next item in an arbitrary sequence. GPT-2 was created as a "direct scale-up" of OpenAI's 2018 GPT model ("GPT-1"), with a ten-fold increase in both its parameter count and the size of its training dataset.

Prompt engineering is a concept in artificial intelligence, particularly natural language processing. In prompt engineering, the description of the task that the AI is supposed to accomplish is embedded in the input, e.g., as a question, rather than given explicitly. Prompt engineering typically works by converting one or more tasks to a prompt-based dataset and training a language model with what has been called "prompt-based learning" or just "prompt learning".
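As a rough illustration of converting a task into a prompt-based dataset, the sketch below turns labeled sentiment-classification pairs into (prompt, target) text pairs that a language model could be trained or evaluated on. The template wording, the label words, and the function name `to_prompt_dataset` are assumptions chosen for illustration, not a standard format.

```python
# Hypothetical prompt template: the task description is embedded in the input text.
TEMPLATE = "Review: {text}\nIs this review positive or negative?\nAnswer:"

# Hypothetical mapping from class labels to the words the model should produce.
LABEL_WORDS = {1: " positive", 0: " negative"}


def to_prompt_dataset(examples, template=TEMPLATE, label_words=LABEL_WORDS):
    """Convert (text, label) classification pairs into (prompt, target) text pairs."""
    return [(template.format(text=text), label_words[label]) for text, label in examples]


dataset = to_prompt_dataset([
    ("Great acting and a clever plot.", 1),
    ("Dull and far too long.", 0),
])
for prompt, target in dataset:
    print(prompt + target)
    print("---")
```

The model never receives the task as a separate instruction or a numeric label; the question inside the prompt carries the task, and the answer is expressed as ordinary text for the model to continue.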

LaMDA is a family of conversational large language models developed by Google. Originally developed and introduced as Meena in 2020, the first-generation LaMDA was announced during the 2021 Google I/O keynote, while the second generation was announced the following year. In June 2022, LaMDA gained widespread attention when Google engineer Blake Lemoine claimed that the chatbot had become sentient. The scientific community has largely rejected Lemoine's claims, though the episode prompted discussion about the efficacy of the Turing test, which measures whether a computer can pass for a human. In February 2023, Google announced Bard, a conversational artificial intelligence chatbot powered by LaMDA, to counter the rise of OpenAI's ChatGPT.

The following scientific events occurred or are scheduled to occur in 2023.

ChatGPT is an artificial intelligence (AI) chatbot developed by OpenAI and released in November 2022. The name "ChatGPT" combines "Chat", referring to its chatbot functionality, and "GPT", which stands for Generative Pre-trained Transformer, a type of large language model (LLM). ChatGPT is built upon OpenAI's foundational GPT models, specifically GPT-3.5 and GPT-4, and has been fine-tuned for conversational applications using a combination of supervised and reinforcement learning techniques.
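OpenAI has stated that ChatGPT was trained with reinforcement learning from human feedback (RLHF) using the same methods as its earlier InstructGPT work, in which a reward model is trained on human comparisons of candidate responses. The sketch below shows only the pairwise comparison loss commonly used for such reward models, assuming each response has already been given a scalar score. It is not OpenAI's code, and the function name is hypothetical.

```python
import math


def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Return -log(sigmoid(reward_chosen - reward_rejected)).

    The loss is small when the reward model scores the human-preferred
    response above the rejected one, and grows as the ordering flips.
    """
    margin = reward_chosen - reward_rejected
    # Numerically stable form of log(1 + exp(-margin)), i.e. -log(sigmoid(margin)).
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


print(pairwise_preference_loss(2.0, 0.0))  # preferred response ranked higher: small loss
print(pairwise_preference_loss(0.0, 2.0))  # ordering reversed: larger loss
```

The trained reward model then supplies the reward signal that a reinforcement learning algorithm optimizes during fine-tuning.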

In artificial intelligence (AI), a hallucination or artificial hallucination is a confident response by an AI that does not seem to be justified by its training data, either because it is insufficient, biased or too specialised. For example, a hallucinating chatbot with no training data regarding Tesla's revenue might internally generate a random number that the algorithm ranks with high confidence, and then go on to falsely and repeatedly represent that Tesla's revenue is $13.6 billion, with no provided context that the figure was a product of the weakness of its generation algorithm.

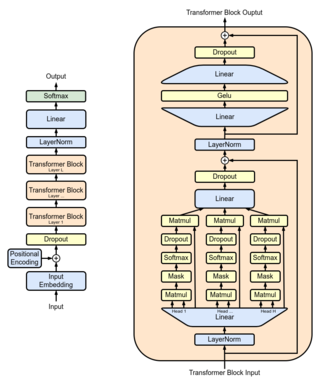

Generative Pre-trained Transformer 4 (GPT-4) is a multimodal large language model created by OpenAI, and the fourth in its numbered "GPT-n" series of GPT foundation models. It was released on March 14, 2023, and has been made publicly available in a limited form through the chatbot product ChatGPT Plus, with access to the GPT-4-based version of OpenAI's API provided via a waitlist. As a transformer-based model, GPT-4 was pretrained to predict the next token and was then fine-tuned with reinforcement learning from human and AI feedback for human alignment and policy compliance.
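Pretraining to "predict the next token" is usually framed as minimizing the cross-entropy between the model's predicted distribution at each position and the token that actually comes next. The sketch below computes that loss from raw scores (logits) in plain Python. The function names and toy numbers are assumptions; a real model would produce the logits with a transformer over a vocabulary of tens of thousands of tokens.

```python
import math


def shift_for_next_token(token_ids):
    """Pair each input position with the token that follows it."""
    return token_ids[:-1], token_ids[1:]


def next_token_loss(logits_per_position, targets):
    """Average cross-entropy of the next-token targets under softmax(logits).

    logits_per_position[t] holds one unnormalized score per vocabulary entry;
    targets[t] is the index of the token that actually comes next.
    """
    if len(logits_per_position) != len(targets):
        raise ValueError("need one row of logits per target token")
    total = 0.0
    for logits, target in zip(logits_per_position, targets):
        # Log-sum-exp with max subtraction for numerical stability.
        m = max(logits)
        log_z = m + math.log(sum(math.exp(x - m) for x in logits))
        total += log_z - logits[target]  # equals -log(softmax(logits)[target])
    return total / len(targets)


tokens = [3, 1, 2]
inputs, targets = shift_for_next_token(tokens)
# One row of logits per input position over a toy 4-token vocabulary.
logits = [[0.1, 2.0, 0.3, 0.0], [0.0, 0.2, 1.5, 0.1]]
print(next_token_loss(logits, targets))
```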

Generative pre-trained transformers (GPT) are a type of large language model (LLM) and a prominent framework for generative artificial intelligence. The first GPT was introduced in 2018 by the American artificial intelligence (AI) company OpenAI. GPT models are artificial neural networks that are based on the transformer architecture, pretrained on large data sets of unlabelled text, and able to generate novel human-like content. As of 2023, most LLMs have these characteristics and are sometimes referred to broadly as GPTs.
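The transformer architecture is built around self-attention. The following is a minimal single-head sketch of causal (masked) scaled dot-product attention in plain Python, the variant used in GPT-style decoders so that each position can attend only to itself and earlier positions. To keep it short, it assumes the inputs are used directly as queries, keys, and values; a real transformer first applies learned projection matrices and stacks many such layers with feed-forward blocks.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def causal_self_attention(x):
    """Single-head scaled dot-product attention with a causal mask.

    x: list of T vectors, each a list of d floats. Simplification: x is used
    directly as queries, keys and values (no learned projections).
    """
    d = len(x[0])
    out = []
    for t, q in enumerate(x):
        # Causal mask: position t only scores positions 0..t.
        scores = [sum(qi * ki for qi, ki in zip(q, x[s])) / math.sqrt(d) for s in range(t + 1)]
        weights = softmax(scores)
        # Output is the attention-weighted average of the visible value vectors.
        out.append([sum(w * x[s][j] for s, w in enumerate(weights)) for j in range(d)])
    return out


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out = causal_self_attention(x)
print(out[0])  # [1.0, 0.0]: position 0 can only attend to itself
```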

Generative artificial intelligence or generative AI is a type of artificial intelligence (AI) system capable of generating text, images, or other media in response to prompts. Generative AI models learn the patterns and structure of their input training data, and then generate new data that has similar characteristics.
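The learn-then-generate pattern can be illustrated with a model far simpler than the neural networks used in modern generative AI: a character-level bigram (Markov chain) model. It counts which characters tend to follow which in the training text, then samples new text with similar local statistics. This is a swapped-in toy technique chosen for brevity, and the function names are hypothetical.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Learn the training data's structure: count each character's followers."""
    counts = defaultdict(Counter)
    for a, b in zip(text, text[1:]):
        counts[a][b] += 1
    return counts


def sample(counts, start, length, seed=0):
    """Generate new text whose character transitions mimic the training data."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # no observed continuation for this character
        chars, weights = zip(*followers.items())
        # Pick the next character in proportion to how often it followed.
        out.append(rng.choices(chars, weights=weights)[0])
    return "".join(out)


model = train_bigram("abracadabra")
print(sample(model, "a", 12))
```

The generated string is new, but every adjacent pair of characters in it also occurs somewhere in the training text, which is the "similar characteristics" idea in miniature.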

The AI boom refers to an ongoing period of rapid and unprecedented development in the field of artificial intelligence. The generative AI race is a key component of this boom, which began in earnest in 2016 or 2017, shortly after OpenAI was founded in December 2015. OpenAI's generative AI systems, such as its various GPT models and DALL-E (2021), have played a significant role in driving this development.

Bard is a conversational generative artificial intelligence chatbot developed by Google, based initially on the LaMDA family of large language models (LLMs) and later the PaLM LLM. It was developed as a direct response to the rise of OpenAI's ChatGPT, and was released in a limited capacity in March 2023 to lukewarm responses, before expanding to other countries.