The fundamental thermodynamic relation and statistical mechanical principles can be derived from one another.

Derivation from statistical mechanical principles

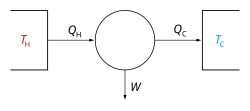

The above derivation uses the first and second laws of thermodynamics. The first law of thermodynamics is essentially a definition of heat, i.e. heat is the change in the internal energy of a system that is not caused by a change of the external parameters of the system.

However, the second law of thermodynamics is not a defining relation for the entropy. The fundamental definition of entropy of an isolated system containing an amount of energy $E$ is:

$$S = k_\text{B} \log\left[\Omega(E)\right]$$

where $\Omega(E)$ is the number of microstates in a small interval between $E$ and $E + \delta E$. Here $\delta E$ is a macroscopically small energy interval that is kept fixed. Strictly speaking this means that the entropy depends on the choice of $\delta E$. However, in the thermodynamic limit (i.e. in the limit of infinitely large system size), the specific entropy (entropy per unit volume or per unit mass) does not depend on $\delta E$. The entropy is thus a measure of the uncertainty about exactly which microstate the system is in, given that we know its energy to be in some interval of size $\delta E$.
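The claim that the specific entropy becomes insensitive to the width of the energy window can be checked numerically. The following is a minimal sketch, not part of the original derivation: it assumes an Einstein solid (independent oscillators sharing integer energy quanta) as a toy system, measures entropy in units of the Boltzmann constant, and uses illustrative function names throughout.

```python
import math

def log_multiplicity(n_osc: int, q: int) -> float:
    """log of the number of ways to distribute q quanta over n_osc oscillators."""
    return math.lgamma(q + n_osc) - math.lgamma(q + 1) - math.lgamma(n_osc)

def log_omega(n_osc: int, q: int, dq: int) -> float:
    """log Omega(E): microstates whose total number of quanta lies in [q, q + dq)."""
    logs = [log_multiplicity(n_osc, q + j) for j in range(dq)]
    top = max(logs)
    # log-sum-exp keeps the sum finite even though the multiplicities are huge
    return top + math.log(sum(math.exp(v - top) for v in logs))

def entropy_per_oscillator(n_osc: int, dq: int) -> float:
    """Specific entropy S / (N k_B) at a fixed energy of two quanta per oscillator."""
    q = 2 * n_osc  # assumed energy density, chosen only for illustration
    return log_omega(n_osc, q, dq) / n_osc

def window_sensitivity(n_osc: int) -> float:
    """Change in specific entropy when the window widens from 1 to 10 quanta."""
    return entropy_per_oscillator(n_osc, 10) - entropy_per_oscillator(n_osc, 1)
```

Calling `window_sensitivity` with increasing system sizes should show the difference shrinking roughly in proportion to the inverse system size, which is the behaviour described above for the thermodynamic limit.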

Deriving the fundamental thermodynamic relation from first principles thus amounts to proving that the above definition of entropy implies that for reversible processes we have:

$$dS = \frac{\delta Q}{T}$$

The relevant assumption from statistical mechanics is that all the $\Omega(E)$ states at a particular energy are equally likely. This allows us to extract all the thermodynamical quantities of interest. The temperature is defined as:

$$\frac{1}{k_\text{B} T} \equiv \beta \equiv \frac{d \log\left[\Omega(E)\right]}{dE}$$
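As an illustration of defining the inverse temperature as the energy derivative of log Ω, the sketch below differentiates log Ω numerically for an Einstein solid. The toy system is an assumption and does not come from the text; energy is measured in units of one quantum and the energy window is one quantum wide. The result is compared with the standard large-system expression log(1 + N/q) for that model.

```python
import math

def log_multiplicity(n_osc: int, q: int) -> float:
    """log Omega for n_osc oscillators sharing q quanta (window of one quantum)."""
    return math.lgamma(q + n_osc) - math.lgamma(q + 1) - math.lgamma(n_osc)

def beta_numeric(n_osc: int, q: int) -> float:
    """Central difference of log Omega with respect to the energy E = q."""
    return (log_multiplicity(n_osc, q + 1) - log_multiplicity(n_osc, q - 1)) / 2.0

def beta_large_n(n_osc: int, q: int) -> float:
    """Large-system (Stirling) result for the Einstein solid, in the same units."""
    return math.log(1.0 + n_osc / q)
```

For a thousand oscillators the two values should already agree closely, and `beta_numeric` should fall as the energy rises, i.e. the temperature increases with energy.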

This definition can be derived from the microcanonical ensemble, which describes a system with a constant number of particles and a constant volume that does not exchange energy with its environment. Suppose that the system has some external parameter, x, that can be changed. In general, the energy eigenstates of the system will depend on x. According to the adiabatic theorem of quantum mechanics, in the limit of an infinitely slow change of the system's Hamiltonian, the system will stay in the same energy eigenstate and thus change its energy according to the change in energy of the energy eigenstate it is in.

The generalized force, X, corresponding to the external parameter x is defined such that $X\,dx$ is the work performed by the system if x is increased by an amount dx. E.g., if x is the volume, then X is the pressure. The generalized force for a system known to be in energy eigenstate $E_r$ is given by:

$$X = -\frac{dE_r}{dx}$$

Since the system can be in any energy eigenstate within an interval of $\delta E$, we define the generalized force for the system as the expectation value of the above expression:

$$X = -\left\langle \frac{dE_r}{dx} \right\rangle$$

To evaluate the average, we partition the $\Omega(E)$ energy eigenstates by counting how many of them have a value for $\frac{dE_r}{dx}$ within a range between $Y$ and $Y + \delta Y$. Calling this number $\Omega_Y(E)$, we have:

$$\Omega(E) = \sum_Y \Omega_Y(E)$$

The average defining the generalized force can now be written:

$$X = -\frac{1}{\Omega(E)} \sum_Y Y\, \Omega_Y(E)$$
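The partition of the eigenstates by their slope can be made concrete with a small example. The sketch below is only an illustration under stated assumptions: it takes a single particle in a one-dimensional box of length x, with levels n²/x² in units chosen so that the prefactor is 1, and all names and numerical values are hypothetical. It computes the generalized force both as a direct average over the states in the energy window and from the binned counts, using the midpoint of each slope bin as its representative value.

```python
import math
from collections import Counter

def energy_level(n: int, x: float) -> float:
    """Energy of level n for box length x (units where the prefactor is 1)."""
    return n * n / x**2

def level_slope(n: int, x: float) -> float:
    """dE_n/dx for the level above."""
    return -2.0 * n * n / x**3

def states_in_window(x: float, energy: float, d_energy: float) -> list:
    """Quantum numbers with energy in [E, E + dE); their count is Omega(E)."""
    n_max = math.ceil(x * math.sqrt(energy + d_energy)) + 1
    return [n for n in range(1, n_max + 1)
            if energy <= energy_level(n, x) < energy + d_energy]

def partition_by_slope(x: float, states: list, d_y: float) -> Counter:
    """Omega_Y(E): number of states with slope in [Y, Y + dY), keyed by bin index."""
    return Counter(math.floor(level_slope(n, x) / d_y) for n in states)

def force_direct(x: float, states: list) -> float:
    """X as minus the plain average of dE_n/dx over the states in the window."""
    return -sum(level_slope(n, x) for n in states) / len(states)

def force_from_partition(omega_y: Counter, d_y: float) -> float:
    """X = -(1/Omega) * sum_Y Y * Omega_Y, with Y taken at each bin midpoint."""
    omega = sum(omega_y.values())
    return -sum((k + 0.5) * d_y * count for k, count in omega_y.items()) / omega

# Illustrative parameters only
X_BOX, ENERGY, D_ENERGY, D_Y = 1.0, 1.0e6, 1.0e5, 2.0e4
STATES = states_in_window(X_BOX, ENERGY, D_ENERGY)
OMEGA_Y = partition_by_slope(X_BOX, STATES, D_Y)
```

The binned counts sum to the total number of states, and the two force estimates differ by at most half a bin width, which is the discretization error introduced by grouping the slopes.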

We can relate this to the derivative of the entropy with respect to x at constant energy E as follows. Suppose we change x to x + dx. Then $\Omega(E)$ will change because the energy eigenstates depend on x, causing energy eigenstates to move into or out of the range between $E$ and $E + \delta E$. Let's focus again on the energy eigenstates for which $\frac{dE_r}{dx}$ lies within the range between $Y$ and $Y + \delta Y$. Since these energy eigenstates increase in energy by Y dx, all such energy eigenstates that are in the interval ranging from E − Y dx to E move from below E to above E. There are

$$N_Y(E) = \frac{\Omega_Y(E)}{\delta E}\, Y\, dx$$

such energy eigenstates. If $Y\,dx \leq \delta E$, all these energy eigenstates will move into the range between $E$ and $E + \delta E$ and contribute to an increase in $\Omega$. The number of energy eigenstates that move from below $E + \delta E$ to above $E + \delta E$ is, of course, given by $N_Y(E + \delta E)$. The difference

$$N_Y(E) - N_Y(E + \delta E)$$

is thus the net contribution to the increase in $\Omega$. Note that if Y dx is larger than $\delta E$ there will be energy eigenstates that move from below $E$ to above $E + \delta E$. They are counted in both $N_Y(E)$ and $N_Y(E + \delta E)$, therefore the above expression is also valid in that case.

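Writing the above difference as a derivative with respect to E and summing over Y yields:

$$\left(\frac{\partial \Omega}{\partial x}\right)_E = -\sum_Y Y \left(\frac{\partial \Omega_Y}{\partial E}\right)_x = \left(\frac{\partial (\Omega X)}{\partial E}\right)_x$$

The logarithmic derivative of $\Omega$ with respect to x is thus given by:

$$\left(\frac{\partial \log \Omega}{\partial x}\right)_E = \beta X + \left(\frac{\partial X}{\partial E}\right)_x$$

The first term is intensive, i.e. it does not scale with system size. In contrast, the last term scales as the inverse system size and thus vanishes in the thermodynamic limit. We have thus found that:

$$\left(\frac{\partial S}{\partial x}\right)_E = \frac{X}{T}$$

Combining this with

$$\left(\frac{\partial S}{\partial E}\right)_x = \frac{1}{T}$$

gives:

$$dS = \left(\frac{\partial S}{\partial E}\right)_x dE + \left(\frac{\partial S}{\partial x}\right)_E dx = \frac{dE}{T} + \frac{X}{T}\,dx$$

which we can write as:

$$dE = T\,dS - X\,dx$$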
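The result of this derivation, that the derivative of the entropy with respect to x at constant energy equals X/T, can be illustrated for a case where log Ω is known in closed form. The sketch below assumes a classical monatomic ideal gas, for which log Ω = N log V + (3N/2) log E up to an additive constant. This is a standard result but is not part of the text, and units are chosen with the Boltzmann constant equal to 1. With x taken as the volume, X is the pressure, and the sketch recovers it by numerical differentiation.

```python
import math

N_PARTICLES = 1000  # illustrative particle number

def log_omega(energy: float, volume: float) -> float:
    """Assumed ideal-gas form of log Omega(E, V), additive constant dropped."""
    return N_PARTICLES * math.log(volume) + 1.5 * N_PARTICLES * math.log(energy)

def central_diff(f, x: float, h: float) -> float:
    """Symmetric finite-difference estimate of df/dx."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def beta(energy: float, volume: float) -> float:
    """Inverse temperature: derivative of log Omega with respect to E at fixed V."""
    return central_diff(lambda e: log_omega(e, volume), energy, 1e-6 * energy)

def generalized_force(energy: float, volume: float) -> float:
    """X from beta * X = (d log Omega / dx)_E, neglecting the (dX/dE)_x term."""
    beta_x = central_diff(lambda v: log_omega(energy, v), volume, 1e-6 * volume)
    return beta_x / beta(energy, volume)
```

Under these assumptions the force returned for a given energy and volume should satisfy X V β = N, which is the ideal gas law, and X = 2E/(3V).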

Derivation of statistical mechanical principles from the fundamental thermodynamic relation

It has been shown that the fundamental thermodynamic relation together with the following three postulates [2]

- The probability density function is proportional to some function of the ensemble parameters and random variables.

- Thermodynamic state functions are described by ensemble averages of random variables.

- The entropy as defined by Gibbs entropy formula matches with the entropy as defined in classical thermodynamics.

is sufficient to build the theory of statistical mechanics without the equal a priori probability postulate.

For example, in order to derive the Boltzmann distribution, we assume the probability density of microstate i satisfies $\Pr(i) \propto f(E_i, T)$. The normalization factor (partition function) is therefore

$$Z = \sum_i f(E_i, T)$$

The entropy is therefore given by

$$S = -k_\text{B} \sum_i \frac{f(E_i, T)}{Z} \log\left(\frac{f(E_i, T)}{Z}\right)$$

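If we change the temperature T by dT while keeping the volume of the system constant, the change of entropy satisfies

$$dS = \left(\frac{\partial S}{\partial T}\right)_V dT$$

where

$$\left(\frac{\partial S}{\partial T}\right)_V = -k_\text{B} \sum_i \frac{Z \cdot \frac{\partial f(E_i, T)}{\partial T} - f(E_i, T) \cdot \frac{\partial Z}{\partial T}}{Z^2} \cdot \log f(E_i, T)$$

Considering that

$$\langle E \rangle = \sum_i \frac{f(E_i, T)}{Z} \cdot E_i$$

we have

$$d\langle E \rangle = \sum_i \frac{Z \cdot \frac{\partial f(E_i, T)}{\partial T} - f(E_i, T) \cdot \frac{\partial Z}{\partial T}}{Z^2} \cdot E_i \cdot dT$$

From the fundamental thermodynamic relation, we have

$$-\frac{dS}{k_\text{B}} + \frac{d\langle E \rangle}{k_\text{B} T} + \frac{P\,dV}{k_\text{B} T} = 0$$

Since we kept V constant when perturbing T, we have $dV = 0$. Combining the equations above, we have

$$\sum_i \frac{Z \cdot \frac{\partial f(E_i, T)}{\partial T} - f(E_i, T) \cdot \frac{\partial Z}{\partial T}}{Z^2} \cdot \left[\log f(E_i, T) + \frac{E_i}{k_\text{B} T}\right] \cdot dT = 0$$

Physics laws should be universal, i.e., the above equation must hold for arbitrary systems, and the only way for this to happen is

$$\log f(E_i, T) + \frac{E_i}{k_\text{B} T} = 0$$

That is

$$f(E_i, T) = \exp\left(-\frac{E_i}{k_\text{B} T}\right)$$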
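The consistency condition used in this argument, that at constant volume the entropy change equals the change in mean energy divided by T, can be tested numerically for any trial weight function. The sketch below is an illustration only: the energy levels and the non-Boltzmann `power_law` weight are hypothetical choices, and units are such that the Boltzmann constant equals 1. It evaluates the residual dS/dT − (1/T) d⟨E⟩/dT by finite differences.

```python
import math

LEVELS = [0.0, 1.0, 1.5, 4.0]  # hypothetical energy levels

def boltzmann(energy: float, temp: float) -> float:
    """The weight derived in the text: exp(-E / T) with k_B = 1."""
    return math.exp(-energy / temp)

def power_law(energy: float, temp: float) -> float:
    """A made-up alternative weight, used only as a counterexample."""
    return 1.0 / (1.0 + energy / temp) ** 2

def probabilities(temp: float, weight) -> list:
    """Normalized probabilities f(E_i, T) / Z."""
    weights = [weight(e, temp) for e in LEVELS]
    z = sum(weights)
    return [w / z for w in weights]

def entropy(temp: float, weight) -> float:
    """Gibbs entropy of the distribution, in units of k_B."""
    return -sum(p * math.log(p) for p in probabilities(temp, weight))

def mean_energy(temp: float, weight) -> float:
    """Ensemble average of the energy."""
    return sum(p * e for p, e in zip(probabilities(temp, weight), LEVELS))

def residual(temp: float, weight, h: float = 1e-5) -> float:
    """dS/dT - (1/T) d<E>/dT at constant volume, via central differences."""
    ds = (entropy(temp + h, weight) - entropy(temp - h, weight)) / (2.0 * h)
    de = (mean_energy(temp + h, weight) - mean_energy(temp - h, weight)) / (2.0 * h)
    return ds - de / temp
```

With the `boltzmann` weight the residual should vanish to within finite-difference error at any temperature, whereas the `power_law` weight leaves a clearly nonzero residual, which is consistent with the exponential form being singled out by the fundamental thermodynamic relation.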

It has been shown that the third postulate in the above formalism can be replaced by the following: [3]

- At infinite temperature, all the microstates have the same probability.

However, the mathematical derivation will be much more complicated.