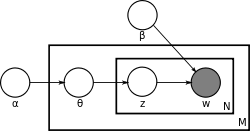

Learning the various distributions (the set of topics, their associated word probabilities, the topic of each word, and the particular topic mixture of each document) is a problem of statistical inference.

Alternative approaches

Alternative approaches include expectation propagation. [16]

Recent research has focused on speeding up the inference of latent Dirichlet allocation so that it can capture a massive number of topics in a large number of documents. The update equation of the collapsed Gibbs sampler mentioned in the earlier section has a natural sparsity that can be exploited. Intuitively, since each document contains only a subset of topics $K_d$, and a word also appears in only a subset of topics $K_w$, the above update equation could be rewritten to take advantage of this sparsity. [17]

$$p(Z_{d,n}=k) \propto \frac{\alpha\beta}{C_k^{\neg n}+V\beta} + \frac{C_k^{d}\beta}{C_k^{\neg n}+V\beta} + \frac{C_k^{w}\,(\alpha+C_k^{d})}{C_k^{\neg n}+V\beta}$$

Here $C_k^{d}$ is the number of tokens in document $d$ assigned to topic $k$, $C_k^{w}$ is the number of tokens of word $w$ assigned to topic $k$, $C_k^{\neg n}$ is the total number of tokens assigned to topic $k$ with the current token $n$ excluded, and $V$ is the vocabulary size.

In this equation, we have three terms, of which two are sparse and the other is small. We call these terms $a$, $b$ and $c$ respectively. Now, if we normalize each term by summing over all the topics, we get:

$$A=\sum_{k=1}^{K}\frac{\alpha\beta}{C_k^{\neg n}+V\beta},\qquad B=\sum_{k=1}^{K}\frac{C_k^{d}\beta}{C_k^{\neg n}+V\beta},\qquad C=\sum_{k=1}^{K}\frac{C_k^{w}\,(\alpha+C_k^{d})}{C_k^{\neg n}+V\beta}$$

Here, we can see that $B$ is a summation over the topics that appear in document $d$, and $C$ is also a sparse summation, over the topics that a word $w$ is assigned to across the whole corpus. $A$, on the other hand, is dense, but because of the small values of $\alpha$ and $\beta$, its value is very small compared to the two other terms.
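The relative sizes of the three bucket masses can be illustrated with a small numeric sketch. All counts, hyperparameter values and variable names below are made up for illustration; the block only assumes the three-term split of the quantity $(\alpha + C_k^d)(\beta + C_k^w)/(C_k + V\beta)$ with symmetric scalar $\alpha$ and $\beta$.

```python
# Toy counts for a single token; every name and number here is an assumption.
alpha, beta = 0.1, 0.01          # small symmetric Dirichlet hyperparameters
V, K = 1000, 8                   # vocabulary size and number of topics

doc_counts = {0: 5, 3: 2}        # topic -> tokens of the current document (sparse)
word_counts = {3: 40, 6: 7}      # topic -> tokens of the current word type (sparse)
topic_totals = [300, 250, 400, 500, 100, 50, 350, 200]  # tokens per topic

denom = [n_k + V * beta for n_k in topic_totals]

# Dense but tiny: one term per topic, each scaled by alpha * beta.
A = sum(alpha * beta / d for d in denom)
# Sparse: only topics that occur in the current document contribute.
B = sum(c * beta / denom[k] for k, c in doc_counts.items())
# Sparse: only topics that the current word type is assigned to contribute.
C = sum(c * (alpha + doc_counts.get(k, 0)) / denom[k]
        for k, c in word_counts.items())

# Unnormalized mass of the undecomposed update, summed over all K topics.
full = sum((alpha + doc_counts.get(k, 0)) * (beta + word_counts.get(k, 0)) / denom[k]
           for k in range(K))

print(f"A={A:.6f}  B={B:.6f}  C={C:.6f}  A+B+C={A + B + C:.6f}  full={full:.6f}")
```

With these toy numbers the dense bucket carries only a tiny share of the total mass, and the three buckets together account for the whole unnormalized distribution.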

Now, while sampling a topic, if we draw a random variable uniformly, $s \sim U(0, A+B+C)$, we can check which bucket our sample lands in. Since $A$ is small, we are very unlikely to fall into this bucket; however, if we do fall into this bucket, sampling a topic takes $O(K)$ time (the same as the original collapsed Gibbs sampler). If we fall into either of the other two buckets, we only need to check a subset of topics, provided we keep a record of the sparse topics. A topic can be sampled from the $B$ bucket in $O(K_d)$ time, and a topic can be sampled from the $C$ bucket in $O(K_w)$ time, where $K_d$ and $K_w$ denote the number of topics assigned to the current document and the current word type, respectively.

Notice that after sampling each topic, updating these buckets takes only basic $O(1)$ arithmetic operations.
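The bucket lookup can be sketched in Python as follows. This is a minimal illustration, not the implementation from the cited work: the function names, the dictionary-based sparse counts and the toy numbers are assumptions, and for brevity the bucket masses are recomputed on every call, whereas a practical sampler would cache them and apply the $O(1)$ updates described above.

```python
import random

def _walk(terms, s):
    """Return the key whose cumulative weight first exceeds s."""
    k = None
    for k, weight in terms:
        s -= weight
        if s < 0:
            break
    return k  # falls back to the last key if rounding leaves s >= 0

def sample_topic_sparse(doc_counts, word_counts, topic_totals,
                        alpha, beta, V, rng=random):
    """Draw one topic by first choosing a bucket, then a topic inside it.

    doc_counts / word_counts map topic -> count and hold nonzero entries only;
    topic_totals[k] is the token count of topic k (current token excluded).
    """
    denom = [n_k + V * beta for n_k in topic_totals]
    a_terms = [alpha * beta / d for d in denom]                       # dense
    b_terms = {k: c * beta / denom[k] for k, c in doc_counts.items()}  # K_d terms
    c_terms = {k: c * (alpha + doc_counts.get(k, 0)) / denom[k]
               for k, c in word_counts.items()}                       # K_w terms
    A, B, C = sum(a_terms), sum(b_terms.values()), sum(c_terms.values())

    s = rng.uniform(0.0, A + B + C)
    if s < C:                      # most likely bucket: topics of this word
        return _walk(c_terms.items(), s)
    s -= C
    if s < B:                      # topics of this document
        return _walk(b_terms.items(), s)
    return _walk(enumerate(a_terms), s - B)   # rare dense fallback, O(K)

# Hypothetical toy counts for one token.
doc_counts = {0: 5, 3: 2}
word_counts = {3: 40, 6: 7}
topic_totals = [300, 250, 400, 500, 100, 50, 350, 200]
rng = random.Random(0)
draws = [sample_topic_sparse(doc_counts, word_counts, topic_totals,
                             0.1, 0.01, 1000, rng) for _ in range(20000)]
```

The order in which the buckets are tested does not affect correctness; checking the largest bucket first just shortens the typical path.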

Aspects of computational details

Following is the derivation of the equations for collapsed Gibbs sampling, which means that the $\varphi$s and $\theta$s will be integrated out. For simplicity, in this derivation the documents are all assumed to have the same length $N$. The derivation is equally valid if the document lengths vary.

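According to the model, the total probability of the model is:

$$P(\boldsymbol{W},\boldsymbol{Z},\boldsymbol{\theta},\boldsymbol{\varphi};\alpha,\beta)=\prod_{i=1}^{K}P(\varphi_i;\beta)\prod_{j=1}^{M}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,P(W_{j,t}\mid\varphi_{Z_{j,t}}),$$

where the bold-font variables denote the vector version of the variables. First, $\boldsymbol{\varphi}$ and $\boldsymbol{\theta}$ need to be integrated out.

$$\begin{aligned}
P(\boldsymbol{Z},\boldsymbol{W};\alpha,\beta)
&=\int_{\boldsymbol{\theta}}\int_{\boldsymbol{\varphi}}P(\boldsymbol{W},\boldsymbol{Z},\boldsymbol{\theta},\boldsymbol{\varphi};\alpha,\beta)\,d\boldsymbol{\varphi}\,d\boldsymbol{\theta}\\
&=\int_{\boldsymbol{\varphi}}\prod_{i=1}^{K}P(\varphi_i;\beta)\prod_{j=1}^{M}\prod_{t=1}^{N}P(W_{j,t}\mid\varphi_{Z_{j,t}})\,d\boldsymbol{\varphi}\;\int_{\boldsymbol{\theta}}\prod_{j=1}^{M}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\boldsymbol{\theta}.
\end{aligned}$$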

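All the $\theta$s are independent of each other, and the same holds for all the $\varphi$s. So we can treat each $\theta$ and each $\varphi$ separately. We now focus only on the $\boldsymbol{\theta}$ part.

$$\int_{\boldsymbol{\theta}}\prod_{j=1}^{M}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\boldsymbol{\theta}=\prod_{j=1}^{M}\int_{\theta_j}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\theta_j.$$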

We can further focus on only one $\theta_j$, as follows:

$$\int_{\theta_j}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\theta_j.$$

This is the hidden part of the model for the $j$-th document. Now we replace the probabilities in the above equation by the true distribution expressions to write out the explicit equation.

$$\int_{\theta_j}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\theta_j=\int_{\theta_j}\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\prod_{i=1}^{K}\theta_{j,i}^{\alpha_i-1}\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\theta_j.$$

Let $n_{j,r}^{i}$ be the number of word tokens in the $j$-th document with the same word symbol (the $r$-th word in the vocabulary) assigned to the $i$-th topic. So $n_{j,r}^{i}$ is three-dimensional. If any of the three dimensions is not limited to a specific value, we use a parenthesized point $(\cdot)$ to denote summation over that dimension. For example, $n_{j,(\cdot)}^{i}$ denotes the number of word tokens in the $j$-th document assigned to the $i$-th topic. Thus, the rightmost part of the above equation can be rewritten as:

$$\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)=\prod_{i=1}^{K}\theta_{j,i}^{\,n_{j,(\cdot)}^{i}}.$$
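The regrouping of the per-token product into a per-topic product with counts as exponents can be checked numerically. The sketch below uses made-up topic proportions and assignments for a single document; the variable names are illustrative only.

```python
import math
from collections import Counter

theta_j = [0.5, 0.3, 0.2]      # assumed topic proportions of document j
z_j = [0, 2, 0, 1, 0, 2]       # assumed topic assignment of each token in j

# Product over tokens: one factor theta_j[z] per token.
per_token = math.prod(theta_j[z] for z in z_j)

# Product over topics: each theta_j[i] raised to the number of tokens
# of the document assigned to topic i.
counts = Counter(z_j)
per_topic = math.prod(theta_j[i] ** counts[i] for i in range(len(theta_j)))

print(per_token, per_topic)
```

Both products multiply the same factors, only grouped differently, so they agree up to floating-point rounding.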

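So the $\theta_j$ integration formula can be changed to:

$$\int_{\theta_j}\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\prod_{i=1}^{K}\theta_{j,i}^{\alpha_i-1}\prod_{i=1}^{K}\theta_{j,i}^{\,n_{j,(\cdot)}^{i}}\,d\theta_j=\int_{\theta_j}\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\prod_{i=1}^{K}\theta_{j,i}^{\,n_{j,(\cdot)}^{i}+\alpha_i-1}\,d\theta_j.$$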

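The expression inside the integral has the same form as the Dirichlet distribution. Since a Dirichlet density integrates to one,

$$\int_{\theta_j}\frac{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{j,(\cdot)}^{i}+\alpha_i\right)\right)}{\prod_{i=1}^{K}\Gamma\!\left(n_{j,(\cdot)}^{i}+\alpha_i\right)}\prod_{i=1}^{K}\theta_{j,i}^{\,n_{j,(\cdot)}^{i}+\alpha_i-1}\,d\theta_j=1.$$

Thus,

$$\begin{aligned}
\int_{\theta_j}P(\theta_j;\alpha)\prod_{t=1}^{N}P(Z_{j,t}\mid\theta_j)\,d\theta_j
&=\int_{\theta_j}\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\prod_{i=1}^{K}\theta_{j,i}^{\,n_{j,(\cdot)}^{i}+\alpha_i-1}\,d\theta_j\\
&=\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\,\frac{\prod_{i=1}^{K}\Gamma\!\left(n_{j,(\cdot)}^{i}+\alpha_i\right)}{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{j,(\cdot)}^{i}+\alpha_i\right)\right)}.
\end{aligned}$$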

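Now we turn our attention to the $\boldsymbol{\varphi}$ part. The derivation of the $\boldsymbol{\varphi}$ part is very similar to that of the $\boldsymbol{\theta}$ part, so here we only list the steps, where $V$ is the vocabulary size and $n_{(\cdot),r}^{i}$ is the number of tokens of the $r$-th word assigned to the $i$-th topic across all documents:

$$\begin{aligned}
\int_{\boldsymbol{\varphi}}\prod_{i=1}^{K}P(\varphi_i;\beta)\prod_{j=1}^{M}\prod_{t=1}^{N}P(W_{j,t}\mid\varphi_{Z_{j,t}})\,d\boldsymbol{\varphi}
&=\prod_{i=1}^{K}\int_{\varphi_i}P(\varphi_i;\beta)\prod_{(j,t):\,Z_{j,t}=i}P(W_{j,t}\mid\varphi_i)\,d\varphi_i\\
&=\prod_{i=1}^{K}\int_{\varphi_i}\frac{\Gamma\!\left(\sum_{r=1}^{V}\beta_r\right)}{\prod_{r=1}^{V}\Gamma(\beta_r)}\prod_{r=1}^{V}\varphi_{i,r}^{\beta_r-1}\prod_{r=1}^{V}\varphi_{i,r}^{\,n_{(\cdot),r}^{i}}\,d\varphi_i\\
&=\prod_{i=1}^{K}\int_{\varphi_i}\frac{\Gamma\!\left(\sum_{r=1}^{V}\beta_r\right)}{\prod_{r=1}^{V}\Gamma(\beta_r)}\prod_{r=1}^{V}\varphi_{i,r}^{\,n_{(\cdot),r}^{i}+\beta_r-1}\,d\varphi_i\\
&=\prod_{i=1}^{K}\frac{\Gamma\!\left(\sum_{r=1}^{V}\beta_r\right)}{\prod_{r=1}^{V}\Gamma(\beta_r)}\,\frac{\prod_{r=1}^{V}\Gamma\!\left(n_{(\cdot),r}^{i}+\beta_r\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i}+\beta_r\right)\right)}.
\end{aligned}$$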

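For clarity, here we write down the final equation with both $\boldsymbol{\varphi}$ and $\boldsymbol{\theta}$ integrated out:

$$P(\boldsymbol{Z},\boldsymbol{W};\alpha,\beta)=\prod_{j=1}^{M}\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\,\frac{\prod_{i=1}^{K}\Gamma\!\left(n_{j,(\cdot)}^{i}+\alpha_i\right)}{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{j,(\cdot)}^{i}+\alpha_i\right)\right)}\times\prod_{i=1}^{K}\frac{\Gamma\!\left(\sum_{r=1}^{V}\beta_r\right)}{\prod_{r=1}^{V}\Gamma(\beta_r)}\,\frac{\prod_{r=1}^{V}\Gamma\!\left(n_{(\cdot),r}^{i}+\beta_r\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i}+\beta_r\right)\right)}.$$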
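The collapsed joint probability is a product of one Dirichlet-multinomial term per document and one per topic, which makes it straightforward to evaluate in log space. The following is a small sketch under that reading; the function names and the tiny example corpus are illustrative assumptions.

```python
from math import exp, lgamma

def log_dirichlet_multinomial(counts, prior):
    """log[ Gamma(sum prior) / prod Gamma(prior)
            * prod Gamma(n + prior) / Gamma(sum(n + prior)) ]."""
    return (lgamma(sum(prior)) - sum(lgamma(p) for p in prior)
            + sum(lgamma(n + p) for n, p in zip(counts, prior))
            - lgamma(sum(counts) + sum(prior)))

def log_joint(doc_topic, topic_word, alpha, beta):
    """log P(Z, W; alpha, beta) from the two count tables.

    doc_topic[j][i]  = tokens of document j assigned to topic i
    topic_word[i][r] = tokens of word r assigned to topic i
    """
    doc_part = sum(log_dirichlet_multinomial(row, alpha) for row in doc_topic)
    topic_part = sum(log_dirichlet_multinomial(row, beta) for row in topic_word)
    return doc_part + topic_part

# One document with two tokens (words 0 and 1), both assigned to topic 0,
# with K = 2 topics, V = 2 words and uniform priors alpha = beta = (1, 1).
doc_topic = [[2, 0]]
topic_word = [[1, 1], [0, 0]]
value = log_joint(doc_topic, topic_word, alpha=[1.0, 1.0], beta=[1.0, 1.0])
print(exp(value))
```

For this example the document factor is $1/3$ and the factor of topic 0 is $1/6$ (the empty topic contributes 1), so the joint probability is $1/18$.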

The goal of Gibbs sampling here is to approximate the distribution of $P(\boldsymbol{Z}\mid\boldsymbol{W};\alpha,\beta)$. Since $P(\boldsymbol{W};\alpha,\beta)$ does not depend on $\boldsymbol{Z}$, the Gibbs sampling equations can be derived from $P(\boldsymbol{Z},\boldsymbol{W};\alpha,\beta)$ directly. The key point is to derive the following conditional probability:

$$P(Z_{(m,n)}\mid\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)=\frac{P(Z_{(m,n)},\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)}{P(\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)},$$

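where $Z_{(m,n)}$ denotes the $Z$ hidden variable of the $n$-th word token in the $m$-th document. We further assume that its word symbol is the $v$-th word in the vocabulary, i.e. $W_{(m,n)}=v$. $\boldsymbol{Z}_{-(m,n)}$ denotes all the $Z$s except $Z_{(m,n)}$. Note that Gibbs sampling only needs to sample a value for $Z_{(m,n)}$; according to the above probability, we do not need the exact value of $P(Z_{(m,n)}\mid\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)$ but only the ratios among the probabilities of the values that $Z_{(m,n)}$ can take. So, writing $k$ for a candidate topic, the above equation can be simplified as:

$$\begin{aligned}
P(Z_{(m,n)}=k\mid\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)
&\propto P(Z_{(m,n)}=k,\boldsymbol{Z}_{-(m,n)},\boldsymbol{W};\alpha,\beta)\\
&=\left(\frac{\Gamma\!\left(\sum_{i=1}^{K}\alpha_i\right)}{\prod_{i=1}^{K}\Gamma(\alpha_i)}\right)^{M}\prod_{j\neq m}\frac{\prod_{i=1}^{K}\Gamma\!\left(n_{j,(\cdot)}^{i}+\alpha_i\right)}{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{j,(\cdot)}^{i}+\alpha_i\right)\right)}
\left(\frac{\Gamma\!\left(\sum_{r=1}^{V}\beta_r\right)}{\prod_{r=1}^{V}\Gamma(\beta_r)}\right)^{K}\prod_{i=1}^{K}\prod_{r\neq v}\Gamma\!\left(n_{(\cdot),r}^{i}+\beta_r\right)\\
&\qquad\times\frac{\prod_{i=1}^{K}\Gamma\!\left(n_{m,(\cdot)}^{i}+\alpha_i\right)}{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{m,(\cdot)}^{i}+\alpha_i\right)\right)}\prod_{i=1}^{K}\frac{\Gamma\!\left(n_{(\cdot),v}^{i}+\beta_v\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i}+\beta_r\right)\right)}\\
&\propto\frac{\prod_{i=1}^{K}\Gamma\!\left(n_{m,(\cdot)}^{i}+\alpha_i\right)}{\Gamma\!\left(\sum_{i=1}^{K}\left(n_{m,(\cdot)}^{i}+\alpha_i\right)\right)}\prod_{i=1}^{K}\frac{\Gamma\!\left(n_{(\cdot),v}^{i}+\beta_v\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i}+\beta_r\right)\right)}\\
&\propto\prod_{i=1}^{K}\Gamma\!\left(n_{m,(\cdot)}^{i}+\alpha_i\right)\prod_{i=1}^{K}\frac{\Gamma\!\left(n_{(\cdot),v}^{i}+\beta_v\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i}+\beta_r\right)\right)}.
\end{aligned}$$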

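Finally, let $n_{j,r}^{i,-(m,n)}$ have the same meaning as $n_{j,r}^{i}$ but with $Z_{(m,n)}$ excluded. The above equation can be further simplified using the property $\Gamma(x+1)=x\,\Gamma(x)$ of the gamma function. We first split the product and then merge it back to obtain a $k$-independent product, which can be dropped:

$$\begin{aligned}
&\propto\prod_{i\neq k}\Gamma\!\left(n_{m,(\cdot)}^{i,-(m,n)}+\alpha_i\right)\prod_{i\neq k}\frac{\Gamma\!\left(n_{(\cdot),v}^{i,-(m,n)}+\beta_v\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i,-(m,n)}+\beta_r\right)\right)}\;\Gamma\!\left(n_{m,(\cdot)}^{k,-(m,n)}+\alpha_k+1\right)\frac{\Gamma\!\left(n_{(\cdot),v}^{k,-(m,n)}+\beta_v+1\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{k,-(m,n)}+\beta_r\right)+1\right)}\\
&=\prod_{i=1}^{K}\Gamma\!\left(n_{m,(\cdot)}^{i,-(m,n)}+\alpha_i\right)\prod_{i=1}^{K}\frac{\Gamma\!\left(n_{(\cdot),v}^{i,-(m,n)}+\beta_v\right)}{\Gamma\!\left(\sum_{r=1}^{V}\left(n_{(\cdot),r}^{i,-(m,n)}+\beta_r\right)\right)}\;\left(n_{m,(\cdot)}^{k,-(m,n)}+\alpha_k\right)\frac{n_{(\cdot),v}^{k,-(m,n)}+\beta_v}{\sum_{r=1}^{V}\left(n_{(\cdot),r}^{k,-(m,n)}+\beta_r\right)}\\
&\propto\left(n_{m,(\cdot)}^{k,-(m,n)}+\alpha_k\right)\frac{n_{(\cdot),v}^{k,-(m,n)}+\beta_v}{\sum_{r=1}^{V}\left(n_{(\cdot),r}^{k,-(m,n)}+\beta_r\right)}.
\end{aligned}$$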
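The simplification can be sanity-checked numerically: the conditional obtained by evaluating the full collapsed joint for every candidate topic should match the short count-based form $(n_{m,(\cdot)}^{k,-(m,n)}+\alpha_k)\,(n_{(\cdot),v}^{k,-(m,n)}+\beta_v)\,/\,\sum_r (n_{(\cdot),r}^{k,-(m,n)}+\beta_r)$ after normalization. The sketch below does this on a made-up corpus; all names, the corpus and the prior values are assumptions for illustration.

```python
from math import exp, lgamma

def log_dm(counts, prior):
    # Log Dirichlet-multinomial term for one count vector.
    return (lgamma(sum(prior)) - sum(lgamma(p) for p in prior)
            + sum(lgamma(n + p) for n, p in zip(counts, prior))
            - lgamma(sum(counts) + sum(prior)))

def log_joint(docs, z, K, V, alpha, beta):
    # log P(Z, W) with theta and phi integrated out.
    topic_word = [[0] * V for _ in range(K)]
    total = 0.0
    for words, topics in zip(docs, z):
        doc_topic = [0] * K
        for w, k in zip(words, topics):
            doc_topic[k] += 1
            topic_word[k][w] += 1
        total += log_dm(doc_topic, alpha)
    return total + sum(log_dm(row, beta) for row in topic_word)

def conditional_from_joint(docs, z, m, n, K, V, alpha, beta):
    # Evaluate the joint once per candidate topic, then normalize.
    logs = []
    for k in range(K):
        trial = [list(t) for t in z]
        trial[m][n] = k
        logs.append(log_joint(docs, trial, K, V, alpha, beta))
    top = max(logs)
    weights = [exp(v - top) for v in logs]
    return [w / sum(weights) for w in weights]

def conditional_closed_form(docs, z, m, n, K, V, alpha, beta):
    # Count-based form; every count excludes the token (m, n) itself.
    v = docs[m][n]
    weights = []
    for k in range(K):
        others = [(j, t) for j, ts in enumerate(z) for t, zz in enumerate(ts)
                  if zz == k and (j, t) != (m, n)]
        n_mk = sum(1 for j, _ in others if j == m)
        n_kv = sum(1 for j, t in others if docs[j][t] == v)
        n_k = len(others)
        weights.append((n_mk + alpha[k]) * (n_kv + beta[v]) / (n_k + sum(beta)))
    return [w / sum(weights) for w in weights]

# Hypothetical corpus: word ids per document and a current topic assignment.
docs = [[0, 1, 2, 1], [2, 2, 0], [1, 0, 0, 2, 1]]
z = [[0, 1, 1, 0], [1, 1, 0], [0, 0, 1, 1, 0]]
K, V = 2, 3
alpha, beta = [0.5, 0.3], [0.1, 0.2, 0.3]

from_joint = conditional_from_joint(docs, z, 0, 1, K, V, alpha, beta)
closed = conditional_closed_form(docs, z, 0, 1, K, V, alpha, beta)
print(from_joint, closed)
```

The two lists agree up to floating-point error, and the same holds for any other token position chosen in this toy corpus.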

Note that the same formula is derived in the article on the Dirichlet-multinomial distribution, as part of a more general discussion of integrating Dirichlet distribution priors out of a Bayesian network.
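Putting the final update rule to work, a collapsed Gibbs sampler only has to maintain three count tables and resample one token at a time. The following is a minimal sketch, not a reference implementation: it assumes symmetric scalar hyperparameters `alpha` and `beta`, and the function name, arguments and toy corpus are all hypothetical.

```python
import random

def collapsed_gibbs(docs, K, V, alpha, beta, sweeps, seed=0):
    """Run `sweeps` passes of collapsed Gibbs sampling over `docs`.

    docs is a list of documents, each a list of word ids in range(V).
    Returns the topic assignments and the document-topic and topic-word counts.
    """
    rng = random.Random(seed)
    z = [[rng.randrange(K) for _ in words] for words in docs]  # random start
    n_dk = [[0] * K for _ in docs]        # tokens of document d in topic k
    n_kw = [[0] * V for _ in range(K)]    # tokens of word w in topic k
    n_k = [0] * K                         # tokens in topic k
    for d, words in enumerate(docs):
        for t, w in enumerate(words):
            k = z[d][t]
            n_dk[d][k] += 1
            n_kw[k][w] += 1
            n_k[k] += 1

    for _ in range(sweeps):
        for d, words in enumerate(docs):
            for t, w in enumerate(words):
                # Remove the current token from all counts.
                k = z[d][t]
                n_dk[d][k] -= 1
                n_kw[k][w] -= 1
                n_k[k] -= 1
                # Unnormalized conditional for each candidate topic.
                weights = [(n_dk[d][i] + alpha) * (n_kw[i][w] + beta)
                           / (n_k[i] + V * beta) for i in range(K)]
                k = rng.choices(range(K), weights=weights)[0]
                # Add the token back under its newly sampled topic.
                z[d][t] = k
                n_dk[d][k] += 1
                n_kw[k][w] += 1
                n_k[k] += 1
    return z, n_dk, n_kw

# Hypothetical toy corpus with V = 4 word ids.
docs = [[0, 0, 1, 1, 0], [2, 3, 3, 2], [0, 1, 0, 3, 2, 2]]
z, n_dk, n_kw = collapsed_gibbs(docs, K=2, V=4, alpha=0.5, beta=0.1, sweeps=50)
```

Each token update costs $O(K)$ because the weight of every topic is computed; this is the dense baseline that sparsity-aware samplers aim to improve on.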