In the calculus of variations, a field of mathematical analysis, the functional derivative relates a change in a functional to a change in a function on which the functional depends.

In computational learning theory, probably approximately correct (PAC) learning is a framework for mathematical analysis of machine learning. It was proposed in 1984 by Leslie Valiant.

In probability theory, a Chernoff bound is an exponentially decreasing upper bound on the tail of a random variable based on its moment generating function. The minimum of all such exponential bounds forms the Chernoff or Chernoff-Cramér bound, which may decay faster than exponential. It is especially useful for sums of independent random variables, such as sums of Bernoulli random variables.

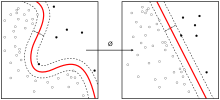

For supervised learning applications in machine learning and statistical learning theory, generalization error is a measure of how accurately an algorithm is able to predict outcome values for previously unseen data. Because learning algorithms are evaluated on finite samples, the evaluation of a learning algorithm may be sensitive to sampling error. As a result, measurements of prediction error on the current data may not provide much information about predictive ability on new data. Generalization error can be minimized by avoiding overfitting in the learning algorithm. The performance of a machine learning algorithm is visualized by plots that show values of estimates of the generalization error through the learning process, which are called learning curves.

In general relativity, the Gibbons–Hawking–York boundary term is a term that needs to be added to the Einstein–Hilbert action when the underlying spacetime manifold has a boundary.

In mathematics, in particular in algebraic geometry and differential geometry, Dolbeault cohomology (named after Pierre Dolbeault) is an analog of de Rham cohomology for complex manifolds. Let M be a complex manifold. Then the Dolbeault cohomology groups depend on a pair of integers p and q and are realized as a subquotient of the space of complex differential forms of degree (p,q).

In computer science, particularly the study of approximation algorithms, an L-reduction is a transformation of optimization problems which linearly preserves approximability features; it is one type of approximation-preserving reduction. L-reductions in studies of approximability of optimization problems play a similar role to that of polynomial reductions in the studies of computational complexity of decision problems.

In information theory, information dimension is an information measure for random vectors in Euclidean space, based on the normalized entropy of finely quantized versions of the random vectors. This concept was first introduced by Alfréd Rényi in 1959.

In coding theory, list decoding is an alternative to unique decoding of error-correcting codes for large error rates. The notion was proposed by Elias in the 1950s. The main idea behind list decoding is that the decoding algorithm instead of outputting a single possible message outputs a list of possibilities one of which is correct. This allows for handling a greater number of errors than that allowed by unique decoding.

In mathematics, Montgomery's pair correlation conjecture is a conjecture made by Hugh Montgomery (1973) that the pair correlation between pairs of zeros of the Riemann zeta function is

The exponential mechanism is a technique for designing differentially private algorithms. It was developed by Frank McSherry and Kunal Talwar in 2007. Their work was recognized as a co-winner of the 2009 PET Award for Outstanding Research in Privacy Enhancing Technologies.

Generalized relative entropy is a measure of dissimilarity between two quantum states. It is a "one-shot" analogue of quantum relative entropy and shares many properties of the latter quantity.

The sample complexity of a machine learning algorithm represents the number of training-samples that it needs in order to successfully learn a target function.

The distributional learning theory or learning of probability distribution is a framework in computational learning theory. It has been proposed from Michael Kearns, Yishay Mansour, Dana Ron, Ronitt Rubinfeld, Robert Schapire and Linda Sellie in 1994 and it was inspired from the PAC-framework introduced by Leslie Valiant.

In PAC learning, error tolerance refers to the ability of an algorithm to learn when the examples received have been corrupted in some way. In fact, this is a very common and important issue since in many applications it is not possible to access noise-free data. Noise can interfere with the learning process at different levels: the algorithm may receive data that have been occasionally mislabeled, or the inputs may have some false information, or the classification of the examples may have been maliciously adulterated.

The multiplicative weights update method is an algorithmic technique most commonly used for decision making and prediction, and also widely deployed in game theory and algorithm design. The simplest use case is the problem of prediction from expert advice, in which a decision maker needs to iteratively decide on an expert whose advice to follow. The method assigns initial weights to the experts, and updates these weights multiplicatively and iteratively according to the feedback of how well an expert performed: reducing it in case of poor performance, and increasing it otherwise. It was discovered repeatedly in very diverse fields such as machine learning, optimization, theoretical computer science, and game theory.

In theoretical physics, the dual graviton is a hypothetical elementary particle that is a dual of the graviton under electric-magnetic duality, as an S-duality, predicted by some formulations of supergravity in eleven dimensions.

Adding controlled noise from predetermined distributions is a way of designing differentially private mechanisms. This technique is useful for designing private mechanisms for real-valued functions on sensitive data. Some commonly used distributions for adding noise include Laplace and Gaussian distributions.

The method of (hypergraph) containers is a powerful tool that can help characterize the typical structure and/or answer extremal questions about families of discrete objects with a prescribed set of local constraints. Such questions arise naturally in extremal graph theory, additive combinatorics, discrete geometry, coding theory, and Ramsey theory; they include some of the most classical problems in the associated fields.

The Karmarkar–Karp (KK) bin packing algorithms are several related approximation algorithm for the bin packing problem. The bin packing problem is a problem of packing items of different sizes into bins of identical capacity, such that the total number of bins is as small as possible. Finding the optimal solution is computationally hard. Karmarkar and Karp devised an algorithm that runs in polynomial time and finds a solution with at most bins, where OPT is the number of bins in the optimal solution. They also devised several other algorithms with slightly different approximation guarantees and run-time bounds.